What happens when you push to GitHub? The answer, "My repository gets my changes" or maybe, "The refs on my remote get updated" is pretty much right, and that is a really important thing that happens, but there's a whole lot more that goes on after that. To name a few examples:

- Pull requests are synchronized, meaning the diff and commits in your pull request reflect your newly pushed changes.
- Push webhooks are dispatched.
- Workflows are triggered.
- If you push an app configuration file (like for Dependabot or GitHub Actions), the app is automatically installed on your repository.
- GitHub Pages are published.
- Codespaces configuration is updated.
- And much, much more.

Those are some pretty important things, and this is just a sample of what goes on for every push. In fact, in the GitHub monolith, there are over 60 different pieces of logic owned by 20 different services that run in direct response to a push. That's actually really cool: we should be doing a bunch of interesting things when code gets pushed to GitHub. In some sense, that's a big part of what GitHub is: the place you push code[1] and then cool stuff happens.

The problem

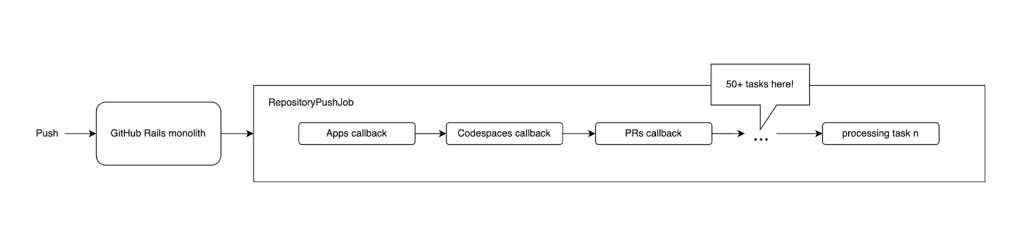

What's not so cool is that, up until recently, all of these things were the responsibility of a single, enormous background job. Whenever GitHub's Ruby on Rails monolith was notified of a push, it enqueued a massive job called the RepositoryPushJob. This job was the home for all push processing logic, and its size and complexity led to many problems. The job triggered one thing after another in a long, sequential series of steps, kind of like this:

There are a few things wrong with this picture. Let's highlight some of them:

This job was huge, and hard to retry. The size of the RepositoryPushJob made it very difficult for different push processing tasks to be retried correctly. On a retry, all the logic of the job is repeated from the beginning, which is not always appropriate for individual tasks. For example:

Writing Push records to the database can be retried liberally on errors and reattempted any amount of time after the push, and will gracefully handle duplicate data.

Sending push webhooks, on the other hand, is much more time-sensitive and should not be reattempted too long after the push has occurred. It is also not desirable to dispatch multiples of the same webhook.

Most of these steps were never retried at all. The above difficulties with conflicting retry concerns ultimately led to retries of RepositoryPushJob being avoided in most cases. To prevent one step from killing the entire job, however, much of the push handling logic was wrapped in code catching any and all errors. This lack of retries led to issues where crucial pieces of push processing never occurred.

Tight coupling of many concerns created a huge blast radius for problems. While most of the dozens of tasks in this job rescued all errors, for historical reasons, a few pieces of work in the beginning of the job did not. This meant that all of the later steps had an implicit dependency on the initial parts of the job. As more concerns are combined within the same job, the likelihood of errors impacting the entire job increases.

For example, writing data to our Pushes MySQL cluster occurred in the beginning of the RepositoryPushJob. This meant that everything occurring after that had an implicit dependency on this cluster. This structure led to incidents where errors from this database cluster meant that user pull requests were not synchronized, even though pull requests have no explicit need to connect to this cluster.

A super long sequential process is bad for latency. It's fine for the first few steps, but what about the things that happen last? They have to wait for every other piece of logic to run before they get a chance. In some cases, this structure led to a second or more of unnecessary latency for user-facing push tasks, including pull request synchronization.
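To make the blast-radius problem concrete, here is a minimal Python sketch of a job shaped like the one described above. It is an illustration only, not the actual RepositoryPushJob (which lives in a Ruby on Rails monolith); the step names, the `rescue_errors` flag, and the simulated outage are all assumptions.

```python
from typing import Callable, Dict, List, Tuple

# Each step is (name, function, rescue_errors). All names are hypothetical.
Step = Tuple[str, Callable[[], None], bool]


def run_monolithic_push_job(steps: List[Step]) -> Dict[str, str]:
    """Run every step in order inside one job, like the old design."""
    outcome = {name: "not run" for name, _, _ in steps}
    for name, fn, rescue_errors in steps:
        try:
            fn()
            outcome[name] = "ok"
        except Exception:
            outcome[name] = "failed"
            if not rescue_errors:
                # An unguarded early step aborts the whole job, so every
                # later step implicitly depends on it.
                break
            # A guarded step swallows its error and is never retried.
    return outcome


def write_push_record() -> None:
    # Simulated outage of the database used by the first step.
    raise ConnectionError("Pushes MySQL cluster unavailable")


def sync_pull_requests() -> None:
    pass  # would succeed, but never gets the chance to run


def dispatch_webhooks() -> None:
    pass


result = run_monolithic_push_job([
    ("write_push_record", write_push_record, False),
    ("sync_pull_requests", sync_pull_requests, True),
    ("dispatch_webhooks", dispatch_webhooks, True),
])
print(result)
```

In this sketch, pull request synchronization never runs even though it has no need for the database that failed, which is the implicit dependency described above.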

What did we do about this?

At a high level, we took this very long sequential process and decoupled it into many isolated, parallel processes. We used the following approach:

- We added a new Kafka topic that we publish an event to for each push.
- We examined each of the many push processing tasks and grouped them by owning service and/or logical relationships (for example, order dependency, retry-ability).
- For each coherent group of tasks, we placed them into a new background job with a clear owner and appropriate retry configuration.
- Finally, we configured these jobs to be enqueued for each publish of the new Kafka event.

To do this, we used an internal system at GitHub that facilitates enqueueing background jobs in response to Kafka events via independent consumers.
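The shape of this fan-out can be sketched in a few lines of Python. This is a toy, in-memory stand-in under stated assumptions: `InMemoryTopic`, `PushEvent`, and the job names are hypothetical, and the real system uses Kafka and an internal GitHub tool whose API is not described here.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class PushEvent:
    repository_id: int
    ref: str
    after_sha: str


Handler = Callable[[PushEvent], None]


class InMemoryTopic:
    """Toy stand-in for a Kafka topic with one independent consumer per job."""

    def __init__(self) -> None:
        self._consumers: Dict[str, Handler] = {}

    def subscribe(self, consumer_name: str, handler: Handler) -> None:
        self._consumers[consumer_name] = handler

    def publish(self, event: PushEvent) -> Dict[str, str]:
        results: Dict[str, str] = {}
        for name, handler in self._consumers.items():
            # Each consumer is isolated: one failing does not stop the others.
            try:
                handler(event)
                results[name] = "enqueued"
            except Exception as exc:
                results[name] = f"failed: {exc}"
        return results


# One queue per background job (job names below are made up).
job_queues: Dict[str, List[PushEvent]] = defaultdict(list)


def enqueue(job_name: str) -> Handler:
    def handler(event: PushEvent) -> None:
        job_queues[job_name].append(event)
    return handler


def broken_consumer(event: PushEvent) -> None:
    raise RuntimeError("simulated consumer failure")


topic = InMemoryTopic()
topic.subscribe("pull_request_sync", enqueue("PullRequestSyncJob"))
topic.subscribe("push_webhooks", enqueue("PushWebhookJob"))
topic.subscribe("pages_build", broken_consumer)

results = topic.publish(PushEvent(1, "refs/heads/main", "abc123"))
print(results)
```

Even with one consumer failing, the other two jobs are still enqueued, which is the isolation property the new design is after.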

We had to make investments in several areas to support this architecture, including:

- Creating a reliable publisher for our Kafka event, one that would retry until broker acknowledgement.
- Setting up a dedicated pool of job workers to handle the new job queues we'd need for this level of fan out.
- Improving observability to ensure we could carefully monitor the flow of push events throughout this pipeline and detect any bottlenecks or problems.
- Devising a system for consistent per-event feature flagging, to ensure that we could gradually roll out (and roll back if needed) the new system without risk of data loss or double processing of events between the old and new pipelines.
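One common way to get a consistent per-event decision is to derive it deterministically from the event itself. The sketch below shows that general technique; it is an assumption for illustration, not a description of GitHub's actual flagging system, and the function names and event ID format are hypothetical.

```python
import hashlib


def rollout_bucket(event_id: str, buckets: int = 100) -> int:
    """Map an event ID to a stable bucket in [0, buckets)."""
    digest = hashlib.sha256(event_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % buckets


def new_pipeline_should_process(event_id: str, rollout_percent: int) -> bool:
    # The same event always lands in the same bucket, so raising the
    # percentage only ever adds events to the new pipeline.
    return rollout_bucket(event_id) < rollout_percent


def old_pipeline_should_process(event_id: str, rollout_percent: int) -> bool:
    # The old pipeline takes exactly the events the new one does not.
    return not new_pipeline_should_process(event_id, rollout_percent)


for event_id in ("push-1001", "push-1002", "push-1003"):
    owner = "new" if new_pipeline_should_process(event_id, 25) else "old"
    print(event_id, "->", owner)
```

Because both pipelines evaluate the same pure function, each event has exactly one owner as long as both see the same rollout percentage. If the percentage can change between the two checks, a safer variant is to make the decision once and carry it along with the event.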

Now, things look like this:

A push triggers a Kafka event, which is fanned out via independent consumers to many isolated jobs that can process the event without worrying about any other consumers.

Results

A smaller blast radius for problems.

This can be clearly seen from the diagram. Previously, an issue with a single step in the very long push handling process could impact everything downstream. Now, issues with one piece of push handling logic don't have the ability to take down much else.

Structurally, this decreases the risk of dependencies. For example, there are around 300 million push processing operations executed per day in the new pipeline that previously implicitly depended on the Pushes MySQL cluster and now have no such dependency, simply as a product of being moved into isolated processes.

Decoupling also means better ownership. In splitting up these jobs, we distributed ownership of the push processing code from one owning team to 15+ more appropriate service owners. New push functionality in our monolith can be added and iterated on by the owning team without unintentional impact to other teams.

Pushes are processed with lower latency.

By running these jobs in parallel, no push processing task has to wait for others to complete. This means better latency for just about everything that happens on push.
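A small worked example shows why the last task benefits most. The durations below are invented for illustration and are not measurements from GitHub; the parallel case also ignores queueing and scheduling overhead.

```python
from itertools import accumulate

# Hypothetical per-task durations in milliseconds, in the order a
# sequential job would have run them.
task_durations_ms = {
    "write_push_record": 40,
    "dispatch_webhooks": 120,
    "trigger_workflows": 300,
    "sync_pull_requests": 80,
}

# Sequential: a task finishes only after every earlier task plus itself.
sequential_finish_ms = dict(
    zip(task_durations_ms, accumulate(task_durations_ms.values()))
)

# Parallel: every task starts right away, so it finishes after its own duration.
parallel_finish_ms = dict(task_durations_ms)

for name in task_durations_ms:
    print(f"{name}: sequential {sequential_finish_ms[name]} ms, "
          f"parallel {parallel_finish_ms[name]} ms")
```

With these made-up numbers, pull request sync completes at 540 ms in the sequential layout but at 80 ms when it runs on its own.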

For example, we can see a notable decrease in pull request sync time:

Improved observability.

By breaking things up into smaller jobs, we get a much clearer picture of what's going on with each job. This lets us set up observability and monitoring that is much more finely scoped than anything we had before, and helps us to quickly pinpoint any problems with pushes.

Pushes are more reliably processed.

By reducing the size and complexity of the jobs that process pushes, we are able to retry more things than in the previous system. Each job can have retry configuration that's appropriate for its own small set of concerns, without having to worry about re-executing other, unrelated logic on retry.
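As a rough illustration of per-job retry configuration, the sketch below contrasts a liberal policy (suitable for something like writing Push records) with a time-boxed one (suitable for something like webhooks). The `RetryPolicy` class and every number in it are assumptions, not GitHub's actual settings.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RetryPolicy:
    max_attempts: int
    max_age_seconds: Optional[float]  # None means "no deadline"

    def should_retry(self, attempts_so_far: int, seconds_since_push: float) -> bool:
        if attempts_so_far >= self.max_attempts:
            return False
        if self.max_age_seconds is not None and seconds_since_push > self.max_age_seconds:
            # Too stale to be useful, e.g. a webhook long after the push.
            return False
        return True


# Hypothetical values: record writes can be retried liberally and late.
PUSH_RECORD_POLICY = RetryPolicy(max_attempts=25, max_age_seconds=None)
# Hypothetical values: webhooks are time-sensitive, so retries are time-boxed.
WEBHOOK_POLICY = RetryPolicy(max_attempts=3, max_age_seconds=60)

print(PUSH_RECORD_POLICY.should_retry(attempts_so_far=5, seconds_since_push=3600))
print(WEBHOOK_POLICY.should_retry(attempts_so_far=1, seconds_since_push=3600))
```

A policy like this only decides whether to retry. Avoiding duplicate webhook deliveries would need separate handling that is not shown here.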

If we define a "fully processed" push as a push event for which all the desired operations are completed with no failures, the old RepositoryPushJob system fully processed about 99.897% of pushes.

In the worst-case estimate, the new pipeline fully processes 99.999% of pushes.
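To put those two percentages side by side, the arithmetic below converts them into failure rates. It uses only the two figures quoted above; exact fractions are used to avoid floating-point rounding.

```python
from fractions import Fraction

old_success_pct = Fraction("99.897")  # old RepositoryPushJob system
new_success_pct = Fraction("99.999")  # new pipeline, worst-case estimate

old_failure_pct = 100 - old_success_pct  # 0.103% of pushes not fully processed
new_failure_pct = 100 - new_success_pct  # 0.001% of pushes not fully processed

# Pushes per million that were not fully processed.
old_failures_per_million = old_failure_pct / 100 * 1_000_000
new_failures_per_million = new_failure_pct / 100 * 1_000_000

# How many times lower the new failure rate is.
reduction_factor = old_failure_pct / new_failure_pct

print(f"old: {float(old_failures_per_million):.0f} per million")
print(f"new: {float(new_failures_per_million):.0f} per million")
print(f"reduction: about {float(reduction_factor):.0f}x")
```

In other words, roughly 1,030 pushes per million were not fully processed before, compared with about 10 per million in the worst case now, which is around a 103-fold reduction.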

Conclusion

Pushing code to GitHub is one of the most fundamental interactions that developers have with GitHub every day. It's important that our system handles everyone's pushes reliably and efficiently, and over the past several months we have significantly improved the ability of our monolith to correctly and fully process pushes from our users. Through platform-level investments like this one, we strive to make GitHub the home for all developers (and their many pushes!) far into the future.

Notes

[1] People push to GitHub a whole lot, as you can imagine. In the last 30 days, we've received around 500 million pushes from 8.5 million users.

The post How we improved push processing on GitHub appeared first on The GitHub Blog.
