Researchers are reimagining how models operate as demand skyrockets for faster, smarter, and more private AI on phones, tablets, and laptops. The next generation of AI isn’t just lighter and faster; it’s local. By embedding intelligence directly into devices, developers are unlocking near-instant responsiveness, slashing memory demands, and putting privacy back into users’ hands. With mobile hardware rapidly advancing, the race is on to build compact, lightning-fast models that are intelligent enough to redefine everyday digital experiences.

A major challenge is delivering high-quality, multimodal intelligence within the constrained environments of mobile devices. Unlike cloud-based systems with access to extensive computational power, on-device models must operate under strict RAM and processing limits. Multimodal AI, which interprets text, images, audio, and video, typically requires large models that most mobile devices cannot run efficiently. Cloud dependency also introduces latency and privacy concerns, making it essential to design models that can run locally without sacrificing performance.

Earlier models like Gemma 3 and Gemma 3 QAT attempted to bridge this gap by reducing size while maintaining performance. Designed for cloud or desktop GPUs, they significantly improved model efficiency. However, they still required robust hardware and could not fully overcome the memory and responsiveness constraints of mobile platforms. Although they supported advanced functions, the compromises involved often limited their usefulness for real-time applications on smartphones.

Researchers from Google and Google DeepMind introduced Gemma 3n. Its architecture has been optimized for mobile-first deployment, targeting performance across Android and Chrome platforms, and it also forms the basis for the next version of Gemini Nano. Gemma 3n supports multimodal AI functionality with a much lower memory footprint while maintaining real-time response capabilities. It is the first open model built on this shared infrastructure and is available to developers in preview, allowing immediate experimentation.

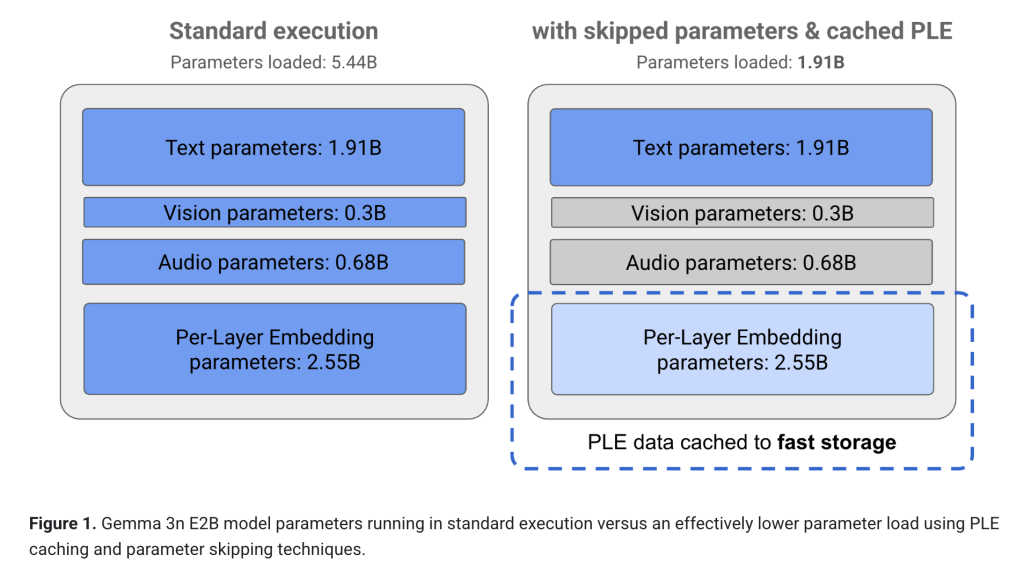

The core innovation in Gemma 3n is the application of Per-Layer Embeddings (PLE), a method that drastically reduces RAM usage. Although the models contain 5 billion and 8 billion parameters, their memory footprints are comparable to those of 2-billion- and 4-billion-parameter models. Dynamic memory consumption is just 2GB for the 5B model and 3GB for the 8B version. Gemma 3n also uses a nested configuration, trained with a technique known as MatFormer, in which the model with a 4B active memory footprint contains a 2B submodel. This allows developers to switch performance modes dynamically without loading separate models. Further advancements include key-value cache (KVC) sharing and activation quantization, which reduce latency and increase response speed. For example, Gemma 3n responds about 1.5x faster on mobile than Gemma 3 4B, with better output quality.
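To make the nested-submodel idea more concrete, here is a minimal sketch of a feed-forward block in which a smaller submodel reuses the first hidden units of a larger one, which is the general idea behind MatFormer-style nesting. This is an illustration under stated assumptions, not Gemma 3n's actual implementation: the function name `nested_ffn`, the tiny weight matrices, and the choice of ReLU are all hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def relu(value: float) -> float:
    return value if value > 0.0 else 0.0


def nested_ffn(x: List[float], w_in: Matrix, w_out: Matrix, hidden_units: int) -> List[float]:
    """Run a feed-forward block using only the first `hidden_units` hidden neurons.

    w_in has one row per hidden neuron; w_out has one column per hidden neuron.
    A smaller submodel is a prefix slice of the same weights, so no second
    set of weights has to be loaded to switch sizes.
    """
    if not 0 < hidden_units <= len(w_in):
        raise ValueError("hidden_units must be between 1 and the full hidden size")
    # Hidden activations from the first `hidden_units` rows of w_in.
    hidden = [relu(sum(w * xi for w, xi in zip(row, x))) for row in w_in[:hidden_units]]
    # Project back using the matching first columns of w_out.
    return [sum(row[j] * hidden[j] for j in range(hidden_units)) for row in w_out]


# Hypothetical toy weights: 2 input features, 4 hidden units, 2 outputs.
W_IN: Matrix = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 1.0]]
W_OUT: Matrix = [[1.0, 1.0, 1.0, 1.0], [1.0, -1.0, 1.0, -1.0]]
X = [1.0, 2.0]

full_output = nested_ffn(X, W_IN, W_OUT, hidden_units=4)   # "large" mode
small_output = nested_ffn(X, W_IN, W_OUT, hidden_units=2)  # nested "small" mode
```

Both calls read from the same `W_IN` and `W_OUT`; only the slice width changes. That shared storage is what lets a caller trade quality for latency at run time without loading a separate model.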

The performance metrics achieved by Gemma 3n reinforce its suitability for mobile deployment. It excels in automatic speech recognition and translation, converting speech directly into translated text. On the multilingual benchmark WMT24++ (ChrF), it scores 50.1%, highlighting its strength in Japanese, German, Korean, Spanish, and French. Its mix’n’match capability allows the creation of submodels optimized for various quality and latency combinations, offering developers further customization. The architecture supports interleaved inputs from different modalities (text, audio, images, and video), allowing more natural and context-rich interactions. It also runs offline, ensuring privacy and reliability even without network connectivity. Use cases include live visual and auditory feedback, context-aware content generation, and advanced voice-based applications.
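For readers unfamiliar with ChrF, the metric compares character n-grams in a system translation against a reference and combines precision and recall into an F-score that weights recall more heavily. The sketch below is a simplified version of that idea, not the scoring script used for the reported 50.1% figure. It assumes n-grams up to length 6, a recall weight of `beta = 2`, and that spaces are ignored; the function names are hypothetical.

```python
from collections import Counter


def char_ngrams(text: str, n: int) -> Counter:
    """Count character n-grams, ignoring spaces (a simplifying assumption)."""
    chars = text.replace(" ", "")
    return Counter(chars[i:i + n] for i in range(len(chars) - n + 1))


def chrf(hypothesis: str, reference: str, max_n: int = 6, beta: float = 2.0) -> float:
    """Simplified ChrF on a 0-100 scale: F-beta over averaged n-gram precision and recall."""
    precisions, recalls = [], []
    for n in range(1, max_n + 1):
        hyp = char_ngrams(hypothesis, n)
        ref = char_ngrams(reference, n)
        if not hyp or not ref:
            continue  # one of the strings is shorter than n characters
        overlap = sum((hyp & ref).values())  # clipped n-gram matches
        precisions.append(overlap / sum(hyp.values()))
        recalls.append(overlap / sum(ref.values()))
    if not precisions:
        return 0.0
    precision = sum(precisions) / len(precisions)
    recall = sum(recalls) / len(recalls)
    if precision == 0.0 or recall == 0.0:
        return 0.0
    return 100.0 * (1 + beta ** 2) * precision * recall / (beta ** 2 * precision + recall)


identical = chrf("abc", "abc")              # perfect match
disjoint = chrf("abc", "xyz")               # no shared characters
partial = chrf("the cat", "the cat sat")    # hypothesis misses part of the reference
```

Because matching happens at the character level, ChrF gives partial credit for near-miss word forms, which is one reason it is commonly used for morphologically rich languages.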

Key takeaways from the research on Gemma 3n:

- Built through a collaboration among Google, Google DeepMind, Qualcomm, MediaTek, and Samsung System LSI, and designed for mobile-first deployment.

- Raw model size of 5B and 8B parameters, with operational footprints of 2GB and 3GB, respectively, using Per-Layer Embeddings (PLE).

- 1.5x faster response on mobile vs Gemma 3 4B. Multilingual benchmark score of 50.1% on WMT24++ (ChrF).

- Accepts and understands audio, text, image, and video, enabling complex multimodal processing and interleaved inputs.

- Supports dynamic trade-offs using MatFormer training with nested submodels and mix’n’match capabilities.

- Operates without an internet connection, ensuring privacy and reliability.

- Preview is available via Google AI Studio and Google AI Edge, with text and image processing capabilities.
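As a back-of-the-envelope illustration of why the number of parameters held in memory matters, the sketch below estimates weight storage from a parameter count and a precision. The 4-bit precision and the helper name `weight_memory_gb` are assumptions for illustration only. The article does not state the precision Gemma 3n uses, and its reported 2GB and 3GB figures also cover runtime state such as activations and caches, so this arithmetic is not expected to reproduce them.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Assumed 4-bit weights; parameter counts taken from the article.
all_params_resident = weight_memory_gb(5e9, bits_per_weight=4)   # every parameter in RAM
effective_params_only = weight_memory_gb(2e9, bits_per_weight=4)  # only the 2B-equivalent core
savings_gb = all_params_resident - effective_params_only
```

Under these assumptions, keeping only a 2B-equivalent core resident rather than all 5B parameters cuts weight storage from 2.5 GB to 1.0 GB, which is the kind of reduction that techniques such as PLE are meant to achieve.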

In conclusion, Gemma 3n provides a clear pathway for making high-performance AI portable and private. By tackling RAM constraints through architectural changes and strengthening multilingual and multimodal capabilities, the researchers offer a viable way to bring sophisticated AI directly onto everyday devices. Flexible submodel switching, offline operation, and fast response times together balance computational efficiency, user privacy, and responsiveness. The result is a system capable of delivering real-time AI experiences without sacrificing capability or versatility, expanding what users can expect from on-device intelligence.

The post Google DeepMind Releases Gemma 3n: A Compact, High-Efficiency Multimodal AI Model for Real-Time On-Device Use appeared first on MarkTechPost.
