In machine learning, sequence models are designed to process data with temporal structure, such as language, time series, or signals. These models track dependencies across time steps, making it possible to generate coherent outputs by learning from the progression of inputs. Neural architectures like recurrent neural networks and attention mechanisms manage temporal relationships through internal states. The ability of a model to remember and relate previous inputs to current tasks depends on how well it utilizes its memory mechanisms, which are crucial in determining model effectiveness across real-world tasks involving sequential data.

One of the persistent challenges in the study of sequence models is determining how memory is used during computation. While the size of a model’s memory—often measured as state or cache size—is easy to quantify, it does not reveal whether that memory is being effectively used. Two models might have similar memory capacities but very different ways of applying that capacity during learning. This discrepancy means existing evaluations fail to capture critical nuances in model behavior, leading to inefficiencies in design and optimization. A more refined metric is needed to observe memory utilization rather than mere memory size.

Previous approaches to understanding memory use in sequence models relied on surface-level indicators. Visualizations of operators, such as attention maps, and basic metrics, such as model width and cache capacity, provided some insight. However, these methods are limited because they often apply only to narrow classes of models or do not account for important architectural features like causal masking. Further, techniques like spectral analysis rest on assumptions that do not hold across all models, especially those with dynamic or input-varying structures. As a result, they fall short of guiding how models can be optimized or compressed without degrading performance.

Researchers from Liquid AI, The University of Tokyo, RIKEN, and Stanford University introduced an Effective State-Size (ESS) metric to measure how much of a model’s memory is truly being utilized. ESS is developed using principles from control theory and signal processing, and it targets a general class of models that include input-invariant and input-varying linear operators. These cover a range of structures such as attention variants, convolutional layers, and recurrence mechanisms. ESS operates by analyzing the rank of submatrices within the operator, specifically focusing on how past inputs contribute to current outputs, providing a measurable way to assess memory utilization.
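As a rough illustration of the idea, assume a single-channel causal operator written as a matrix. The symbols $T$, $u$, $y$, $H_i$, and the exact index convention below are notation chosen for this sketch and may differ from the paper's.

$$
y = T u, \qquad T \in \mathbb{R}^{\ell \times \ell}, \qquad T_{tj} = 0 \ \text{for } j > t
$$

$$
H_i = T_{i:,\;:i}, \qquad \mathrm{ESS}_i = \operatorname{rank}(H_i)
$$

Here $H_i$ is the block of $T$ that maps inputs before index $i$ to outputs from index $i$ onward. For example, the scalar recurrence $x_t = a\,x_{t-1} + u_t$, $y_t = x_t$ gives $T_{tj} = a^{t-j}$ for $j \le t$. Every block $H_i$ then factors as a column vector times a row vector, so its rank is 1, which matches the single number the recurrence carries forward as state.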

The calculation of ESS is grounded in analyzing the rank of operator submatrices that link earlier input segments to later outputs. Two variants were developed: tolerance-ESS, which uses a user-defined threshold on singular values, and entropy-ESS, which uses normalized spectral entropy for a more adaptive view. Both methods are designed to handle practical computation issues and are scalable across multi-layer models. The ESS can be computed per channel and sequence index and aggregated as average or total ESS for comprehensive analysis. The researchers emphasize that ESS is a lower bound on required memory and can reflect dynamic patterns in model learning.
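The two variants can be sketched in a few lines of NumPy. This is a minimal sketch under stated assumptions, not the authors' implementation: the function names, the default tolerance, the block indexing `T[i:, :i]`, and the choice to report entropy-ESS as the exponential of the entropy of the normalized singular values are all assumptions made for illustration.

```python
import numpy as np


def _block_singular_values(T, i):
    """Singular values of the block mapping inputs before i to outputs from i on."""
    block = T[i:, :i]
    if block.size == 0:  # no past inputs (i == 0) or no future outputs
        return np.zeros(0)
    return np.linalg.svd(block, compute_uv=False)


def tolerance_ess(T, i, tol=1e-6):
    """Count singular values above a user-chosen threshold (assumed default)."""
    s = _block_singular_values(T, i)
    return int(np.sum(s > tol))


def entropy_ess(T, i, eps=1e-12):
    """Exponential of the entropy of the normalized singular values (assumed form)."""
    s = _block_singular_values(T, i)
    total = s.sum()
    if total <= eps:
        return 0.0
    p = s / total
    p = p[p > eps]  # drop zeros so that log is well defined
    return float(np.exp(-np.sum(p * np.log(p))))


def scalar_recurrence_operator(a, length):
    """Toy operator for x_t = a * x_{t-1} + u_t, y_t = x_t: T[t, j] = a**(t - j)."""
    idx = np.arange(length)
    diff = idx[:, None] - idx[None, :]
    return np.where(diff >= 0, float(a) ** np.clip(diff, 0, None), 0.0)


def average_tolerance_ess(T, tol=1e-6):
    """Average tolerance-ESS over sequence indices 1..length-1."""
    length = T.shape[0]
    return float(np.mean([tolerance_ess(T, i, tol) for i in range(1, length)]))


if __name__ == "__main__":
    T = scalar_recurrence_operator(0.5, 8)
    print(tolerance_ess(T, 4), entropy_ess(T, 4), average_tolerance_ess(T))
```

The tolerance variant gives an integer that depends on the chosen threshold, while the entropy variant gives a continuous value that shrinks as the spectrum concentrates on fewer singular values. Per-channel results could be averaged or summed in the same way as the per-index results here.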

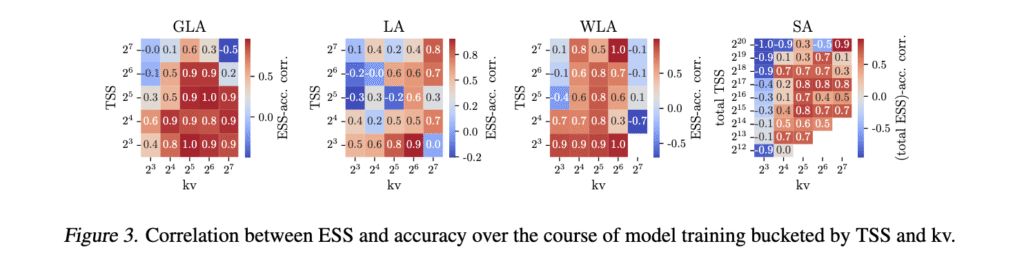

Empirical evaluation showed that ESS correlates closely with performance across various tasks. In multi-query associative recall (MQAR) tasks, ESS normalized by the number of key-value pairs (ESS/kv) showed a stronger correlation with model accuracy than theoretical state-size normalized the same way (TSS/kv). For instance, models with high ESS consistently achieved higher accuracy. The study also revealed two failure modes in model memory usage: state saturation, where ESS nearly equals TSS, and state collapse, where ESS stays well below TSS and much of the available state goes unused. ESS was also applied to model compression via distillation: higher ESS in teacher models resulted in greater loss when compressing to smaller models, showing ESS’s utility in predicting compressibility. It also tracked how end-of-sequence tokens modulated memory use in large language models like Falcon Mamba 7B.
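The two failure modes can be read off the ratio of ESS to TSS. The sketch below is only illustrative: the function names and the cut-off values `saturation_at` and `collapse_at` are hypothetical and are not taken from the study.

```python
def state_utilization(ess, tss):
    """Fraction of the theoretical state-size that is effectively used."""
    if tss <= 0:
        raise ValueError("tss must be positive")
    return ess / tss


def diagnose_state(ess, tss, saturation_at=0.9, collapse_at=0.1):
    """Label a model's memory use; both thresholds are hypothetical."""
    ratio = state_utilization(ess, tss)
    if ratio >= saturation_at:
        return "saturation"  # ESS is close to TSS
    if ratio <= collapse_at:
        return "collapse"    # ESS is far below TSS
    return "neither"


def per_kv(value, num_kv_pairs):
    """Normalize ESS or TSS by the number of key-value pairs, as in ESS/kv."""
    if num_kv_pairs <= 0:
        raise ValueError("num_kv_pairs must be positive")
    return value / num_kv_pairs


if __name__ == "__main__":
    print(diagnose_state(ess=58, tss=64))
    print(diagnose_state(ess=2, tss=64))
    print(per_kv(32, 16))
```

In practice, suitable thresholds would depend on the task and architecture, so the labels are best treated as a prompt for closer inspection rather than a verdict.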

The study outlines a precise and effective approach to closing the gap between theoretical memory size and actual memory use in sequence models. Through the development of ESS, the researchers offer a robust metric that brings clarity to model evaluation and optimization. It paves the way for designing more efficient sequence models and for using ESS in regularization, initialization, and model compression strategies grounded in clear, quantifiable memory behavior.

All credit for this research goes to the researchers of this project.

The post This AI Paper Introduces Effective State-Size (ESS): A Metric to Quantify Memory Utilization in Sequence Models for Performance Optimization appeared first on MarkTechPost.