Mathematical reasoning has long been a formidable challenge for AI, demanding not only an understanding of abstract concepts but also precise multi-step logical deduction. Traditional language models, while adept at generating fluent text, often struggle with complex mathematical problems that require both deep domain knowledge and structured reasoning. This gap has driven research toward specialized architectures and training regimens designed to give models robust mathematical capabilities. By focusing on targeted datasets and fine-tuning strategies, AI developers aim to connect natural language understanding with formal mathematical problem-solving.

NVIDIA has introduced OpenMath-Nemotron-32B and OpenMath-Nemotron-14B-Kaggle, two models built for mathematical reasoning tasks. Building on the Qwen family of transformer models, these Nemotron variants are fine-tuned at scale on an extensive corpus of mathematical problems known as the OpenMathReasoning dataset. The design philosophy behind both releases centers on maximizing accuracy on competitive benchmarks while keeping inference speed and resource efficiency practical. By offering multiple model sizes and configurations, NVIDIA gives researchers and practitioners a flexible toolkit for integrating advanced math capabilities into diverse applications.

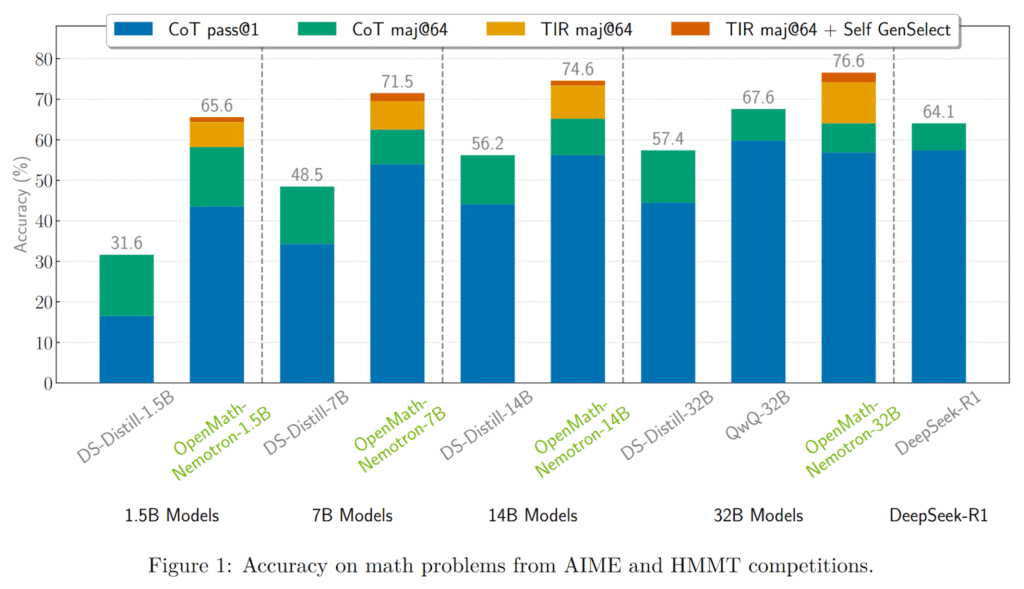

OpenMath-Nemotron-32B is the flagship of the series, with 32.8 billion parameters stored as BF16 tensors. It is built by fine-tuning Qwen2.5-32B on the OpenMathReasoning dataset, a curated collection that emphasizes challenging problems drawn from mathematical Olympiads and standardized exams. The model achieves state-of-the-art results on several rigorous benchmarks, including the American Invitational Mathematics Examination (AIME) 2024 and 2025, the Harvard–MIT Mathematics Tournament (HMMT) 2024-25, and the math subset of Humanity's Last Exam (HLE-Math). In its tool-integrated reasoning (TIR) configuration, OpenMath-Nemotron-32B reaches an average pass@1 score of 78.4% on AIME24 and a majority-voting accuracy of 93.3%, surpassing previous top-performing models.
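The two metrics quoted above measure different things: pass@1 averages the correctness of individual samples, while majority voting scores only the most frequent answer among them. The following is a minimal sketch for a single problem. The sample answers and the reference are made up for illustration, and the helper names are hypothetical, not part of any NVIDIA tooling.

```python
from collections import Counter


def pass_at_1(samples: list[str], reference: str) -> float:
    """Average pass@1: fraction of independent samples that match the reference."""
    return sum(answer == reference for answer in samples) / len(samples)


def majority_vote(samples: list[str]) -> str:
    """Most frequent answer; ties go to the answer that appeared first."""
    return Counter(samples).most_common(1)[0][0]


# Hypothetical final answers from eight independent generations for one problem.
samples = ["204", "204", "196", "204", "210", "204", "196", "204"]
reference = "204"

print(pass_at_1(samples, reference))        # 5 of 8 samples are correct: 0.625
print(majority_vote(samples) == reference)  # the plurality answer is correct: True
```

A problem counts as solved under majority voting whenever the correct answer is the most common one, which is why a benchmark-level majority score can sit well above the corresponding pass@1.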

To accommodate different inference scenarios, OpenMath-Nemotron-32B supports three distinct modes: chain-of-thought (CoT), tool-integrated reasoning (TIR), and generative solution selection (GenSelect). In CoT mode, the model generates intermediate reasoning steps before presenting a final answer, achieving a pass@1 accuracy of 76.5% on AIME24. When augmented with GenSelect, in which the model reviews multiple candidate solutions and selects the most promising one, performance improves further, reaching 93.3% on the same benchmark. These configurations let users balance explanation richness against answer precision, serving research environments that require transparency as well as production settings that prioritize speed and reliability.
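To make the TIR mode more concrete, here is a rough sketch of the control loop such a mode implies: the model writes a code block, a tool executes it, and the output is appended to the context before generation continues. Everything here is an assumption made for illustration, including the fence-based markers for code and output and the `fake_generate` stand-in for the model. It is not the NeMo-Skills implementation, and the bare `exec` would have to be replaced by a proper sandbox in any real deployment.

```python
import contextlib
import io
import re

FENCE = "`" * 3  # built at runtime so the example nests cleanly inside markdown
CODE_BLOCK = re.compile(FENCE + r"python\n(.*?)" + FENCE, re.DOTALL)


def run_python(code: str) -> str:
    """Run code and capture stdout. No sandboxing here: illustration only."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(code, {})
    return buffer.getvalue().strip()


def tir_loop(generate, problem: str, max_rounds: int = 4) -> str:
    """Alternate between model text and tool output until no code is emitted."""
    transcript = problem
    for _ in range(max_rounds):
        step = generate(transcript)
        transcript += "\n" + step
        match = CODE_BLOCK.search(step)
        if match is None:
            break  # no tool call, so treat this step as the final answer
        output = run_python(match.group(1))
        transcript += f"\n{FENCE}output\n{output}\n{FENCE}"
    return transcript


def fake_generate(transcript: str) -> str:
    """Hypothetical stand-in for the model: one tool call, then an answer."""
    marker = FENCE + "output\n"
    if marker not in transcript:
        return f"Compute the sum directly.\n{FENCE}python\nprint(sum(range(1, 101)))\n{FENCE}"
    result = transcript.rsplit(marker, 1)[1].split("\n")[0]
    return f"The answer is \\boxed{{{result}}}."


transcript = tir_loop(fake_generate, "What is 1 + 2 + ... + 100?")
print(transcript.splitlines()[-1])
```

Swapping `fake_generate` for a call to a served model would keep the loop unchanged. The `max_rounds` cap is a design choice to bound the number of tool calls per problem.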

Complementing the 32 billion-parameter variant, NVIDIA has also released OpenMath-Nemotron-14B-Kaggle, a 14.8 billion-parameter model fine-tuned on a strategically selected subset of the OpenMathReasoning dataset to optimize for competitive performance. This version served as the cornerstone of NVIDIA’s first-place solution in the AIMO-2 Kaggle competition, a contest that focused on automated problem-solving techniques for advanced mathematical challenges. By calibrating the training data to emphasize problems reflective of the competition’s format and difficulty, the 14B-Kaggle model demonstrated exceptional adaptability, outpacing rival approaches and securing the top leaderboard position.

OpenMath-Nemotron-14B-Kaggle comes close to its larger counterpart: in CoT mode it achieves a pass@1 accuracy of 73.7% on AIME24, rising to 86.7% with majority voting over 64 samples (maj@64). On AIME25 it achieves a pass@1 of 57.9% (maj@64 of 73.3%), and on HMMT-24-25 it attains 50.5% (maj@64 of 64.8%). These figures highlight the model's ability to deliver high-quality solutions with a more compact parameter footprint, making it well suited to scenarios where resource constraints or inference latency are critical factors.

Both OpenMath-Nemotron models are accompanied by an open‐source pipeline, enabling full reproducibility of data generation, training procedures, and evaluation protocols. NVIDIA has integrated these workflows into its NeMo-Skills framework, providing reference implementations for CoT, TIR, and GenSelect inference modes. With example code snippets that demonstrate how to instantiate a transformer pipeline, configure dtype and device mapping, and parse model outputs, developers can rapidly prototype applications that query these models for step-by-step solutions or streamlined final answers.
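As a rough illustration of those three steps (instantiating a pipeline, setting dtype and device mapping, and parsing the output), the sketch below uses the Hugging Face `transformers` text-generation pipeline. The repository id, the prompt wording, and the convention that the final answer appears inside `\boxed{}` are assumptions here and should be checked against the model card. The helpers `build_messages`, `extract_boxed`, and `solve` are hypothetical names introduced for this example.

```python
from typing import Optional

MODEL_ID = "nvidia/OpenMath-Nemotron-14B-Kaggle"  # assumed Hugging Face repo id
PROMPT = (  # assumed prompt wording; verify against the model card
    "Solve the following math problem. "
    "Make sure to put the answer (and only answer) inside \\boxed{}.\n\n"
)


def build_messages(problem: str) -> list:
    """Wrap the problem in a single-turn chat message."""
    return [{"role": "user", "content": PROMPT + problem}]


def extract_boxed(text: str) -> Optional[str]:
    """Return the contents of the last \\boxed{...}, allowing nested braces."""
    start = text.rfind("\\boxed{")
    if start == -1:
        return None
    depth, chars = 1, []
    for ch in text[start + len("\\boxed{"):]:
        if ch == "{":
            depth += 1
        elif ch == "}":
            depth -= 1
            if depth == 0:
                return "".join(chars)
        chars.append(ch)
    return None  # braces never balanced


def solve(problem: str, max_new_tokens: int = 4096) -> Optional[str]:
    """Load the model and return its boxed answer. Needs a GPU with enough memory."""
    import torch
    import transformers

    pipe = transformers.pipeline(
        "text-generation",
        model=MODEL_ID,
        model_kwargs={"torch_dtype": torch.bfloat16},  # BF16 weights
        device_map="auto",  # let the library place layers across available devices
    )
    outputs = pipe(build_messages(problem), max_new_tokens=max_new_tokens)
    reply = outputs[0]["generated_text"][-1]["content"]  # last chat turn
    return extract_boxed(reply)
```

Calling `solve("What is the remainder when 2^10 is divided by 7?")` would download the weights on first use. The heavy imports live inside `solve` so that the parsing helpers can be tested without a GPU.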

Under the hood, both models are optimized to run efficiently on NVIDIA GPU architectures, ranging from the Ampere to the Hopper microarchitectures, leveraging highly tuned CUDA libraries and TensorRT optimizations. For production deployments, users can serve models via Triton Inference Server, enabling low-latency, high-throughput integrations in web services or batch processing pipelines. Storing weights in BF16 halves the memory footprint relative to FP32 while keeping the same exponent range, which helps these large models fit within GPU memory constraints while maintaining robust performance across various hardware platforms.
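A quick back-of-the-envelope calculation shows what the BF16 format means for memory. The sketch below counts weights only, using the parameter counts quoted in this article. It ignores the KV cache, activations, and framework overhead, so actual GPU requirements will be higher.

```python
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Memory needed for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


models = {
    "OpenMath-Nemotron-32B": 32.8e9,
    "OpenMath-Nemotron-14B-Kaggle": 14.8e9,
}

for name, params in models.items():
    bf16 = weight_memory_gb(params, "bf16")
    fp32 = weight_memory_gb(params, "fp32")
    print(f"{name}: {bf16:.1f} GB in BF16 vs {fp32:.1f} GB in FP32")
```

By this estimate the 32B weights take about 65.6 GB in BF16 and the 14B-Kaggle weights about 29.6 GB, half of what FP32 storage would need.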

Key takeaways from the release of OpenMath-Nemotron-32B and OpenMath-Nemotron-14B-Kaggle:

- NVIDIA’s OpenMath-Nemotron series addresses the longstanding challenge of equipping language models with robust mathematical reasoning through targeted fine-tuning on the OpenMathReasoning dataset.

- The 32B-parameter variant achieves state-of-the-art accuracy on benchmarks like AIME24/25 and HMMT, offering three inference modes (CoT, TIR, GenSelect) to balance explanation richness and precision.

- The 14B-parameter “Kaggle” model, fine-tuned on a competition-focused subset, secured first place in the AIMO-2 Kaggle competition while maintaining high pass@1 scores, demonstrating efficiency in a smaller footprint.

- Both models are fully reproducible via an open-source pipeline integrated into NVIDIA’s NeMo-Skills framework, with reference implementations for all inference modes.

- Optimized for NVIDIA GPUs (Ampere and Hopper), the models leverage BF16 tensor operations, CUDA libraries, TensorRT, and Triton Inference Server for low-latency, high-throughput deployments.

- Potential applications include AI-driven tutoring systems, academic competition preparation tools, and integration into scientific computing workflows requiring formal or symbolic reasoning.

- Future directions may expand to advanced university-level mathematics, multimodal inputs (e.g., handwritten equations), and tighter integration with symbolic computation engines to verify and augment generated solutions.


The post NVIDIA AI Releases OpenMath-Nemotron-32B and 14B-Kaggle: Advanced AI Models for Mathematical Reasoning that Secured First Place in the AIMO-2 Competition and Set New Benchmark Records appeared first on MarkTechPost.
