Large language models (LLMs) show impressive capabilities across numerous applications, yet their computational demands and memory requirements make them difficult to deploy. The problem is acute in scenarios that require local deployment for privacy reasons, such as processing sensitive patient records, and in compute-constrained environments like real-time customer service systems and edge devices. Post-training quantization (PTQ) is a promising solution that compresses pre-trained models efficiently, reducing memory consumption by 2-4 times. However, current PTQ methods hit a bottleneck at 4-bit compression, with substantial performance degradation at 2- or 3-bit precision. Most PTQ methods rely on small mini-batches of general-purpose pre-training data to account for activation changes resulting from quantization.

Current quantization methods for LLM compression fall primarily into three categories. Uniform quantization is the most basic approach: weights stored as 16-bit float tensors are compressed by treating each row independently and mapping floats to integers based on the maximum and minimum values within each channel. GPTQ-based quantization techniques advance this concept by focusing on layerwise reconstruction, aiming to minimize reconstruction loss after quantization. Mixed-precision quantization methods offer a more nuanced strategy, moving beyond a fixed precision for all weights. These techniques assign bit-widths based on weight importance to maintain performance, with some approaches preserving high-sensitivity “outlier” weights at higher precision.
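To make the uniform baseline concrete, here is a minimal NumPy sketch of per-row min-max quantization as described above. The function names (`quantize_rows_uniform`, `dequantize_rows`) and the random test matrix are illustrative assumptions, not code from the paper or from any quantization library.

```python
import numpy as np

def quantize_rows_uniform(weights, bits):
    """Min-max uniform quantization, applied to each row (channel) independently."""
    w = np.asarray(weights, dtype=np.float32)
    levels = 2 ** bits - 1  # highest integer code, e.g. 7 for 3 bits
    w_min = w.min(axis=1, keepdims=True)
    w_max = w.max(axis=1, keepdims=True)
    # Guard against constant rows, where max == min would give a zero step size.
    scale = np.where(w_max > w_min, (w_max - w_min) / levels, 1.0).astype(np.float32)
    codes = np.clip(np.round((w - w_min) / scale), 0, levels).astype(np.uint8)
    return codes, scale, w_min

def dequantize_rows(codes, scale, w_min):
    """Map integer codes back to approximate float weights."""
    return codes.astype(np.float32) * scale + w_min

# Hypothetical 4x16 weight matrix, quantized to 3 bits per weight.
rng = np.random.default_rng(0)
W = rng.normal(size=(4, 16)).astype(np.float32)
codes, scale, w_min = quantize_rows_uniform(W, bits=3)
W_hat = dequantize_rows(codes, scale, w_min)
max_err = np.abs(W - W_hat).max()  # bounded by half a quantization step
```

Because each row gets its own minimum and step size, a single large weight only stretches the grid for its own channel. It still costs precision for every other weight in that row, which is one motivation for keeping a few weights at higher precision.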

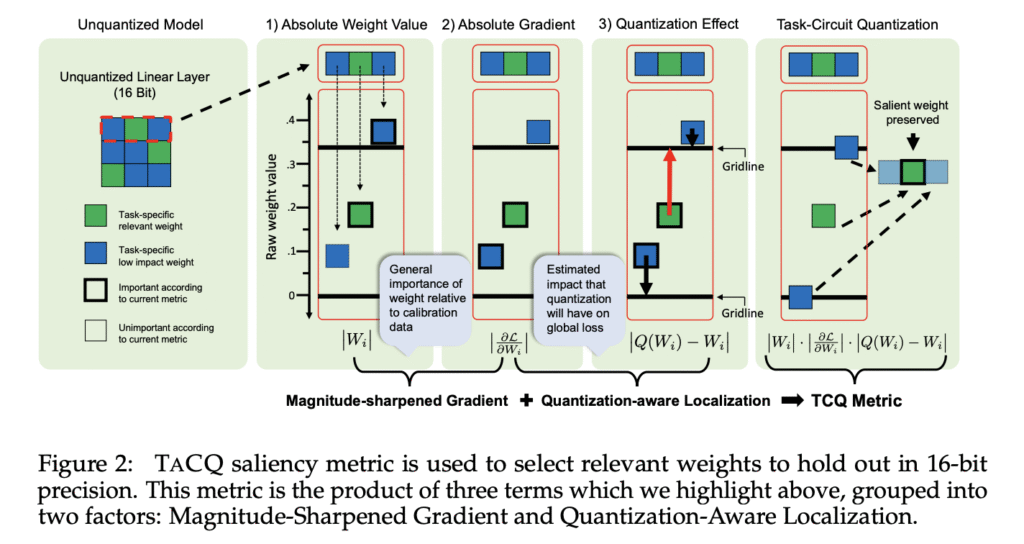

Researchers from UNC Chapel Hill have proposed a novel mixed-precision post-training quantization approach called Task-Circuit Quantization (TACQ). The method resembles automated circuit discovery in that it directly conditions the quantization process on specific weight circuits, defined as sets of weights associated with downstream task performance. TACQ compares unquantized model weights with uniformly quantized ones to estimate the expected weight changes from quantization, then uses gradient information to predict their impact on task performance, which lets it preserve task-specific weights. TACQ consistently outperforms baselines given the same calibration data and lower weight budgets, and achieves significant improvements in the challenging 2-bit and 3-bit regimes.
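One way to read the "expected weight change plus gradient" idea is as a first-order Taylor estimate of how the task loss moves when a weight matrix is quantized. The notation below ($\mathcal{L}$ for the task loss, $W$ for the unquantized weights, $Q(W)$ for their uniformly quantized counterpart) is introduced here for illustration and may differ from the paper's:

$$
\mathcal{L}\big(Q(W)\big) - \mathcal{L}(W) \;\approx\; \sum_{i,j} \frac{\partial \mathcal{L}}{\partial W_{ij}} \, \big(Q(W)_{ij} - W_{ij}\big)
$$

Under this reading, each term in the sum is the predicted contribution of a single weight to the loss change, so weights with a large term are natural candidates to keep at higher precision.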

TACQ is defined by a saliency metric that identifies critical weights to preserve during quantization, building on concepts from model interpretability like automatic circuit discovery, knowledge localization, and input attribution. This metric uses two components:

- Quantization-aware Localization (QAL): Traces how model performance is affected by quantization, using estimates of the expected weight changes it causes.

- Magnitude-sharpened Gradient (MSG): A generalized metric for absolute weight importance adapted from input attribution techniques.

MSG helps stabilize TACQ and addresses biases from QAL’s estimations. These factors combine into a unified saliency metric that can be efficiently evaluated for every weight in a single backward pass, allowing preservation of the top p% highest-scoring weights at 16-bit precision.
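The following NumPy sketch shows how a per-weight saliency score of this kind could drive mixed-precision selection. It is a sketch under stated assumptions, not the authors' implementation: the article does not give the exact formula, so combining the terms as an elementwise product of weight magnitude, gradient, and expected weight change is an assumption, as are the function names, the crude stand-in quantizer, the random stand-in gradient, and the 1% budget.

```python
import numpy as np

def saliency(w, w_quant, grad):
    """Assumed saliency: |w| * |grad * (w_quant - w)|, evaluated per weight."""
    expected_change = w_quant - w              # QAL-style: expected change from quantization
    qal_term = np.abs(grad * expected_change)  # predicted effect on the task loss
    return np.abs(w) * qal_term                # MSG-style sharpening by weight magnitude

def top_percent_mask(scores, percent):
    """Boolean mask that selects the top `percent`% highest-scoring entries."""
    k = max(1, int(np.ceil(scores.size * percent / 100.0)))
    flat = scores.ravel()
    top_idx = np.argpartition(flat, flat.size - k)[flat.size - k:]
    mask = np.zeros(flat.size, dtype=bool)
    mask[top_idx] = True
    return mask.reshape(scores.shape)

def average_bits(percent, low_bits, high_bits=16):
    """Average bits per weight, ignoring the cost of storing which weights are kept."""
    frac = percent / 100.0
    return frac * high_bits + (1.0 - frac) * low_bits

rng = np.random.default_rng(0)
W = rng.normal(size=(8, 32)).astype(np.float32)
W_q = np.round(W * 2) / 2                        # crude stand-in for a low-bit quantizer
G = rng.normal(size=W.shape).astype(np.float32)  # stand-in for a task-loss gradient

scores = saliency(W, W_q, G)
mask = top_percent_mask(scores, percent=1.0)     # hypothetical 1% budget
W_mixed = np.where(mask, W, W_q)                 # salient weights keep their original values
```

In a real pipeline the gradient would come from a backward pass of the task loss on calibration data rather than from random numbers. As a rough budget check, `average_bits(1.0, 2)` works out to about 2.14 bits per weight, which shows why preserving a small fraction of weights at 16 bits adds little to the overall footprint.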

In the challenging 2-bit setting, TACQ outperforms SliM-LLM with absolute margin improvements of 16.0% (from 20.1% to 36.1%) on GSM8k, 14.1% (from 34.8% to 49.2%) on MMLU, and 21.9% (from 0% to 21.9%) on Spider. Other baseline methods like GPTQ, SqueezeLLM, and SpQR deteriorate to near-random performance at this compression level. At 3-bit precision, TACQ preserves approximately 91%, 96%, and 89% of the unquantized accuracy on GSM8k, MMLU, and Spider, respectively, while outperforming the strongest baseline, SliM-LLM, by 1-2% across most datasets. TACQ’s advantages are most evident in generation tasks requiring sequential token outputs: it is the only method capable of recovering non-negligible performance in the 2-bit setting on the Spider text-to-SQL task.

In conclusion, the researchers introduced TACQ, a significant advancement in task-aware post-training quantization. It improves model performance at ultra-low bit-widths (2 to 3 bits), where previous methods degrade to near-random outputs. TACQ aligns with automatic circuit discovery research by selectively preserving only a small fraction of salient weights at 16-bit precision, which suggests that sparse weight “circuits” disproportionately influence specific tasks. Moreover, experiments on Spider show that TACQ better preserves model generation capabilities, making it suitable for program-prediction tasks. The same holds for agentic settings, where models frequently generate many executable outputs and efficiency is a concern.


The post LLMs Can Now Retain High Accuracy at 2-Bit Precision: Researchers from UNC Chapel Hill Introduce TACQ, a Task-Aware Quantization Approach that Preserves Critical Weight Circuits for Compression Without Performance Loss appeared first on MarkTechPost.
