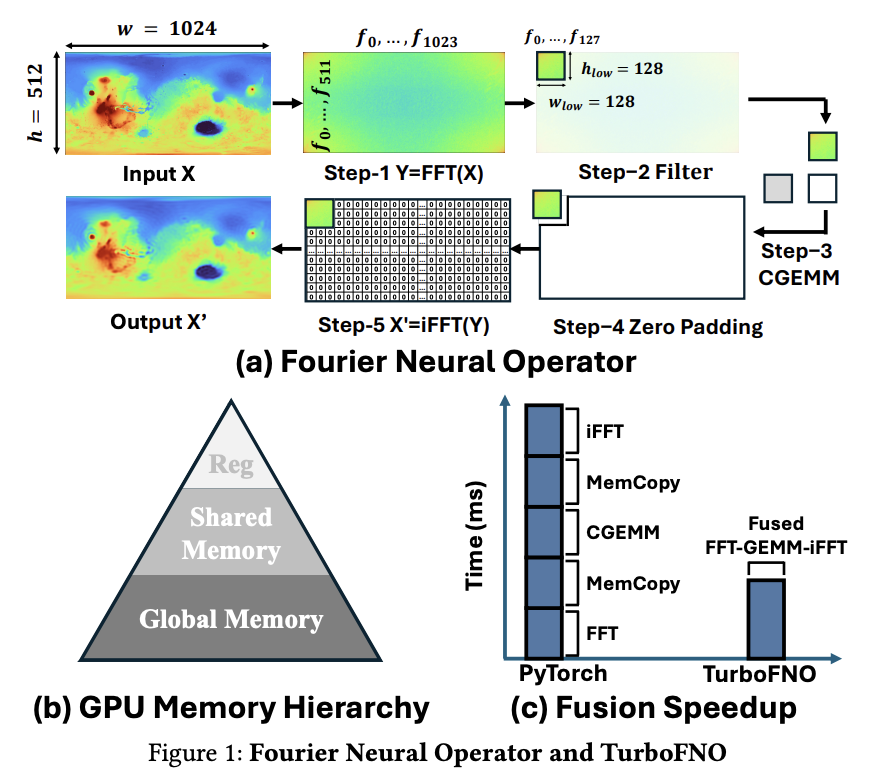

Fourier Neural Operators (FNO) are powerful tools for learning the solution operators of partial differential equations, but they lack architecture-aware optimizations. Their Fourier layer executes FFT, filtering, GEMM, zero padding, and iFFT as separate stages, which results in multiple kernel launches and excessive global memory traffic. The FFT -> GEMM -> iFFT computational pattern has received little attention in terms of GPU kernel fusion and memory layout optimization. Existing scientific codes that rely on this pattern, such as Quantum ESPRESSO, Octopus, and CP2K, make separate calls to FFT and BLAS routines. This approach has three limitations: partial frequency utilization that requires additional memory copy operations, the lack of native frequency filtering in cuFFT, and excessive memory transactions between processing stages.

FNO implements a pipeline that begins with a forward FFT on input feature maps, applies spectral filtering, and reconstructs output through inverse FFT. This process necessitates frequency domain truncation and zero-padding steps, which current frameworks like PyTorch execute as separate memory-copy kernels due to cuFFT’s limitations in native input/output trimming support. Leading FFT libraries such as cuFFT and VkFFT lack built-in data truncation capabilities. Traditional 2D FFTs apply both 1D-FFT stages along spatial dimensions, but FNO applies spectral weights across the channel dimension, suggesting an opportunity for decoupling the FFT stages by keeping the first 1D FFT along spatial axes while reinterpreting the second FFT stage along the hidden dimension.
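The staged pipeline described above can be sketched in NumPy to make the separate steps visible. This is a minimal illustration and not TurboFNO or PyTorch code: the function name `fourier_layer_1d_unfused`, the tensor shapes, and the use of one complex weight matrix per retained mode (as in common FNO implementations) are assumptions made for the example.

```python
import numpy as np

def fourier_layer_1d_unfused(x, weights, modes):
    """Hypothetical unfused 1D Fourier layer.

    x:       real array of shape (batch, c_in, n)
    weights: complex array of shape (c_in, c_out, modes) (assumed layout)
    modes:   number of low-frequency bins kept after truncation
    """
    batch, c_in, n = x.shape
    c_out = weights.shape[1]

    # Stage 1: forward FFT along the spatial axis.
    x_hat = np.fft.rfft(x, axis=-1)            # (batch, c_in, n // 2 + 1)

    # Stage 2: frequency truncation, done here as an explicit copy.
    x_low = x_hat[:, :, :modes].copy()

    # Stage 3: complex GEMM that mixes channels for each kept mode.
    y_low = np.einsum("bik,iok->bok", x_low, weights)

    # Stage 4: zero padding back to the full spectrum, another copy.
    y_hat = np.zeros((batch, c_out, n // 2 + 1), dtype=np.complex128)
    y_hat[:, :, :modes] = y_low

    # Stage 5: inverse FFT back to the spatial domain.
    return np.fft.irfft(y_hat, n=n, axis=-1)
```

On a GPU, each of the five stages in this sketch would typically map to its own kernel, with the intermediate arrays passing through global memory between them.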

Researchers from the University of California, Riverside, CA, USA, have proposed TurboFNO, the first fully fused FFT-GEMM-iFFT GPU kernel with built-in FFT optimizations. The approach begins with developing FFT and GEMM kernels from scratch that achieve performance comparable to or faster than closed-source state-of-the-art cuBLAS and cuFFT. An FFT variant is introduced to effectively fuse FFT and GEMM workloads where a single thread block iterates over the hidden dimension, aligning with the k-loop in GEMM. Moreover, two shared memory swizzling patterns are designed to achieve 100% memory bank utilization when forwarding FFT output to GEMM and enable iFFT to retrieve GEMM results directly from shared memory.
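To illustrate why swizzling matters for shared memory, the following sketch simulates bank usage for one warp in plain Python. It assumes 32 banks of 4-byte words and uses a generic XOR swizzle on a 32x32 tile; this is a textbook pattern chosen for illustration, not the two swizzling patterns designed in TurboFNO, and the helper names are hypothetical.

```python
NUM_BANKS = 32  # assumed: 32 banks, one 4-byte word per bank per cycle

def bank_utilization(word_addresses):
    """Fraction of banks touched by one warp-wide access."""
    banks = {addr % NUM_BANKS for addr in word_addresses}
    return len(banks) / NUM_BANKS

def naive_addr(row, col, width=32):
    # Plain row-major layout: every element of a column shares one bank.
    return row * width + col

def swizzled_addr(row, col, width=32):
    # XOR swizzle: permute the column index by the row index so that
    # both row-wise and column-wise accesses spread over all banks.
    return row * width + (col ^ (row % width))

# 32 threads read one column of the tile (thread t reads row t, column 5).
column_naive = [naive_addr(r, 5) for r in range(32)]
column_swizzled = [swizzled_addr(r, 5) for r in range(32)]
# 32 threads read one row of the swizzled tile (thread t reads column t).
row_swizzled = [swizzled_addr(7, c) for c in range(32)]

naive_util = bank_utilization(column_naive)
swizzled_col_util = bank_utilization(column_swizzled)
swizzled_row_util = bank_utilization(row_swizzled)
```

In this toy layout the naive column read serializes on a single bank, while the swizzled layout touches every bank for both access directions. The exact utilization figures for TurboFNO depend on its own data layout and differ from this example.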

TurboFNO integrates optimized implementations of FFT and CGEMM kernels to enable effective fusion and built-in FFT optimizations. The kernel fusion strategy in TurboFNO progresses through three levels: FFT-GEMM fusion, GEMM-iFFT fusion, and full FFT-GEMM-iFFT fusion. Each stage involves aligning the FFT workflow with GEMM, resolving data layout mismatches, and eliminating shared memory bank conflicts. Key techniques include modifying FFT output layout to match GEMM’s input format, applying thread swizzling for conflict-free shared memory access, and integrating inverse FFT as an epilogue stage of CGEMM to bypass intermediate global memory writes and enhance memory locality.
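The idea of aligning the FFT with the GEMM k-loop and running the inverse FFT as an epilogue can be mimicked at a high level in NumPy. The sketch below is only a numerical analogy under stated assumptions: the function name `fused_fft_gemm_sketch`, the `k_tile` parameter, and the per-mode weight layout are hypothetical, and NumPy arrays stand in for the shared memory tiles of a real GPU kernel.

```python
import numpy as np

def fused_fft_gemm_sketch(x, weights, modes, k_tile=2):
    """Loop over the hidden (channel) dimension in tiles, as a GEMM k-loop would.

    x:       real array of shape (batch, c_in, n)
    weights: complex array of shape (c_in, c_out, modes) (assumed layout)
    """
    batch, c_in, n = x.shape
    c_out = weights.shape[1]

    # Accumulator for the truncated output spectrum (plays the role of
    # the GEMM accumulator registers).
    acc = np.zeros((batch, c_out, modes), dtype=np.complex128)

    for k0 in range(0, c_in, k_tile):
        # Transform only the channels of this k-tile and keep only the
        # low modes; the full spectrum of all channels is never stored.
        tile = np.fft.rfft(x[:, k0:k0 + k_tile, :], axis=-1)[:, :, :modes]
        # Multiply-accumulate step of the k-loop.
        acc += np.einsum("bik,iok->bok", tile, weights[k0:k0 + k_tile])

    # Epilogue: inverse FFT straight from the accumulator. irfft zero-pads
    # the missing high-frequency bins when n is given explicitly.
    return np.fft.irfft(acc, n=n, axis=-1)
```

Because the Fourier transform and the channel mixing are both linear, accumulating tile by tile gives the same result as running the stages one after another, which is what makes this kind of fusion numerically safe.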

TurboFNO delivers consistent speedups in both 1D and 2D FNO evaluations. In 1D FNO tests, the optimized FFT-CGEMM-iFFT workflow achieves up to 100% speedup over PyTorch, with an average improvement of 50%. These gains come from FFT pruning, which reduces computation by 25%-67.5%. The fully fused FFT-CGEMM-iFFT kernel delivers up to 150% speedup over PyTorch and provides an additional 10%-20% improvement over partial fusion strategies. Similarly, in 2D FNO, the optimized workflow outperforms PyTorch with average speedups above 50% and maximum improvements reaching 100%. The 2D fully fused kernel achieves 50%-105% speedup over PyTorch without performance degradation, despite the additional overhead of aligning the FFT workload layout with the CGEMM dataflow.
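The effect of FFT pruning can be shown with a small radix-2 example that computes only the first few output bins and counts the twiddle operations it performs. This is a generic decimation-in-time sketch written for illustration, not the pruning scheme used in TurboFNO; the function `pruned_fft` and its `counter` argument are hypothetical, and the savings it shows are not the figures reported above.

```python
import numpy as np

def pruned_fft(x, keep, counter):
    """Return the first `keep` DFT bins of x (length must be a power of two).

    counter is a one-element list that accumulates the number of
    twiddle multiply-adds actually performed. Requires 1 <= keep <= len(x).
    """
    n = len(x)
    if n == 1:
        return np.asarray(x, dtype=np.complex128)
    half = n // 2
    # Sub-transforms only need as many bins as the parent will read.
    sub_keep = min(keep, half)
    even = pruned_fft(x[0::2], sub_keep, counter)
    odd = pruned_fft(x[1::2], sub_keep, counter)
    k = np.arange(keep)
    twiddle = np.exp(-2j * np.pi * k / n)
    counter[0] += keep  # butterflies for discarded bins are skipped
    return even[k % half] + twiddle * odd[k % half]

signal = np.random.default_rng(0).standard_normal(16)

pruned_ops = [0]
low_modes = pruned_fft(signal, 4, pruned_ops)    # keep 4 of 16 bins

full_ops = [0]
all_modes = pruned_fft(signal, 16, full_ops)     # unpruned baseline
```

Comparing `pruned_ops[0]` with `full_ops[0]` shows how much butterfly work disappears when high-frequency bins are never produced, which is the kind of saving a kernel with built-in truncation can exploit.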

In conclusion, the researchers introduce TurboFNO, the first fully fused GPU kernel that integrates FFT, CGEMM, and iFFT to accelerate Fourier Neural Operators. They developed a series of architecture-aware optimizations to overcome inefficiencies in conventional FNO implementations, such as excessive kernel launches and global memory traffic. These include a custom FFT kernel with built-in frequency filtering and zero padding, a GEMM-compatible FFT variant that mimics k-loop behavior, and shared memory swizzling strategies that improve bank utilization from 25% to 100%. TurboFNO achieves up to 150% speedup over PyTorch and maintains an average 67% performance gain across all tested configurations.

The post Fourier Neural Operators Just Got a Turbo Boost: Researchers from UC Riverside Introduce TurboFNO, a Fully Fused FFT-GEMM-iFFT Kernel Achieving Up to 150% Speedup over PyTorch appeared first on MarkTechPost.
