The transformer architecture has revolutionized natural language processing, enabling models like GPT to predict the next token in a sequence efficiently. However, these models share a fundamental limitation: they predict the next token with a single-pass projection of all previous tokens, which restricts their capacity for iterative refinement. Transformers apply constant computational effort regardless of the complexity or ambiguity of the predicted token, and they lack mechanisms to reconsider or refine their predictions. Traditional neural networks, including transformers, map an input sequence to a prediction in a single forward pass; the layers refine internal representations along the way, but the prediction itself is never revisited.

Universal Transformers introduced the recurrent application of transformer layers to capture short-term and long-term dependencies by iteratively refining representations. However, their experiments were limited to smaller models and datasets rather than large-scale language models like GPT-2. Adaptive Computation Time models allowed the number of computational steps to be determined dynamically per input, but they were mainly applied to simple RNN architectures and tested on small-scale tasks, without transformer architectures or large-scale pretraining. Depth-Adaptive Transformers adjusted network depth based on the input, enabling dynamic inference by selecting the number of layers to apply per input sequence. However, none of these approaches includes the predictive residual design found in more advanced architectures.

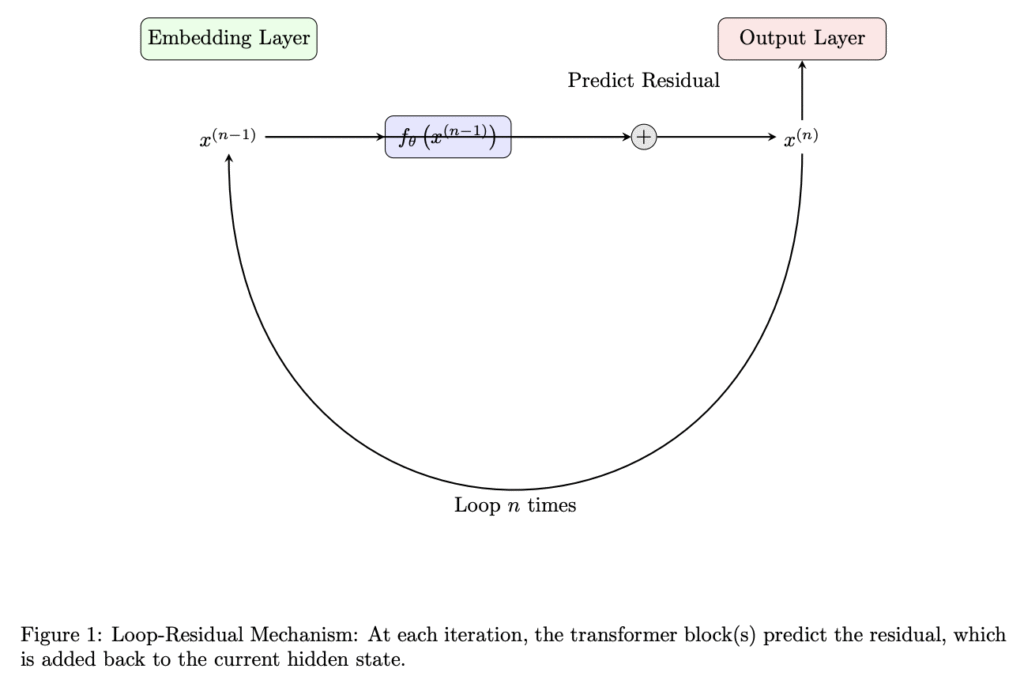

Researchers from HKU have proposed a novel Loop-Residual Neural Network that revisits the input multiple times, refining predictions by iteratively looping over a subset of the model with residual connections. It improves transformer performance at the cost of longer inference time, using a loop architecture with residual prediction. The approach works for large neural networks without requiring extra training data, and it extends the model’s approximation capacity. Its effectiveness is shown through experiments comparing standard GPT-2 versions with Loop-Residual models. Notably, the Loop-Residual GPT-2-81M model achieves a validation loss of 3.11 on the OpenWebText dataset, comparable to the GPT-2-124M model’s loss of 3.12.
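The looping idea can be illustrated with a minimal sketch. It assumes the update takes the form x ← x + f(x), where f is the looped subset of the model; that form is one reading of "looping over a subset of the model with residual connections", not a confirmed detail of the authors' implementation. The names `toy_block` and `loop_residual_forward` are hypothetical, and `toy_block` is a trivial numeric stand-in for real transformer layers.

```python
from typing import Callable, List

Vector = List[float]


def toy_block(x: Vector) -> Vector:
    """Hypothetical stand-in for the looped subset of transformer layers.

    It returns a correction that moves each value halfway toward 1.0,
    so repeated passes visibly refine the state.
    """
    return [0.5 * (1.0 - v) for v in x]


def loop_residual_forward(
    x: Vector, block: Callable[[Vector], Vector], n_loops: int
) -> Vector:
    """Apply the same block n_loops times, adding its output to the state.

    Assumed update rule (not taken from the paper's code): x <- x + block(x).
    """
    for _ in range(n_loops):
        delta = block(x)  # residual predicted on this pass
        x = [xi + di for xi, di in zip(x, delta)]  # residual update
    return x


if __name__ == "__main__":
    for n in range(4):
        print(n, loop_residual_forward([0.0], toy_block, n))
```

In this toy setting every additional loop moves the state closer to its target while the number of parameters (here, none) stays fixed, which mirrors the trade the article describes: more inference-time computation rather than more weights.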

The evaluation consists of two experiments. The first compares a Loop-Residual GPT-2 model with 81M parameters (GPT2-81M) against the standard GPT-2 model with 124M parameters (GPT2-124M). The GPT2-124M baseline consists of 12 transformer layers, while the Loop-Residual GPT2-81M uses 6 loops over 6 transformer layers. The second experiment compares a Loop-Residual GPT-2 with 45M parameters (GPT2-45M) to a Lite GPT-2 model of identical size (GPT2-45M-Lite). GPT2-45M-Lite has a single transformer block for one-pass prediction, while the Loop-Residual version loops twice over a single transformer block. Both experiments use the OpenWebText dataset, with measured training epoch times of 150 ms for GPT2-45M-Lite, 177 ms for Loop-Residual GPT2-45M, and 1,377 ms for GPT2-81M.
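To make the four configurations easier to compare, the sketch below tabulates unique layers against layer applications per forward pass. It assumes each loop applies every looped layer exactly once, so applications equal unique layers times loops; the article does not state this explicitly. The `ModelConfig` class and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelConfig:
    """Hypothetical container for the setups described in the article."""

    name: str
    unique_layers: int  # transformer layers that hold distinct weights
    loops: int  # passes over those layers (1 = ordinary one-pass model)

    @property
    def layer_applications(self) -> int:
        # Assumption: every loop runs each looped layer once.
        return self.unique_layers * self.loops


CONFIGS = [
    ModelConfig("GPT2-124M", unique_layers=12, loops=1),
    ModelConfig("Loop-Residual GPT2-81M", unique_layers=6, loops=6),
    ModelConfig("GPT2-45M-Lite", unique_layers=1, loops=1),
    ModelConfig("Loop-Residual GPT2-45M", unique_layers=1, loops=2),
]

if __name__ == "__main__":
    for cfg in CONFIGS:
        print(f"{cfg.name:<24} layers={cfg.unique_layers:>2} "
              f"loops={cfg.loops} applications={cfg.layer_applications}")
```

Under that assumption, the Loop-Residual GPT2-81M performs 36 layer applications per forward pass against 12 for GPT2-124M, which is consistent with the approach spending extra computation in place of extra parameters.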

In the first experiment, the Loop-Residual GPT2-81M model achieves a validation loss of 3.11 on the OpenWebText dataset, comparable to the GPT2-124M model’s loss of 3.12. This result is significant because the Loop-Residual model uses about 35% fewer parameters and half the number of unique layers compared to the GPT2-124M model. It suggests that iterative refinement through the loop-residual mechanism enhances the model’s approximation capacity. In the second experiment, the Loop-Residual GPT2-45M model achieves a validation loss of 3.67 compared to 3.98 for GPT2-45M-Lite, and a training loss of 3.65 compared to 3.96. By looping twice over a single transformer block, the model effectively simulates a deeper network, resulting in substantial performance gains over the one-pass baseline without increasing model size.
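The headline figures can be checked with a few lines of arithmetic. The snippet below uses only numbers quoted in the article; the helper name `relative_reduction` is hypothetical, and the nominal parameter counts (81M and 124M) are taken from the model names rather than from exact parameter tallies.

```python
def relative_reduction(baseline: float, value: float) -> float:
    """Fraction by which `value` is smaller than `baseline`."""
    return (baseline - value) / baseline


# Experiment 1: nominal parameter counts in millions, from the model names.
param_reduction = relative_reduction(124, 81)

# Experiment 2: loss gaps between GPT2-45M-Lite and Loop-Residual GPT2-45M.
val_gap = 3.98 - 3.67
train_gap = 3.96 - 3.65

if __name__ == "__main__":
    print(f"parameter reduction: {param_reduction:.1%}")
    print(f"validation loss gap: {val_gap:.2f}")
    print(f"training loss gap:   {train_gap:.2f}")
```

The parameter reduction works out to roughly 34.7%, which the article rounds to 35%, and the second experiment shows a loss gap of about 0.31 on both the validation and training sets.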

In conclusion, the researchers introduced the Loop-Residual Neural Network, which enables smaller neural network models to achieve better results on lower-end devices by trading longer inference times for iterative refinement. According to the authors, the method captures complex patterns and dependencies more effectively than conventional one-pass models. The experiments show that Loop-Residual models can outperform baseline models of the same size and match larger models while using fewer parameters. The work opens new possibilities for neural network architectures, especially for tasks that benefit from deeper computational reasoning on resource-constrained devices.

The post Model Compression Without Compromise: Loop-Residual Neural Networks Show Comparable Results to Larger GPT-2 Variants Using Iterative Refinement appeared first on MarkTechPost.
