In recent years, rapid progress in large language models (LLMs) has given the impression that Artificial General Intelligence (AGI) is within reach, with models seemingly able to solve increasingly complex tasks. However, a fundamental question remains: are LLMs genuinely reasoning like humans, or merely repeating patterns learned during training? Since the release of models like GPT-3 and ChatGPT, LLMs have reshaped the research landscape, pushing boundaries across AI and science. Improvements in data quality, model scaling, and multi-step reasoning have brought LLMs close to passing high-level AGI benchmarks. Yet their true reasoning capabilities are not fully understood. Cases where advanced models fail on simple math problems raise concerns about whether they are truly reasoning or just mimicking familiar solution patterns.

Although various benchmarks evaluate LLMs across domains like general knowledge, coding, math, and reasoning, many rely on tasks that can be solved by applying memorized templates. As a result, the actual intelligence and robustness of LLMs remain debatable. Prior studies show that LLMs struggle with subtle context shifts, simple calculations, symbolic reasoning, and out-of-distribution prompts, and that these weaknesses are amplified under perturbed conditions or misleading cues. Multi-modal LLMs, including vision-language models like GPT-4V and LLaVA, show the same tendency to recite instead of reason when tested with subtly altered visual or textual inputs. This suggests that issues like spurious correlations, memorization, and inefficient decoding might underlie these failures, pointing to a gap between observed performance and genuine understanding.

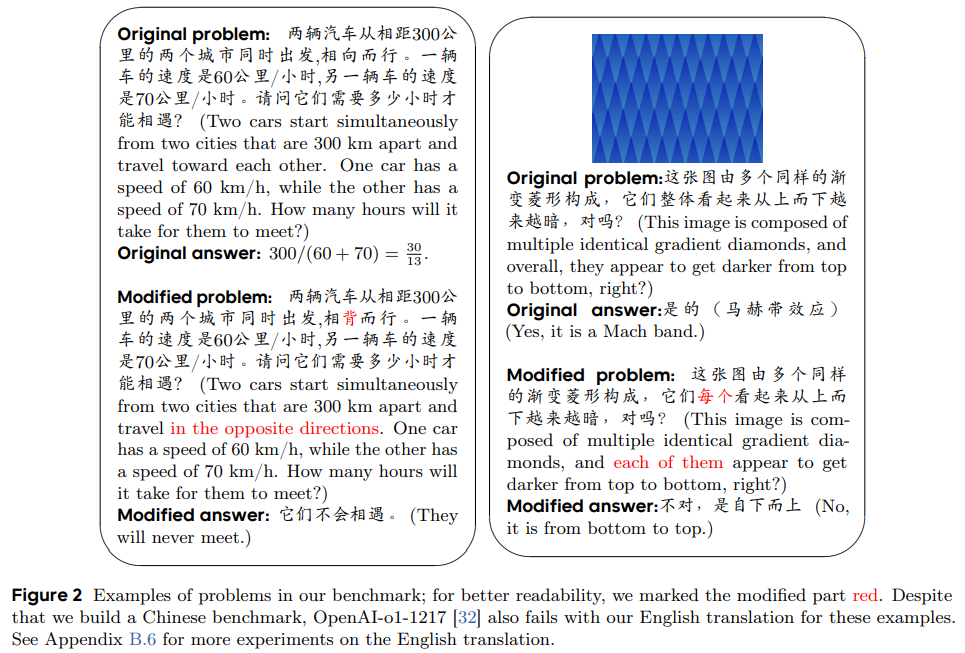

Researchers from ByteDance Seed and the University of Illinois Urbana-Champaign introduce RoR-Bench, a new multi-modal benchmark designed to detect whether LLMs rely on recitation rather than genuine reasoning when solving simple problems with subtly altered conditions. The benchmark includes 158 text and 57 image problem pairs, each pairing a basic reasoning task with a slightly modified version. Experiments reveal that leading models like OpenAI-o1 and DeepSeek-R1 suffer drastic performance drops, often over 60%, when conditions change only slightly. Alarmingly, most models also struggle to recognize unsolvable problems, and preliminary fixes like prompt engineering offer limited improvement, underscoring the need for deeper solutions.

RoR-Bench is a Chinese-language multi-modal benchmark created to assess whether LLMs rely on memorized solution patterns rather than true reasoning. It contains 215 problem pairs (158 text-based and 57 image-based), each consisting of an original problem and a subtly altered version. The original problems are simple, often drawn from children’s puzzle sets, while the modified ones introduce minor changes that require entirely different reasoning. Annotators kept wording changes minimal and checked that the modified problems were unambiguous. Notably, some problems are designed to have no solution or to include unrelated information, testing whether LLMs can recognize illogical conditions and resist recitation-based answers.

The study empirically evaluates leading LLMs and VLMs on RoR-Bench, focusing on their ability to reason through subtle problem changes rather than merely recall learned patterns. Results reveal that most models suffer a significant performance drop, often over 50%, when tested on the slightly modified problems, suggesting a reliance on memorization rather than genuine reasoning. Even techniques like Chain-of-Thought prompting or “Forced Correct” instructions provide limited improvement. Few-shot in-context learning shows some gains, especially with more examples or added instructions, but still fails to close the gap. Overall, these findings highlight the limitations of current models in adaptive reasoning.
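The paired design described above lends itself to a simple comparison: score a model on the original problems, score it on the modified ones, and look at the gap. The snippet below is a minimal sketch of that bookkeeping, not the authors' evaluation code. The `PairResult` record, the `summarize` helper, and the toy outcomes are all hypothetical and chosen only for illustration; they are not results from the paper.

```python
from dataclasses import dataclass


@dataclass
class PairResult:
    """One model's outcome on one problem pair (hypothetical record layout)."""
    pair_id: str
    original_correct: bool   # model solved the original problem
    modified_correct: bool   # model solved the subtly altered problem


def accuracy(flags):
    """Fraction of True values; 0.0 for an empty input."""
    flags = list(flags)
    return sum(flags) / len(flags) if flags else 0.0


def summarize(results):
    """Compare accuracy on original vs. modified problems."""
    orig_acc = accuracy(r.original_correct for r in results)
    mod_acc = accuracy(r.modified_correct for r in results)
    # Pairs solved in original form but failed once the conditions changed.
    solved_original_only = sum(
        r.original_correct and not r.modified_correct for r in results
    )
    return {
        "original_accuracy": orig_acc,
        "modified_accuracy": mod_acc,
        "absolute_drop": orig_acc - mod_acc,
        "relative_drop": (orig_acc - mod_acc) / orig_acc if orig_acc else 0.0,
        "solved_original_only": solved_original_only,
    }


# Toy outcomes for illustration only (not data from RoR-Bench).
toy = [
    PairResult("p1", True, False),
    PairResult("p2", True, True),
    PairResult("p3", True, False),
    PairResult("p4", False, False),
]
summary = summarize(toy)
print(summary)
```

Reporting both the absolute and the relative drop is a deliberate choice in this sketch: a figure such as "over 50%" can be read either way, and the two can differ considerably when original accuracy is well below 100%.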

In conclusion, the study introduces RoR-Bench, a Chinese-language multi-modal benchmark designed to expose a critical flaw in current large language models: their inability to handle simple reasoning tasks when problem conditions are slightly altered. The significant performance drop, often over 50%, suggests that these models rely on memorization rather than true reasoning. Even with added prompts or few-shot examples, the issue remains largely unresolved. While the benchmark is limited to Chinese, initial English results indicate similar weaknesses. The findings challenge assumptions about LLM intelligence and call for future research to develop models that reason genuinely rather than recite learned patterns from training data.

Check out the Paper. All credit for this research goes to the researchers of this project.

The post RoR-Bench: Revealing Recitation Over Reasoning in Large Language Models Through Subtle Context Shifts appeared first on MarkTechPost.
