Recent advances in large language models (LLMs) have significantly strengthened their reasoning capabilities, particularly through reinforcement learning (RL) fine-tuning. Initially trained to predict the next token, these models then undergo RL post-training, in which they explore various reasoning paths to reach correct answers, much as an agent learns to navigate a game. This process gives rise to emergent behaviors such as self-correction, often called the "aha moment," in which models begin revising their own mistakes without explicit instruction. While this improves accuracy, it also produces much longer responses, increasing token usage, computational cost, and latency. Although longer outputs are often assumed to reflect better reasoning, research shows mixed results: some gains appear, but excessively long answers can also hurt performance, indicating diminishing returns.

To address this, researchers are exploring ways to balance reasoning quality and efficiency. Methods include using smaller, faster models, applying prompt engineering to reduce verbosity, and developing reward-shaping techniques that encourage concise yet effective reasoning. One notable approach is long-to-short distillation, in which models learn from detailed explanations and are trained to produce shorter but still accurate answers. Using such techniques, models like Kimi have achieved performance competitive even with larger models like GPT-4 while consuming fewer tokens. Studies also highlight the concept of "token complexity": each problem requires a minimum number of tokens to be solved accurately, and prompting strategies aimed at conciseness often fall short of this optimal point. Overall, these findings emphasize the importance of developing more efficient reasoning methods without compromising performance.
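One simple way to build long-to-short distillation targets, assumed here for illustration rather than taken from any specific model's recipe, is to sample several responses per problem and keep the shortest one that reaches the correct answer. The `Sample` record and `select_distillation_target` helper are hypothetical names, and token length is approximated by a whitespace word count instead of a real tokenizer.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Sample:
    """One sampled response and its extracted final answer (hypothetical record)."""
    text: str
    answer: str


def token_length(text: str) -> int:
    # Crude proxy for token count: whitespace-separated words.
    # A real pipeline would use the model's own tokenizer.
    return len(text.split())


def select_distillation_target(samples: list[Sample], reference: str) -> Optional[Sample]:
    """Return the shortest sample whose answer matches the reference.

    Returns None when no sample is correct, so the problem can be skipped.
    """
    correct = [s for s in samples if s.answer.strip() == reference.strip()]
    if not correct:
        return None
    return min(correct, key=lambda s: token_length(s.text))


if __name__ == "__main__":
    samples = [
        Sample("Let x = 3. Wait, check again: 3 + 4 = 7. Hmm, recheck... yes 7.", "7"),
        Sample("3 + 4 = 7.", "7"),
        Sample("3 + 4 = 8.", "8"),
    ]
    best = select_distillation_target(samples, "7")
    print(best.text)  # prints "3 + 4 = 7."
```

The selected short, correct responses would then serve as fine-tuning targets, so the student model sees answers that are both right and brief.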

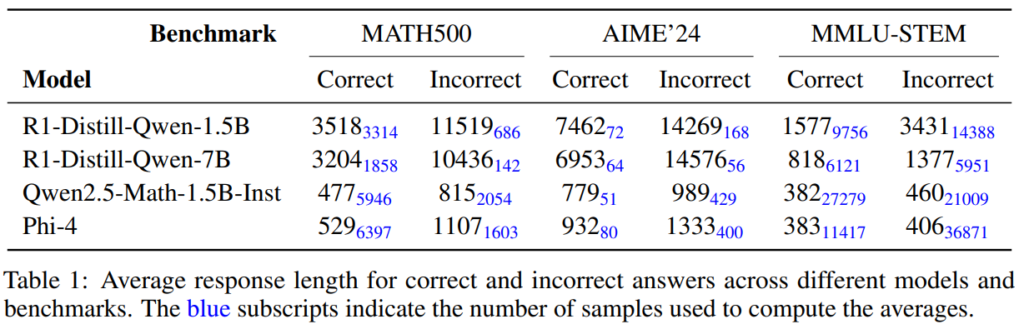

Researchers from Wand AI challenge the belief that longer responses inherently lead to better reasoning in large language models. Through theoretical analysis and experiments, they show that this verbosity is a by-product of RL optimization rather than a requirement for accuracy. Interestingly, concise answers often correlate with higher correctness, and correct responses tend to be shorter than incorrect ones. They propose a two-phase RL training approach: the first phase enhances reasoning ability, while the second enforces conciseness using a small dataset. This method reduces response length without sacrificing accuracy, improving both efficiency and performance at minimal computational cost.
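The article does not say exactly how problems are assigned to each phase. As a minimal sketch, assume each problem is summarized by an empirical pass rate (the fraction of sampled answers that are correct, estimated before training); phase 1 then draws on hard problems and phase 2 on a small set the model solves at least sometimes. The `Problem` type, the `split_phases` helper, and every threshold are hypothetical; `phase2_size=8` only mirrors the eight-problem second phase the article reports.

```python
from dataclasses import dataclass


@dataclass
class Problem:
    """A training problem with an empirical pass rate in [0, 1] (hypothetical)."""
    pid: str
    pass_rate: float


def split_phases(problems, hard_max=0.2, phase2_min=0.2, phase2_max=0.9, phase2_size=8):
    """Partition problems for a two-phase RL schedule (illustrative thresholds).

    Phase 1: hard problems (pass rate <= hard_max) to push reasoning ability.
    Phase 2: a small set of problems that are solvable but not trivial.
    """
    phase1 = [p for p in problems if p.pass_rate <= hard_max]
    candidates = [p for p in problems if phase2_min < p.pass_rate <= phase2_max]
    # Easiest eligible problems first, so the small phase-2 set favors solvable ones.
    candidates.sort(key=lambda p: p.pass_rate, reverse=True)
    phase2 = candidates[:phase2_size]
    return phase1, phase2


if __name__ == "__main__":
    rates = [0.0, 0.1, 0.3, 0.5, 0.8, 0.95]
    problems = [Problem(f"p{i}", r) for i, r in enumerate(rates)]
    phase1, phase2 = split_phases(problems, phase2_size=2)
    print([p.pid for p in phase1])  # ['p0', 'p1']
    print([p.pid for p in phase2])  # ['p4', 'p3']
```

Ordering phase-2 candidates from easiest to hardest reflects the article's observation that easier problems helped shorten responses; a real schedule would tune these cut-offs empirically.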

Longer responses do not always lead to better performance in language models. RL post-training tends to reduce response length while maintaining or improving accuracy, especially early in training. This counters the belief that long reasoning chains are necessary for correctness. The phenomenon is tied to "dead ends," where excessively long outputs risk veering off course. Modeling language tasks as Markov Decision Processes (MDPs) reveals that RL minimizes loss, not length, and that longer outputs arise only when rewards are consistently negative. A two-phase RL strategy (first on hard problems, then on solvable ones) can boost reasoning while eventually promoting conciseness and robustness.
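A toy calculation can make the length argument concrete. The sketch below is our own simplification, not the paper's derivation: it assumes a single reward on the final token, a value baseline of zero, Generalized Advantage Estimation (GAE) with discount `gamma` and parameter `lam`, and a PPO loss averaged over tokens and evaluated at probability ratio 1, where the clipped surrogate reduces to the advantage. Under these assumptions, the loss of a negatively rewarded response shrinks as the response grows, whereas with a positive reward shorter responses reach a lower loss.

```python
def gae_advantages(rewards, values, gamma, lam):
    """Generalized Advantage Estimation over one trajectory.

    `values` has one more entry than `rewards`; the last entry is the
    bootstrap value of the terminal state (0 here).
    """
    advantages = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        delta = rewards[t] + gamma * values[t + 1] - values[t]
        running = delta + gamma * lam * running
        advantages[t] = running
    return advantages


def toy_ppo_loss(length, terminal_reward, gamma=1.0, lam=0.95):
    """Token-averaged PPO surrogate loss at ratio 1 for a `length`-token response.

    Toy assumptions: reward only on the last token, value baseline of 0.
    """
    rewards = [0.0] * (length - 1) + [terminal_reward]
    values = [0.0] * (length + 1)
    advantages = gae_advantages(rewards, values, gamma, lam)
    # At ratio 1 the clipped surrogate min(r*A, clip(r)*A) equals A,
    # so the loss is the negative mean advantage over tokens.
    return -sum(advantages) / length


if __name__ == "__main__":
    for reward in (-1.0, 1.0):
        losses = {n: round(toy_ppo_loss(n, reward), 3) for n in (10, 100, 1000)}
        print(reward, losses)
```

With `lam=1.0` and `gamma=1.0`, every token receives the full terminal reward, so in this toy model the averaged loss no longer depends on length at all. That is one illustration of why PPO settings can shape response length; the paper's own analysis should be consulted for the exact conditions.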

The two-phase RL strategy led to notable performance gains across different model sizes. Training on problems of varying difficulty showed that easier problems helped models shorten responses while maintaining or improving accuracy. A second RL phase using just eight math problems produced more concise and robust outputs on benchmarks such as AIME, AMC, and MATH-500, with similar trends on STEM tasks from MMLU. Even minimal RL post-training improved accuracy and stability under low-temperature sampling. Furthermore, models without prior RL refinement, such as Qwen2.5-Math, showed large accuracy gains, up to 30%, after training on only four math problems.

In conclusion, the study presents a two-phase RL post-training method that improves both reasoning and conciseness in language models. The first phase enhances accuracy, while the second shortens responses without sacrificing performance. Applied to R1 models, this approach reduced response length by over 40% while maintaining accuracy, especially at low temperatures. The findings show that longer answers are not inherently better and that targeted RL can achieve concise reasoning. The study also highlights that even minimal RL training can greatly benefit non-reasoning models, underscoring the value of including moderately solvable problems and carefully tuning Proximal Policy Optimization (PPO) parameters.



The post Balancing Accuracy and Efficiency in Language Models: A Two-Phase RL Post-Training Approach for Concise Reasoning appeared first on MarkTechPost.
