Back in the 17th century, German astronomer Johannes Kepler figured out the laws of motion that made it possible to accurately predict where our solar system’s planets would appear in the sky as they orbit the sun. But it wasn’t until decades later, when Isaac Newton formulated the universal laws of gravitation, that the underlying principles were understood. Although they were inspired by Kepler’s laws, they went much further, and made it possible to apply the same formulas to everything from the trajectory of a cannon ball to the way the moon’s pull controls the tides on Earth — or how to launch a satellite from Earth to the surface of the moon or planets.

Today’s sophisticated artificial intelligence systems have gotten very good at making the kind of specific predictions that resemble Kepler’s orbit predictions. But do they know why these predictions work, with the kind of deep understanding that comes from basic principles like Newton’s laws? As the world grows ever more dependent on these kinds of AI systems, researchers are struggling to measure just how they do what they do, and how deep their understanding of the real world actually is.

Now, researchers in MIT’s Laboratory for Information and Decision Systems (LIDS) and at Harvard University have devised a new approach to assessing how deeply these predictive systems understand their subject matter, and whether they can apply knowledge from one domain to a slightly different one. And by and large the answer at this point, in the examples they studied, is — not so much.

The findings were presented at the International Conference on Machine Learning, in Vancouver, British Columbia, last month by Harvard postdoc Keyon Vafa, MIT graduate student in electrical engineering and computer science and LIDS affiliate Peter G. Chang, MIT assistant professor and LIDS principal investigator Ashesh Rambachan, and MIT professor, LIDS principal investigator, and senior author Sendhil Mullainathan.

“Humans all the time have been able to make this transition from good predictions to world models,” says Vafa, the study’s lead author. So the question their team was addressing was, “have foundation models — has AI — been able to make that leap from predictions to world models? And we’re not asking are they capable, or can they, or will they. It’s just, have they done it so far?” he says.

“We know how to test whether an algorithm predicts well. But what we need is a way to test for whether it has understood well,” says Mullainathan, the Peter de Florez Professor with dual appointments in the MIT departments of Economics and Electrical Engineering and Computer Science and the senior author on the study. “Even defining what understanding means was a challenge.”

In the Kepler versus Newton analogy, Vafa says, “they both had models that worked really well on one task, and that worked essentially the same way on that task. What Newton offered was ideas that were able to generalize to new tasks.” That capability, when applied to the predictions made by various AI systems, would entail having it develop a world model so it can “transcend the task that you’re working on and be able to generalize to new kinds of problems and paradigms.”

Another analogy that helps to illustrate the point is the difference between centuries of accumulated knowledge of how to selectively breed crops and animals, versus Gregor Mendel’s insight into the underlying laws of genetic inheritance.

“There is a lot of excitement in the field about using foundation models to not just perform tasks, but to learn something about the world,” for example in the natural sciences, he says. “It would need to adapt, have a world model to adapt to any possible task.”

Are AI systems anywhere near the ability to reach such generalizations? To test the question, the team looked at different examples of predictive AI systems, at different levels of complexity. On the very simplest of examples, the systems succeeded in creating a realistic model of the simulated system, but as the examples got more complex that ability faded fast.

The team developed a new metric, a way of measuring quantitatively how well a system approximates real-world conditions. They call the measurement inductive bias — that is, a tendency or bias toward responses that reflect reality, based on inferences developed from looking at vast amounts of data on specific cases.

The simplest level of examples they looked at was known as a lattice model. In a one-dimensional lattice, something can move only along a line. Vafa compares it to a frog jumping between lily pads in a row. As the frog jumps or sits, it calls out what it’s doing — right, left, or stay. If it reaches the last lily pad in the row, it can only stay or go back. If someone, or an AI system, can just hear the calls, without knowing anything about the number of lily pads, can it figure out the configuration? The answer is yes: Predictive models do well at reconstructing the “world” in such a simple case. But even with lattices, as you increase the number of dimensions, the systems no longer can make that leap.

“For example, in a two-state or three-state lattice, we showed that the model does have a pretty good inductive bias toward the actual state,” says Chang. “But as we increase the number of states, then it starts to have a divergence from real-world models.”
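To make the lily-pad picture concrete, here is a minimal Python sketch of the one-dimensional lattice described above. It is an illustration only, not the team’s code or their inductive bias metric: the function names, the uniform random choice of moves, and the simple range-counting reconstruction are all assumptions made for this example. The frog calls out "left," "right," or "stay," and a listener who hears only the calls tries to work out how many pads there are.

```python
import random

# Hypothetical illustration of the 1-D lattice ("frog on lily pads").
# Nothing here comes from the study itself; names and the move policy
# (uniform choice among allowed moves) are assumptions for this sketch.

STEP = {"left": -1, "right": 1, "stay": 0}


def simulate_calls(num_pads, num_steps, seed=0):
    """Return the frog's calls while it hops along a row of num_pads pads."""
    rng = random.Random(seed)
    position = rng.randrange(num_pads)  # hidden starting pad
    calls = []
    for _ in range(num_steps):
        options = ["stay"]
        if position > 0:                # not on the leftmost pad
            options.append("left")
        if position < num_pads - 1:     # not on the rightmost pad
            options.append("right")
        call = rng.choice(options)
        position += STEP[call]
        calls.append(call)
    return calls


def infer_num_pads(calls):
    """Estimate the number of pads from the calls alone.

    Tracks the frog's position relative to its unknown start and counts
    how many distinct pads it must have visited. This is a lower bound
    that reaches the true count once the frog has touched both ends.
    """
    offset = lowest = highest = 0
    for call in calls:
        offset += STEP[call]
        lowest = min(lowest, offset)
        highest = max(highest, offset)
    return highest - lowest + 1


if __name__ == "__main__":
    calls = simulate_calls(num_pads=5, num_steps=2000)
    print("estimated pads:", infer_num_pads(calls))
```

The listener here is handed the rule that each call moves the frog by one pad, which is exactly the kind of structure a predictive model would have to discover for itself. The study asks whether models trained only to predict the next call end up with something equivalent to this hidden row of pads.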

A more complex problem is a system that can play the board game Othello, which involves players alternately placing black or white disks on a grid. The AI models can accurately predict what moves are allowable at a given point, but it turns out they do badly at inferring what the overall arrangement of pieces on the board is, including ones that are currently blocked from play.

The team then looked at five different categories of predictive models actually in use, and again, the more complex the systems involved, the more poorly the predictive models performed at matching the true underlying world model.

With this new metric of inductive bias, “our hope is to provide a kind of test bed where you can evaluate different models, different training approaches, on problems where we know what the true world model is,” Vafa says. If a model performs well on these cases where we already know the underlying reality, then we can have greater faith that its predictions may be useful even in cases “where we don’t really know what the truth is,” he says.

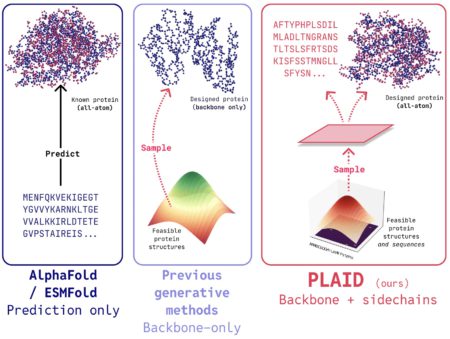

People are already trying to use these kinds of predictive AI systems to aid in scientific discovery, including such things as properties of chemical compounds that have never actually been created, or of potential pharmaceutical compounds, or for predicting the folding behavior and properties of unknown protein molecules. “For the more realistic problems,” Vafa says, “even for something like basic mechanics, we found that there seems to be a long way to go.”

Chang says, “There’s been a lot of hype around foundation models, where people are trying to build domain-specific foundation models — biology-based foundation models, physics-based foundation models, robotics foundation models, foundation models for other types of domains where people have been collecting a ton of data” and training these models to make predictions, “and then hoping that it acquires some knowledge of the domain itself, to be used for other downstream tasks.”

This work shows there’s a long way to go, but it also helps to show a path forward. “Our paper suggests that we can apply our metrics to evaluate how much the representation is learning, so that we can come up with better ways of training foundation models, or at least evaluate the models that we’re training currently,” Chang says. “As an engineering field, once we have a metric for something, people are really, really good at optimizing that metric.”
