Large Language Models (LLMs) have become an integral part of modern AI applications, powering tools like chatbots and code generators. However, the increased reliance on these models has exposed critical inefficiencies in inference. Existing attention implementations, such as FlashAttention and SparseAttention, often struggle with diverse workloads, dynamic input patterns, and GPU resource limitations. These challenges, coupled with high latency and memory bottlenecks, underscore the need for a more efficient and flexible solution for scalable, responsive LLM inference.

Researchers from the University of Washington, NVIDIA, Perplexity AI, and Carnegie Mellon University have developed FlashInfer, an AI library and kernel generator tailored for LLM inference. FlashInfer provides high-performance GPU kernel implementations for various attention mechanisms, including FlashAttention, SparseAttention, and PagedAttention, as well as kernels for sampling. Its design prioritizes flexibility and efficiency, addressing key challenges in LLM inference serving.

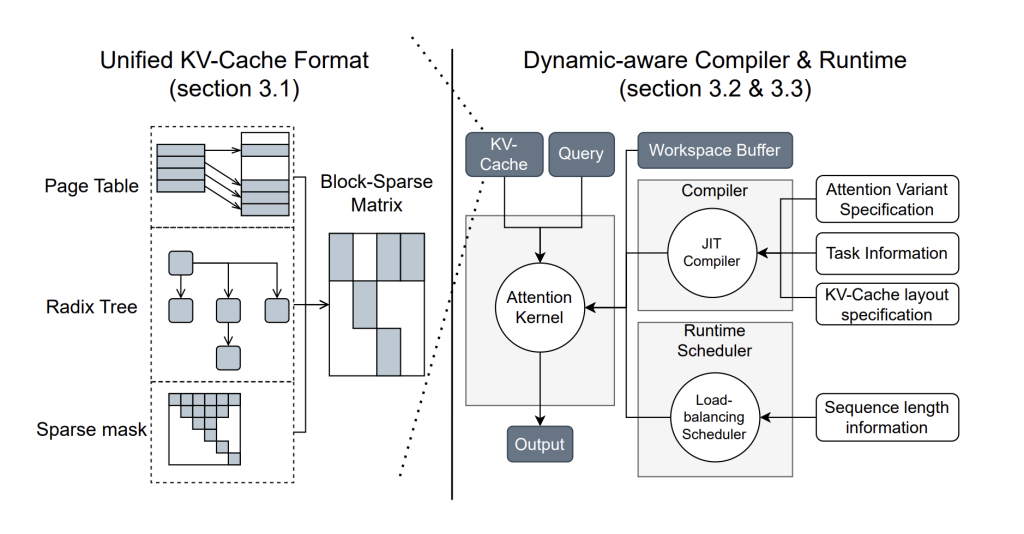

FlashInfer incorporates a block-sparse format to handle heterogeneous KV-cache storage efficiently and employs dynamic, load-balanced scheduling to optimize GPU usage. With integration into popular LLM serving frameworks like SGLang, vLLM, and MLC-Engine, FlashInfer offers a practical and adaptable approach to improving inference performance.
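
To make the block-sparse idea more concrete, the following is a minimal sketch of how a paged KV-cache can be described with CSR-style index arrays, so that requests of very different lengths share one page pool. It is an illustration under stated assumptions, not FlashInfer's actual implementation: the function names `build_page_table` and `token_slots`, the array names, and the sequential page allocation are all hypothetical.

```python
from typing import List, Tuple


def build_page_table(
    seq_lens: List[int], page_size: int
) -> Tuple[List[int], List[int], List[int]]:
    """Pack per-request page lists into CSR-style arrays (illustrative only).

    indptr[r]:indptr[r + 1] selects the pages of request r inside `indices`;
    last_page_len[r] is the number of valid tokens in that request's last page.
    """
    indptr = [0]
    indices: List[int] = []
    last_page_len: List[int] = []
    next_free_page = 0  # assumption: pages are handed out sequentially
    for n in seq_lens:
        num_pages = (n + page_size - 1) // page_size  # ceiling division
        indices.extend(range(next_free_page, next_free_page + num_pages))
        next_free_page += num_pages
        indptr.append(len(indices))
        last_page_len.append(n - (num_pages - 1) * page_size if num_pages else 0)
    return indptr, indices, last_page_len


def token_slots(
    request: int,
    indptr: List[int],
    indices: List[int],
    last_page_len: List[int],
    page_size: int,
) -> List[Tuple[int, int]]:
    """Return the (page, offset) slot of every cached token of one request."""
    pages = indices[indptr[request]:indptr[request + 1]]
    slots = []
    for i, page in enumerate(pages):
        # Every page is full except possibly the last one.
        used = last_page_len[request] if i == len(pages) - 1 else page_size
        slots.extend((page, off) for off in range(used))
    return slots


# Three requests with 5, 3, and 9 cached tokens, stored in pages of 4 tokens.
indptr, indices, last_page_len = build_page_table([5, 3, 9], page_size=4)
slots_req0 = token_slots(0, indptr, indices, last_page_len, page_size=4)
```

In this layout each request is one "row" whose nonzero blocks are its pages, which is why a single block-sparse kernel can serve requests with heterogeneous cache lengths.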

Technical Features and Benefits

FlashInfer introduces several technical innovations:

- Comprehensive Attention Kernels: FlashInfer supports a range of attention mechanisms, including prefill, decode, and append attention, ensuring compatibility with various KV-cache formats. This adaptability enhances performance for both single-request and batch-serving scenarios.

- Optimized Shared-Prefix Decoding: Through grouped-query attention (GQA) and fused-RoPE (Rotary Position Embedding) attention, FlashInfer achieves significant speedups, such as a reported 31x speedup over vLLM’s PagedAttention implementation for long-prompt decoding.

- Dynamic Load-Balanced Scheduling: FlashInfer’s scheduler dynamically adapts to input changes, reducing idle GPU time and ensuring efficient utilization. Its compatibility with CUDA Graphs further enhances its applicability in production environments.

- Customizable JIT Compilation: FlashInfer allows users to define and compile custom attention variants into high-performance kernels. This feature accommodates specialized use cases, such as sliding window attention or RoPE transformations.
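
The load-balancing idea in the list above can be sketched in a few lines. The example below is a simplified illustration, not FlashInfer's scheduler: it assumes work is proportional to KV length, splits long requests into bounded chunks, and assigns chunks greedily to the least-loaded worker. The names `split_into_chunks`, `assign_chunks`, and `worker_loads`, and the notion of a "worker" standing in for a GPU thread block, are hypothetical.

```python
import heapq
from typing import List, Tuple

Chunk = Tuple[int, int, int]  # (request id, start token, end token)


def split_into_chunks(kv_lens: List[int], max_chunk: int) -> List[Chunk]:
    """Cut each request's KV range into pieces of at most max_chunk tokens."""
    chunks = []
    for req, n in enumerate(kv_lens):
        for start in range(0, n, max_chunk):
            chunks.append((req, start, min(start + max_chunk, n)))
    return chunks


def assign_chunks(chunks: List[Chunk], num_workers: int) -> List[List[Chunk]]:
    """Greedy assignment: largest chunk first, always to the least-loaded worker."""
    heap = [(0, w) for w in range(num_workers)]  # (current load, worker id)
    heapq.heapify(heap)
    plan: List[List[Chunk]] = [[] for _ in range(num_workers)]
    for chunk in sorted(chunks, key=lambda c: c[2] - c[1], reverse=True):
        load, w = heapq.heappop(heap)
        plan[w].append(chunk)
        heapq.heappush(heap, (load + chunk[2] - chunk[1], w))
    return plan


def worker_loads(plan: List[List[Chunk]]) -> List[int]:
    """Total number of KV tokens each worker has to process."""
    return [sum(end - start for _, start, end in work) for work in plan]


# One long request and three short ones, spread over four workers.
kv_lens = [4096, 128, 256, 64]
plan = assign_chunks(split_into_chunks(kv_lens, max_chunk=1024), num_workers=4)
loads = worker_loads(plan)
```

Without splitting, one worker would process all 4096 tokens of the long request while the others sit mostly idle; with chunking, the heaviest worker in this example handles 1280 tokens. Partial results for a split request still have to be merged afterwards, a step this sketch omits.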

Performance Insights

FlashInfer demonstrates notable performance improvements across various benchmarks:

- Latency Reduction: The library reduces inter-token latency by 29-69% compared to existing solutions like Triton. These gains are particularly evident in scenarios involving long-context inference and parallel generation.

- Throughput Improvements: On NVIDIA H100 GPUs, FlashInfer achieves a 13-17% speedup for parallel generation tasks, highlighting its effectiveness for high-demand applications.

- Enhanced GPU Utilization: FlashInfer’s dynamic scheduler and optimized kernels improve bandwidth and FLOP utilization for both skewed and uniform sequence-length distributions.

FlashInfer also excels in parallel decoding tasks, with composable formats enabling significant reductions in Time-To-First-Token (TTFT). For instance, tests on the Llama 3.1 model (70B parameters) show up to a 22.86% decrease in TTFT under specific configurations.

Conclusion

FlashInfer offers a practical and efficient solution to the challenges of LLM inference, providing significant improvements in performance and resource utilization. Its flexible design and integration capabilities make it a valuable tool for advancing LLM-serving frameworks. By addressing key inefficiencies and offering robust technical solutions, FlashInfer paves the way for more accessible and scalable AI applications. As an open-source project, it invites further collaboration and innovation from the research community, ensuring continuous improvement and adaptation to emerging challenges in AI infrastructure.

Check out the Paper and GitHub Page. All credit for this research goes to the researchers of this project.

The post Researchers from NVIDIA, CMU and the University of Washington Released ‘FlashInfer’: A Kernel Library that Provides State-of-the-Art Kernel Implementations for LLM Inference and Serving appeared first on MarkTechPost.
