Multimodal large language models (MLLMs) are advanced systems that process and understand multiple forms of input, such as text and images. By interpreting these diverse inputs, they aim to reason through tasks and generate accurate outputs. However, MLLMs often fail at complex tasks because they lack a structured process for breaking problems into smaller steps; instead, they give direct answers without clear intermediate reasoning. These limitations reduce the success and efficiency of MLLMs on intricate problems.

Traditional approaches to reasoning in MLLMs have several shortcomings. Prompt-based methods, like Chain-of-Thought, follow fixed steps that imitate human reasoning but struggle with difficult tasks. Plan-based methods, like Tree-of-Thought or Graph-of-Thought, search for reasoning paths but are neither flexible nor reliable. Learning-based methods, like Monte Carlo Tree Search (MCTS), are slow and do little to support deep reasoning. Most MLLMs rely on “direct prediction,” giving short answers without clear intermediate steps. Although MCTS works well in games and robotics, it is poorly suited to MLLMs in its standard form, and existing collective learning approaches do not build strong step-by-step reasoning. These issues make it hard for MLLMs to solve complex problems.

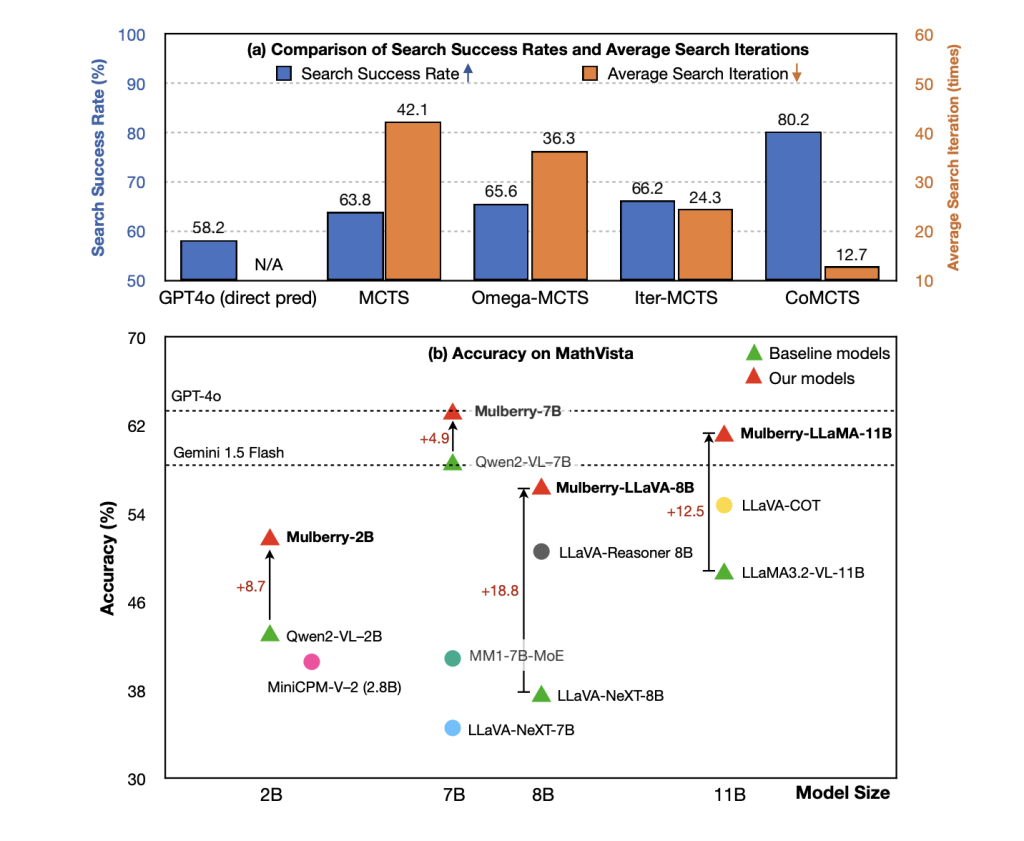

To address these issues, a team of researchers from Nanyang Technological University, Tsinghua University, Baidu, and Sun Yat-sen University proposed CoMCTS, a framework that improves reasoning-path search in tree search. Instead of relying on a single model, it combines multiple pre-trained models to expand and evaluate candidate reasoning paths. This differs from traditional methods in that several models work together: the search draws on a more diverse set of candidates and evaluates them jointly, which improves performance and reduces errors during the reasoning process.
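The idea of letting several models expand and evaluate candidates together can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the CoMCTS implementation: the `collective_expand` function, the mean-score rule, and the 0.5 pruning threshold are hypothetical, and the toy lambdas stand in for real MLLMs.

```python
from statistics import mean
from typing import Callable, List, Tuple

# Hypothetical interfaces (not the CoMCTS API): a proposer maps a partial reasoning
# path to candidate next steps; a scorer rates one candidate step in [0, 1].
Proposer = Callable[[List[str]], List[str]]
Scorer = Callable[[List[str], str], float]


def collective_expand(path: List[str], proposers: List[Proposer],
                      scorers: List[Scorer],
                      threshold: float = 0.5) -> List[Tuple[str, float]]:
    """Pool candidate steps from every model, score each with every model,
    and keep candidates whose mean score reaches the threshold."""
    candidates: List[str] = []
    for propose in proposers:
        for step in propose(path):
            if step not in candidates:  # merge duplicate proposals
                candidates.append(step)
    kept = []
    for step in candidates:
        score = mean(score_step(path, step) for score_step in scorers)
        if score >= threshold:
            kept.append((step, score))
    # Best-scoring candidates first
    return sorted(kept, key=lambda pair: pair[1], reverse=True)


# Toy stand-ins for two MLLMs and a shared judge
model_a = lambda path: ["add 3 and 4", "multiply 3 by 4"]
model_b = lambda path: ["add 3 and 4", "subtract 4 from 3"]
judge = lambda path, step: 1.0 if step.startswith("add") else 0.2

result = collective_expand(["What is 3 + 4?"], [model_a, model_b], [judge, judge])
print(result)  # [('add 3 and 4', 1.0)]
```

Pooling proposals from different models widens the candidate set, while averaging several scorers makes a single model's misjudgment less likely to keep a bad step alive.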

CoMCTS consisted of four key steps: Expansion, Simulation, Backpropagation, and Selection. In the Expansion step, several models proposed different candidate solutions simultaneously, increasing the variety of possible answers. In the Simulation step, the models jointly evaluated these candidates, and incorrect or less effective paths were pruned, which narrowed the search. In the Backpropagation step, the scores of the evaluated nodes were passed back up the tree, updating the value and visit count of every node along the path. The final step, Selection, used a statistical criterion, the Upper Confidence Bound (UCB), to choose the next node to expand. Reflective reasoning in this process helped the model learn from previous errors and make better decisions on similar tasks.
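The four steps map onto a standard MCTS loop. The sketch below is a simplified, hypothetical version: toy callables replace the collaborating MLLMs, Simulation is reduced to averaging each model's score for a candidate step, and reflective reasoning is omitted. Selection runs at the top of each iteration, the usual way to write the loop, since the node chosen by UCB is the one the next Expansion grows.

```python
import math
from dataclasses import dataclass, field
from statistics import mean
from typing import Callable, List, Optional

# Hypothetical stand-ins for the collaborating MLLMs: a proposer returns candidate
# next reasoning steps; a scorer rates one candidate step in [0, 1].
Proposer = Callable[[List[str]], List[str]]
Scorer = Callable[[List[str], str], float]


@dataclass
class Node:
    path: List[str]                   # reasoning steps from the question to this node
    parent: Optional["Node"] = None
    children: List["Node"] = field(default_factory=list)
    visits: int = 0
    value: float = 0.0                # running mean of backed-up scores

    def ucb(self, c: float = 1.0) -> float:
        # Upper Confidence Bound: value (exploit) plus a bonus for few visits (explore)
        if self.visits == 0:
            return float("inf")
        return self.value + c * math.sqrt(math.log(self.parent.visits) / self.visits)


def backpropagate(node: Optional[Node], score: float) -> None:
    # Backpropagation: update visit counts and running-mean values up to the root
    while node is not None:
        node.visits += 1
        node.value += (score - node.value) / node.visits
        node = node.parent


def search(question: str, proposers: List[Proposer], scorers: List[Scorer],
           is_terminal: Callable[[List[str]], bool],
           iterations: int = 20, prune_below: float = 0.5) -> List[str]:
    root = Node(path=[question])
    for _ in range(iterations):
        # Selection: descend by UCB to the leaf that the next expansion will grow
        node = root
        while node.children:
            node = max(node.children, key=lambda child: child.ucb())
        if is_terminal(node.path):
            backpropagate(node, node.value)  # revisit a finished reasoning path
            continue
        # Expansion: every model proposes candidate next steps (duplicates merged)
        candidates = {step for propose in proposers for step in propose(node.path)}
        # Simulation: all models score each candidate; weak candidates are pruned
        for step in sorted(candidates):
            score = mean(score_step(node.path, step) for score_step in scorers)
            if score >= prune_below:
                child = Node(path=node.path + [step], parent=node)
                node.children.append(child)
                backpropagate(child, score)
        if not node.children:
            backpropagate(node, 0.0)  # dead end: lower this branch's value
    # Read out the answer by following the most-visited children
    node = root
    while node.children:
        node = max(node.children, key=lambda child: child.visits)
    return node.path


# Toy usage: two "models" propose steps; the shared judge rejects anything with "12"
def propose_add(path):
    return ["Add 3 and 4 to get 7"] if len(path) == 1 else ["Answer: 7"]

def propose_mul(path):
    return ["Multiply 3 by 4 to get 12"] if len(path) == 1 else ["Answer: 12"]

def judge(path, step):
    return 0.0 if "12" in step else 1.0

def is_terminal(path):
    return path[-1].startswith("Answer")

best = search("What is 3 + 4?", [propose_add, propose_mul], [judge, judge], is_terminal)
print(best)  # ['What is 3 + 4?', 'Add 3 and 4 to get 7', 'Answer: 7']
```

The exploration constant `c = 1.0` in `ucb` is an arbitrary choice for this sketch; larger values push the search toward rarely visited branches.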

The researchers created the Mulberry-260K dataset, which comprised 260K multimodal input questions combining text instructions and images from various domains, including general multimodal understanding, mathematics, science, and medical image understanding. The dataset was constructed using CoMCTS, with training limited to 15K samples to avoid overabundance. The reasoning tasks required an average of 7.5 steps, with most falling in the 6-to-8-step range. CoMCTS was implemented with four models: GPT-4o, Qwen2-VL-7B, LLaMA-3.2-11B-Vision-Instruct, and Qwen2-VL-72B. Training used a batch size of 128 and a learning rate of 1e-5 for two epochs.
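As a quick sanity check on the training setup, the reported hyperparameters imply a specific number of optimizer updates. The sketch below assumes the batch size of 128 is the global batch (no gradient accumulation) and that a final partial batch counts as one step; the `TrainConfig` name and its fields are illustrative, not taken from the Mulberry code.

```python
import math
from dataclasses import dataclass


# Hyperparameters reported in the article; the class and field names are hypothetical.
@dataclass(frozen=True)
class TrainConfig:
    batch_size: int = 128
    learning_rate: float = 1e-5
    epochs: int = 2


def optimizer_steps(num_samples: int, cfg: TrainConfig) -> int:
    """Total optimizer updates, counting a final partial batch as one step."""
    steps_per_epoch = math.ceil(num_samples / cfg.batch_size)
    return steps_per_epoch * cfg.epochs


cfg = TrainConfig()
# If all 260K samples are seen once per epoch
print(optimizer_steps(260_000, cfg))  # 4064
# If training is limited to 15K samples
print(optimizer_steps(15_000, cfg))   # 236
```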

The results demonstrated significant improvements over the baseline models, with gains of +4.2% and +7.5% for Qwen2-VL-7B and LLaMA-3.2-11B-Vision-Instruct, respectively. Additionally, the Mulberry models trained on this dataset outperformed reasoning models such as LLaVA-Reasoner-8B and Insight-V-8B on various benchmarks. Upon evaluation, CoMCTS improved performance by 63.8%. Including reflective reasoning data led to slight further improvements in model performance. These results show the effectiveness of Mulberry-260K and CoMCTS in improving the accuracy and flexibility of reasoning.

In conclusion, CoMCTS is an approach that improves reasoning in multimodal large language models (MLLMs) by incorporating collective learning into tree search. The framework improved the efficiency of reasoning-path search, as demonstrated by the Mulberry-260K dataset and the Mulberry models, which surpass traditional models on complex reasoning tasks. The proposed methods offer valuable insights for future research and can serve as a baseline for developing more efficient MLLMs capable of handling increasingly complex tasks.

Check out the Paper and GitHub Page. All credit for this research goes to the researchers of this project.

The post Collective Monte Carlo Tree Search (CoMCTS): A New Learning-to-Reason Method for Multimodal Large Language Models appeared first on MarkTechPost.