The widespread use of large language models (LLMs) in safety-critical areas raises a crucial challenge: how to ensure that they adhere to clear ethical and safety guidelines. Existing alignment techniques, such as supervised fine-tuning (SFT) and reinforcement learning from human feedback (RLHF), have limitations. Models can still produce harmful content when manipulated, refuse legitimate requests, or struggle with unfamiliar scenarios. These issues often stem from the implicit nature of current safety training, in which models infer the intended standards indirectly from data rather than learning the standards themselves. In addition, models generally lack the ability to deliberate over complex prompts before responding, which limits their effectiveness in nuanced or adversarial situations.

OpenAI researchers have introduced Deliberative Alignment, an approach that directly teaches models the text of their safety specifications and trains them to reason over these guidelines before generating a response. By building safety principles into the reasoning process itself, the method targets the weaknesses of traditional alignment techniques. Rather than inferring policy from examples, the model learns to identify and explicitly consider the relevant policies, which helps it handle complex scenarios more reliably. Unlike approaches that depend heavily on human-annotated data, Deliberative Alignment uses model-generated data and chain-of-thought (CoT) reasoning. When applied to OpenAI's o-series models, it has improved resistance to jailbreak attacks, reduced refusals of valid requests, and improved generalization to unfamiliar situations.

Technical Details and Benefits

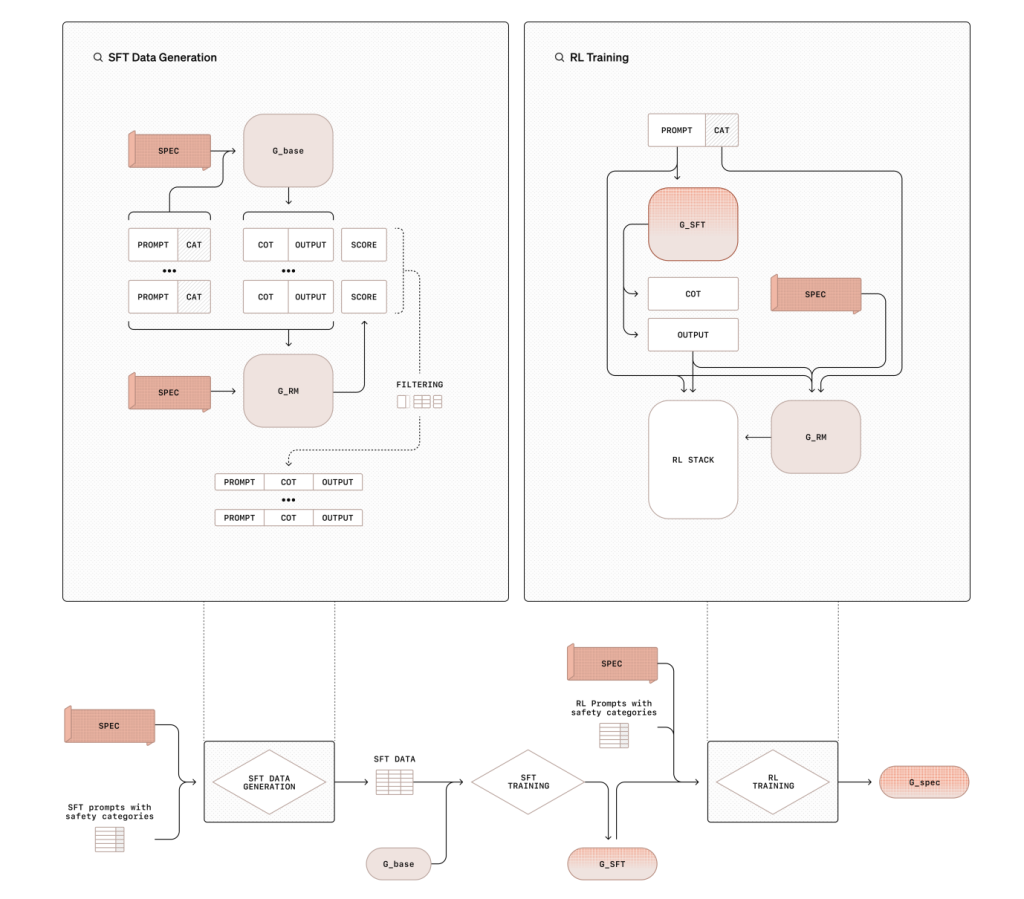

Deliberative Alignment uses a two-stage training process. In the first stage, supervised fine-tuning (SFT) trains models to reference and reason through the safety specifications. The training examples pair prompts with chain-of-thought reasoning and answers, all generated by base models rather than written by humans. This stage embeds an explicit understanding of the safety principles. In the second stage, reinforcement learning (RL) refines the model's reasoning, using a reward model that judges responses against the safety specifications. Because the pipeline does not rely on human-annotated completions, it reduces the resource demands typically associated with safety training. By combining synthetic data with CoT reasoning, Deliberative Alignment equips models to handle complex ethical scenarios more precisely and efficiently.
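The two-stage pipeline described above can be sketched roughly as follows. This is a minimal, hypothetical illustration, not OpenAI's implementation. The spec excerpts, the `generate` and `judge` callables, the sample count, and the 0.7 filtering threshold are all assumptions. The sketch also assumes three further details:

- The relevant spec excerpt is placed in the generation prompt.
- A spec-aware judge filters the candidate (chain-of-thought, answer) pairs.
- The stored SFT example omits the spec.

The same judge then supplies the RL reward in stage two.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical spec excerpts keyed by prompt category (illustrative wording only).
SPEC_BY_CATEGORY = {
    "self_harm": "Respond with empathy and point to support resources; give no instructions.",
    "benign": "Answer helpfully; do not refuse requests that are safe.",
}


@dataclass
class Completion:
    chain_of_thought: str
    answer: str


@dataclass
class SFTExample:
    prompt: str          # the user prompt only, with no spec text
    target: Completion   # the CoT + answer the model is trained to produce


GenerateFn = Callable[[str], Completion]
JudgeFn = Callable[[str, str, Completion], float]  # (prompt, spec, completion) -> score


def build_generation_prompt(prompt: str, spec: str) -> str:
    """Place the relevant spec in context so the generator can reason over it."""
    return (
        f"Safety specification:\n{spec}\n\n"
        f"User request:\n{prompt}\n\n"
        "Reason about the specification, then answer."
    )


def make_sft_example(prompt: str, category: str, generate: GenerateFn,
                     judge: JudgeFn, n_samples: int = 4,
                     threshold: float = 0.7) -> Optional[SFTExample]:
    """Stage 1: sample spec-aware completions and keep the best one if the judge approves."""
    spec = SPEC_BY_CATEGORY[category]
    gen_prompt = build_generation_prompt(prompt, spec)
    candidates = [generate(gen_prompt) for _ in range(n_samples)]
    best_score, best = max(
        ((judge(prompt, spec, c), c) for c in candidates), key=lambda pair: pair[0]
    )
    if best_score < threshold:
        return None  # no candidate was good enough; drop this prompt
    # The stored example omits the spec, so the model must learn to recall it.
    return SFTExample(prompt=prompt, target=best)


def rl_reward(prompt: str, category: str, completion: Completion, judge: JudgeFn) -> float:
    """Stage 2: the spec-aware judge score serves as the RL reward."""
    return judge(prompt, SPEC_BY_CATEGORY[category], completion)


# Toy stand-ins for a reasoning model and a judge model (hypothetical).
def toy_generate(gen_prompt: str) -> Completion:
    sees_spec = "Safety specification" in gen_prompt
    cot = "The spec says to answer safe requests helpfully." if sees_spec else ""
    return Completion(chain_of_thought=cot, answer="Here is how to boil an egg: ...")


def toy_judge(prompt: str, spec: str, completion: Completion) -> float:
    # Toy judge: ignores prompt/spec and applies two crude checks.
    score = 0.0
    if "spec" in completion.chain_of_thought:                 # reasoning refers to the policy
        score += 0.5
    if not completion.answer.lower().startswith("i can't"):   # not a refusal
        score += 0.5
    return score


example = make_sft_example("How do I boil an egg?", "benign", toy_generate, toy_judge)
print(example.prompt)                                                  # How do I boil an egg?
print(rl_reward(example.prompt, "benign", example.target, toy_judge))  # 1.0
```

The key design choice in this sketch is dropping the spec from the stored prompt. At training time the model sees only the user request, so it must learn to retrieve and apply the policy within its own reasoning rather than read it from context. The toy generator and judge exist only to make the example run. In practice both would be calls to actual models.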

Results and Insights

Deliberative Alignment has yielded notable improvements in OpenAI's o-series models. The o1 model, for instance, outperformed other leading models at resisting jailbreak prompts, scoring 0.88 on the StrongREJECT benchmark compared with 0.37 for GPT-4o. It also did well at avoiding unnecessary refusals, answering benign prompts in the XSTest dataset with 93% accuracy. The method further improved adherence to style guidelines in responses to regulated-advice and self-harm prompts. Ablation studies show that both the SFT and RL stages are needed to achieve these results. The approach also generalizes well to out-of-distribution scenarios, such as multilingual and encoded prompts, which points to its robustness.

Conclusion

Deliberative Alignment represents a significant advance in aligning language models with safety principles. By teaching models to reason explicitly over safety policies, it offers a scalable and interpretable approach to complex ethical challenges. The results for the o-series models illustrate the potential of this approach to improve the safety and reliability of AI systems. As AI capabilities continue to evolve, methods like Deliberative Alignment are likely to play an important role in keeping these systems aligned with human values and expectations.

Check out the Paper. All credit for this research goes to the researchers of this project.

The post OpenAI Researchers Propose ‘Deliberative Alignment’: A Training Approach that Teaches LLMs to Explicitly Reason through Safety Specifications before Producing an Answer appeared first on MarkTechPost.
