The rapid development of artificial intelligence (AI) has produced models with powerful capabilities, such as language understanding and visual processing. However, deploying these models on edge devices remains challenging because of limited computational power, memory, and energy budgets. As AI use cases extend beyond the cloud into everyday devices, there is a growing need for lightweight models that run effectively on edge hardware while still delivering competitive performance. Traditional large models are often resource-intensive, which makes them impractical for smaller devices and leaves a gap in edge computing. Researchers have been looking for ways to bring AI to edge environments without significantly compromising model quality.

Tsinghua University researchers recently released the GLM-Edge series, a family of models ranging from 1.5 billion to 5 billion parameters designed specifically for edge devices. The GLM-Edge models combine language processing and vision capabilities, emphasizing efficiency and accessibility without sacrificing performance. The series includes models for both conversational AI and vision applications, built to work within the limits of resource-constrained devices.

GLM-Edge includes multiple variants optimized for different tasks and device capabilities, so a model size can be matched to the available hardware and the use case at hand. The series is based on General Language Model (GLM) technology and carries its performance and modularity over to edge scenarios. As AI-powered IoT devices and edge applications grow in popularity, GLM-Edge helps bridge the gap between computationally intensive AI and the constraints of edge hardware.

Technical Details

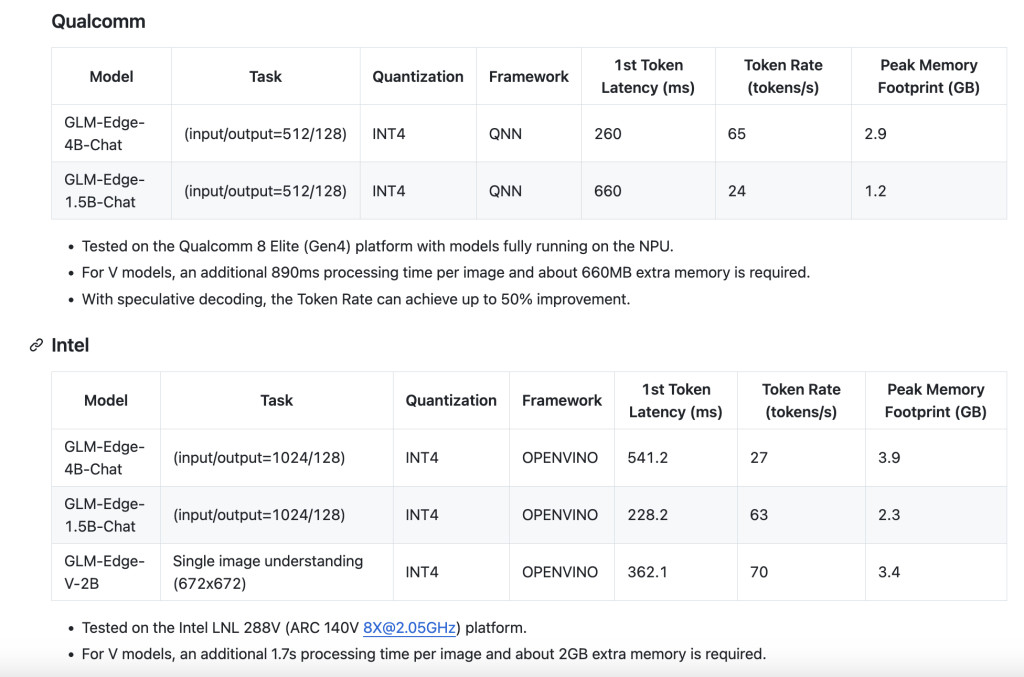

The GLM-Edge series builds on the GLM architecture, adding quantization techniques and architectural changes that make the models suitable for edge deployment. The models were trained using a combination of knowledge distillation and pruning, which significantly reduces model size while maintaining high accuracy. Specifically, the models use 8-bit and even 4-bit quantization to reduce memory and compute demands, making them feasible for small devices with limited resources.
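
To make the memory argument concrete, here is a minimal Python sketch, assuming simple symmetric per-tensor (absmax) quantization. GLM-Edge's actual quantization scheme (per-channel or per-group scales, calibration, bit packing) is not described in this article, and the function names below are illustrative, not part of any GLM-Edge API.

```python
def weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate storage for the weights alone, in GiB.

    Ignores activations, the KV cache, and the small overhead of storing scales.
    """
    return num_params * bits_per_weight / 8 / 2**30


def quantize_symmetric(weights: list[float], bits: int) -> tuple[list[int], float]:
    """Symmetric per-tensor (absmax) quantization to signed `bits`-bit integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for int8, 7 for int4
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0  # avoid dividing by zero for all-zero tensors
    # Round to the nearest integer level and clamp into the symmetric range [-qmax, qmax].
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map integer levels back to approximate floating-point weights."""
    return [v * scale for v in q]


if __name__ == "__main__":
    # Weights-only footprint for the smallest and largest sizes named in the article.
    for params in (1.5e9, 5e9):
        sizes = ", ".join(f"{b}-bit ~ {weight_memory_gib(params, b):.2f} GiB" for b in (16, 8, 4))
        print(f"{params / 1e9:.1f}B params: {sizes}")

    # Round-trip a toy weight vector to see the precision cost of fewer bits.
    weights = [0.82, -0.41, 0.05, -1.27, 0.33, 0.0]
    for bits in (8, 4):
        q, scale = quantize_symmetric(weights, bits)
        err = max(abs(a - b) for a, b in zip(weights, dequantize(q, scale)))
        print(f"int{bits}: levels={q}, scale={scale:.4f}, max abs error={err:.4f}")
```

For a 1.5B-parameter model, the weights-only estimate drops from roughly 2.8 GiB at 16-bit to about 0.7 GiB at 4-bit; for 5B parameters, from about 9.3 GiB to about 2.3 GiB. Real deployments typically pack 4-bit values two per byte and keep a separate scale per channel or group, which this sketch leaves out.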

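The training recipe described earlier also credits knowledge distillation and pruning for much of the size reduction. The article does not give the exact losses or pruning schedule, so the following pure-Python sketch shows only the textbook versions: a temperature-softened KL soft-target loss that pushes a small student toward a larger teacher's output distribution, and unstructured magnitude pruning. The function names and the default temperature of 2.0 are illustrative assumptions, not GLM-Edge settings.

```python
import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax; subtracting the max keeps exp() numerically stable."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: list[float], teacher_logits: list[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by T**2 (soft-target loss)."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(pt * (math.log(pt) - math.log(ps))
             for pt, ps in zip(p_teacher, p_student) if pt > 0)
    return temperature ** 2 * kl


def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Unstructured pruning: zero the `sparsity` fraction of weights with the smallest |w|."""
    k = int(len(weights) * sparsity)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:k])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


if __name__ == "__main__":
    teacher = [4.0, 1.0, -2.0]
    print(distillation_loss([3.5, 1.2, -1.8], teacher))  # student close to teacher: small loss
    print(distillation_loss([-2.0, 1.0, 4.0], teacher))  # student disagrees: larger loss
    print(magnitude_prune([0.9, -0.05, 0.3, -0.01, 0.6], sparsity=0.4))
```

In practice the distillation term is usually mixed with the ordinary next-token loss, and pruning is applied gradually with fine-tuning in between; this sketch omits both.
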
The GLM-Edge series has two primary focus areas: conversational AI and visual tasks. The language models can carry out complex dialogues with reduced latency, while the vision models support computer vision tasks such as object detection and image captioning in real time. A notable advantage of GLM-Edge is its modularity: it can combine language and vision capabilities in a single model for multimodal applications. In practice, GLM-Edge offers efficient energy use, reduced latency, and the ability to run AI-powered applications directly on mobile devices, smart cameras, and embedded systems.
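
The article does not spell out how GLM-Edge wires vision into the language model. One widely used pattern for combining the two in a single model is to project vision-encoder patch features into the language model's embedding space and prepend them to the text tokens, so one decoder attends over both. The toy sketch below illustrates only that general pattern, under that assumption; all names, dimensions, and values are hypothetical.

```python
# Hypothetical toy setup: 3-dim vision features projected into a 2-dim language embedding space.
Vector = list[float]


def project(feature: Vector, weight: list[Vector]) -> Vector:
    """Linear projection: each row of `weight` produces one output dimension."""
    return [sum(w * x for w, x in zip(row, feature)) for row in weight]


def build_multimodal_sequence(patch_features: list[Vector], text_token_ids: list[int],
                              projection: list[Vector],
                              embedding_table: dict[int, Vector]) -> list[Vector]:
    """Prepend projected image 'tokens' to embedded text tokens so one decoder sees both."""
    visual = [project(f, projection) for f in patch_features]
    text = [embedding_table[t] for t in text_token_ids]
    return visual + text


if __name__ == "__main__":
    projection = [[1.0, 0.0, 0.5], [0.0, 1.0, -0.5]]                  # maps 3-dim -> 2-dim
    embedding_table = {0: [0.1, 0.2], 1: [0.3, -0.1], 2: [0.0, 0.4]}  # toy vocabulary
    patches = [[0.2, 0.4, 0.6], [1.0, 0.0, 0.0]]                       # two image patches
    sequence = build_multimodal_sequence(patches, [2, 0, 1], projection, embedding_table)
    print(len(sequence), "positions:", sequence)
```

In a real model the projection is learned and the resulting sequence feeds a transformer decoder; the point here is only that image and text end up as one sequence of same-width vectors.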

The significance of GLM-Edge lies in making sophisticated AI capabilities available on a wider range of devices, not just powerful cloud servers. By reducing dependence on external compute, GLM-Edge models enable AI applications that are both cost-effective and privacy-friendly, because data can be processed locally on the device instead of being sent to the cloud. This is particularly relevant for applications where privacy, low latency, and offline operation matter.

The results from GLM-Edge’s evaluation show strong performance despite the reduced parameter count. For example, GLM-Edge-1.5B achieved results comparable to those of much larger transformer models on general NLP and vision benchmarks, highlighting the efficiency gained through careful design optimization. The series also performed well on edge-relevant tasks, such as keyword spotting and real-time video analysis, offering a balance between model size, latency, and accuracy.

Conclusion

Tsinghua University’s GLM-Edge series represents an advance in edge AI, addressing the challenges of resource-limited devices. By providing models that blend efficiency with conversational and visual capabilities, GLM-Edge enables new edge AI applications that are practical and effective. These models bring the vision of ubiquitous AI closer to reality by letting computation happen on-device, which makes AI solutions faster, more secure, and more cost-effective. As AI adoption continues to expand, the GLM-Edge series stands out as an effort that tackles the unique challenges of edge computing and offers a promising path forward for AI in the real world.

Check out the GitHub Page and Models on Hugging Face. All credit for this research goes to the researchers of this project.

The post Tsinghua University Researchers Released the GLM-Edge Series: A Family of AI Models Ranging from 1.5B to 5B Parameters Designed Specifically for Edge Devices appeared first on MarkTechPost.
