The evolution of machine learning has brought significant advancements in language models, which are foundational to tasks like text generation and question-answering. Among these, transformers and state-space models (SSMs) are pivotal, yet their efficiency when handling long sequences has posed challenges. As sequence length increases, traditional transformers suffer from quadratic complexity, leading to prohibitive memory and computational demands. To address these issues, researchers and organizations have explored alternative architectures, such as Mamba, a state-space model with linear complexity that provides scalability and efficiency for long-context tasks.

Large-scale language models often face challenges in managing computational costs, especially as they scale up to billions of parameters. For instance, while Mamba offers linear complexity advantages, its increasing size results in significant energy consumption and training costs, making deployment difficult. These limitations are exacerbated by the high resource demands of models like GPT-based architectures, which are traditionally trained and inferred at full precision (e.g., FP16 or BF16). Moreover, as demand grows for efficient, scalable AI, exploring extreme quantization methods has become critical to ensure practical deployment in resource-constrained settings.

Researchers have explored techniques such as pruning, low-bit quantization, and key-value cache optimizations to mitigate these challenges. Quantization, which reduces the bit-width of model weights, has shown promising results by compressing models without substantial performance degradation. However, most of these efforts focus on transformer-based models. The behavior of SSMs, particularly Mamba, under extreme quantization still needs to be explored, creating a gap in developing scalable and efficient state-space models for real-world applications.

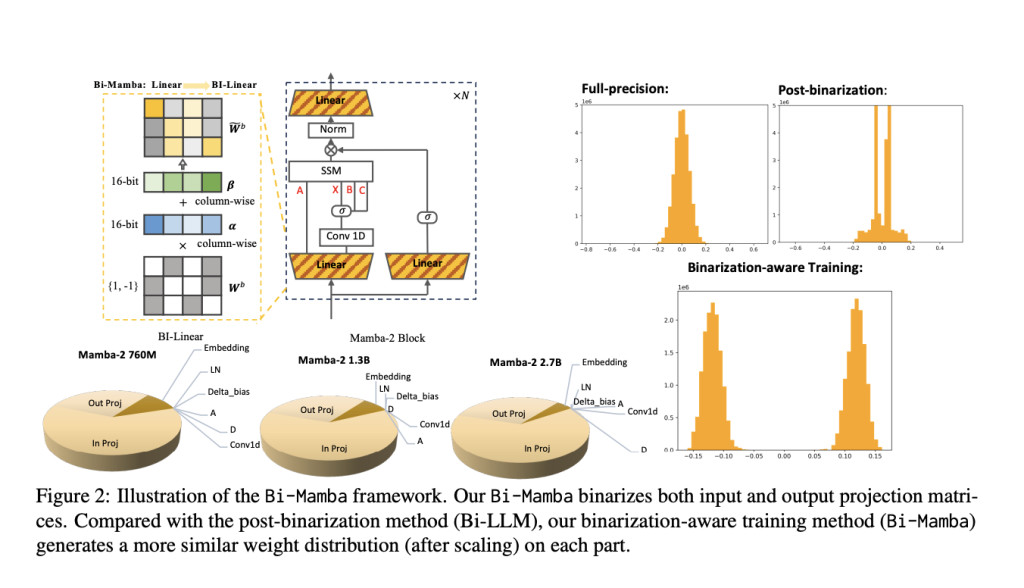

Researchers from the Mohamed bin Zayed University of Artificial Intelligence and Carnegie Mellon University introduced Bi-Mamba, a 1-bit scalable Mamba architecture designed for low-memory, high-efficiency scenarios. This innovative approach applies binarization-aware training to Mamba’s state-space framework, enabling extreme quantization while maintaining competitive performance. Bi-Mamba was developed in model sizes of 780 million, 1.3 billion, and 2.7 billion parameters and trained from scratch using an autoregressive distillation loss. The model uses high-precision teacher models such as LLaMA2-7B to guide training, ensuring robust performance.

The architecture of Bi-Mamba employs selective binarization of its linear modules while retaining other components at full precision to balance efficiency and performance. Input and output projections are binarized using FBI-Linear modules, which integrate learnable scaling and shifting factors for optimal weight representation. This ensures that binarized parameters align closely with their full-precision counterparts. The model’s training utilized 32 NVIDIA A100 GPUs to process large datasets, including 1.26 trillion tokens from sources like RefinedWeb and StarCoder.

Extensive experiments demonstrated Bi-Mamba’s competitive edge over existing models. On datasets like Wiki2, PTB, and C4, Bi-Mamba achieved perplexity scores of 14.2, 34.4, and 15.0, significantly outperforming alternatives like GPTQ and Bi-LLM, which exhibited perplexities up to 10× higher. Also, Bi-Mamba achieved zero-shot accuracies of 44.5% for the 780M model, 49.3% for the 2.7B model, and 46.7% for the 1.3B variant on downstream tasks such as BoolQ and HellaSwag. This demonstrated its robustness across various tasks and datasets while maintaining energy-efficient performance.

The study’s findings highlight several key takeaways:

- Efficiency Gains: Bi-Mamba achieves over 80% storage compression compared to full-precision models, reducing storage size from 5.03GB to 0.55GB for the 2.7B model.

- Performance Consistency: The model retains comparable performance to full-precision counterparts with significantly reduced memory requirements.

- Scalability: Bi-Mamba’s architecture enables effective training across multiple model sizes, with competitive results even for the largest variants.

- Robustness in Binarization: By selectively binarizing linear modules, Bi-Mamba avoids the performance degradation typically associated with naive binarization methods.

In conclusion, Bi-Mamba represents a significant step forward in addressing the dual challenges of scalability and efficiency in large language models. By leveraging binarization-aware training and focusing on key architectural optimizations, the researchers demonstrated that state-space models could achieve high performance under extreme quantization. This innovation enhances energy efficiency, reduces resource consumption, and sets the stage for future developments in low-bit AI systems, opening avenues for deploying large-scale models in practical, resource-limited environments. Bi-Mamba’s robust results underscore its potential as a transformative approach for more sustainable and efficient AI technologies.

Check out the Paper. All credit for this research goes to the researchers of this project. Also, don’t forget to follow us on Twitter and join our Telegram Channel and LinkedIn Group. If you like our work, you will love our newsletter.. Don’t Forget to join our 55k+ ML SubReddit.

[FREE AI VIRTUAL CONFERENCE] SmallCon: Free Virtual GenAI Conference ft. Meta, Mistral, Salesforce, Harvey AI & more. Join us on Dec 11th for this free virtual event to learn what it takes to build big with small models from AI trailblazers like Meta, Mistral AI, Salesforce, Harvey AI, Upstage, Nubank, Nvidia, Hugging Face, and more.

The post Researchers from MBZUAI and CMU Introduce Bi-Mamba: A Scalable and Efficient 1-bit Mamba Architecture Designed for Large Language Models in Multiple Sizes (780M, 1.3B, and 2.7B Parameters) appeared first on MarkTechPost.

Source: Read MoreÂ