In the evolving field of machine learning, fine-tuning foundation models such as BERT or LLaMA for specific downstream tasks has become a prevalent approach. However, the success of fine-tuning depends not only on the model but also heavily on the quality and relevance of the training data. With massive repositories such as Common Crawl containing billions of documents, manually selecting suitable data for a given task is impractical. Automated data selection is therefore essential, but current methods often fall short in three key areas: aligning the distribution of the selected data with the target task, maintaining data diversity, and scaling efficiently to large data pools. Task-Specific Data Selection (TSDS) offers a structured approach to these challenges.

Introducing TSDS: An Optimized Approach for Data Selection

Researchers from the University of Wisconsin-Madison, Yale University, and Apple introduce TSDS (Task-Specific Data Selection), a framework that improves task-specific fine-tuning by automatically selecting relevant training data. Guided by a small set of representative examples from the target task, TSDS performs data selection through an automated, scalable process. The core idea is to formulate data selection as an optimization problem that aligns the distribution of the selected data with the target task distribution while maintaining diversity within the selected set. This alignment helps the model learn from data that closely mirrors the intended use case, which in turn improves its performance on downstream tasks.
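
To make the optimization framing concrete, the objective can be written schematically as follows. This is a generic sketch of the idea described above, not the exact formulation from the paper, and all symbols are assumptions introduced here: x_1, …, x_N are the candidate examples, q_1, …, q_M are the target-task examples, p is a probability vector over the candidates (Δ^N is the probability simplex), OT_c is the optimal transport cost under a cost function c (for instance a distance between embeddings), R is a diversity regularizer, and λ ≥ 0 sets the trade-off between alignment and diversity.

```latex
\min_{p \in \Delta^{N}} \;
  \mathrm{OT}_{c}\!\left( \frac{1}{M} \sum_{i=1}^{M} \delta_{q_i},\;
                          \sum_{j=1}^{N} p_j \, \delta_{x_j} \right)
  \;+\; \lambda \, R(p)
```

The first term rewards putting probability on candidates that sit close to the target examples; the second term discourages concentrating that probability on a few near-identical candidates.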

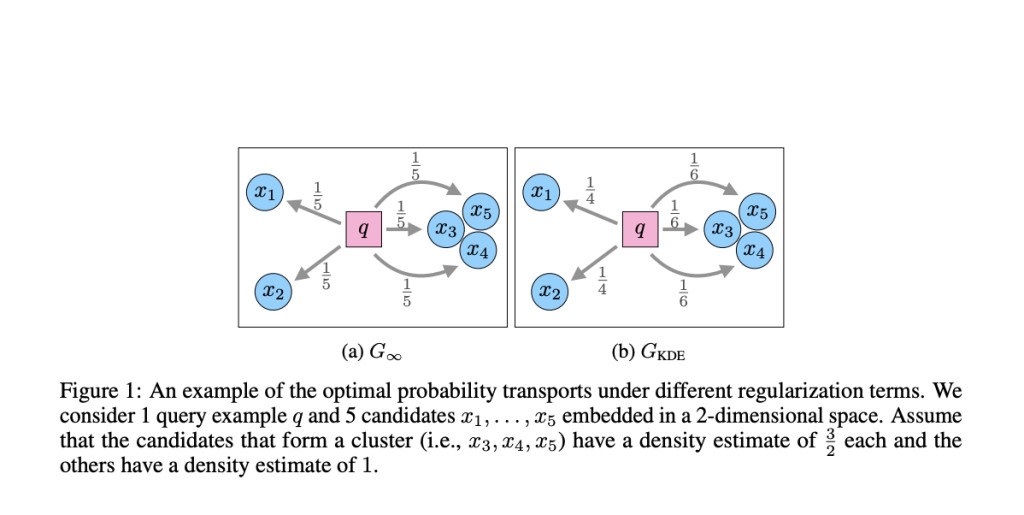

The TSDS framework uses optimal transport to minimize the discrepancy between the distribution of the selected data and that of the target task. A diversity-promoting regularizer, built on kernel density estimation, reduces the risk of overfitting that arises when near-duplicate examples dominate the training data. TSDS also connects this optimization problem to nearest neighbor search, which lets it use efficient approximate nearest neighbor algorithms and scale to large candidate pools.
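
The link between optimal transport and nearest neighbor search can be seen in a simplified setting. The sketch below assumes a semi-relaxed transport problem (each target example must ship mass 1/M, but the mass received by each candidate is left unconstrained), a Euclidean cost, and no diversity regularizer. These are simplifications chosen for illustration, not TSDS's exact formulation, and the function name is hypothetical. Under these assumptions the cheapest plan sends each target example's mass entirely to its nearest candidate, so the selection distribution reduces to a nearest-neighbor histogram.

```python
import numpy as np


def semi_relaxed_ot_selection(queries: np.ndarray, candidates: np.ndarray) -> np.ndarray:
    """Toy semi-relaxed optimal transport from target examples to candidates.

    Hypothetical helper. Each of the M queries ships mass 1/M; the candidate-side
    marginal is free. Without a regularizer, the problem separates per query and
    each query puts all of its mass on its lowest-cost (nearest) candidate.
    """
    # Pairwise Euclidean cost matrix: cost[i, j] = ||q_i - x_j||
    cost = np.linalg.norm(queries[:, None, :] - candidates[None, :, :], axis=-1)
    m = queries.shape[0]
    nearest = cost.argmin(axis=1)  # index of the nearest candidate for each query
    p = np.zeros(candidates.shape[0])
    np.add.at(p, nearest, 1.0 / m)  # accumulate mass; handles repeated indices
    return p


# Example: two queries lie near candidate 0, one lies near candidate 1.
queries = np.array([[0.0, 0.0], [1.0, 1.0], [0.1, 0.0]])
candidates = np.array([[0.0, 0.1], [1.0, 0.9], [5.0, 5.0]])
p = semi_relaxed_ot_selection(queries, candidates)
print(p)  # approximately [2/3, 1/3, 0]
```

Without a regularizer, all of a query's mass lands on a single candidate. In a pool full of near-duplicates, that is exactly the kind of collapse the diversity term is meant to counteract.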

Technical Details and Benefits of TSDS

At its core, TSDS addresses the optimization problem by balancing two objectives: distribution alignment and data diversity. Distribution alignment is achieved through a cost function based on optimal transport, ensuring that the selected data closely matches the target task distribution. To address the issue of data diversity, TSDS incorporates a regularizer that penalizes the over-representation of near-duplicate examples, which are common in large-scale data repositories. The framework uses kernel density estimation to quantify duplication levels and adjusts the selection process accordingly.
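
One way to see how a density estimate can flag near-duplicates is sketched below. It assumes a Gaussian kernel with a hand-picked bandwidth and uses simple inverse-density reweighting of a per-candidate relevance score. Both the heuristic and the helper names are hypothetical illustrations, not TSDS's actual regularizer, which is part of the optimization objective itself.

```python
import numpy as np


def kde_duplication_level(candidates: np.ndarray, bandwidth: float = 0.5) -> np.ndarray:
    """Unnormalized Gaussian kernel density at each candidate (hypothetical helper).

    Points with many near-duplicates sit in dense regions and get a high score;
    an isolated point scores close to 1 (its own self-similarity).
    """
    sq_dist = ((candidates[:, None, :] - candidates[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq_dist / (2.0 * bandwidth ** 2)).sum(axis=1)


def deduplicated_weights(relevance: np.ndarray, density: np.ndarray) -> np.ndarray:
    """Down-weight each candidate's relevance by its local density, then normalize."""
    w = relevance / density
    return w / w.sum()


# Example: three exact copies of one point plus one distinct point.
candidates = np.array([[0.0, 0.0], [0.0, 0.0], [0.0, 0.0], [3.0, 0.0]])
relevance = np.ones(len(candidates))  # assume all candidates are equally relevant
density = kde_duplication_level(candidates, bandwidth=0.5)
weights = deduplicated_weights(relevance, density)
print(weights)  # approximately [1/6, 1/6, 1/6, 1/2]
```

Here the three copies together receive about the same total weight (1/2) as the single distinct point, so duplicating an example does not multiply its influence. The bandwidth controls what counts as a near-duplicate: too small and only exact copies are merged, too large and genuinely distinct examples are penalized.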

The solution to this optimization problem is a probability distribution over the candidate data points that assigns higher probability to candidates that align well with the target task. Only a small subset of the massive candidate pool is then selected for fine-tuning. TSDS also supports distribution alignment in any metric space that allows efficient nearest-neighbor search, making it adaptable to various tasks and model architectures.
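
The pipeline of "nearest-neighbor search, then a probability distribution, then a small sampled subset" can be sketched as below. Several pieces are assumptions made for illustration: cosine distance as the metric, a brute-force search standing in for an approximate nearest-neighbor index, each target example spreading its mass evenly over its k neighbors, and sampling without replacement up to a selection-ratio budget. The function names and parameters are hypothetical, and this is not the paper's algorithm.

```python
import math

import numpy as np


def knn_cosine(queries: np.ndarray, candidates: np.ndarray, k: int) -> np.ndarray:
    """Brute-force k-nearest neighbors under cosine distance.

    Stand-in for an approximate nearest-neighbor index; any metric with fast
    neighbor search could be substituted.
    """
    q = queries / np.linalg.norm(queries, axis=1, keepdims=True)
    c = candidates / np.linalg.norm(candidates, axis=1, keepdims=True)
    sims = q @ c.T  # cosine similarity, shape (M, N)
    return np.argsort(-sims, axis=1)[:, :k]  # indices of the k most similar per query


def select_subset(queries: np.ndarray, candidates: np.ndarray, k: int,
                  ratio: float, seed: int = 0) -> tuple[np.ndarray, np.ndarray]:
    """Build a selection distribution from k-NN and sample a subset (hypothetical)."""
    n = candidates.shape[0]
    neighbors = knn_cosine(queries, candidates, k)
    # Each query spreads its 1/M mass evenly over its k neighbors.
    p = np.zeros(n)
    np.add.at(p, neighbors.ravel(), 1.0 / neighbors.size)
    # Sampling without replacement cannot draw more items than p has nonzero entries.
    budget = min(math.ceil(ratio * n), int((p > 0).sum()))
    rng = np.random.default_rng(seed)
    chosen = rng.choice(n, size=budget, replace=False, p=p)
    return chosen, p


# Usage with random stand-in embeddings (a 1% selection ratio).
rng = np.random.default_rng(42)
queries = rng.normal(size=(5, 16))        # target-task embeddings (synthetic)
candidates = rng.normal(size=(1000, 16))  # candidate-pool embeddings (synthetic)
chosen, p = select_subset(queries, candidates, k=8, ratio=0.01)
print(len(chosen), chosen[:5])
```

Sampling without replacement keeps the subset free of repeated indices; sampling with replacement would instead let high-probability candidates appear several times, which is another reasonable design.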

Importance and Impact of TSDS

The value of TSDS lies in its ability to improve on traditional data selection methods, particularly for large datasets. In experiments on instruction tuning and domain-specific pretraining, TSDS outperformed baseline methods. For instance, with a selection ratio of 1%, TSDS achieved an average improvement of 1.5 points in F1 score over baseline methods when fine-tuning large language models for specific tasks. TSDS also proved robust to near-duplicate data, maintaining consistent performance even when up to 1,000 duplicates were present in the candidate pool.

TSDS is also efficient. In one experiment, TSDS preprocessed a corpus of 150 million examples in 28 hours, after which task-specific selection took less than an hour. This efficiency makes TSDS practical for real-world applications, where time and computational resources are often limited.
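
For a rough sense of scale, the reported preprocessing figures imply the following average throughput. This is back-of-the-envelope arithmetic from the two numbers in the text; it ignores the hardware used and any variation in speed over the run.

```latex
\frac{150 \times 10^{6}\ \text{examples}}{28 \times 3600\ \text{s}}
  = \frac{1.5 \times 10^{8}\ \text{examples}}{100{,}800\ \text{s}}
  \approx 1{,}488\ \text{examples/s}
  \quad (\approx 5.36 \times 10^{6}\ \text{examples/h})
```

By the same numbers, the selection step (under one hour) takes less than 4% of the preprocessing time, so the bulk of the cost lies in preprocessing.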

Conclusion

TSDS advances task-specific model fine-tuning by addressing the key challenges of data selection. By formulating data selection as an optimization problem that balances distribution alignment and diversity, TSDS selects data that is both relevant to and representative of the target task. This leads to improved model performance, less overfitting to near-duplicate examples, and more efficient use of computational resources. As machine learning models continue to grow in scale and complexity, frameworks like TSDS are likely to play an important role in making fine-tuning more effective and accessible across diverse applications. Future research could explore more efficient variants of optimal transport or better ways to choose representative examples to mitigate potential biases.

All credit for this research goes to the researchers of this project.

The post Task-Specific Data Selection: A Practical Approach to Enhance Fine-Tuning Efficiency and Performance appeared first on MarkTechPost.