MongoDB is excited to announce enhancements to our LlamaIndex integration. By combining MongoDB’s robust database capabilities with LlamaIndex’s innovative framework for context-augmented large language models (LLMs), the enhanced MongoDB-LlamaIndex integration unlocks new possibilities for generative AI development.

Specifically, it supports vector (powered by Atlas Vector Search), full-text (powered by Atlas Search), and hybrid search, enabling developers to blend precise keyword matching with semantic search for more context-aware applications, depending on their use case.

Building AI applications with LlamaIndex

LlamaIndex is one of the world’s leading AI frameworks for building with LLMs. It streamlines the integration of external data sources, allowing developers to combine LLMs with relevant context from various data formats. This makes it ideal for building application features like retrieval-augmented generation (RAG), where accurate, contextual information is critical. LlamaIndex empowers developers to build smarter, more responsive AI systems while reducing the complexities involved in data handling and query management.

Advantages of building with LlamaIndex include:

Simplified data ingestion with connectors that integrate structured databases, unstructured files, and external APIs, removing the need for manual processing or format conversion.

Structured indexes or graphs for organizing data, which improve query efficiency and accuracy, especially when working with large or complex datasets.

An advanced retrieval interface that responds to natural language prompts with contextually enhanced data, improving accuracy in tasks like question-answering, summarization, or data retrieval.

Customizable APIs that cater to all skill levels—high-level APIs enable quick data ingestion and querying for beginners, while lower-level APIs offer advanced users full control over connectors and query engines for more complex needs.

MongoDB’s LlamaIndex integration

Developers can build powerful AI applications using LlamaIndex as a foundational AI framework alongside MongoDB Atlas as the long-term memory database. With MongoDB’s developer-friendly document model and the vector search capabilities within MongoDB Atlas, developers can easily store and search vector embeddings for building RAG applications. And because MongoDB also provides low-latency transactional persistence, developers can use the MongoDB integration in LlamaIndex for more than vector storage when building enterprise-grade AI applications.

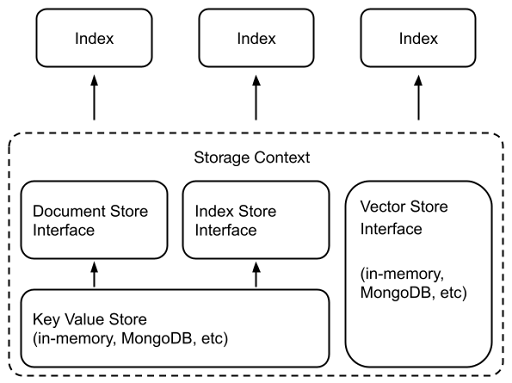

LlamaIndex’s flexible architecture supports customizable storage components, allowing developers to use MongoDB Atlas as both a vector store and a key-value store. With the corresponding integration packages, developers can:

Store and retrieve vector embeddings efficiently (llama-index-vector-stores-mongodb)

Persist ingested documents (llama-index-storage-docstore-mongodb)

Maintain index metadata (llama-index-storage-index-store-mongodb)

Store key-value pairs (llama-index-storage-kvstore-mongodb)

Figure adapted from Liu, Jerry and Agarwal, Prakul (May 2023). “Build a ChatGPT with your Private Data using LlamaIndex and MongoDB”. Medium.

https://medium.com/llamaindex-blog/build-a-chatgpt-with-your-private-data-using-llamaindex-and-mongodb-b09850eb154c

Adding hybrid and full-text search support

Developers may use different approaches to search for different use cases. Full-text search retrieves documents by matching exact keywords or linguistic variations, making it efficient for quickly locating specific terms within large datasets, such as in legal document review where exact wording is critical. Vector search, on the other hand, finds content that is ‘semantically’ similar, even if it does not contain the same keywords. Hybrid search combines full-text search with vector search to identify both exact matches and semantically similar content. This approach is particularly valuable in advanced retrieval systems or AI-powered search engines, enabling results that are both precise and aligned with the needs of the end-user.

This integration makes it simple for developers to try out different retrieval modes on their data and improve the accuracy of their AI applications. In the LlamaIndex integration, the MongoDBAtlasVectorSearch class is used for vector search. To run full-text search instead, set the query mode to VectorStoreQueryMode.TEXT_SEARCH on the same class. Similarly, to use hybrid search, set the query mode to VectorStoreQueryMode.HYBRID. To learn more, check out the GitHub repository.
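As a rough illustration of switching query modes, the sketch below builds a retriever over an existing Atlas collection. It is a minimal sketch, not the integration's reference usage: the helper name `build_retriever`, the index names `vector_index` and `fulltext_index`, and the connection details are assumptions for illustration. Constructor parameters can differ between package versions, and an embedding model must already be configured in LlamaIndex.

```python
def build_retriever(mongo_uri, db_name, collection_name, mode="hybrid", top_k=5):
    """Return a LlamaIndex retriever over an existing Atlas collection.

    `mode` is one of "default" (vector), "text_search", or "hybrid".
    """
    # Imported inside the function so the sketch can be loaded without the
    # optional packages (pymongo, llama-index-vector-stores-mongodb) installed.
    import pymongo
    from llama_index.core import VectorStoreIndex
    from llama_index.core.vector_stores.types import VectorStoreQueryMode
    from llama_index.vector_stores.mongodb import MongoDBAtlasVectorSearch

    vector_store = MongoDBAtlasVectorSearch(
        mongodb_client=pymongo.MongoClient(mongo_uri),
        db_name=db_name,
        collection_name=collection_name,
        vector_index_name="vector_index",      # assumed Atlas Vector Search index name
        fulltext_index_name="fulltext_index",  # assumed Atlas Search index name
    )
    index = VectorStoreIndex.from_vector_store(vector_store)

    # The same vector store class serves all three modes; only the query mode changes.
    return index.as_retriever(
        vector_store_query_mode=VectorStoreQueryMode(mode),
        similarity_top_k=top_k,
    )
```

Calling `build_retriever(uri, "my_db", "my_collection", mode="text_search")` would request full-text search instead, assuming both search indexes already exist on the collection.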

With the MongoDB-LlamaIndex integration, developers no longer need to implement Reciprocal Rank Fusion themselves or work out the best way to combine vector and text search results; we’ve taken care of those complexities for you. The integration also includes sensible defaults and robust support, so building advanced search capabilities into AI applications is easier than ever. MongoDB handles the intricacies of storing and querying your vectorized data, so you can focus on building!
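For readers curious what Reciprocal Rank Fusion does, here is a minimal standalone sketch of the general technique. It is not the integration's actual code: the function name, the sample document IDs, and the constant `k=60` (a commonly used default) are assumptions for illustration. Each document's fused score is the sum of `1 / (k + rank)` over every result list it appears in.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into a single ranking."""
    scores = defaultdict(float)
    for ranking in rankings:
        # Ranks start at 1; documents near the top of a list contribute more.
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by document ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from a vector search and a full-text search.
vector_hits = ["doc_a", "doc_b", "doc_c"]
text_hits = ["doc_b", "doc_d", "doc_a"]

fused = reciprocal_rank_fusion([vector_hits, text_hits])
print(fused)  # ['doc_b', 'doc_a', 'doc_d', 'doc_c']
```

Documents that rank well in both lists (`doc_b`, `doc_a`) rise to the top, which is why the method suits hybrid search: it needs only ranks, not comparable scores, from each search type.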

We’re excited for you to work with our LlamaIndex integration. Here are some resources to expand your knowledge on this topic:

Check out how to get started with our LlamaIndex integration

Build a content recommendation system using MongoDB and LlamaIndex with our helpful tutorial

Experiment with building a RAG application with LlamaIndex, OpenAI, and our vector database

Learn how to build with private data using LlamaIndex, guided by one of its co-founders
