Recent progress in LLMs has spurred interest in their mathematical reasoning skills, especially with the GSM8K benchmark, which assesses grade-school-level math abilities. While LLMs have shown improved performance on GSM8K, doubts remain about whether their reasoning abilities have truly advanced, as current metrics may only partially capture their capabilities. Research suggests that LLMs rely on probabilistic pattern matching rather than genuine logical reasoning, leading to token bias and sensitivity to small input changes. Furthermore, GSM8K’s static nature and reliance on a single metric limit its effectiveness in evaluating LLMs’ reasoning abilities under varied conditions.

Logical reasoning is essential for intelligent systems, but its consistency in LLMs remains to be determined. While some research shows LLMs can handle tasks using probabilistic pattern-matching, they often need more formal reasoning, as changes in input tokens can significantly alter results. While effective in some cases, transformers need more expressiveness for complex tasks if supported by external memory, like scratchpads. Studies suggest that LLMs rely on matching data seen during training rather than relying on true logical understanding.Â

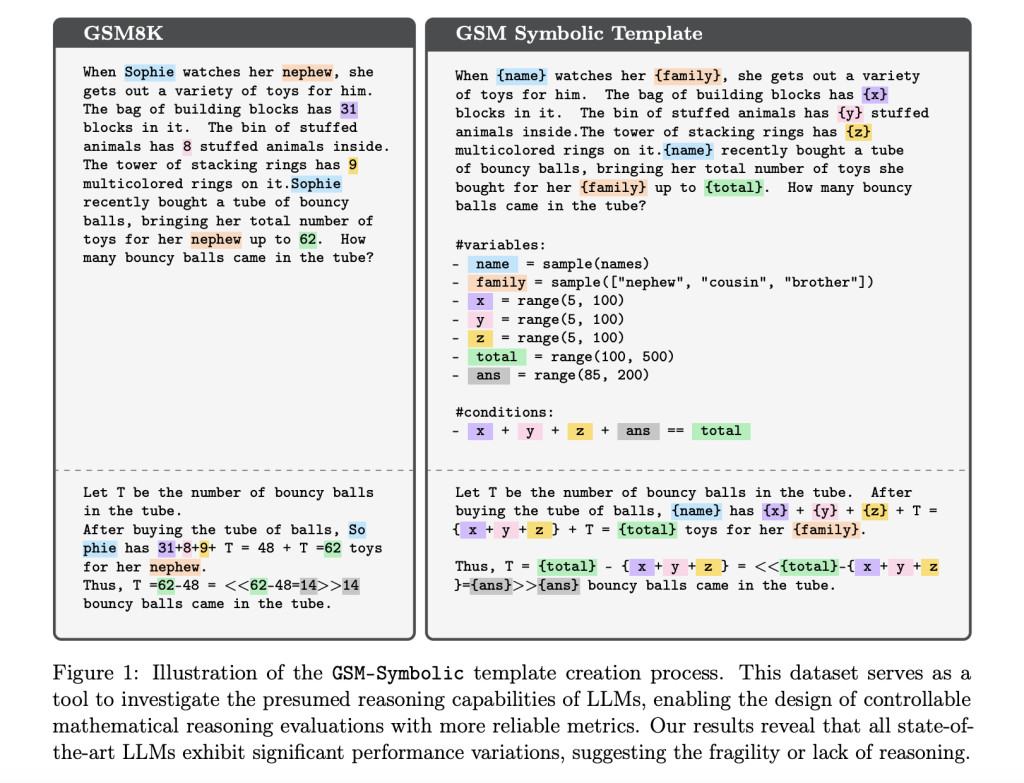

Researchers from Apple conducted a large-scale study to evaluate the reasoning capabilities of state-of-the-art LLMs using a new benchmark called GSM-Symbolic. This benchmark generates diverse mathematical questions through symbolic templates, allowing for more reliable and controllable evaluations. Their findings show that LLM performance declines significantly when numerical values or question complexity increases. Additionally, adding irrelevant but seemingly related information leads to a performance drop of up to 65%, indicating that LLMs rely on pattern matching rather than formal reasoning. The study highlights the need for improved evaluation methods and further research into LLM reasoning abilities.

The GSM8K dataset consists of over 8000 grade-school-level math questions and answers commonly used for evaluating LLMs. However, risks like data contamination and performance variance with minor question changes have arisen due to its popularity. To address this, GSM-Symbolic was developed, generating diverse problem instances using symbolic templates. This approach enables a more robust evaluation of LLMs, offering better control over question difficulty and testing the models’ capabilities across multiple variations. The benchmark evaluates over 20 open and closed models using 5000 samples from 100 templates, revealing insights into LLMs’ mathematical reasoning abilities and limitations.

Initial experiments reveal significant performance variability across models on GSM-Symbolic, a variant of the GSM8K dataset, with lower accuracy than reported on GSM8K. The study further explores how changing names versus altering values affects LLMs, showing that value changes significantly degrade performance. Question difficulty also impacts accuracy, with more complex questions leading to greater performance declines. The results suggest that models might rely on pattern matching rather than genuine reasoning, as additional clauses often reduce their performance.

The study examined the reasoning capabilities of LLMs and highlighted limitations in current GSM8K evaluations. A new benchmark, GSM-Symbolic, was introduced to assess LLMs’ mathematical reasoning with multiple question variations. Results revealed significant performance variability, especially when altering numerical values or adding irrelevant clauses. LLMs also needed help with increased question complexity, suggesting they rely more on pattern matching than true reasoning. GSM-NoOp further exposed LLMs’ inability to filter irrelevant information, resulting in large performance drops. Overall, this research emphasizes the need for further development to enhance LLMs’ logical reasoning abilities.

Check out the Paper. All credit for this research goes to the researchers of this project. Also, don’t forget to follow us on Twitter and join our Telegram Channel and LinkedIn Group. If you like our work, you will love our newsletter.. Don’t Forget to join our 50k+ ML SubReddit

[Upcoming Event- Oct 17 202] RetrieveX – The GenAI Data Retrieval Conference (Promoted)

The post Apple Researchers Introduce GSM-Symbolic: A Novel Machine Learning Benchmark with Multiple Variants Designed to Provide Deeper Insights into the Mathematical Reasoning Abilities of LLMs appeared first on MarkTechPost.

Source: Read MoreÂ