Traffic forecasting is a fundamental part of smart city management, essential for improving transportation planning and resource allocation. Deep learning has made it possible to model complex spatiotemporal patterns in traffic data effectively. Real-world deployments remain challenging, however, because these systems are large: they typically encompass thousands of interconnected sensors distributed over vast geographical areas. Graph neural networks (GNNs) and Transformer-based architectures have been widely adopted in traffic forecasting because they capture spatial and temporal dependencies. As networks grow, though, their computational demands rise steeply (standard self-attention scales quadratically with the number of sensors), which makes these methods difficult to apply to extensive networks like the California road system.

One of the most pressing issues with existing models is their inability to handle large-scale road networks efficiently. Popular benchmarks such as the PEMS series and METR-LA contain relatively few nodes, which standard models can manage. These datasets do not represent the complexity of real-world traffic systems such as California’s Caltrans Performance Measurement System, which comprises nearly 20,000 active sensors. The central challenge is maintaining computational efficiency while modeling both local and global patterns within such a large network. Until that is solved, the high memory usage and long computation times of current models will continue to hinder their scalability and deployment in practice.
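
To make the scaling problem concrete, the short calculation below estimates the memory needed to hold a single dense sensor-by-sensor attention matrix. It is a back-of-the-envelope sketch, not a measurement from the paper: the helper name `attention_matrix_bytes` and the choice of 4-byte (float32) values are assumptions for illustration, and 207 is the sensor count of the public METR-LA benchmark.

```python
def attention_matrix_bytes(num_nodes: int, bytes_per_value: int = 4) -> int:
    """Bytes needed to store one dense node-by-node attention matrix."""
    return num_nodes * num_nodes * bytes_per_value


# Compare a small benchmark with a network of Caltrans PeMS scale.
for name, n in [("METR-LA (207 sensors)", 207), ("~20,000 sensors", 20_000)]:
    mib = attention_matrix_bytes(n) / 2**20
    print(f"{name}: {mib:,.1f} MiB per attention matrix")
```

At 20,000 sensors a single float32 attention matrix already takes about 1.6 GB, and a real model holds one per head, per layer, and per sample in the batch, which is why quadratic attention becomes the bottleneck at this scale.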

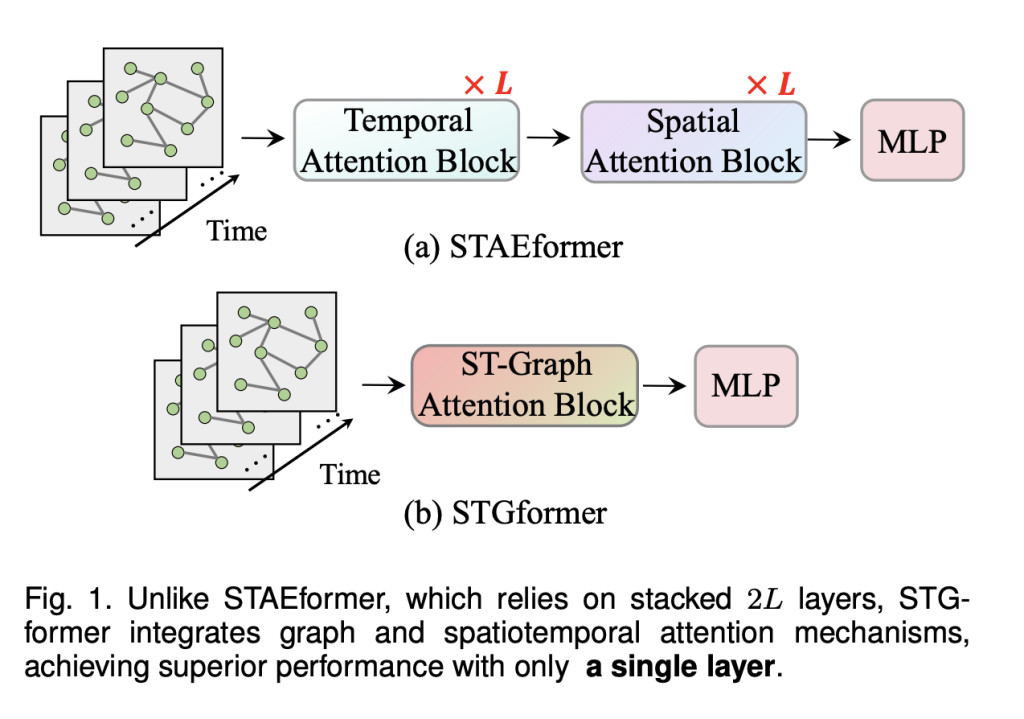

Several approaches have been introduced to tackle these limitations by combining GNNs and Transformer-based models to leverage the strengths of both. Spatiotemporal attention-based methods like STAEformer capture high-order spatiotemporal interactions by stacking multiple layers. While these models improve performance on small- to medium-sized datasets, their computational overhead makes them impractical for large-scale networks. Consequently, there is a need for architectures that balance model complexity against computational cost while still delivering accurate traffic predictions across varied scenarios.

Researchers from the SUSTech-UTokyo Joint Research Center on Super Smart City, Southern University of Science and Technology (SUSTech), Jilin University, and the University of Tokyo developed STGformer, a model that integrates spatiotemporal attention mechanisms within a graph structure and is designed for highly efficient traffic forecasting. Its key innovation is an architecture that combines graph-based convolutions with Transformer-like attention blocks in a single layer. This integration retains the expressive power of Transformers while significantly reducing computational cost. Unlike methods that stack multiple attention layers, STGformer captures high-order spatiotemporal interactions in a single attention block. On the LargeST benchmark, this design yields a 100x speedup and a 99.8% reduction in GPU memory usage compared to STAEformer.
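
One way to see how graph operations can supply high-order spatial context without stacking attention layers is repeated propagation over the road graph. The sketch below is an illustration under stated assumptions, not the authors' implementation: the function names, the row normalization with self-loops, and the four-sensor toy graph are all hypothetical choices.

```python
import numpy as np


def normalize_adjacency(adj: np.ndarray) -> np.ndarray:
    """Add self-loops, then row-normalize so each row sums to 1."""
    adj = adj + np.eye(adj.shape[0])
    return adj / adj.sum(axis=1, keepdims=True)


def multi_hop_features(x: np.ndarray, adj: np.ndarray, num_hops: int = 2) -> np.ndarray:
    """Return the stack [X, AX, A^2 X, ...] of features mixed over 0..num_hops hops."""
    a_norm = normalize_adjacency(adj)
    feats = [x]
    for _ in range(num_hops):
        # Each multiplication spreads information one hop further.
        feats.append(a_norm @ feats[-1])
    return np.stack(feats)  # shape: (num_hops + 1, num_nodes, dim)


# Toy road graph (assumed): four sensors in a line, 0-1-2-3.
adj = np.array(
    [[0, 1, 0, 0],
     [1, 0, 1, 0],
     [0, 1, 0, 1],
     [0, 0, 1, 0]],
    dtype=float,
)
x = np.eye(4)  # one-hot features make the spread between sensors visible
hops = multi_hop_features(x, adj, num_hops=2)
print(hops[1][0])  # sensor 0 after one hop: no contribution from sensor 2
print(hops[2][0])  # sensor 0 after two hops: sensor 2 now contributes
```

Because each propagation step is a matrix product with the (typically sparse) adjacency, features covering several hops can be prepared cheaply and then passed to a single attention block.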

The researchers implemented a spatiotemporal graph attention module that processes the spatial and temporal dimensions as a unified entity. The design reduces computational complexity by adopting a linear attention mechanism, which replaces the standard softmax operation with an efficient weighting function. The method was evaluated on multiple large-scale datasets, including the San Diego and Bay Area datasets, where STGformer outperformed state-of-the-art models. On the San Diego dataset, it achieved a 3.61% improvement in Mean Absolute Error (MAE) and a 6.73% reduction in Mean Absolute Percentage Error (MAPE) compared to the previous best models. Similar trends were observed on the other datasets, highlighting the model’s robustness and adaptability across diverse traffic scenarios.
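
The idea behind linear attention can be sketched in a few lines of NumPy. This is a generic kernelized-attention example, not STGformer's exact formulation: the article does not name the weighting function, so the `elu(x) + 1` feature map used here (a common choice in the linear-attention literature) is an assumption, as are all function names.

```python
import numpy as np


def softmax_attention(q, k, v):
    """Standard attention: builds an (n, n) score matrix, cost O(n^2 * d)."""
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v


def feature_map(x):
    """elu(x) + 1, a positive feature map (assumed choice, not from the paper)."""
    return np.where(x > 0, x + 1.0, np.exp(x))


def linear_attention(q, k, v):
    """Kernelized attention: never forms the (n, n) matrix, cost O(n * d^2)."""
    q, k = feature_map(q), feature_map(k)
    kv = k.T @ v            # (d, d_v) summary of all keys and values
    z = q @ k.sum(axis=0)   # (n,) per-query normalizer
    return (q @ kv) / z[:, None]


def linear_attention_quadratic(q, k, v):
    """Same weights as linear_attention, computed the slow O(n^2) way as a check."""
    q, k = feature_map(q), feature_map(k)
    weights = q @ k.T
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v


rng = np.random.default_rng(0)
n, d = 50, 8  # 50 tokens (e.g. sensors), 8 feature dimensions
q, k, v = (rng.standard_normal((n, d)) for _ in range(3))

fast = linear_attention(q, k, v)
slow = linear_attention_quadratic(q, k, v)
print(np.allclose(fast, slow))
```

The two linear variants produce the same output because matrix multiplication is associative: computing `k.T @ v` first yields a small `d x d` summary, so the cost grows linearly with the number of tokens instead of quadratically. The result differs from `softmax_attention`, since the weighting function itself has changed.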

STGformer’s architecture provides a breakthrough in traffic forecasting by making it feasible to deploy models on real-world, large-scale traffic networks without compromising performance or efficiency. When tested on the California road network, the model demonstrated remarkable efficiency by completing batch inference 100 times faster than STAEformer and using only 0.2% of the memory resources. These improvements make STGformer a suitable foundation for future research and development in spatiotemporal modeling. Its generalization capabilities were further validated through cross-year scenario tests, where the model maintained high accuracy even when applied to unseen data from the following year.

Key Takeaways from the research:

Computational Efficiency: Compared to STAEformer, STGformer achieves a 100x speedup and a 99.8% reduction in GPU memory usage.

Scalability: The model can handle real-world networks with up to 20,000 sensors, overcoming the limitations of existing models that fail at large-scale deployments.

Performance Gains: STGformer achieved a 3.61% improvement in MAE and a 6.73% reduction in MAPE on the San Diego dataset, outperforming state-of-the-art models.

Generalization Capability: Demonstrated robust performance across different datasets and maintained accuracy in cross-year testing, showcasing adaptability to changing traffic conditions.

Novel Architecture: Integrating spatiotemporal graph attention with linear attention mechanisms allows STGformer to capture local and global traffic patterns efficiently.
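
For readers less familiar with the metrics cited above, the following sketch shows how MAE and MAPE are commonly computed and how a percentage gain over a baseline can be derived. The sample values are made up for illustration, the helper names are hypothetical, and reading the reported percentages as relative improvements over the previous best model is an assumption about how the figures were calculated.

```python
def mae(y_true, y_pred):
    """Mean Absolute Error: average absolute gap between truth and prediction."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mape(y_true, y_pred):
    """Mean Absolute Percentage Error in percent, skipping zero targets."""
    pairs = [(t, p) for t, p in zip(y_true, y_pred) if t != 0]
    return 100.0 * sum(abs(t - p) / abs(t) for t, p in pairs) / len(pairs)


def relative_improvement(baseline, new):
    """Percentage by which an error metric dropped relative to a baseline."""
    return 100.0 * (baseline - new) / baseline


# Made-up traffic flow readings and forecasts (vehicles per interval).
y_true = [100, 200, 400]
y_pred = [110, 190, 380]

print(f"MAE:  {mae(y_true, y_pred):.2f}")
print(f"MAPE: {mape(y_true, y_pred):.2f}%")
print(f"Gain: {relative_improvement(20.0, 19.0):.2f}%")
```

Zero targets are skipped in the MAPE helper because the percentage error is undefined there; traffic datasets often contain zero or missing readings, so some form of masking is usually needed.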

In conclusion, STGformer presents a highly efficient and scalable solution for traffic forecasting on large-scale road networks. By addressing the limitations of existing GNN and Transformer-based methods, it enables more effective resource allocation and transportation planning in smart city management. Its ability to handle high-dimensional spatiotemporal data with minimal computational resources makes it a strong candidate for deployment in real-world traffic forecasting applications. The results across multiple datasets and benchmarks point to its potential to become a standard tool in urban computing.

Check out the Paper. All credit for this research goes to the researchers of this project.

The post STGformer: A Spatiotemporal Graph Transformer Achieving Unmatched Computational Efficiency and Performance in Large-Scale Traffic Forecasting Applications appeared first on MarkTechPost.
