Large Language Models (LLMs) have gained prominence in deep learning, demonstrating exceptional capabilities across domains such as assistance, code generation, healthcare, and theorem proving. Training an LLM typically involves two stages: pretraining on massive corpora, followed by an alignment step that uses Reinforcement Learning from Human Feedback (RLHF). However, LLMs struggle to consistently generate appropriate content. Despite their effectiveness across many tasks, these models are prone to producing offensive or inappropriate content, including hate speech, malware, fake information, and social biases. This vulnerability stems from the unavoidable presence of harmful material in their pretraining datasets. Alignment, which is crucial for addressing these issues, is not universally applicable: what counts as appropriate depends on the specific use case and on user preferences, which makes it a complex challenge for researchers.

Researchers have made significant efforts to improve LLM safety through alignment techniques, including supervised fine-tuning, red teaming, and refinements to the RLHF process. However, these attempts have led to an ongoing cycle in which increasingly sophisticated alignment methods are met by ever more inventive “jailbreaking” attacks. Existing jailbreaking approaches fall into three main categories: baseline methods, LLM automation and suffix-based attacks, and manipulation of the decoding process. Baseline techniques such as AutoPrompt and ARCA optimize tokens to elicit harmful content, while LLM automation methods such as AutoDAN and GPTFuzzer use genetic algorithms to craft plausible jailbreaking prompts. Suffix-based attacks such as GCG focus on improving interpretability. Despite these efforts, current methods struggle with semantic plausibility and cross-architecture applicability. The lack of a principled, universal defense against jailbreaking and the limited theoretical understanding of the phenomenon remain significant challenges for LLM safety.

Researchers from NYU and Meta AI (FAIR) introduce a theoretical framework for analyzing LLM pretraining and jailbreaking vulnerabilities. By decoupling input prompts and representing outputs as longer text fragments, the researchers quantify adversary strength and model behavior. They derive a PAC-Bayesian generalization bound for pretraining, which suggests that well-performing models will inevitably produce some harmful outputs. The framework further shows that jailbreaking cannot be fully prevented, even after safety alignment. Having identified a key drawback in the RL fine-tuning objective, the researchers propose methods to train safer, more resilient models without compromising performance. The approach offers new insights into LLM safety and points to potential improvements in alignment techniques.
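The article does not reproduce the paper's bound. For orientation only, a standard PAC-Bayesian bound (McAllester's bound in the form refined by Maurer) is shown below. It assumes a loss bounded in [0, 1], a prior P fixed before seeing the n ≥ 8 training samples S, any posterior Q over models, and a confidence level 1 − δ. The paper's pretraining bound is specialized to its own setting and may differ in form.

$$
\Pr_{S \sim \mathcal{D}^n}\!\left[\ \forall Q:\ \mathbb{E}_{h \sim Q}\big[L(h)\big] \;\le\; \mathbb{E}_{h \sim Q}\big[\hat{L}_S(h)\big] + \sqrt{\frac{\mathrm{KL}(Q \,\|\, P) + \ln\frac{2\sqrt{n}}{\delta}}{2n}}\ \right] \;\ge\; 1 - \delta
$$

The KL term penalizes posteriors that drift far from the prior. A bound is called non-vacuous when its right-hand side is small enough to be informative, for example below the trivial value of 1 for a [0, 1] loss.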

The researchers present a comprehensive theoretical framework for analyzing language model jailbreaking vulnerabilities, modeling prompts as query-concept tuples and LLMs as generators of longer text fragments called explanations. They introduce key assumptions, define notions of harmfulness, and present a non-vacuous PAC-Bayesian generalization bound for pretraining language models. This bound implies that well-trained LMs may exhibit harmful behavior if they were exposed to such content during training. Building on these theoretical insights, the researchers propose E-RLHF (Expanded Reinforcement Learning from Human Feedback), an approach to improve language model alignment and reduce jailbreaking vulnerabilities. E-RLHF modifies the standard RLHF objective by expanding the safety zone in the output distribution: in the KL-divergence term of the objective function, harmful prompts are replaced with safety-transformed versions. This change aims to increase the share of safe explanations in the model’s output for harmful prompts without affecting performance on non-harmful ones. The approach can also be integrated into the Direct Preference Optimization (DPO) objective, eliminating the need for an explicit reward model.
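As we read the description above, E-RLHF replaces the reference distribution π_ref(· | x) in the KL term with π_ref(· | x_s), where x_s is the safety-transformed prompt (and x_s = x for non-harmful prompts): maximize E[r(x, y)] − β·KL(π(· | x) ‖ π_ref(· | x_s)). The Python sketch below carries the same substitution into a per-pair DPO loss. It is a minimal illustration under stated assumptions, not the authors' implementation: `SAFETY_PREFIX`, `safety_transform`, `e_dpo_loss`, the `is_harmful` flag, and the toy log-probability functions are all hypothetical. A real setup would compute summed token log-probabilities with the actual policy and reference models.

```python
import math
from typing import Callable

# Hypothetical safety prefix used to build x_s; the paper's actual transform may differ.
SAFETY_PREFIX = "Provide a safe and responsible answer. "

# (prompt, response) -> summed token log-probability of response given prompt
LogProbFn = Callable[[str, str], float]


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def safety_transform(prompt: str, is_harmful: bool) -> str:
    """Map a harmful prompt x to its (hypothetical) safety-transformed version x_s."""
    return SAFETY_PREFIX + prompt if is_harmful else prompt


def e_dpo_loss(
    policy_logp: LogProbFn,
    ref_logp: LogProbFn,
    prompt: str,
    chosen: str,
    rejected: str,
    is_harmful: bool,
    beta: float = 0.1,
) -> float:
    """DPO loss for one preference pair, with the reference conditioned on x_s."""
    # The policy is always scored on the original prompt x.
    pi_w = policy_logp(prompt, chosen)
    pi_l = policy_logp(prompt, rejected)
    # The reference is scored on x_s for harmful prompts (on x otherwise).
    ref_prompt = safety_transform(prompt, is_harmful)
    ref_w = ref_logp(ref_prompt, chosen)
    ref_l = ref_logp(ref_prompt, rejected)
    # Implicit-reward margin, exactly as in standard DPO.
    margin = beta * ((pi_w - ref_w) - (pi_l - ref_l))
    return -log_sigmoid(margin)


# Toy log-prob functions with made-up numbers, for illustration only.
def toy_policy(prompt: str, response: str) -> float:
    return -5.0 if response == "refusal" else -6.0


def toy_ref(prompt: str, response: str) -> float:
    # With the safety prefix, the toy reference strongly prefers refusing.
    if prompt.startswith(SAFETY_PREFIX):
        return -2.0 if response == "refusal" else -8.0
    return -6.0 if response == "refusal" else -4.0


if __name__ == "__main__":
    for harmful in (False, True):
        loss = e_dpo_loss(toy_policy, toy_ref, "some request", "refusal", "compliance", harmful)
        print(f"is_harmful={harmful}: loss={loss:.4f}")
```

Scoring the same pair with and without the transform shows the intended effect: the toy reference favors refusals once the safety prefix is present, so the loss with the transform is larger (about 0.97 versus 0.55), and training would push the policy further toward the safe response.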

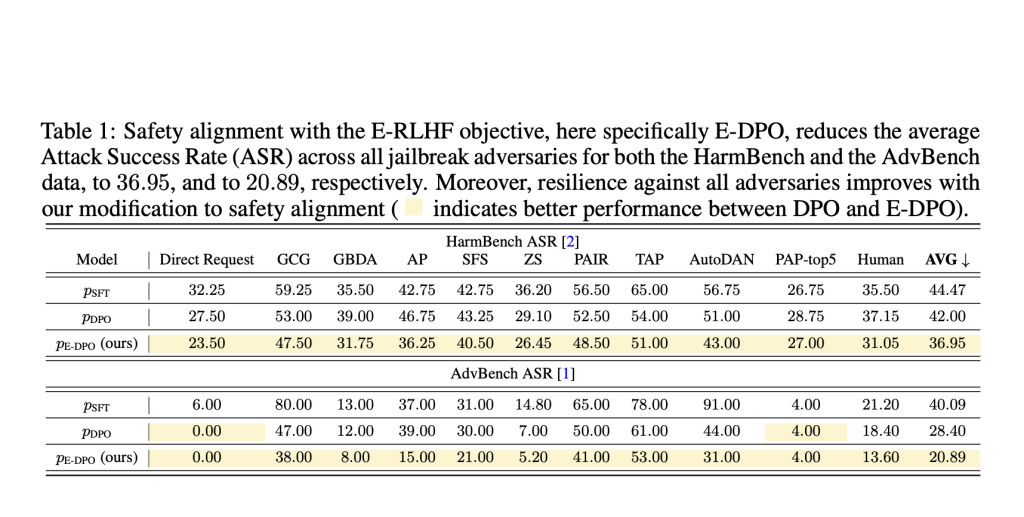

The researchers ran their experiments using the alignment handbook code base and a publicly available SFT model. They evaluated their proposed E-DPO method (E-RLHF applied to DPO) on the HarmBench and AdvBench datasets, measuring safety alignment against a range of jailbreak adversaries. E-DPO reduced the average Attack Success Rate (ASR) across all adversaries on both datasets, to 36.95% on HarmBench and 20.89% on AdvBench, an improvement over standard DPO. The study also assessed helpfulness with MT-Bench, where E-DPO scored 6.6, surpassing the SFT model’s score of 6.3. The researchers concluded that E-DPO enhances safety alignment without sacrificing model helpfulness, and that it can be combined with system prompts for further safety improvements.
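To make the reported metric concrete, the sketch below computes an average ASR. It assumes that each adversary's ASR is the fraction of attacked prompts a judge flags as a successful jailbreak, and that the "average across all adversaries" is an unweighted mean of those per-adversary rates; the paper's exact aggregation and judging procedure may differ. The adversary names and judgments are made up and do not reproduce the reported numbers.

```python
from statistics import mean
from typing import Dict, List


def attack_success_rate(judgments: List[bool]) -> float:
    """Fraction of attacked prompts whose responses a judge flagged as harmful."""
    if not judgments:
        raise ValueError("need at least one judgment")
    return sum(judgments) / len(judgments)


def average_asr(results: Dict[str, List[bool]]) -> float:
    """Unweighted mean of per-adversary ASRs, expressed as a percentage (assumed aggregation)."""
    return 100.0 * mean(attack_success_rate(j) for j in results.values())


# Made-up judgments for two hypothetical adversaries (True = jailbreak succeeded).
toy_results = {
    "adversary_a": [True, False, False, True],
    "adversary_b": [False, False, False, True],
}

if __name__ == "__main__":
    for name, judgments in toy_results.items():
        print(name, attack_success_rate(judgments))
    print("average ASR (%):", average_asr(toy_results))
```

An unweighted mean treats every adversary equally regardless of how many prompts it was run on; weighting by prompt count would be an equally plausible alternative if adversaries were evaluated on different prompt sets.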

This study presented a theoretical framework for language model pretraining and jailbreaking, focusing on dissecting input prompts into query and concept pairs. Their analysis yielded two key theoretical results: first, language models can mimic the world after pretraining, leading to harmful outputs for harmful prompts; and second, jailbreaking is inevitable due to alignment challenges. Guided by these insights, the team developed a simple yet effective technique to enhance safety alignment. Their experiments demonstrated improved resilience to jailbreak attacks using this new methodology, contributing to the ongoing efforts to create safer and more robust language models.

The post Meta AI and NYU Researchers Propose E-RLHF to Combat LLM Jailbreaking appeared first on MarkTechPost.