Transformers are a groundbreaking innovation in AI, particularly in natural language processing and machine learning. Despite their pervasive use, the internal mechanics of Transformers remain a mystery to many, especially those who lack a deep technical background in machine learning. Understanding how these models work is crucial for anyone looking to engage with AI on a meaningful level, yet the complexity of the technology presents a significant barrier to entry.

The problem is that while Transformers are becoming more embedded in various applications, the steep learning curve of understanding their inner workings leaves many potential learners alienated. Existing educational resources, such as detailed blog posts and video tutorials, often delve into the mathematical underpinnings of these models, which can be overwhelming for beginners. These resources typically focus on the intricate details of neuron interactions and layer operations within the models, which are not easily digestible for those new to the field.

Existing methods and tools designed to educate users about Transformers tend either to oversimplify the concepts or to be so technical that they demand significant computational resources. For instance, visualization tools that aim to demystify the workings of AI models are available, but they often require installing specialized software or using advanced hardware, which limits their accessibility, and they generally lack interactivity. This disconnect between the complexity of the models and the simplicity required for effective learning has created a significant gap in the educational resources available to those interested in AI.

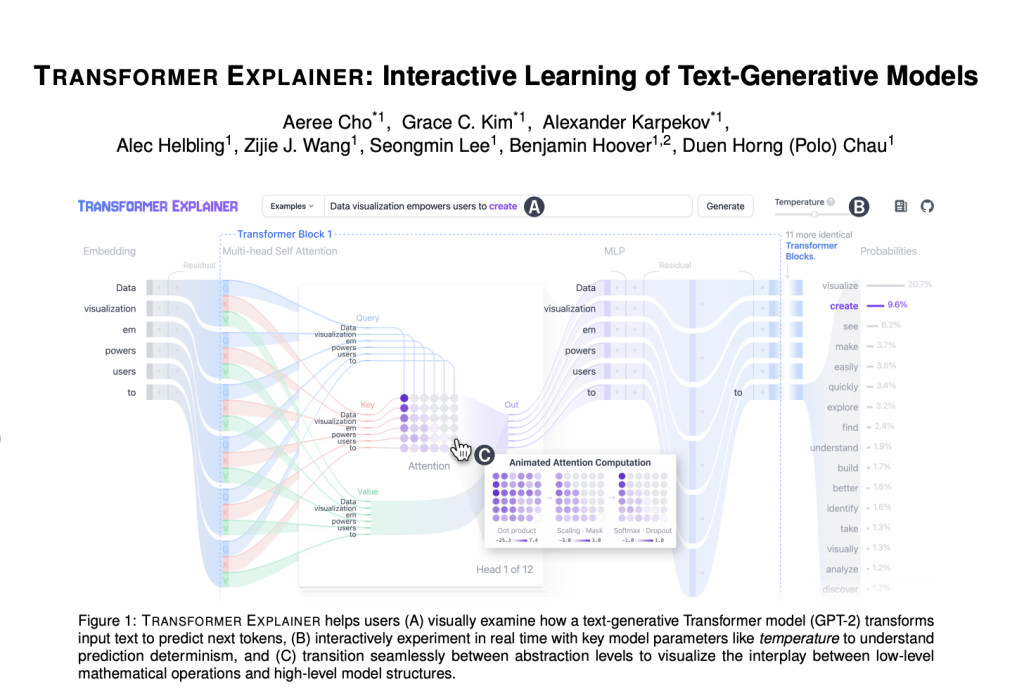

Researchers from Georgia Tech and IBM Research have introduced a tool called Transformer Explainer, designed to make learning about Transformers more intuitive and accessible. Transformer Explainer is an open-source, web-based platform that lets users interact directly with a live GPT-2 model in their web browsers. By eliminating the need for additional software or specialized hardware, the tool lowers the barriers to entry for those interested in understanding AI. Its design focuses on enabling users to explore and visualize the internal processes of the Transformer model in real time.

Transformer Explainer offers a detailed breakdown of how text is processed within a Transformer model. The tool uses a Sankey diagram to visualize the flow of information through the model’s various components. This visualization helps users understand how input text is transformed step by step until the model predicts the next token. A key feature is that users can adjust parameters such as temperature, which reshapes the probability distribution over the predicted tokens: lower values concentrate probability on the most likely tokens, while higher values spread it more evenly. Because the tool runs entirely within the browser, using frameworks like Svelte and D3, it offers a seamless and accessible user experience.
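The effect of temperature can be illustrated with a minimal sketch. It assumes the common formulation in which the model's raw scores (logits) are divided by the temperature before a softmax is applied. The function name and the example logits are hypothetical and are not taken from the Transformer Explainer code.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Turn raw scores into probabilities after scaling by a temperature.

    Assumption: temperature divides the logits before the softmax.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    # Subtract the maximum for numerical stability; the result is unchanged.
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for three candidate next tokens.
example_logits = [2.0, 1.0, 0.1]

for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(example_logits, t)
    print(f"temperature={t}: {[round(p, 3) for p in probs]}")
```

Running the loop should show the top token's probability shrinking as the temperature rises, which matches the behavior a user would expect to observe when moving a temperature control.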

Transformer Explainer integrates a live GPT-2 model that runs locally in the user’s browser, so users see the effects of their adjustments in real time. This immediate feedback is crucial for understanding how different aspects of the model interact. The tool’s design also incorporates multiple levels of abstraction, enabling users to begin with a high-level overview and gradually delve into more detailed aspects of the model as needed.

In conclusion, Transformer Explainer bridges the gap between the complexity of Transformer models and the need for accessible educational tools. By allowing users to interact with a live GPT-2 model and visualize its processes in real time, the tool makes it easier for non-experts to understand how these powerful AI systems work. The ability to adjust model parameters and see the effects immediately enhances learning and engagement.

Check out the Paper and Details. All credit for this research goes to the researchers of this project.

The post Transformer Explainer: An Innovative Web-Based Tool for Interactive Learning and Visualization of Complex AI Models for Non-Experts appeared first on MarkTechPost.
