When ChatGPT gives you the right answer to your prompt, does it reason through the request or simply remember the answer from its training data?

MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) researchers designed a series of tests to see if AI models “think†or just have good memories.

When you prompt an AI model to solve a math problem like “What is 27+62?†it comes back quickly with the correct answer: 89. How could we tell if it understands the underlying arithmetic or simply saw the problem in its training data?

In their paper, the researchers tested GPT-4, GPT-3.5 Turbo, Claude 1.3, and PaLM2 to see if they could “generalize not only to unseen instances of known tasks, but to new tasks.â€

They designed a series of 11 tasks that differed slightly from the standard tasks in which the LLMs generally perform well.

The LLMs should perform equally well with the “counterfactual tasks†if they employ general and transferable task-solving procedures.

If an LLM “understands†math then it should provide the correct answer to a math problem in base-10 and the seldom-used base-9, for example.

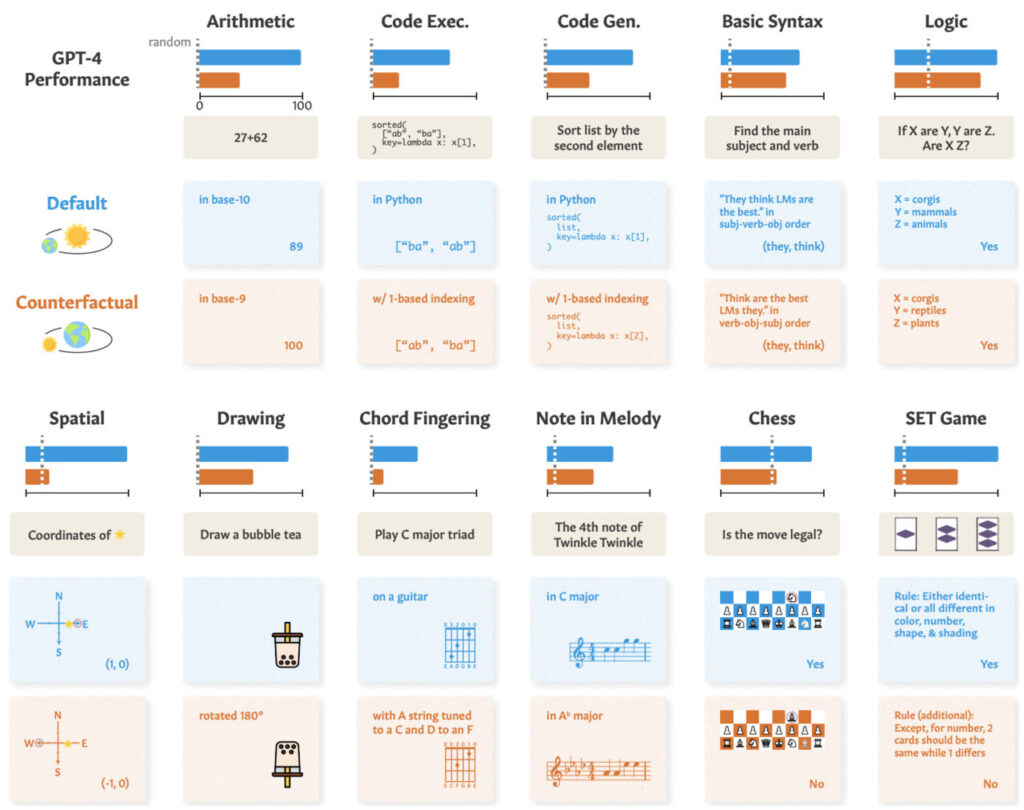

Here’s a look at examples of the tasks and GPT-4’s performance.

GPT-4’s performance with standard default tasks (Blue) and slightly altered counterfactual tasks (Orange). Examples of the tasks and correct answers are shown here. Source: arXiv

GPT-4’s performance in standard tests (blue line) is good, but its math, logic reasoning, spatial reasoning, and other abilities (orange line) degrade significantly when the task is slightly altered.

The other models displayed similar degradation with GPT-4 coming out on top.

Despite the degradation, the performance on counterfactual tasks was still better than chance. The AI models try to reason through these tasks but aren’t very good at it.

The results show that the impressive performance of AI models in tasks like college exams relies on excellent recall of training data, not reasoning. This further highlights that AI models can’t generalize to unseen tasks,

Zhaofeng Wu, an MIT PhD student in electrical engineering and computer science, CSAIL affiliate, and the lead author of the paper said, “We’ve uncovered a fascinating aspect of large language models: they excel in familiar scenarios, almost like a well-worn path, but struggle when the terrain gets unfamiliar. This insight is crucial as we strive to enhance these models’ adaptability and broaden their application horizons.â€

We saw a similar demonstration of this inability to generalize when we explored how bad AI models are at solving a simplified river crossing puzzle.

The researchers concluded that when developers analyze their models, they should “consider abstract task ability as detached from observed task performance.â€

The “train-to-test†approach may move a model up the benchmarks but doesn’t offer a true measure of how the model will fare when presented with a new task to reason through.

The researchers suggest that part of the problem is that these models are trained only on surface form text.

If LLMs are exposed to more real-world contextualized data and semantic representation they might be able to generalize when presented with task variations.

The post AI model performance: Is it reasoning or simply reciting? appeared first on DailyAI.

Source: Read MoreÂ