Natural language processing (NLP) teaches computers to understand, interpret, and generate human language. Researchers in this field are particularly focused on improving the reasoning capabilities of language models to solve complex tasks effectively. This involves enhancing models’ abilities to process and generate text that requires logical steps and coherent thought processes.

A significant challenge in NLP is enabling language models to solve reasoning tasks accurately and efficiently. Traditional approaches often have the model generate explicit intermediate steps. These steps improve accuracy, but they are computationally expensive because each one must be generated as additional tokens at inference time, and they may not fully leverage the models’ potential. The central issue is finding a way to internalize these reasoning processes within the models to maintain high accuracy while reducing computational overhead.

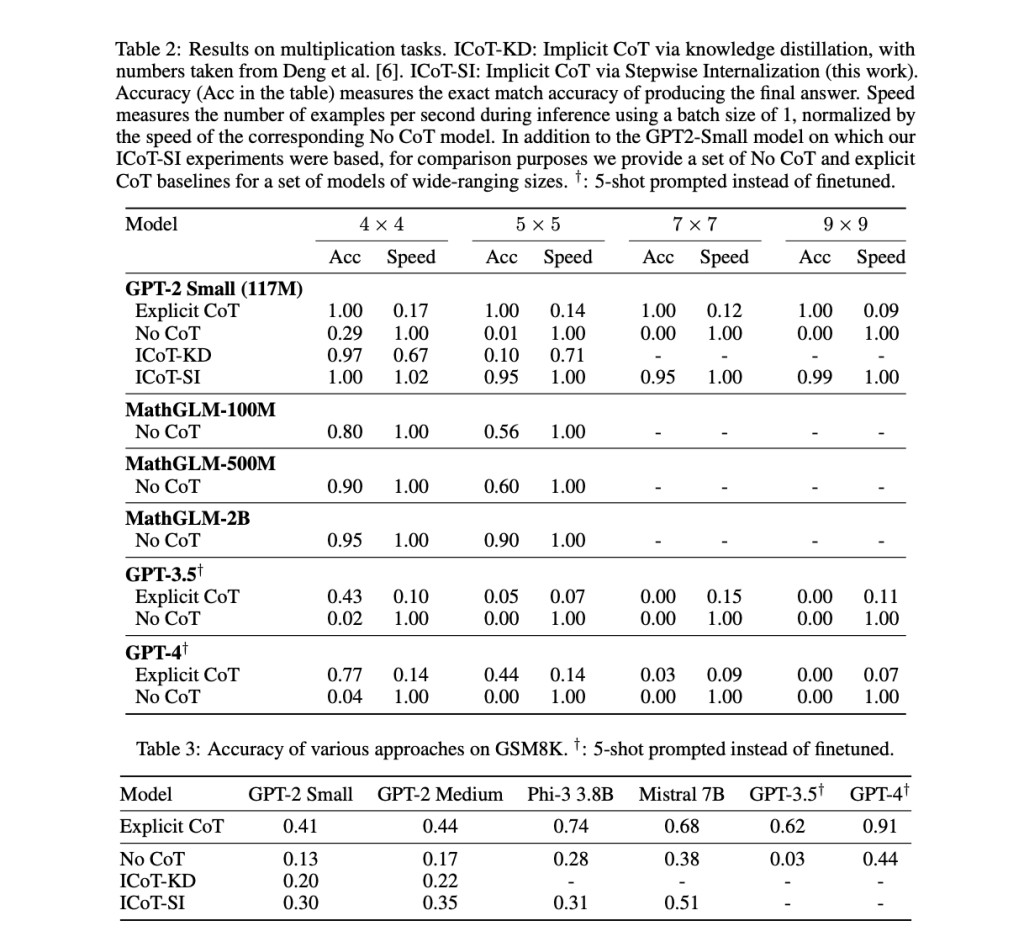

Existing work includes explicit chain-of-thought (CoT) reasoning, which generates intermediate reasoning steps to improve accuracy but demands substantial computational resources. Implicit CoT via knowledge distillation (ICoT-KD) trains models to reason in their hidden states rather than through explicit steps. Methods like MathGLM solve multi-digit arithmetic tasks without intermediate steps, achieving high accuracy with large models. Another approach, Searchformer, trains transformers to perform searches with fewer steps. All of these methods aim to improve the efficiency and accuracy of reasoning in language models.

Researchers from the Allen Institute for Artificial Intelligence, the University of Waterloo, the University of Washington, and Harvard University have introduced Stepwise Internalization to address this inefficiency. The method starts with a model trained for explicit CoT reasoning and then gradually removes the intermediate steps while fine-tuning the model. Removing CoT tokens gradually during training pushes the model to carry out those steps within its hidden states, so it achieves implicit CoT reasoning without generating intermediate steps while preserving performance.

Stepwise Internalization uses a staged training process. Initially, a language model is trained using explicit CoT reasoning, which generates intermediate steps to reach the final answer. As training progresses, these intermediate steps are incrementally removed. At each stage, the model is fine-tuned to adapt to the absence of the removed steps, which encourages it to internalize the reasoning process within its hidden states. The method uses a linear schedule to remove CoT tokens, so the model adapts to the changes gradually rather than all at once. This systematic removal and fine-tuning process enables the model to handle complex reasoning tasks more efficiently.
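The linear removal schedule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' implementation: the function names, the parameters `steps_per_stage` and `tokens_per_stage`, the choice to drop tokens from the front of the chain of thought, and the toy token sequence are all hypothetical.

```python
def tokens_to_remove(step: int, steps_per_stage: int,
                     tokens_per_stage: int, cot_len: int) -> int:
    """Number of CoT tokens dropped at a given training step.

    Assumption: the count grows linearly with the step index and is
    capped at the length of the chain of thought.
    """
    removed = (step * tokens_per_stage) // steps_per_stage
    return min(removed, cot_len)


def build_target(cot_tokens, answer_tokens, n_removed):
    """Training target with the first n_removed CoT tokens dropped.

    Assumption: tokens are removed from the front of the CoT; the
    final answer is always kept.
    """
    return list(cot_tokens[n_removed:]) + list(answer_tokens)


if __name__ == "__main__":
    # Toy example: a made-up chain of thought followed by the answer.
    cot = ["12", "*", "3", "=", "30", "+", "6"]
    answer = ["####", "36"]
    for step in (0, 2, 4, 8):
        n = tokens_to_remove(step, steps_per_stage=2,
                             tokens_per_stage=2, cot_len=len(cot))
        print(step, n, build_target(cot, answer, n))
```

In a real fine-tuning loop, the model would be trained on the shortened target at every step, so that by the time the schedule reaches `cot_len` it is predicting the answer directly from the input.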

The proposed method delivers strong results across several tasks. For example, a GPT-2 Small model trained using Stepwise Internalization solved 9-by-9 multiplication problems with up to 99% accuracy, whereas models trained using standard methods struggled with tasks beyond 4-by-4 multiplication. The Mistral 7B model achieved over 50% accuracy on the GSM8K dataset, which consists of grade-school math problems, without producing any explicit intermediate steps. This surpasses the much larger GPT-4 model, which scored only 44% when prompted to generate the answer directly. Stepwise Internalization also brings significant computational savings: on tasks that otherwise require explicit CoT reasoning, the method is up to 11 times faster while maintaining high accuracy.

To conclude, this research highlights a promising approach to enhancing the reasoning capabilities of language models. By internalizing CoT steps, Stepwise Internalization offers a balance between accuracy and computational efficiency, and it could change how complex reasoning tasks are handled in NLP. The ability to internalize reasoning steps within hidden states could pave the way for more efficient and capable language models, making them more practical for various applications. The results suggest that further development and scaling could lead to even stronger performance.

The post From Explicit to Implicit: Stepwise Internalization Ushers in a New Era of Natural Language Processing Reasoning appeared first on MarkTechPost.
