The primary goal of Sign Language Production (SLP) is to generate human-like signing avatars from text input. Deep-learning-based SLP methods typically follow several steps. First, the text is translated into gloss, a written representation of signs that captures their postures and gestures. The gloss is then used to generate a video that mimics sign language. Finally, this video is further processed into more engaging avatar animations that look more like real people. Because each of these stages is complex, acquiring and processing sign language data is challenging.

Over the past decade, most studies on Sign Language Production, Recognition, and Translation (SLP, SLR, and SLT) have relied on PHOENIX14T, a German Sign Language (GSL) dataset, and on other lesser-known datasets, and have had to grapple with their limitations. These challenges, including the lack of standardized tools and slow progress in research on minority languages, have significantly dampened researchers’ enthusiasm. The difficulty is further underscored by the fact that studies using American Sign Language (ASL) datasets are still in their infancy.

The current mainstream datasets have enabled considerable progress in the field. Nevertheless, they fail to address several emerging problems:

- Existing datasets often ship files in complicated formats, such as images, scripts, OpenPose skeleton keypoints, graphs, and possibly other formats used for preprocessing. None of these forms is directly usable for training.
- Annotating glosses by hand is tedious and time-consuming.
- Sign videos obtained from sign language experts are transformed into many different forms, which makes expanding a dataset very difficult.

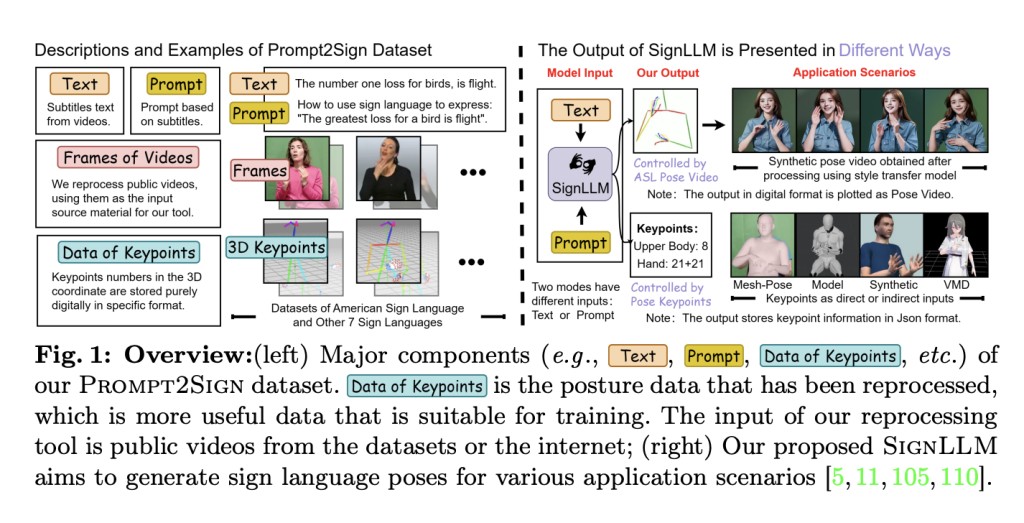

Researchers from Rutgers University, Australian National University, Data61/CSIRO, Carnegie Mellon University, University of Texas at Dallas, and University of Central Florida present Prompt2Sign, a groundbreaking large-scale dataset that tracks the upper-body movements of sign language demonstrators. It is the first comprehensive dataset to combine eight distinct sign languages, and it builds on publicly available online videos and datasets to address the shortcomings of earlier efforts, a significant step forward for multilingual sign language recognition and generation.

To construct this dataset, the researchers first use OpenPose, a pose estimation library, to extract posture information from video frames (the tool’s raw input) and standardize it into a preset format. Storing only the key information in this standardized format reduces redundancy and makes it easier to train seq2seq and text2text models. Next, to reduce cost, they automatically generate prompt words, decreasing the need for human annotation. Finally, to address the problems of manual preprocessing and data collection, they raise the tools’ level of automation, making them highly efficient and lightweight; this improves data processing without having to load additional models.
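
The exact layout of Prompt2Sign’s standardized format is not given here, so the following is only a minimal sketch of the idea. It reads one frame of OpenPose’s JSON output (the `people`, `pose_keypoints_2d`, `hand_left_keypoints_2d`, and `hand_right_keypoints_2d` fields), drops the confidence scores, and keeps the (x, y) coordinates of the upper body and both hands as one fixed-length vector. Treating BODY_25 indices 0-7 as the “upper body”, zero-padding missing detections, and the helper names `frame_to_vector` and `_xy` are assumptions for illustration.

```python
import json
from typing import Iterable, List

# Assumption: BODY_25 indices 0-7 (nose, neck, shoulders, elbows, wrists) form the "upper body"
UPPER_BODY_IDX = range(8)
HAND_POINTS = 21  # OpenPose's hand model outputs 21 keypoints per hand
FRAME_DIM = 2 * (len(UPPER_BODY_IDX) + 2 * HAND_POINTS)  # (x, y) per kept keypoint


def _xy(flat: List[float], indices: Iterable[int]) -> List[float]:
    """Keep (x, y) for each requested keypoint, dropping OpenPose's confidence score."""
    out: List[float] = []
    for i in indices:
        pair = flat[3 * i: 3 * i + 2]  # OpenPose stores x, y, confidence per keypoint
        out.extend(pair if len(pair) == 2 else [0.0, 0.0])  # pad missing points with zeros
    return out


def frame_to_vector(frame_json: str) -> List[float]:
    """Hypothetical helper: convert one OpenPose frame (JSON text) to a fixed-length vector."""
    people = json.loads(frame_json).get("people", [])
    if not people:
        return [0.0] * FRAME_DIM  # no signer detected in this frame
    person = people[0]  # assumption: a single signer per video
    return (
        _xy(person.get("pose_keypoints_2d", []), UPPER_BODY_IDX)
        + _xy(person.get("hand_left_keypoints_2d", []), range(HAND_POINTS))
        + _xy(person.get("hand_right_keypoints_2d", []), range(HAND_POINTS))
    )
```

A fixed length per frame (100 values in this sketch) lets a seq2seq model treat any video as a plain sequence of vectors; zero-padding absent detections is one simple convention among several.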

The team notes that existing models need adjustments, because the new dataset poses different obstacles during training. Because sign languages vary from country to country, current models cannot simply be trained on several sign language datasets simultaneously. Handling more languages and larger datasets makes training more complex and time-consuming, and makes downloading, storing, and loading data more painful, so faster training techniques are essential. Furthermore, current model structures cannot handle more languages or more complex, natural conversational input, so under-researched topics such as multilingual SLP, efficient training, and prompt understanding need investigation. This raises questions such as how to improve a large model’s generalization and its fundamental ability to understand prompts.

To address these issues, the team presents SignLLM, the first large-scale multilingual SLP model, built on the Prompt2Sign dataset. Given text or prompts, it generates skeletal poses for eight different sign languages. SignLLM has two modes: (i) the Multi-Language Switching Framework (MLSF), which dynamically adds encoder-decoder groups to generate multiple sign languages in parallel, and (ii) the Prompt2LangGloss module, which lets SignLLM use a static encoder-decoder pair.
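
The article does not describe how MLSF is implemented internally. As a rough, hypothetical illustration of “dynamically adding encoder-decoder groups”, the sketch below keeps a registry that creates a separate group the first time a language is requested and routes each request to its own group. `EncoderDecoder`, `LanguageSwitcher`, and the placeholder token output are invented for this example; a real group would be a neural encoder-decoder producing skeletal pose frames. For contrast, `tag_gloss` shows a different, commonly used way to share one static model across languages (prepending a language token, as in multilingual machine translation); this is not necessarily how Prompt2LangGloss works.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class EncoderDecoder:
    """Hypothetical stand-in for one language's encoder-decoder group."""
    lang: str

    def generate(self, text: str) -> List[str]:
        # Placeholder output; a real group would return skeletal pose frames
        return [f"{self.lang}:{token}" for token in text.split()]


@dataclass
class LanguageSwitcher:
    """Hypothetical MLSF-style router: one encoder-decoder group per sign language."""
    groups: Dict[str, EncoderDecoder] = field(default_factory=dict)

    def group_for(self, lang: str) -> EncoderDecoder:
        # Add a new group the first time a language is requested
        if lang not in self.groups:
            self.groups[lang] = EncoderDecoder(lang)
        return self.groups[lang]

    def generate(self, requests: Dict[str, str]) -> Dict[str, List[str]]:
        # Each language is handled by its own group, so groups are independent of each other
        return {lang: self.group_for(lang).generate(text) for lang, text in requests.items()}


def tag_gloss(lang: str, gloss: str) -> str:
    """Shared-model alternative (assumption): mark the target language in the input."""
    return f"<{lang}> {gloss}"
```

The trade-off the two modes suggest is visible even in this toy: per-language groups keep languages isolated but grow with every language added, while a single tagged model stays fixed in size and must learn all languages at once.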

The team aims to use the new dataset to set a benchmark for multilingual recognition and generation. To cut the long training times caused by more languages and larger datasets, their new loss function incorporates a novel module based on ideas from Reinforcement Learning (RL) that speeds up training. The team carried out extensive experiments and ablation studies, and the results show that SignLLM outperforms baseline methods on both the development and test sets for all eight sign languages.
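
The article does not spell out how the RL-inspired module works. One familiar RL idea for speeding up training is prioritized sampling, as in prioritized experience replay: each example is drawn with probability proportional to its priority raised to a power alpha, and priorities are refreshed from the latest per-example loss. The sketch below implements only that generic technique; `PrioritizedSampler`, its parameters, and the use of loss as the priority signal are assumptions, not SignLLM’s actual loss or module.

```python
import random
from typing import List, Sequence


class PrioritizedSampler:
    """Generic prioritized sampling: P(i) is proportional to priority_i ** alpha."""

    def __init__(self, n: int, alpha: float = 0.6, eps: float = 1e-6, seed: int = 0):
        self.alpha = alpha
        self.eps = eps
        self.priorities = [1.0] * n  # start uniform so every example gets seen
        self.rng = random.Random(seed)

    def sample(self, k: int) -> List[int]:
        # Draw k example indices (with replacement) according to their priorities
        weights = [p ** self.alpha for p in self.priorities]
        return self.rng.choices(range(len(weights)), weights=weights, k=k)

    def update(self, indices: Sequence[int], losses: Sequence[float]) -> None:
        # Assumption: higher loss means higher priority; eps keeps every example reachable
        for i, loss in zip(indices, losses):
            self.priorities[i] = abs(loss) + self.eps
```

Starting every priority at 1.0 makes the first pass uniform; the small `eps` prevents examples whose loss has dropped to zero from never being drawn again.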

Although the work makes great strides in automating the capture and processing of sign language data, it does not yet provide a complete end-to-end solution. For example, the team notes that to use a private dataset, one must first run OpenPose to extract 2D keypoint JSON files and then update them manually.
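
The manual update step is not described in the article, but the first part of this workflow can be sketched. For video input, OpenPose’s `--write_json` option writes one file per frame, named like `<video>_000000000012_keypoints.json`. The hypothetical helper below collects those files from one video’s output folder, orders them by frame number, and returns each frame’s `pose_keypoints_2d` list; assuming one video and one signer per folder, and ignoring hand and face keypoints, are simplifications for illustration.

```python
import json
import re
from pathlib import Path
from typing import List

# OpenPose --write_json output for a video: "<video>_<12-digit frame>_keypoints.json"
FRAME_RE = re.compile(r"_(\d+)_keypoints\.json$")


def frame_index(filename: str) -> int:
    """Extract the frame number from an OpenPose keypoint file name."""
    match = FRAME_RE.search(filename)
    if match is None:
        raise ValueError(f"not an OpenPose keypoint file: {filename}")
    return int(match.group(1))


def load_openpose_sequence(folder: str) -> List[List[float]]:
    """Hypothetical helper: one video's pose keypoints, ordered by frame number."""
    root = Path(folder)
    if not root.is_dir():
        return []  # nothing has been extracted yet
    paths = [p for p in root.glob("*_keypoints.json") if FRAME_RE.search(p.name)]
    paths.sort(key=lambda p: frame_index(p.name))
    sequence: List[List[float]] = []
    for path in paths:
        people = json.loads(path.read_text()).get("people", [])
        # Assumption: one signer per video; frames with no detection stay empty
        sequence.append(people[0]["pose_keypoints_2d"] if people else [])
    return sequence
```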

The post SignLLM: A Multilingual Sign Language Model that can Generate Sign Language Gestures from Input Text appeared first on MarkTechPost.
