LLMs like GPT, Gemini, and Claude have achieved remarkable performance but remain proprietary, with limited training details disclosed. Open-weight models such as LLaMA-3 release their weights but lack transparency about training data and methods. Efforts to create fully transparent LLMs, such as Pythia, Amber, and OLMo, aim to support scientific research by sharing more details, including pre-training data and training code. Despite these efforts, open-source LLMs still lag behind state-of-the-art models in tasks like reasoning, knowledge, and coding. Greater transparency is crucial for democratizing LLM development and advancing academic research.

Researchers from M-A-P, University of Waterloo, Wuhan AI Research, and 01.AI have released MAP-Neo, a highly capable and transparent bilingual language model with 7 billion parameters, trained on 4.5 trillion high-quality tokens. The model is fully open-sourced and aims to close the performance gap with leading closed-source LLMs. The release includes the cleaned pre-training corpus, the data cleaning pipeline, checkpoints, and an optimized training and evaluation framework. The accompanying documentation covers data curation, model architecture, training processes, evaluation code, and insights into building LLMs, with the aim of supporting and inspiring the global research community, especially in non-English regions.

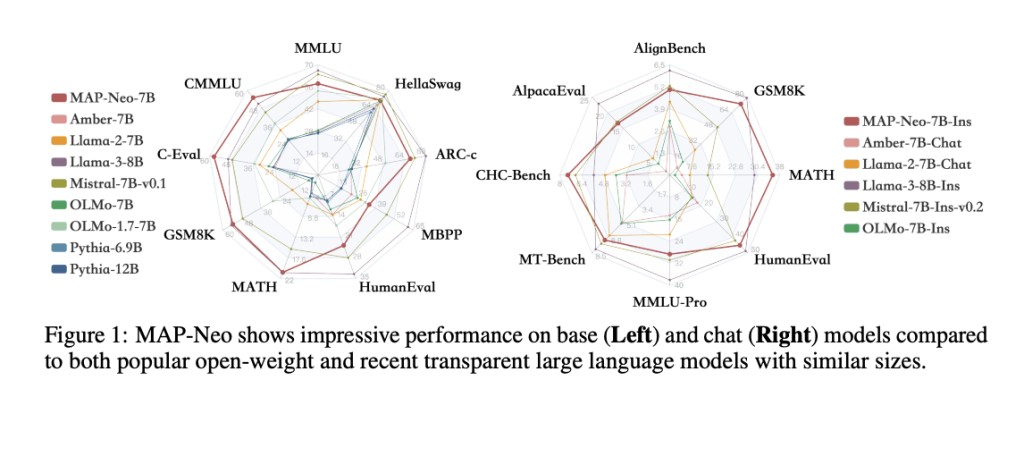

The advancement of open-source LLMs is crucial for AI research and applications, and recent efforts focus on improving both performance and transparency. Unlike the Mistral, LLaMA-3, Pythia, Amber, and OLMo models, MAP-Neo-7B combines intermediate checkpoints, a comprehensive data cleaning process, an accessible pre-training corpus, and reproduction code in a single release. MAP-Neo-7B is evaluated on benchmarks for Chinese and English understanding (C-EVAL, MMLU), mathematical ability (GSM8K), and coding (HumanEval). It achieves high scores across these tests and sets a new standard for pairing transparency with performance, promoting trustworthiness and collaboration in the research community.

The tokenizer is trained using byte-pair encoding (BPE) via SentencePiece on 50 billion samples, with a capping length of 64,000. Priority is given to code, math, and academic data. The vocabulary size is 64,000, with a maximum sentence-piece length of 16 to enhance Chinese performance. Numbers are tokenized as individual digits, and unknown UTF-8 characters fall back to byte granularity. No normalization or dummy prefix is applied, and character coverage is kept at 99.99%. Extra-whitespace removal is disabled to preserve code formatting, a choice that improved performance after issues surfaced in initial training. The tokenizer’s efficiency varies across languages and data sources.
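The settings described above can be sketched as a SentencePiece trainer configuration. This is a minimal illustration, not the authors' actual training script: the option names are standard SentencePiece trainer flags, but the mapping from the article's description to those flags is an assumption, and the function name, corpus path, and model prefix are hypothetical. The "50 billion samples" and "capping length" settings are left out because the article does not say which options they correspond to.

```python
# Assumed mapping of the described tokenizer settings onto SentencePiece
# trainer options. This is an illustrative sketch, not the released config.
TOKENIZER_OPTIONS = {
    "model_type": "bpe",                    # byte-pair encoding
    "vocab_size": 64000,                    # vocabulary size of 64,000
    "max_sentencepiece_length": 16,         # maximum piece length of 16
    "split_digits": True,                   # numbers become individual digits
    "byte_fallback": True,                  # unknown characters fall back to bytes
    "normalization_rule_name": "identity",  # no normalization
    "add_dummy_prefix": False,              # no dummy prefix
    "character_coverage": 0.9999,           # 99.99% character coverage
    "remove_extra_whitespaces": False,      # keep whitespace so code formatting survives
}


def train_tokenizer(corpus_path, model_prefix, options=None):
    """Train a SentencePiece model with the options above.

    `corpus_path` and `model_prefix` are hypothetical placeholders.
    Requires the third-party `sentencepiece` package.
    """
    # Imported lazily so the configuration can be inspected without the package.
    import sentencepiece as spm

    opts = dict(TOKENIZER_OPTIONS if options is None else options)
    spm.SentencePieceTrainer.train(
        input=corpus_path, model_prefix=model_prefix, **opts
    )
    return model_prefix + ".model"
```

Calling `train_tokenizer("corpus.txt", "neo_tokenizer")` on a text file with one sample per line would write `neo_tokenizer.model` and `neo_tokenizer.vocab`; a small test corpus would also need a smaller `vocab_size` passed through `options`.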

The MAP-Neo model family exhibits impressive performance across benchmarks for base and chat models. It particularly excels in code, math, and instruction-following tasks. MAP-Neo outperforms other models in standard benchmarks, demonstrating its academic and practical value. The base model’s high-quality data contributes to its superior results in complex reasoning tasks. Compared to other transparent LLMs, MAP-Neo shows significant advancements. The effectiveness of Iterative DPO is evident, with substantial improvements in chat-related benchmarks. However, the limited capabilities of certain base models restrict their performance in instruction-tuned chat benchmarks.

In conclusion, data colonialism is a growing concern as firms exploit algorithms to manipulate human behavior and entrench market dominance. The concentration of AI capabilities in large tech firms and elite universities highlights the need to democratize AI access as a counterweight. While open-source models offer an alternative, they often lack full transparency about their development processes, which hinders trust and reproducibility. MAP-Neo addresses these issues as a fully open-source bilingual LLM that documents all key processes. This transparency can reduce deployment costs, particularly for Chinese LLMs, promoting more inclusive innovation and mitigating the dominance of English LLMs.

The post MAP-Neo: A Fully Open-Source and Transparent Bilingual LLM Suite that Achieves Superior Performance to Close the Gap with Closed-Source Models appeared first on MarkTechPost.
