Natural language processing (NLP) has many applications, including machine translation, sentiment analysis, and conversational agents. The advent of large language models (LLMs) has significantly advanced NLP capabilities, making these applications more accurate and efficient. However, these large models’ computational and energy demands have raised concerns about sustainability and accessibility.

The primary challenge with current large language models lies in their substantial computational and energy requirements. These models, often comprising billions of parameters, require extensive resources for training and deployment. This high demand limits their accessibility, making it difficult for many researchers and institutions to utilize these powerful tools. More efficient models are needed to deliver high performance without excessive resource consumption.

Various methods have been developed to improve the efficiency of language models. Techniques such as weight tying, pruning, quantization, and knowledge distillation have been explored. Weight tying involves sharing certain weights between different model components to reduce the total number of parameters. Pruning removes less significant weights, creating a sparser, more efficient model. Quantization reduces the precision of weights and activations from 32-bit to lower-bit representations, which decreases the model size and speeds up training and inference. Knowledge distillation transfers knowledge from a larger “teacher” model to a smaller “student” model, maintaining performance while reducing size.
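To make two of these techniques concrete, here is a minimal sketch of magnitude pruning and symmetric 8-bit quantization applied to a toy list of weights. The function names, the 50% sparsity level, and the weight values are illustrative assumptions and are not taken from the STLM paper; production frameworks implement these ideas with considerably more care.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    # Indices ordered from smallest to largest absolute value
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Symmetric uniform quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights) or 1.0  # avoid a zero scale
    scale = max_abs / 127.0
    q = [round(w / scale) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map quantized integers back to approximate float values."""
    return [v * scale for v in q]


# Toy weights, chosen only for illustration
weights = [0.82, -0.03, 0.40, -0.91, 0.01, 0.27]

pruned = magnitude_prune(weights, sparsity=0.5)
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

print("pruned:  ", pruned)
print("int8:    ", q)
print("restored:", [round(v, 3) for v in restored])
```

Pruning keeps only the largest-magnitude half of the weights, while quantization stores each weight as a small integer plus one shared scale, so the restored values differ from the originals by at most half a quantization step.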

A research team from A*STAR, Nanyang Technological University, and Singapore Management University introduced Super Tiny Language Models (STLMs) to address the inefficiencies of large language models. These models aim to provide high performance with significantly reduced parameter counts. The team focuses on techniques such as byte-level tokenization, weight tying, and efficient training strategies. Their approach aims to reduce parameter counts by 90% to 95% compared to traditional models while still delivering competitive performance.

The proposed STLMs employ several techniques to achieve their goals. Byte-level tokenization with a pooling mechanism embeds each character in the input string and processes them through a smaller, more efficient transformer. This method dramatically reduces the number of parameters needed. Weight tying, which shares weights across different model layers, further decreases the parameter count. Efficient training strategies ensure these models can be trained effectively even on consumer-grade hardware.
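The following sketch illustrates why byte-level tokenization and weight tying shrink a model. It is not the authors' implementation: the embedding width, the chunk size, the 32,000-entry subword vocabulary, and the use of mean pooling over fixed-size chunks (as a stand-in for the small transformer the article mentions) are all assumptions made for illustration.

```python
import random

D_MODEL = 8   # hypothetical embedding width
CHUNK = 4     # hypothetical number of bytes pooled into one vector

random.seed(0)
# One embedding row per possible byte value: 256 rows instead of a large subword vocabulary
byte_embedding = [[random.uniform(-1, 1) for _ in range(D_MODEL)] for _ in range(256)]


def byte_tokenize(text):
    """Turn a string into its UTF-8 byte values (integers in 0..255)."""
    return list(text.encode("utf-8"))


def pool_bytes(byte_ids, chunk=CHUNK):
    """Embed each byte, then mean-pool each fixed-size chunk into one vector."""
    pooled = []
    for start in range(0, len(byte_ids), chunk):
        vectors = [byte_embedding[b] for b in byte_ids[start:start + chunk]]
        pooled.append([sum(col) / len(vectors) for col in zip(*vectors)])
    return pooled


def embedding_params(vocab_size, d_model, tied):
    """Parameters in the input embedding plus the output projection.

    With weight tying, both share a single table.
    """
    table = vocab_size * d_model
    return table if tied else 2 * table


ids = byte_tokenize("tiny models")
tokens = pool_bytes(ids)
print(len(ids), "bytes ->", len(tokens), "pooled vectors")

# Assumed sizes: a 32,000-entry subword vocabulary versus 256 byte values, width 512
print("subword, untied:", embedding_params(32_000, 512, tied=False))
print("subword, tied:  ", embedding_params(32_000, 512, tied=True))
print("byte-level, tied:", embedding_params(256, 512, tied=True))
```

Under these assumed sizes, the embedding-related parameters drop from roughly 32.8M (untied subword vocabulary) to about 0.13M (tied byte-level table), which shows where much of the saving in a very small model can come from.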

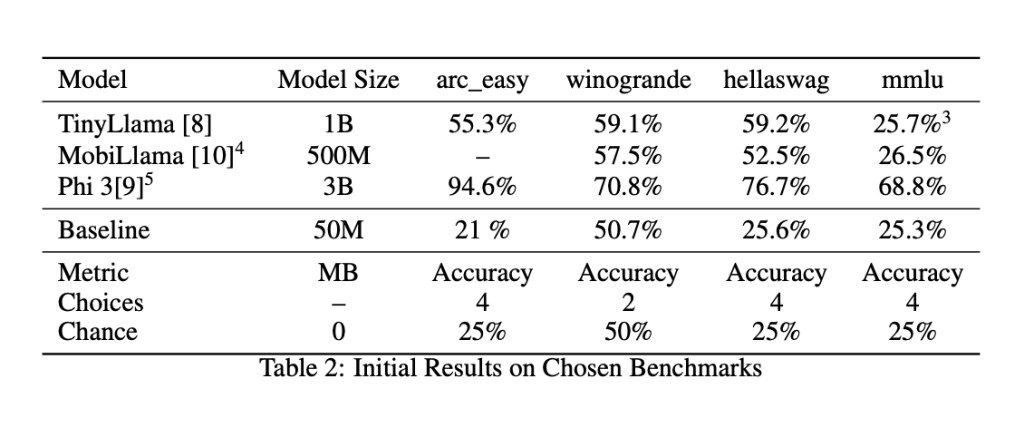

Performance evaluations of the proposed STLMs showed promising results. Despite their reduced size, these models achieved competitive accuracy levels on several benchmarks. For instance, the 50M parameter model demonstrated performance comparable to much larger models, such as TinyLlama (1.1B parameters), Phi-3-mini (3.3B parameters), and MobiLlama (0.5B parameters). On ARC (AI2 Reasoning Challenge) and Winogrande, the models reached 21% and 50.7% accuracy, respectively. These results highlight the effectiveness of the parameter reduction techniques and the potential of STLMs to provide high-performance NLP capabilities with lower resource requirements.
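As a quick check on the scale of the reduction, the short calculation below compares the 50M-parameter STLM with the baseline sizes quoted above. The parameter counts are taken as stated in this article; the helper name `reduction_pct` is an illustrative choice.

```python
stlm_params = 50e6

# Baseline sizes as quoted in the article
baselines = {
    "TinyLlama": 1.1e9,
    "Phi-3-mini": 3.3e9,
    "MobiLlama": 0.5e9,
}


def reduction_pct(small, large):
    """Percentage by which `small` is smaller than `large`."""
    return 100.0 * (1.0 - small / large)


reductions = {
    name: round(reduction_pct(stlm_params, size), 1)
    for name, size in baselines.items()
}

for name, pct in reductions.items():
    print(f"{name}: {pct}% fewer parameters")
```

Against these baselines the 50M model is between 90% and roughly 98.5% smaller, which is in line with or beyond the 90% to 95% reduction target the team describes.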

In conclusion, by focusing on parameter reduction and efficient training methods, the research team from A*STAR, Nanyang Technological University, and Singapore Management University has developed Super Tiny Language Models (STLMs) that are both high-performing and resource-efficient. These STLMs address the critical issues of computational and energy demands, making advanced NLP technologies more accessible and sustainable. The proposed techniques, such as byte-level tokenization and weight tying, have proven effective in maintaining performance while significantly reducing parameter counts.

Check out the Paper. All credit for this research goes to the researchers of this project.

The post The Emergence of Super Tiny Language Models (STLMs) for Sustainable AI Transforms the Realm of NLP appeared first on MarkTechPost.
