Large language models (LLMs) such as GPT-4 and Claude 3 Opus excel at tasks such as code generation, data analysis, and reasoning. As they increasingly inform decisions across domains, aligning them with human preferences becomes crucial for fairness and sound economic decisions. Human preferences vary widely with cultural background and personal experience, yet LLMs often exhibit biases, favoring dominant viewpoints and frequently occurring items. If LLMs do not reflect this diversity, their biased outputs can lead to unfair and economically harmful outcomes.

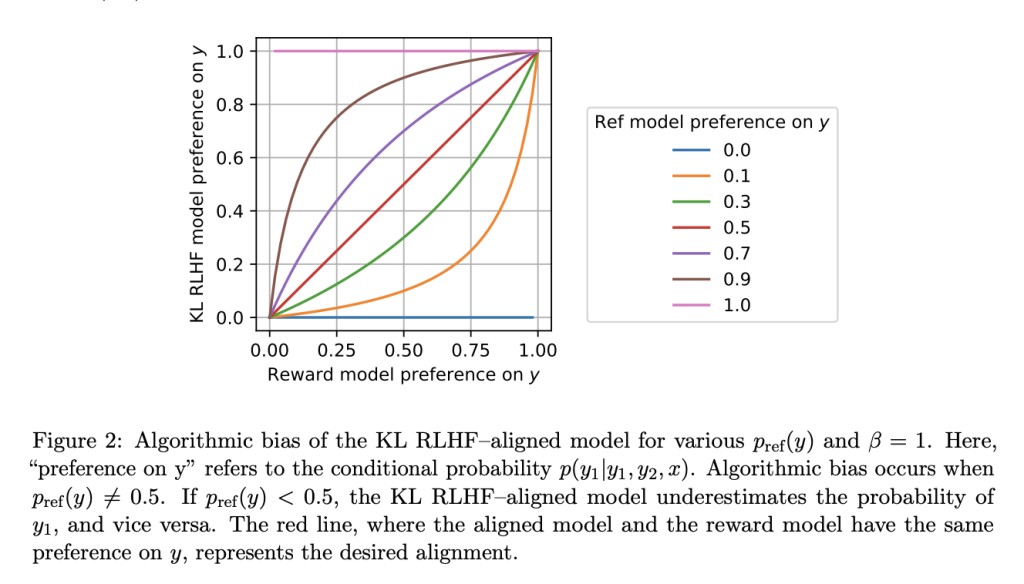

Existing methods, particularly reinforcement learning from human feedback (RLHF), suffer from algorithmic bias that can lead to preference collapse, in which minority preferences are effectively disregarded. This bias persists even with an oracle reward model, so a more accurate reward model alone cannot fix it. The limitation lies in how current approaches turn rewards into a policy.
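To make preference collapse concrete, here is a minimal sketch in Python. It rests on assumptions the article does not spell out: preferences follow a Bradley-Terry model, standard RLHF uses a KL penalty toward a reference policy, and the toy numbers (a 70/30 preference split, the reference policy, the values of `beta`) are purely illustrative. Under those assumptions the KL-regularized objective has a well-known closed-form maximizer, which the hypothetical helper `kl_rlhf_policy` evaluates.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def kl_rlhf_policy(rewards, ref_probs, beta):
    """Maximizer of E[r] - beta * KL(pi || pi_ref).

    Closed form: pi(y) is proportional to pi_ref(y) * exp(r(y) / beta).
    """
    logits = [math.log(p) + r / beta for r, p in zip(rewards, ref_probs)]
    return softmax(logits)

# Illustrative oracle rewards: 70% of people prefer response A, 30% prefer B.
rewards = [math.log(0.7), math.log(0.3)]
target = softmax(rewards)  # the preference split we would like to reproduce

# A skewed reference policy pushes the result away from the 70/30 split.
skewed = kl_rlhf_policy(rewards, ref_probs=[0.9, 0.1], beta=1.0)

# A small beta concentrates almost all mass on the majority response.
collapsed = kl_rlhf_policy(rewards, ref_probs=[0.5, 0.5], beta=0.1)

print("target   :", [round(p, 4) for p in target])
print("skewed   :", [round(p, 4) for p in skewed])
print("collapsed:", [round(p, 4) for p in collapsed])
```

Even though the rewards here are exact, the resulting policy depends on the reference policy and on `beta`, and in the last case the minority response receives almost no probability.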

Researchers have introduced Preference Matching RLHF, an approach aimed at mitigating this algorithmic bias and aligning LLMs with human preferences. At its core is the preference-matching regularizer, derived by solving an ordinary differential equation. The regularizer balances response diversification against reward maximization, so that the model’s output distribution reflects human preferences more accurately. According to the authors, Preference Matching RLHF comes with statistical guarantees and eliminates the bias inherent in conventional RLHF. The paper also details a conditional variant tailored to natural language generation tasks.
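The balance between diversification and reward maximization can be illustrated with a small numerical check. The article does not give the regularizer's formula, so this sketch assumes an entropy-style form, that is, maximizing expected reward plus the entropy of the policy, together with Bradley-Terry rewards. Both are assumptions made for illustration, and `pm_objective` is a hypothetical name, not the authors' code.

```python
import math

def pm_objective(p_a, rewards):
    """Expected reward plus policy entropy for a two-response policy.

    Assumed form: sum_y pi(y) * r(y) - sum_y pi(y) * log pi(y),
    with pi = [p_a, 1 - p_a].
    """
    probs = [p_a, 1.0 - p_a]
    expected_reward = sum(p * r for p, r in zip(probs, rewards))
    entropy = -sum(p * math.log(p) for p in probs)
    return expected_reward + entropy

# Illustrative oracle rewards: 70% of people prefer response A, 30% prefer B.
rewards = [math.log(0.7), math.log(0.3)]

# Grid search over the probability assigned to response A.
grid = [i / 1000 for i in range(1, 1000)]
best_p = max(grid, key=lambda p: pm_objective(p, rewards))

print("probability of A at the optimum:", best_p)
```

In this toy setting the maximizer sits at the 70/30 preference split instead of collapsing onto the majority response: the reward term pulls mass toward A, while the entropy term keeps B from vanishing.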

In experiments on the OPT-1.3B and Llama-2-7B models, Preference Matching RLHF improved alignment with human preferences by 29% to 41% compared with standard RLHF. These results suggest that the approach captures diverse human preferences better and mitigates algorithmic bias, which supports its use in building fairer and more effective decision-making systems.

In conclusion, Preference Matching RLHF addresses the algorithmic bias of standard RLHF and improves the alignment of LLMs with human preferences. This can make LLM-assisted decision-making fairer and reduce biased outputs.

The post Advancing Ethical AI: Preference Matching Reinforcement Learning from Human Feedback RLHF for Aligning LLMs with Human Preferences appeared first on MarkTechPost.
