Multi-layer perceptrons (MLPs), also called fully connected feedforward neural networks, are fundamental building blocks of deep learning and the default models for approximating nonlinear functions. Although the universal approximation theorem guarantees their expressive power, they have drawbacks. In transformers, for example, MLPs often consume most of the parameters and are less interpretable than attention layers. The Kolmogorov-Arnold representation theorem suggests an alternative, but earlier research built on it mostly kept to the original depth-2, width-(2n+1) architecture and did not take advantage of modern training techniques such as backpropagation. Thus, while MLPs remain crucial, the search continues for more effective nonlinear regressors in neural network design.

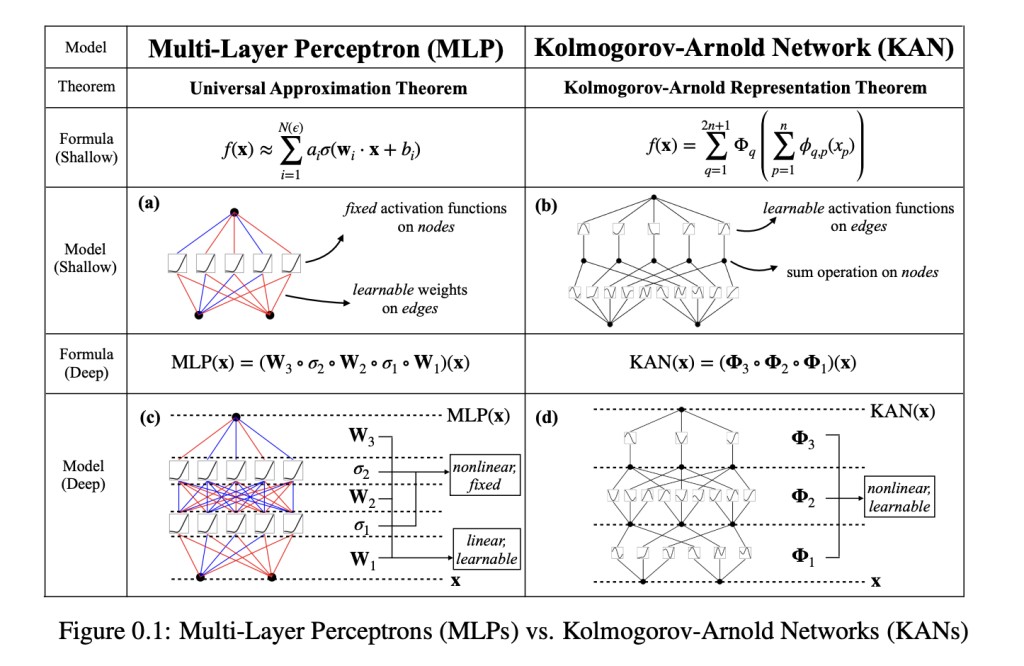

Researchers from MIT, Caltech, Northeastern University, and the NSF Institute for AI and Fundamental Interactions have developed Kolmogorov-Arnold Networks (KANs) as an alternative to MLPs. Unlike MLPs, which apply fixed activation functions at nodes, KANs place learnable activation functions on edges: every linear weight is replaced by a univariate function parametrized as a spline. According to the study, this change enables KANs to surpass MLPs in both accuracy and interpretability. Its mathematical and empirical analysis indicates that KANs perform better, particularly on high-dimensional data and scientific problem-solving. The study introduces the KAN architecture, presents comparative experiments with MLPs, and showcases KANs’ interpretability and applicability in scientific discovery.
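To make the "learnable activation functions on edges" idea concrete, here is a minimal NumPy sketch of a single KAN-style layer. It is an illustration under stated assumptions, not the authors' implementation: the names `hat_basis` and `KANLayerSketch` are hypothetical, the edge functions use degree-1 B-splines (piecewise-linear "hat" functions) on a fixed uniform grid for brevity, and training is omitted entirely.

```python
import numpy as np


def hat_basis(x, grid):
    """Evaluate degree-1 B-spline ("hat") basis functions on a uniform grid.

    x:    array of shape (batch, n_in)
    grid: 1-D array of G equally spaced knots
    Returns an array of shape (batch, n_in, G).
    """
    h = grid[1] - grid[0]  # knot spacing (uniform grid assumed)
    # Each hat peaks at 1 on its own knot and falls to 0 at the neighbours.
    return np.clip(1.0 - np.abs(x[..., None] - grid) / h, 0.0, None)


class KANLayerSketch:
    """Hypothetical KAN-style layer: one learnable 1-D function per edge."""

    def __init__(self, n_in, n_out, grid_size=8, x_min=-1.0, x_max=1.0, seed=0):
        rng = np.random.default_rng(seed)
        self.grid = np.linspace(x_min, x_max, grid_size)
        # coef[j, i, :] parametrizes the edge function phi_{j,i}
        # that connects input i to output j.
        self.coef = rng.normal(scale=0.1, size=(n_out, n_in, grid_size))

    def __call__(self, x):
        basis = hat_basis(x, self.grid)  # (batch, n_in, G)
        # phi_{j,i}(x_i) = sum_g coef[j, i, g] * basis[b, i, g];
        # output j is the plain sum of its incoming edge functions over i.
        return np.einsum("big,jig->bj", basis, self.coef)


layer = KANLayerSketch(n_in=2, n_out=3)
x = np.array([[0.1, -0.4], [0.9, 0.0]])
y = layer(x)  # shape (2, 3): one row per sample, one column per output node
```

Note the contrast with an MLP layer: there is no weight matrix followed by a fixed nonlinearity. The only trainable quantities are the spline coefficients on each edge, and the nodes simply add up what arrives.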

Existing literature explores the connection between the Kolmogorov-Arnold theorem (KAT) and neural networks, but prior works focus primarily on limited network architectures and toy experiments. The study contributes by generalizing the network to arbitrary widths and depths, making it relevant to modern deep learning. It also addresses Neural Scaling Laws (NSLs), showing how Kolmogorov-Arnold representations enable fast scaling, and it contributes to Mechanistic Interpretability (MI) by designing architectures that are interpretable by construction. The work relates to earlier research on learnable activations and symbolic regression, with the distinction that activation functions in KANs are learned continuously during training. Moreover, KANs show promise as replacements for MLPs in Physics-Informed Neural Networks (PINNs) and in AI applications in mathematics, particularly knot theory.

KANs draw inspiration from the Kolmogorov-Arnold Representation Theorem, which asserts that any multivariate continuous function on a bounded domain can be represented by combining single-variable continuous functions and addition operations. KANs leverage this theorem by parametrizing each univariate function as a B-spline curve with adjustable coefficients. Stacking such layers yields deeper KANs, which aim to overcome the limitations of the original two-layer construction and achieve smoother activations for better function approximation. Theoretical guarantees, such as the KAN Approximation Theorem, provide bounds on approximation accuracy. Compared with MLPs, whose justification rests on the Universal Approximation Theorem (UAT), KANs offer promising scaling laws because they only ever represent low-dimensional (univariate) functions.
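For reference, the theorem and its layer-wise generalization can be written out as follows. This is the standard statement of the theorem; the symbols ($\phi_{q,p}$, $\Phi_q$, and the layer index $l$) are notation chosen here for illustration, since the article itself does not fix any.

```latex
% Kolmogorov-Arnold representation: for continuous f : [0,1]^n -> R
f(x_1, \dots, x_n) \;=\; \sum_{q=1}^{2n+1} \Phi_q\!\left( \sum_{p=1}^{n} \phi_{q,p}(x_p) \right),
\qquad \phi_{q,p} : [0,1] \to \mathbb{R}, \quad \Phi_q : \mathbb{R} \to \mathbb{R} \ \text{continuous}.

% One KAN layer with n_l inputs and n_{l+1} outputs: a sum of univariate edge functions
x_{l+1,\,j} \;=\; \sum_{i=1}^{n_l} \phi_{l,j,i}\!\left( x_{l,\,i} \right),
\qquad j = 1, \dots, n_{l+1}.
```

The first formula corresponds to two such layers with shapes $n \to 2n+1 \to 1$, which is the depth-2, width-$(2n+1)$ architecture mentioned earlier. A deeper KAN composes more layers of the second form, with each $\phi_{l,j,i}$ parametrized as a B-spline.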

In the study, KANs outperform MLPs in representing functions across various tasks such as regression, solving partial differential equations, and continual learning. KANs demonstrate superior accuracy and efficiency, particularly in capturing the complex structures of special functions and Feynman datasets. They exhibit interpretability by revealing compositional structures and topological relationships, showcasing their potential for scientific discovery in fields like knot theory. KANs also show promise in solving unsupervised learning problems, offering insights into structural relationships among variables. Overall, KANs emerge as powerful and interpretable models for AI-driven scientific research.

KANs offer an approach to deep learning that uses the Kolmogorov-Arnold representation to improve interpretability and accuracy. Although they train more slowly than MLPs, KANs excel in tasks where interpretability and accuracy are paramount. Their efficiency remains an engineering challenge, and ongoing research aims to improve training speed. If interpretability and accuracy are the priorities and training time is not a tight constraint, KANs are a compelling choice over MLPs. For tasks that prioritize speed, however, MLPs remain the more practical option.

Check out the Paper. All credit for this research goes to the researchers of this project.


The post Kolmogorov-Arnold Networks (KANs): A New Era of Interpretability and Accuracy in Deep Learning appeared first on MarkTechPost.
