Improving how Large Language Models (LLMs) understand and interact with video content is a major area of ongoing research and development. One notable achievement in this field is Pegasus-1 from Twelve Labs, a state-of-the-art multimodal model that can comprehend, synthesise, and interact with video content using natural language.

The main goal behind Pegasus-1 is to address the inherent complexity of video data, which often combines several modalities, such as visual and audio information, in a single format. Fully understanding such data involves three things:

- following the temporal sequence of visual information;
- capturing the dynamics and changes that occur over time;
- performing a thorough spatial analysis of each frame.

Pegasus-1 can handle a wide range of video lengths, from short clips to long recordings, which makes it adaptable across many video genres. In their technical report, the authors describe the strategy they used to overcome these obstacles and give Pegasus-1 its broad video understanding capabilities. The report covers the model architecture, the training data, and the training procedures, all of which contribute to Pegasus-1's understanding of video content.

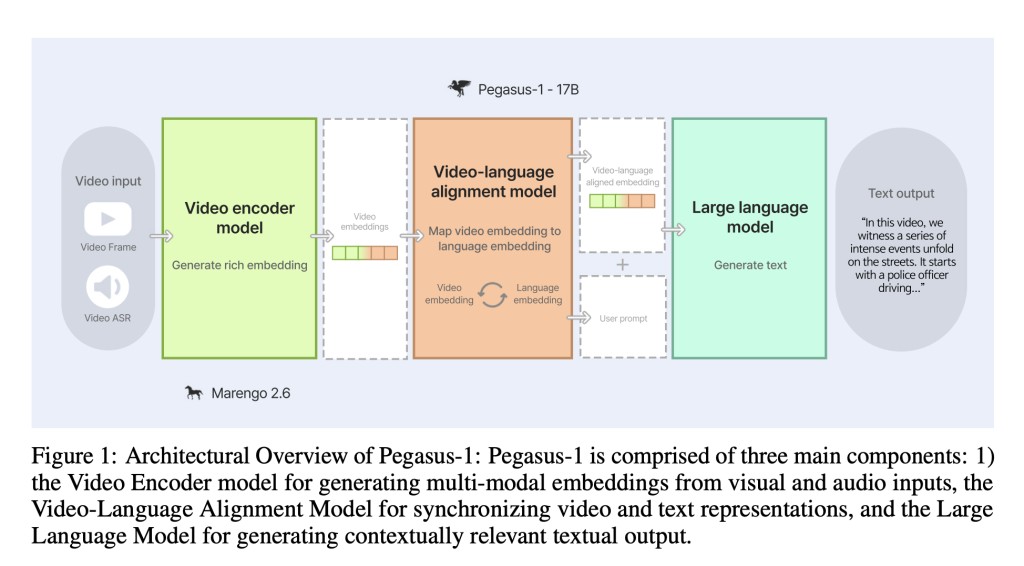

To handle the intricacies of video data, Pegasus-1 uses an architecture that manages long videos while integrating both visual and audio information. The architecture has three main parts, each essential to how the model understands and interacts with video content:

- the Video Encoder Model;
- the Video-language Alignment Model;
- the Large Language Model (Decoder Model).
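To make the data flow between these three components concrete, here is a minimal toy sketch. It is not Twelve Labs' implementation; the article does not describe the internals of any component. Every function name (`encode_video`, `align`, `decode`, `run_pipeline`) is hypothetical. The fusion-by-concatenation encoder and the linear-projection alignment step are assumptions chosen only for illustration. The decoder is a placeholder rather than a real language model.

```python
from typing import List, Tuple

Vector = List[float]


def encode_video(frame_feats: List[Vector], audio_feats: List[Vector]) -> List[Vector]:
    """Hypothetical video encoder stand-in: fuses each time step's visual
    and audio features by simple concatenation (an assumption)."""
    if len(frame_feats) != len(audio_feats):
        raise ValueError("expected one audio feature vector per frame")
    return [f + a for f, a in zip(frame_feats, audio_feats)]


def align(video_embs: List[Vector], projection: List[Vector]) -> List[Vector]:
    """Hypothetical alignment stand-in: a linear projection (one
    matrix-vector product per time step) into the decoder's embedding size."""
    return [[sum(w * x for w, x in zip(row, emb)) for row in projection]
            for emb in video_embs]


def decode(video_tokens: List[Vector], prompt: str) -> str:
    """Placeholder for the LLM decoder: a real model would generate text
    conditioned on the aligned video tokens followed by the prompt."""
    return f"[{len(video_tokens)} video tokens + prompt: {prompt!r}]"


def run_pipeline(frame_feats: List[Vector], audio_feats: List[Vector],
                 projection: List[Vector], prompt: str) -> Tuple[List[Vector], str]:
    """Encoder -> alignment -> decoder, in that order."""
    video_embs = encode_video(frame_feats, audio_feats)
    video_tokens = align(video_embs, projection)
    return video_tokens, decode(video_tokens, prompt)


# Toy inputs: 3 time steps, 2-dim visual and 1-dim audio features.
frames = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
audio = [[2.0], [3.0], [4.0]]
projection = [[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]]  # maps 3-dim fused -> 2-dim
tokens, answer = run_pipeline(frames, audio, projection, "What happens?")
print(tokens)  # [[1.0, 2.0], [0.0, 3.0], [1.0, 4.0]]
print(answer)  # [3 video tokens + prompt: 'What happens?']
```

The point of the sketch is the ordering: audio-visual features are encoded first, then mapped into the language model's embedding space, and only then consumed by the decoder alongside the text prompt.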

Pegasus-1's performance has been assessed on key video LLM benchmarks covering video conversation, zero-shot video question answering, and video summarization. Pegasus-1 is compared with two groups of models:

- Google's proprietary Gemini models, using the video question answering results Google reported for Gemini 1.0 and 1.5;
- open-source models such as VideoChat, Video-ChatGPT, Video-LLaMA, BT-Adapter, LLaMA-VID, and VideoChat2, using benchmark results from their respective papers.

Together, these comparisons show how Pegasus-1 performs against well-known proprietary and open-source models at understanding and conversing about video content.

According to the team, Pegasus-1 performs strongly on a number of video LLM benchmarks, as detailed below.

On the video conversation benchmark, Pegasus-1 scored 4.29 in Context and 3.79 in Correctness, demonstrating its ability to hold conversations about video content. It showed strengths across the benchmark's key criteria: Correctness, Detail, Contextual Awareness, Temporal Comprehension, and Consistency.

In zero-shot video question answering on the ActivityNet-QA and NExT-QA datasets, Pegasus-1 also outperformed both the open-source models and the Gemini series, showing substantial gains in zero-shot capability.

For video summarization on the ActivityNet detailed caption dataset, Pegasus-1 outperformed the baseline models on criteria such as Correctness of Information, Detail Orientation, and Contextual Understanding.

On TempCompass, a benchmark for temporal comprehension, Pegasus-1 outperformed the open-source models, notably VideoChat2. This evaluation applies artificial modifications to videos, such as slowing them down, reversing them, and varying their playback speed, to test how well the model understands temporal dynamics.
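The kinds of modifications described above can be illustrated on a clip represented as a list of frames. This is a minimal sketch, not TempCompass's actual data-generation code. The function names are hypothetical, and integer frame indices stand in for decoded frames. Slowing down is modeled as frame repetition and speeding up as frame dropping, which are simplifying assumptions.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def reverse(frames: Sequence[T]) -> List[T]:
    """Play the clip backwards."""
    return list(frames)[::-1]


def slow_down(frames: Sequence[T], factor: int) -> List[T]:
    """Slow playback by repeating each frame `factor` times."""
    if factor < 1:
        raise ValueError("factor must be >= 1")
    return [f for f in frames for _ in range(factor)]


def speed_up(frames: Sequence[T], factor: int) -> List[T]:
    """Speed playback up by keeping only every `factor`-th frame."""
    if factor < 1:
        raise ValueError("factor must be >= 1")
    return list(frames)[::factor]


def mixed_speed(frames: Sequence[T], split: int, factor: int) -> List[T]:
    """Varying speed: play the first `split` frames normally, then speed up."""
    frame_list = list(frames)
    return frame_list[:split] + speed_up(frame_list[split:], factor)


clip = list(range(8))  # frame indices 0..7 stand in for decoded frames
print(reverse(clip))            # [7, 6, 5, 4, 3, 2, 1, 0]
print(slow_down(clip[:3], 2))   # [0, 0, 1, 1, 2, 2]
print(speed_up(clip, 2))        # [0, 2, 4, 6]
print(mixed_speed(clip, 4, 2))  # [0, 1, 2, 3, 4, 6]
```

Because each variant keeps the same visual content but changes its order or pacing, a model can only tell them apart by actually tracking how the video unfolds over time.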

In conclusion, the technical report gives a thorough picture of Pegasus-1's strengths and weaknesses. It openly acknowledges the model's limitations and outlines areas for further improvement as its capabilities continue to be refined.

All credit for this research goes to the researchers of this project.

The post Twelve Labs Introduces Pegasus-1: A Multimodal Language Model Specialized in Video Content Understanding and Interaction through Natural Language appeared first on MarkTechPost.
