Reinforcement learning (RL) is sample-inefficient, which hinders its real-world adoption. Standard RL methods struggle in particular where exploration is risky. Offline RL sidesteps this by optimizing policies from pre-collected data, with no online data collection. However, the distribution shift between the target policy and the collected data creates an out-of-sample problem: the policy is evaluated on states and actions the dataset does not cover. This discrepancy causes overestimation bias, which can yield an overly optimistic target policy. Effective offline RL therefore has to address distribution shift.

Prior research addresses this by explicitly or implicitly regularizing the policy toward the behavior distribution. Another approach learns a single-step world model from the offline dataset and uses it to generate trajectories under the target policy, aiming to mitigate distribution shift. However, the world model itself may generalize poorly, potentially exacerbating value overestimation bias in the learned policy.

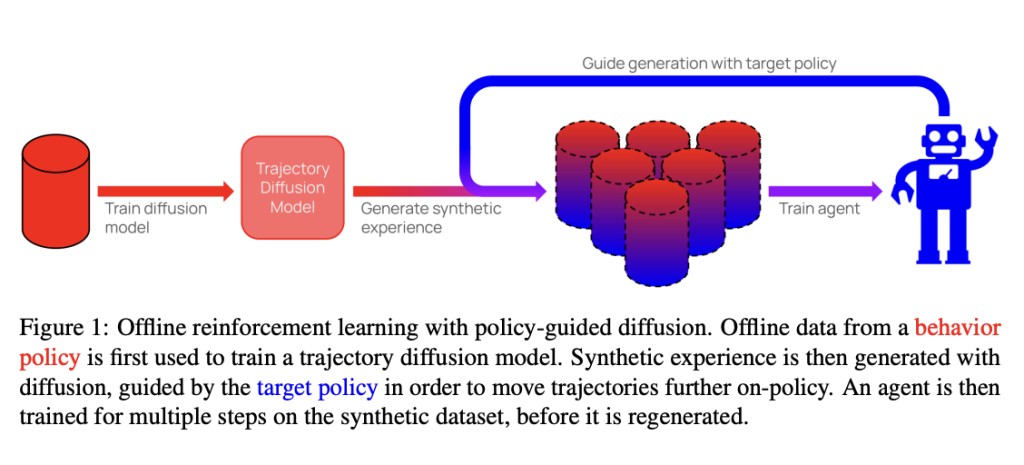

Researchers from Oxford University present policy-guided diffusion (PGD), which addresses compounding error in offline RL by modeling entire trajectories rather than single-step transitions. PGD trains a diffusion model on the offline dataset to generate synthetic trajectories under the behavior policy. To align these trajectories with the target policy, guidance from the target policy shifts the sampling distribution. The result is a behavior-regularized target distribution that reduces divergence from the behavior policy and limits generalization error.

PGD uses a trajectory-level diffusion model trained on the offline dataset to approximate the behavior distribution. Inspired by classifier-guided diffusion, it incorporates guidance from the target policy during the denoising process to steer trajectory sampling toward the target distribution. The resulting behavior-regularized target distribution balances action likelihoods under both policies. PGD excludes behavior policy guidance and relies solely on target policy guidance. Guidance coefficients control the guidance strength, which tunes the level of regularization toward the behavior distribution. PGD also applies a cosine guidance schedule and stabilization techniques to improve guidance stability and reduce dynamics error.
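The guidance step described above can be sketched in a few lines. This is a minimal illustration and not the authors' implementation: it assumes a Gaussian target policy, a stand-in behavior score of zero, and a cosine schedule that ramps the guidance weight from 0 at the noisiest step to 1 at the final step. The function names (`cosine_guidance_weight`, `guided_action_score`) and the exact schedule shape are hypothetical.

```python
import numpy as np


def cosine_guidance_weight(step, num_steps):
    """Hypothetical cosine schedule: 0 at the noisiest step, 1 at the final one."""
    progress = step / max(num_steps - 1, 1)
    return 0.5 * (1.0 - np.cos(np.pi * progress))


def gaussian_policy_action_grad(actions, states, policy_mean_fn, policy_std):
    """Gradient of log pi(a | s) with respect to a, for a Gaussian policy."""
    return -(actions - policy_mean_fn(states)) / policy_std**2


def guided_action_score(behavior_score, actions, states,
                        policy_mean_fn, policy_std, guidance_coef, weight):
    """Behavior score plus scaled target-policy guidance on the actions."""
    guidance = gaussian_policy_action_grad(actions, states, policy_mean_fn, policy_std)
    return behavior_score + guidance_coef * weight * guidance


# Toy usage (assumed values): zero behavior score, policy that prefers a = 1.
states = np.zeros(2)
actions = np.array([0.0, 2.0])
score = guided_action_score(
    behavior_score=np.zeros(2), actions=actions, states=states,
    policy_mean_fn=lambda s: np.ones_like(s), policy_std=1.0,
    guidance_coef=2.0, weight=cosine_guidance_weight(step=9, num_steps=10),
)
print(score)  # guidance pushes both actions toward 1
```

In a real sampler the behavior score would come from the trained trajectory diffusion model, and the guidance term would be computed from the target policy's own log-probability gradient. A larger `guidance_coef` pulls sampled actions harder toward the target policy, at the cost of weaker regularization toward the behavior distribution.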

The experiments yield the following key findings:

Effectiveness of PGD: Agents trained with synthetic experience from PGD outperform those trained on unguided synthetic data or directly on the offline dataset.

Guidance Coefficient Tuning: Tuning the guidance coefficient in PGD enables the sampling of trajectories with high action likelihood across a range of target policies. As the guidance coefficient increases, trajectory likelihood under each target policy increases monotonically, indicating the ability to sample high-probability trajectories with out-of-distribution (OOD) target policies.

Low Dynamics Error: Despite sampling high-likelihood actions from the policy, PGD retains low dynamics error. Compared to an autoregressive world model (PETS), PGD achieves significantly lower error across all target policies, highlighting its robustness to different target policies.

Training Stability: Periodic generation of synthetic data outperforms continuous generation, a gain attributed to training stability, especially when guidance is applied early in training. Both approaches consistently outperform training on real and unguided synthetic data, demonstrating the potential of PGD as an extension to replay and model-based RL methods.
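The guidance-coefficient finding can be illustrated with a toy one-dimensional case. The sketch below is not taken from the paper's experiments: it assumes a Gaussian behavior distribution over a single action, a Gaussian target policy, and a behavior-regularized distribution proportional to the behavior density times the target-policy likelihood raised to the guidance coefficient. All numbers and function names are hypothetical.

```python
import numpy as np


def regularized_gaussian(mu_b, std_b, mu_t, std_t, guidance_coef):
    """Mean and variance of N(mu_b, std_b^2) * N(mu_t, std_t^2)^guidance_coef, normalized."""
    precision = 1.0 / std_b**2 + guidance_coef / std_t**2
    mean = (mu_b / std_b**2 + guidance_coef * mu_t / std_t**2) / precision
    return mean, 1.0 / precision


def expected_target_log_likelihood(mean, var, mu_t, std_t):
    """E[log N(a; mu_t, std_t^2)] for a ~ N(mean, var)."""
    return -0.5 * np.log(2 * np.pi * std_t**2) - ((mean - mu_t) ** 2 + var) / (2 * std_t**2)


# Assumed toy setup: behavior actions centered at 0, target policy centered at 2.
coefs = [0.0, 0.5, 1.0, 2.0, 4.0]
means, log_liks = [], []
for lam in coefs:
    mean, var = regularized_gaussian(0.0, 1.0, 2.0, 0.5, lam)
    means.append(mean)
    log_liks.append(expected_target_log_likelihood(mean, var, 2.0, 0.5))
    print(f"coef={lam:.1f}  mean={mean:.3f}  E[log pi_target]={log_liks[-1]:.3f}")
```

In this toy setting the sampled action mean moves from the behavior mean toward the target mean as the coefficient grows, and the expected log-likelihood under the target policy rises with it, mirroring the monotonic trend reported above.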

To conclude, the Oxford researchers introduced PGD, a controllable method for synthetic trajectory generation in offline RL. By modeling trajectories directly and applying policy guidance, PGD performs competitively with autoregressive methods like PETS while achieving lower dynamics error. The approach consistently improves downstream agent performance across diverse environments and behavior policies. By addressing out-of-sample issues, PGD paves the way for less conservative offline RL algorithms.

Check out the Paper. All credit for this research goes to the researchers of this project.

The post Researchers at Oxford Presented Policy-Guided Diffusion: A Machine Learning Method for Controllable Generation of Synthetic Trajectories in Offline Reinforcement Learning RL appeared first on MarkTechPost.
