For too long, natural language processing has been dominated by models built primarily for English, leaving a significant portion of the global population underrepresented. A new development challenges this status quo and points toward a more inclusive era of language models: the Chinese Tiny LLM (CT-LLM).

Imagine a world where language barriers are no longer an obstacle to accessing cutting-edge AI technologies. That is the goal the researchers behind CT-LLM set out to pursue by prioritizing Chinese, one of the most widely spoken languages in the world. This 2-billion-parameter model departs from the conventional approach of training language models primarily on English datasets and then adapting them to other languages.

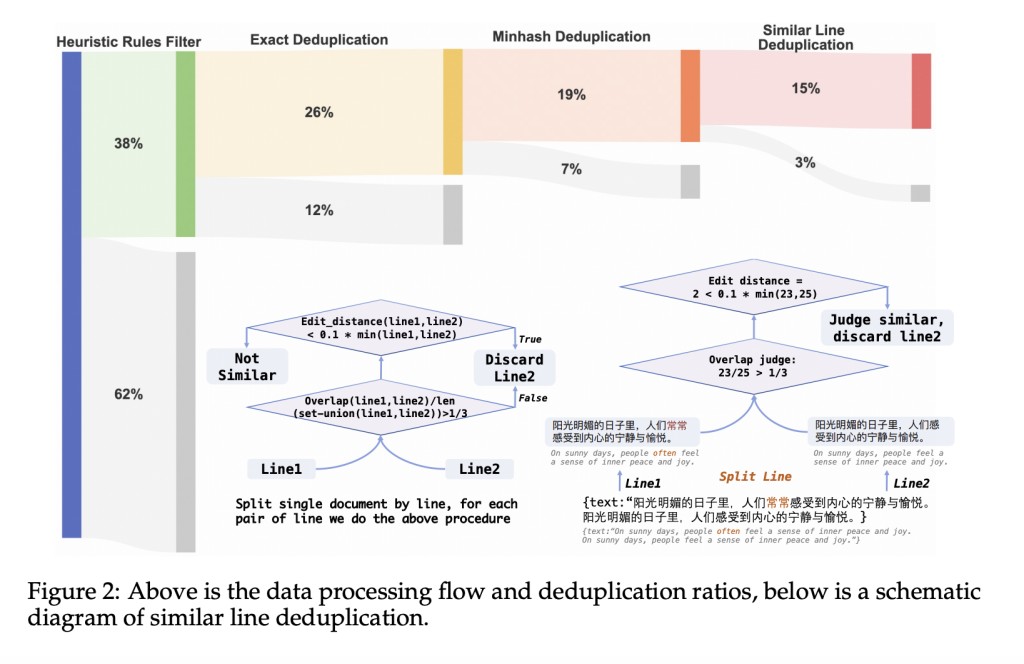

Instead, CT-LLM was pre-trained on roughly 1,200 billion tokens, with a deliberate emphasis on Chinese data. The pretraining corpus comprises 840.48 billion Chinese tokens, 314.88 billion English tokens, and 99.3 billion code tokens; these figures sum to about 1,254.66 billion, so Chinese makes up roughly two thirds of the corpus. This composition gives the model strong proficiency in understanding and processing Chinese, while the English and code data support its adaptability beyond Chinese.
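The proportions behind those figures are easy to check. The short Python sketch below uses only the three token counts quoted above; the variable and function names are illustrative and do not come from the CT-LLM codebase.

```python
# Token counts in billions, as quoted in the article.
corpus_billion_tokens = {
    "chinese": 840.48,
    "english": 314.88,
    "code": 99.3,
}


def corpus_shares(counts):
    """Return each component's fraction of the total token count."""
    total = sum(counts.values())
    return {name: count / total for name, count in counts.items()}


total = sum(corpus_billion_tokens.values())
shares = corpus_shares(corpus_billion_tokens)

print(f"total: {total:.2f}B tokens")
for name, share in shares.items():
    print(f"{name:>8}: {share:.1%}")
```

Run as written, this reports a total of about 1,254.66 billion tokens, with Chinese at roughly 67%, English at roughly 25%, and code at roughly 8%.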

CT-LLM's training does not stop at pretraining. Supervised fine-tuning (SFT) strengthens the model's performance on Chinese language tasks while also improving its ability to comprehend and generate English text. The researchers additionally apply preference optimization, specifically Direct Preference Optimization (DPO), to align CT-LLM with human preferences so that its outputs are harmless and helpful as well as accurate.
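The article does not say how the DPO stage was implemented for CT-LLM. As a rough illustration of the general technique only, the sketch below computes the standard DPO loss for a single preference pair from response log-probabilities. The function name, the argument names, and the `beta=0.1` default are assumptions made for this example, not details from the CT-LLM paper.

```python
import math


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Standard DPO loss for one (chosen, rejected) response pair.

    Each argument is the summed log-probability of a full response under
    either the policy being trained or the frozen reference model.
    """
    # How much more the policy favors each response than the reference does.
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_logratio - rejected_logratio)

    # -log(sigmoid(margin)), written in a numerically stable form.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical log-probabilities: the policy prefers the chosen response
# more strongly than the reference model does, so the loss is below log(2).
example = dpo_loss(-10.0, -12.0, -11.0, -11.0)
print(example < math.log(2))
```

The loss falls as the policy raises the likelihood of the preferred response relative to the rejected one, measured against the reference model, which is what ties the tuned model to human preference data without a separate reward model.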

To test CT-LLM's capabilities, the researchers developed the Chinese Hard Case Benchmark (CHC-Bench), a multidisciplinary suite of challenging problems designed to assess instruction understanding and instruction following in Chinese. CT-LLM performed well on this benchmark, particularly on tasks related to social understanding and writing, which points to a strong grasp of Chinese cultural contexts.

The development of CT-LLM is a significant step toward inclusive language models that reflect the world's linguistic diversity. By prioritizing Chinese from the outset, the model challenges the prevailing English-centric paradigm and paves the way for future NLP work that serves a broader range of languages and cultures. With its strong performance and open-sourced training process, CT-LLM points toward a more equitable and representative future for natural language processing, one in which language barriers are no longer an impediment to accessing cutting-edge AI technologies.

Check out the Paper and HF Page. All credit for this research goes to the researchers of this project.

The post CT-LLM: A 2B Tiny LLM that Illustrates a Pivotal Shift Towards Prioritizing the Chinese Language in Developing LLMs appeared first on MarkTechPost.
