In today’s data-driven world, valuable insights are often buried in unstructured text—be it clinical notes, lengthy legal contracts, or customer…

Machine Learning

In this tutorial, we explore how to integrate Microsoft AutoGen with Google’s free Gemini API using LiteLLM, enabling us to…

LLMs are deployed through conversational interfaces that present helpful, harmless, and honest assistant personas. However, they fail to maintain consistent…

OpenAI has just sent seismic waves through the AI world: for the first time since GPT-2 hit the scene in…

In this tutorial, we dive into building an advanced AI agent system based on the SAGE (Self-Adaptive Goal-oriented Execution) framework,…

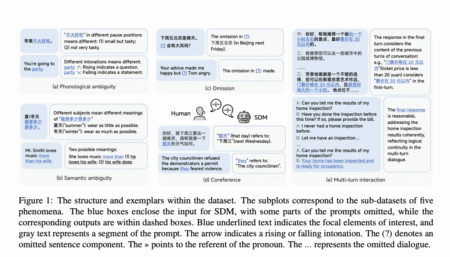

Spoken Dialogue Models (SDMs) are at the frontier of conversational AI, enabling seamless spoken interactions between humans and machines. Yet,…

The Model Context Protocol (MCP) has rapidly become a foundational standard for connecting large language models (LLMs) and other AI…

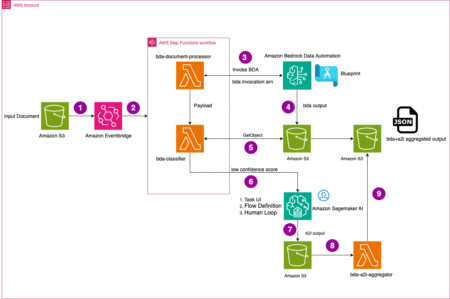

Organizations across industries face challenges with high volumes of multi-page documents that require intelligent processing to extract accurate information. Although…

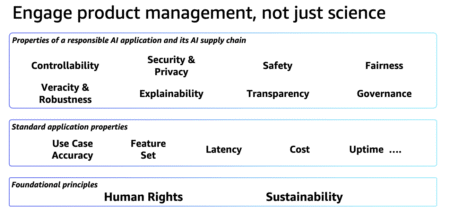

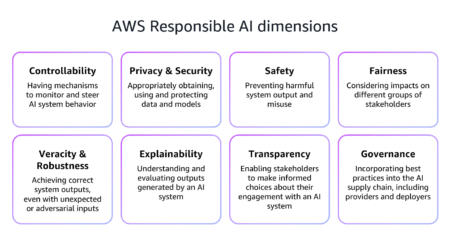

In Part 1 of our series, we explored the foundational concepts of responsible AI in the payments industry. In this…

The payments industry stands at the forefront of digital transformation, with artificial intelligence (AI) rapidly becoming a cornerstone technology that…

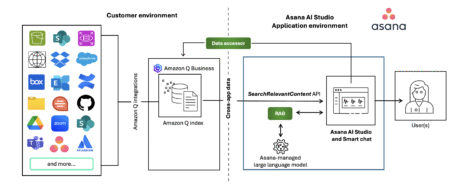

Organizations today face a critical challenge: managing an ever-increasing volume of tasks and information across multiple systems. Although traditional task…

While the last decade has witnessed significant advancements in Automatic Speech Recognition (ASR) systems, the performance of these systems for individuals…

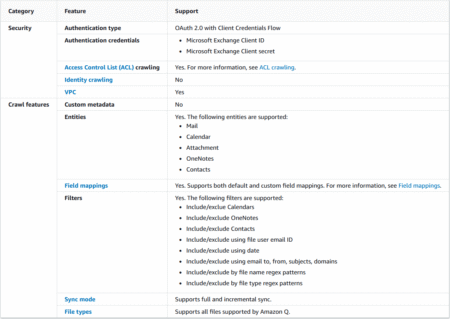

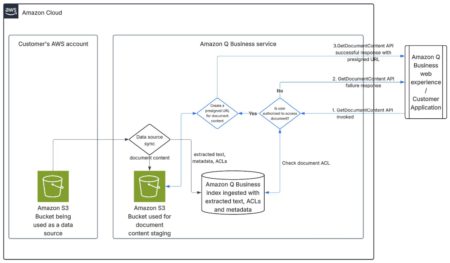

Amazon Q Business is a fully managed, generative AI-powered assistant that helps enterprises unlock the value of their data and…

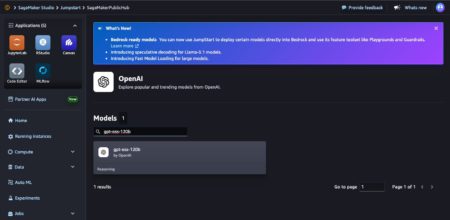

Today, we are excited to announce the availability of OpenAI’s new open-weight GPT OSS models, gpt-oss-120b and gpt-oss-20b,…

Organizations need user-friendly ways to build AI assistants that can reference enterprise documents while maintaining document security. This post shows…

Ambisonics is a spatial audio format describing a sound field. First-order Ambisonics (FOA) is a popular format comprising only four…

In this tutorial, we’ll explore a range of SHAP-IQ visualizations that provide insights into how a machine learning model arrives…

LLMs have shown notable improvements in mathematical reasoning by extending through natural language, resulting in performance gains on benchmarks such…

Building an intelligent agent goes far beyond clever prompt engineering for language models. To create real-world, autonomous AI systems that…

The tides have turned in the enterprise AI landscape. According to Menlo Ventures’ 2025 “Mid-Year LLM Market Update,” Anthropic’s Claude…