AI image generation — which relies on neural networks to create new images from a variety of inputs, including text prompts — is projected to become a billion-dollar industry by the end of this decade. Even with today’s technology, if you wanted to make a fanciful picture of, say, a friend planting a flag on Mars or heedlessly flying into a black hole, it could take less than a second. However, before they can perform tasks like that, image generators are commonly trained on massive datasets containing millions of images that are often paired with associated text. Training these generative models can be an arduous chore that takes weeks or months, consuming vast computational resources in the process.

But what if it were possible to generate images through AI methods without using a generator at all? That real possibility, along with other intriguing ideas, was described in a research paper presented at the International Conference on Machine Learning (ICML 2025), which was held in Vancouver, British Columbia, earlier this summer. The paper, describing novel techniques for manipulating and generating images, was written by Lukas Lao Beyer, a graduate student researcher in MIT’s Laboratory for Information and Decision Systems (LIDS); Tianhong Li, a postdoc at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL); Xinlei Chen of Facebook AI Research; Sertac Karaman, an MIT professor of aeronautics and astronautics and the director of LIDS; and Kaiming He, an MIT associate professor of electrical engineering and computer science.

This group effort had its origins in a class project for a graduate seminar on deep generative models that Lao Beyer took last fall. In conversations during the semester, it became apparent to both Lao Beyer and He, who taught the seminar, that this research had real potential, which went far beyond the confines of a typical homework assignment. Other collaborators were soon brought into the endeavor.

The starting point for Lao Beyer’s inquiry was a June 2024 paper, written by researchers from the Technical University of Munich and the Chinese company ByteDance, which introduced a new way of representing visual information called a one-dimensional tokenizer. With this device, which is also a kind of neural network, a 256×256-pixel image can be translated into a sequence of just 32 numbers, called tokens. “I wanted to understand how such a high level of compression could be achieved, and what the tokens themselves actually represented,” says Lao Beyer.

The previous generation of tokenizers would typically break up the same image into an array of 16×16 tokens, with each token encapsulating information, in highly condensed form, that corresponds to a specific portion of the original image. The new 1D tokenizers can encode an image more efficiently, using far fewer tokens overall, and these tokens are able to capture information about the entire image, not just a single quadrant. Each of these tokens, moreover, is a 12-digit number consisting of 1s and 0s, allowing for 2^12 (4,096, or about 4,000) possibilities altogether. “It’s like a vocabulary of 4,000 words that makes up an abstract, hidden language spoken by the computer,” He explains. “It’s not like a human language, but we can still try to find out what it means.”
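
The token arithmetic in the paragraph above can be checked in a few lines of Python. This is only a worked calculation using the figures quoted in the article (12-digit binary tokens, 32 tokens per image, a 16×16 grid for older tokenizers). The variable names are illustrative, and no real tokenizer is involved.

```python
# Figures taken from the article; nothing here touches a real tokenizer.
BITS_PER_TOKEN = 12   # each 1D token is a 12-digit binary number
TOKENS_1D = 32        # tokens per 256x256 image with the 1D tokenizer
GRID_SIDE_2D = 16     # earlier tokenizers: a 16x16 grid of tokens

vocabulary_size = 2 ** BITS_PER_TOKEN            # distinct values one token can take
tokens_2d = GRID_SIDE_2D * GRID_SIDE_2D          # tokens in the older grid layout
bits_per_image_1d = TOKENS_1D * BITS_PER_TOKEN   # total bits describing one image
token_reduction = tokens_2d // TOKENS_1D         # how many times fewer tokens

print(vocabulary_size)     # 4096, the "about 4,000" word vocabulary
print(tokens_2d)           # 256
print(bits_per_image_1d)   # 384
print(token_reduction)     # 8
```

In other words, under these figures a whole image is described by 384 bits, using one-eighth as many tokens as the older grid layout.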

That’s exactly what Lao Beyer had initially set out to explore — work that provided the seed for the ICML 2025 paper. The approach he took was pretty straightforward. If you want to find out what a particular token does, Lao Beyer says, “you can just take it out, swap in some random value, and see if there is a recognizable change in the output.” Replacing one token, he found, changes the image quality, turning a low-resolution image into a high-resolution image or vice versa. Another token affected the blurriness in the background, while another still influenced the brightness. He also found a token that’s related to the “pose,” meaning that, in the image of a robin, for instance, the bird’s head might shift from right to left.
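
The swap-and-observe procedure Lao Beyer describes can be sketched in Python. This is a minimal illustration, not the researchers' code: `toy_decode` is a hypothetical stand-in for a real detokenizer (which would be a neural network producing an actual image), and `probe_token` is a made-up helper name. Only the 32-token, 12-bit setup comes from the article.

```python
import random

VOCAB_SIZE = 2 ** 12   # 12-bit tokens, as described in the article
NUM_TOKENS = 32        # tokens per image


def toy_decode(tokens):
    """Hypothetical stand-in for a detokenizer: maps tokens to 8 'pixel' values.

    Every output value depends on every token, loosely mimicking how 1D tokens
    carry information about the whole image. A real decoder is a neural network.
    """
    pixels = []
    for p in range(8):
        total = sum((t * (i + 1) * (p + 1)) % 251 for i, t in enumerate(tokens))
        pixels.append(total % 256)
    return pixels


def probe_token(tokens, position, rng):
    """Swap one token for a different random value; return the edit and its effect."""
    replacement = rng.randrange(VOCAB_SIZE)
    while replacement == tokens[position]:
        replacement = rng.randrange(VOCAB_SIZE)
    edited = list(tokens)
    edited[position] = replacement
    before, after = toy_decode(tokens), toy_decode(edited)
    # Per-pixel absolute change caused by editing this single token.
    return edited, [abs(a - b) for a, b in zip(after, before)]


rng = random.Random(0)
tokens = [rng.randrange(VOCAB_SIZE) for _ in range(NUM_TOKENS)]
edited, change = probe_token(tokens, position=5, rng=rng)
print(change)
```

With a real tokenizer, the numeric difference would be replaced by looking at the two reconstructed images side by side, which is how changes such as sharpness, background blur, or pose would be spotted.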

“This was a never-before-seen result, as no one had observed visually identifiable changes from manipulating tokens,” Lao Beyer says. The finding raised the possibility of a new approach to editing images. And the MIT group has shown, in fact, how this process can be streamlined and automated, so that tokens don’t have to be modified by hand, one at a time.

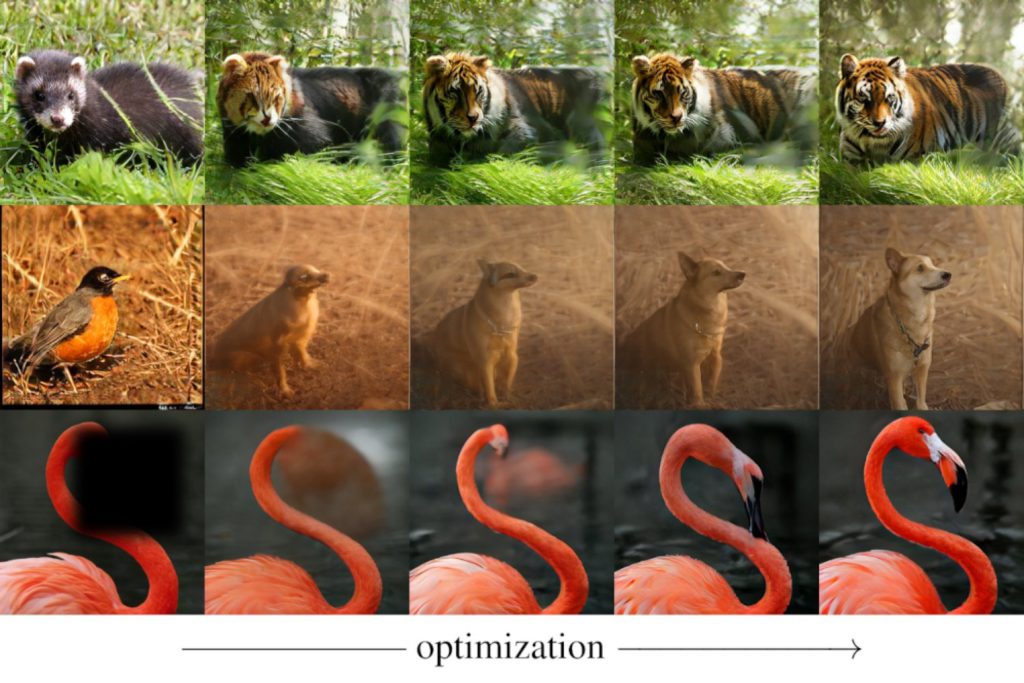

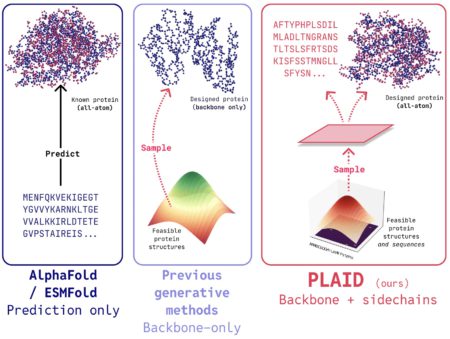

He and his colleagues achieved an even more consequential result involving image generation. A system capable of generating images normally requires a tokenizer, which compresses and encodes visual data, along with a generator that can combine and arrange these compact representations in order to create novel images. The MIT researchers found a way to create images without using a generator at all. Their new approach makes use of a 1D tokenizer and a so-called detokenizer (also known as a decoder), which can reconstruct an image from a string of tokens. However, with guidance provided by an off-the-shelf neural network called CLIP — which cannot generate images on its own, but can measure how well a given image matches a certain text prompt — the team was able to convert an image of a red panda, for example, into a tiger. In addition, they could create images of a tiger, or any other desired form, starting completely from scratch — from a situation in which all the tokens are initially assigned random values (and then iteratively tweaked so that the reconstructed image increasingly matches the desired text prompt).
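
The loop described above (start from random tokens, then tweak them so the result scores better against the prompt) can be sketched as follows. Two loud assumptions: the article does not say which update rule the team used, so simple random search is swapped in here, and `toy_score` is a hypothetical stand-in for "decode the tokens and ask CLIP how well the image matches the text." Nothing below calls a real tokenizer or CLIP.

```python
import random

VOCAB_SIZE = 2 ** 12   # 12-bit tokens, as described in the article
NUM_TOKENS = 32        # tokens per image


def toy_score(tokens, target):
    """Hypothetical stand-in for a CLIP match score (higher is better).

    It rewards token sequences close to a hidden target sequence. A real
    setup would decode the tokens into an image and score it against text.
    """
    return -sum(abs(t - g) for t, g in zip(tokens, target))


def optimize_tokens(score_fn, steps, rng):
    """Random-search hill climbing: keep a single-token change if it does not hurt."""
    tokens = [rng.randrange(VOCAB_SIZE) for _ in range(NUM_TOKENS)]  # from scratch
    best = score_fn(tokens)
    history = [best]
    for _ in range(steps):
        candidate = list(tokens)
        candidate[rng.randrange(NUM_TOKENS)] = rng.randrange(VOCAB_SIZE)
        score = score_fn(candidate)
        if score >= best:
            tokens, best = candidate, score
        history.append(best)
    return tokens, history


rng = random.Random(0)
target = [rng.randrange(VOCAB_SIZE) for _ in range(NUM_TOKENS)]
tokens, history = optimize_tokens(lambda t: toy_score(t, target), 2000, rng)
print(history[0], history[-1])
```

The point of the sketch is structural: no generator is trained at any stage, and only the 32 tokens change while the scoring function stays fixed. Starting from the tokens of an existing image instead of random ones would correspond to the editing case, such as turning a red panda into a tiger.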

The group demonstrated that with this same setup — relying on a tokenizer and detokenizer, but no generator — they could also do “inpainting,” which means filling in parts of images that had somehow been blotted out. Avoiding the use of a generator for certain tasks could lead to a significant reduction in computational costs because generators, as mentioned, normally require extensive training.

What might seem odd about this team’s contributions, He explains, “is that we didn’t invent anything new. We didn’t invent a 1D tokenizer, and we didn’t invent the CLIP model, either. But we did discover that new capabilities can arise when you put all these pieces together.”

“This work redefines the role of tokenizers,” comments Saining Xie, a computer scientist at New York University. “It shows that image tokenizers — tools usually used just to compress images — can actually do a lot more. The fact that a simple (but highly compressed) 1D tokenizer can handle tasks like inpainting or text-guided editing, without needing to train a full-blown generative model, is pretty surprising.”

Zhuang Liu of Princeton University agrees, saying that the work of the MIT group “shows that we can generate and manipulate the images in a way that is much easier than we previously thought. Basically, it demonstrates that image generation can be a byproduct of a very effective image compressor, potentially reducing the cost of generating images several-fold.”

There could be many applications outside the field of computer vision, Karaman suggests. “For instance, we could consider tokenizing the actions of robots or self-driving cars in the same way, which may rapidly broaden the impact of this work.”

Lao Beyer is thinking along similar lines, noting that the extreme amount of compression afforded by 1D tokenizers allows you to do “some amazing things,” which could be applied to other fields. For example, in the area of self-driving cars, which is one of his research interests, the tokens could represent, instead of images, the different routes that a vehicle might take.

Xie is also intrigued by the applications that may come from these innovative ideas. “There are some really cool use cases this could unlock,” he says.
