For all their impressive capabilities, large language models (LLMs) often fall short when given challenging new tasks that require complex reasoning skills.

While an accounting firm’s LLM might excel at summarizing financial reports, that same model could fail unexpectedly if tasked with predicting market trends or identifying fraudulent transactions.

To make LLMs more adaptable, MIT researchers investigated how a certain training technique can be strategically deployed to boost a model’s performance on unfamiliar, difficult problems.

They show that test-time training, a method that involves temporarily updating some of a model’s inner workings during deployment, can lead to as much as a sixfold improvement in accuracy. The researchers developed a framework for implementing a test-time training strategy that uses examples of the new task to maximize these gains.

Their work could improve a model’s flexibility, enabling an off-the-shelf LLM to adapt to complex tasks that require planning or abstraction. This could lead to LLMs that would be more accurate in many applications that require logical deduction, from medical diagnostics to supply chain management.

“Genuine learning — what we did here with test-time training — is something these models can’t do on their own after they are shipped. They can’t gain new skills or get better at a task. But we have shown that if you push the model a little bit to do actual learning, you see that huge improvements in performance can happen,” says Ekin Akyürek PhD ’25, lead author of the study.

Akyürek is joined on the paper by graduate students Mehul Damani, Linlu Qiu, Han Guo, and Jyothish Pari; undergraduate Adam Zweiger; and senior authors Yoon Kim, an assistant professor of Electrical Engineering and Computer Science (EECS) and a member of the Computer Science and Artificial Intelligence Laboratory (CSAIL); and Jacob Andreas, an associate professor in EECS and a member of CSAIL. The research will be presented at the International Conference on Machine Learning.

Tackling hard domains

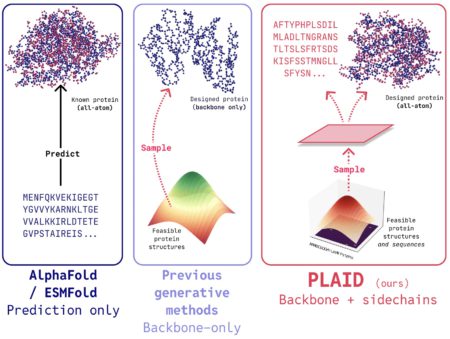

LLM users often try to improve the performance of their model on a new task using a technique called in-context learning. They feed the model a few examples of the new task as text prompts, which guide the model’s outputs.
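To make the idea concrete, here is a minimal sketch of how a few worked examples might be packed into a single text prompt. The `Input:`/`Output:` layout and the function name `build_few_shot_prompt` are illustrative assumptions, not a format described by the researchers.

```python
def build_few_shot_prompt(examples, query):
    """Join solved (problem, solution) pairs and one unsolved query into a prompt.

    The "Input:/Output:" template is a hypothetical choice for illustration.
    """
    parts = [f"Input: {problem}\nOutput: {solution}" for problem, solution in examples]
    # The final block leaves the output blank so the model fills it in.
    parts.append(f"Input: {query}\nOutput:")
    return "\n\n".join(parts)


# Two solved examples of a made-up "next number" task, plus a new query.
examples = [("2 4 6", "8"), ("1 3 5", "7")]
prompt = build_few_shot_prompt(examples, "10 12 14")
print(prompt)
```

Nothing inside the model changes here: the examples only shape the text it is asked to continue.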

But in-context learning doesn’t always work for problems that require logic and reasoning.

The MIT researchers investigated how test-time training can be used in conjunction with in-context learning to boost performance on these challenging tasks. Test-time training involves updating some model parameters — the internal variables it uses to make predictions — using a small amount of new data specific to the task at hand.

The researchers also studied which design choices maximize the performance improvements one can coax out of a general-purpose LLM.

“We find that test-time training is a much stronger form of learning. While simply providing examples can modestly boost accuracy, actually updating the model with those examples can lead to significantly better performance, particularly in challenging domains,” Damani says.

In-context learning requires a small set of task examples, including problems and their solutions. The researchers use these examples to create a task-specific dataset needed for test-time training.

To expand the size of this dataset, they create new inputs by slightly changing the problems and solutions in the examples, such as by horizontally flipping some input data. They find that training the model on the outputs of this new dataset leads to the best performance.
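The flipping step can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than the researchers’ code: it assumes each example is a pair of small grids stored as lists of rows, and the helper names `flip_horizontal` and `augment` are hypothetical.

```python
def flip_horizontal(grid):
    """Mirror a grid (a list of rows) left to right."""
    return [list(reversed(row)) for row in grid]


def augment(examples):
    """Return the original (problem, solution) pairs plus flipped copies.

    The same flip is applied to the problem and its solution so that each
    new pair is still a consistent example of the task.
    """
    augmented = list(examples)
    for problem, solution in examples:
        augmented.append((flip_horizontal(problem), flip_horizontal(solution)))
    return augmented


# One hypothetical example: "fill in the row that contains a 1".
examples = [([[1, 0], [0, 0]], [[1, 1], [0, 0]])]
dataset = augment(examples)
print(len(dataset))  # one original pair plus one flipped pair
```

Other simple transformations could be added in the same way, as long as each one is applied to the problem and the solution together.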

In addition, the researchers only update a small number of model parameters using a technique called low-rank adaptation, which improves the efficiency of the test-time training process.
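As a rough illustration of why this saves work, the sketch below shows the general idea behind low-rank adaptation: the original weight matrix `W` stays frozen, and only two much smaller matrices, `A` and `B`, would be trained. The class name `LoRALinear`, the toy sizes, and the pure-Python matrix code are assumptions made for this example, not the researchers’ implementation.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


class LoRALinear:
    """Toy linear layer computing W x + B (A x), with W frozen."""

    def __init__(self, W, rank, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(W), len(W[0])
        self.W = W  # frozen pretrained weights, d_out x d_in
        # A starts small and random, B starts at zero, so the layer
        # initially behaves exactly like the original one.
        self.A = [[rng.gauss(0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x):
        base = matvec(self.W, x)
        delta = matvec(self.B, matvec(self.A, x))
        return [b + d for b, d in zip(base, delta)]

    def trainable_params(self):
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])


W = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]]
layer = LoRALinear(W, rank=1)
full_params = len(W) * len(W[0])
print(layer.trainable_params(), "trainable values instead of", full_params)
```

In this toy case the saving is small, but the trainable count grows with the sum of the matrix dimensions rather than their product, so the gap widens quickly for the large matrices inside an LLM.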

“This is important because our method needs to be efficient if it is going to be deployed in the real world. We find that you can get huge improvements in accuracy with a very small amount of parameter training,” Akyürek says.

Developing new skills

Streamlining the process is key, since test-time training is employed on a per-instance basis, meaning a user would need to do this for each individual task. The updates to the model are only temporary, and the model reverts to its original form after making a prediction.

A model that usually takes less than a minute to answer a query might take five or 10 minutes to provide an answer with test-time training, Akyürek adds.

“We wouldn’t want to do this for all user queries, but it is useful if you have a very hard task that you want the model to solve well. There also might be tasks that are too challenging for an LLM to solve without this method,” he says.

The researchers tested their approach on two benchmark datasets of extremely complex problems, such as IQ puzzles. It boosted accuracy as much as sixfold over techniques that use only in-context learning.

Tasks that involved structured patterns or completely unfamiliar types of data showed the largest performance improvements.

“For simpler tasks, in-context learning might be OK. But updating the parameters themselves might develop a new skill in the model,” Damani says.

In the future, the researchers want to use these insights to develop models that learn continually.

The long-term goal is an LLM that, given a query, can automatically determine if it needs to use test-time training to update parameters or if it can solve the task using in-context learning, and then implement the best test-time training strategy without the need for human intervention.

This work is supported, in part, by the MIT-IBM Watson AI Lab and the National Science Foundation.
