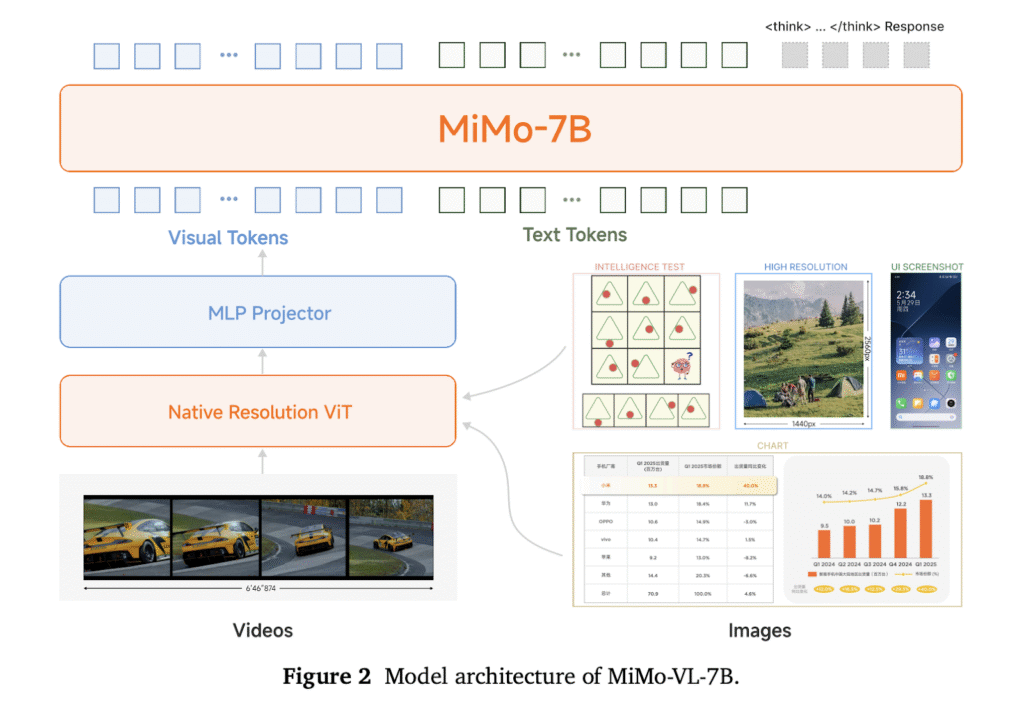

Vision-language models (VLMs) have become foundational components for multimodal AI systems, enabling autonomous agents to understand visual environments, reason over multimodal content, and interact with both digital and physical worlds. The significance of these capabilities has led to extensive research across architectural designs and training methodologies, resulting in rapid advancements in the field. Researchers from Xiaomi introduce MiMo-VL-7B, a compact yet powerful VLM comprising three key components: a native-resolution Vision Transformer encoder that preserves fine-grained visual details, a Multi-Layer Perceptron projector for efficient cross-modal alignment, and the MiMo-7B language model optimized for complex reasoning tasks.

MiMo-VL-7B is trained in two sequential phases. The first is a four-stage pre-training phase, consisting of projector warmup, vision-language alignment, general multimodal pre-training, and long-context supervised fine-tuning, which consumes 2.4 trillion tokens from curated high-quality datasets. This yields the MiMo-VL-7B-SFT model. The second is the post-training phase, which introduces Mixed On-policy Reinforcement Learning (MORL), integrating diverse reward signals spanning perception accuracy, visual grounding precision, logical reasoning capabilities, and human preferences. This yields the MiMo-VL-7B-RL model. Key findings are that incorporating high-quality, broad-coverage reasoning data starting in the pre-training stage enhances model performance, while achieving stable, simultaneous improvements across the different capabilities during mixed RL remains challenging.

The MiMo-VL-7B architecture contains three components: (a) a Vision Transformer (ViT) that encodes visual inputs such as images and videos, (b) a projector that maps the visual encodings into a latent space aligned with the LLM, and (c) the LLM itself, which performs textual understanding and reasoning. Qwen2.5-ViT is adopted as the visual encoder to support native-resolution inputs. The LLM backbone is initialized from MiMo-7B-Base to leverage its strong reasoning capability, and the projector is a randomly initialized Multi-Layer Perceptron (MLP). The pre-training dataset comprises 2.4 trillion tokens of diverse multimodal data, including image captions, interleaved data, Optical Character Recognition (OCR) data, grounding data, video content, GUI interactions, reasoning examples, and text-only sequences.
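To make the data flow between the three components concrete, here is a minimal pure-Python sketch of the projector step. It is an illustration under stated assumptions, not the released implementation: the two-layer shape, the GELU activation, the toy dimensions, and the names `MLPProjector` and `build_llm_inputs` are all hypothetical, and the ViT and LLM are represented only by the vectors they would produce or consume.

```python
import math
import random


def gelu(x):
    # Tanh approximation of GELU (the activation choice is an assumption).
    return 0.5 * x * (1.0 + math.tanh(math.sqrt(2.0 / math.pi) * (x + 0.044715 * x ** 3)))


def linear(x, w):
    # x: vector of length d_in; w: d_in x d_out matrix stored as nested lists.
    return [sum(xi * w[i][j] for i, xi in enumerate(x)) for j in range(len(w[0]))]


class MLPProjector:
    """Hypothetical two-layer MLP mapping ViT features into the LLM embedding space."""

    def __init__(self, vision_dim, hidden_dim, llm_dim, seed=0):
        rng = random.Random(seed)
        # Randomly initialized weights, mirroring the "randomly initialized" projector.
        self.w1 = [[rng.gauss(0.0, 0.02) for _ in range(hidden_dim)] for _ in range(vision_dim)]
        self.w2 = [[rng.gauss(0.0, 0.02) for _ in range(llm_dim)] for _ in range(hidden_dim)]

    def __call__(self, patch_embeddings):
        # Project each visual token independently.
        return [linear([gelu(h) for h in linear(p, self.w1)], self.w2) for p in patch_embeddings]


def build_llm_inputs(visual_tokens, text_embeddings):
    # The LLM consumes projected visual tokens and text embeddings as one sequence.
    return list(visual_tokens) + list(text_embeddings)


# Toy usage: 3 visual patches of width 4, projected to an LLM width of 6.
projector = MLPProjector(vision_dim=4, hidden_dim=8, llm_dim=6)
visual = projector([[0.1, 0.2, 0.3, 0.4]] * 3)
sequence = build_llm_inputs(visual, [[0.0] * 6] * 2)
print(len(sequence), len(sequence[0]))
```

Because the number of visual tokens simply follows the number of patches, a native-resolution encoder that emits more patches for larger images yields a longer input sequence without any change to the projector.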

The post-training phase further improves MiMo-VL-7B on challenging reasoning tasks and aligns it with human preferences through the MORL framework, which integrates Reinforcement Learning with Verifiable Rewards (RLVR) and Reinforcement Learning from Human Feedback (RLHF). RLVR uses rule-based reward functions for continuous self-improvement, so multiple verifiable reasoning and perception tasks are designed whose final answers can be validated precisely with predefined rules. RLHF is added alongside these verifiable rewards to address human preference alignment and mitigate undesirable behaviors. MORL then optimizes the RLVR and RLHF objectives simultaneously.
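The distinction between rule-based and preference-based rewards can be sketched in a few lines. This is an illustrative sketch only: the `\boxed{...}` answer convention, the bounding-box response format, the task labels, and the `reward_model` callable are assumptions made for the example and are not taken from the paper.

```python
import re


def extract_final_answer(response):
    # Assumed convention: the final answer appears inside \boxed{...}.
    matches = re.findall(r"\\boxed\{([^{}]*)\}", response)
    return matches[-1].strip() if matches else None


def answer_reward(response, reference):
    # Rule-based check: 1.0 only if the extracted answer matches the reference exactly.
    answer = extract_final_answer(response)
    return 1.0 if answer is not None and answer == reference.strip() else 0.0


def iou(a, b):
    # Boxes are (x1, y1, x2, y2); returns intersection over union.
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def grounding_reward(response, gold_box):
    # Assumed format: the response contains four numbers for the predicted box.
    nums = re.findall(r"-?\d+(?:\.\d+)?", response)
    if len(nums) < 4:
        return 0.0
    return iou(tuple(float(n) for n in nums[:4]), gold_box)


def mixed_reward(sample, response, reward_model):
    # Route each sample to a verifiable rule or to a learned preference score.
    kind = sample["kind"]
    if kind == "reasoning":
        return answer_reward(response, sample["reference"])
    if kind == "grounding":
        return grounding_reward(response, sample["box"])
    if kind == "preference":
        # reward_model is a hypothetical callable: (prompt, response) -> float.
        return reward_model(sample["prompt"], response)
    raise ValueError(f"unknown task kind: {kind}")


print(mixed_reward({"kind": "reasoning", "reference": "42"}, "Thus \\boxed{42}", None))
print(mixed_reward({"kind": "grounding", "box": (1, 1, 3, 3)}, "[0, 0, 2, 2]", None))
```

Routing every sample through a single reward interface is one simple way to train on verifiable and preference-based tasks in the same batch; how the actual system schedules and scales these signals is not specified here.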

Comprehensive evaluation across 50 tasks demonstrates MiMo-VL-7B’s state-of-the-art performance among open-source models. In general capabilities, the models achieve exceptional results on general vision-language tasks, with MiMo-VL-7B-SFT and MiMo-VL-7B-RL obtaining 64.6% and 66.7% on MMMU (val), respectively, outperforming larger models like Gemma 3 27B. For document understanding, MiMo-VL-7B-RL excels with 56.5% on CharXiv (RQ), significantly exceeding Qwen2.5-VL by 14.0 points and InternVL3 by 18.9 points. In multimodal reasoning tasks, both the RL and SFT models substantially outperform open-source baselines, with MiMo-VL-7B-SFT even surpassing much larger models, including Qwen2.5-VL-72B and QVQ-72B-Preview. The RL variant achieves further improvements, boosting MathVision accuracy from 57.9% to 60.4%.
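The margins quoted above can be unpacked with simple arithmetic. The short snippet below derives the baseline scores implied by the stated gaps and the gains from the SFT to the RL model; the baseline values are computed from the numbers in this article, not quoted from the paper's tables.

```python
# Scores and margins as stated in the text (percent / percentage points).
charxiv_rl = 56.5
margins = {"Qwen2.5-VL": 14.0, "InternVL3": 18.9}

# Implied baseline score = RL score minus the stated margin.
implied_baselines = {name: round(charxiv_rl - gap, 1) for name, gap in margins.items()}

# Gains of the RL model over the SFT model on two benchmarks.
mmmu_gain = round(66.7 - 64.6, 1)
mathvision_gain = round(60.4 - 57.9, 1)

print(implied_baselines)   # implied CharXiv scores of the two baselines
print(mmmu_gain, mathvision_gain)
```

Read this way, the RL stage adds roughly two to three points on both MMMU and MathVision, while the gap to the compared open-source models on CharXiv is considerably larger.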

MiMo-VL-7B demonstrates exceptional GUI understanding and grounding capabilities, with the RL model outperforming all compared general VLMs and achieving comparable or superior performance to GUI-specialized models on challenging benchmarks like Screenspot-Pro and OSWorld-G. In user-preference evaluation, the RL model achieves the highest Elo rating among all evaluated open-source VLMs, ranking first across models spanning 7B to 72B parameters and closely approaching proprietary models like Claude 3.7 Sonnet. MORL raises the SFT model's Elo rating by more than 22 points, validating the effectiveness of the training methodology and highlighting the competitive capability of this general-purpose VLM approach.
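For a sense of scale, a rating gap can be translated into an expected head-to-head win rate with the standard Elo formula. The sketch below assumes the conventional logistic form with a 400-point scale; the article does not state which Elo variant the evaluation uses, and the absolute ratings in the example are placeholders since only the gap matters.

```python
def elo_expected_score(rating_a, rating_b, scale=400.0):
    # Standard Elo expectation: probability-like score of A against B.
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


# Placeholder ratings; only the 22-point difference is taken from the text.
sft_rating = 1000.0
rl_rating = sft_rating + 22.0

p_rl_beats_sft = elo_expected_score(rl_rating, sft_rating)
print(round(p_rl_beats_sft, 3))
```

Under these assumptions, a 22-point gap corresponds to the RL model being preferred in roughly 53% of direct comparisons against the SFT model, a modest but consistent edge.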

In conclusion, the researchers introduced the MiMo-VL-7B models, which achieve state-of-the-art performance through curated, high-quality pre-training datasets and the MORL framework. Key development insights include consistent performance gains from incorporating reasoning data in later pre-training stages, the advantages of on-policy RL over vanilla GRPO, and the challenge of task interference when applying MORL across diverse capabilities. The researchers have open-sourced their comprehensive evaluation suite to promote transparency and reproducibility in multimodal research. This work advances capable open-source vision-language models and provides valuable insights for the community.

Check out the Paper, GitHub Page and Model on Hugging Face. All credit for this research goes to the researchers of this project.

The post MiMo-VL-7B: A Powerful Vision-Language Model to Enhance General Visual Understanding and Multimodal Reasoning appeared first on MarkTechPost.
