Manipulating lighting conditions in images after capture is challenging. Traditional approaches rely on 3D graphics methods that reconstruct scene geometry and properties from multiple captures, then simulate new lighting using physical illumination models. Although these techniques provide explicit control over light sources, recovering accurate 3D models from a single image remains an open problem and frequently yields unsatisfactory results. Modern diffusion-based image editing methods have emerged as alternatives that use strong statistical priors to bypass the need for physical modeling. However, these approaches struggle with precise parametric control because of their inherent stochasticity and their dependence on textual conditioning.

Generative image editing methods have been adapted for various relighting tasks with mixed results. Portrait relighting approaches often use light stage data to supervise generative models, while object relighting methods may fine-tune diffusion models on synthetic datasets conditioned on environment maps. For outdoor scenes, some methods assume a single dominant light source such as the sun, whereas indoor scenes pose more complex multi-illumination challenges. Approaches to these problems include inverse rendering networks and methods that manipulate StyleGAN’s latent space. Flash photography research has also made progress in multi-illumination editing through techniques that use flash/no-flash pairs to disentangle and manipulate scene illuminants.

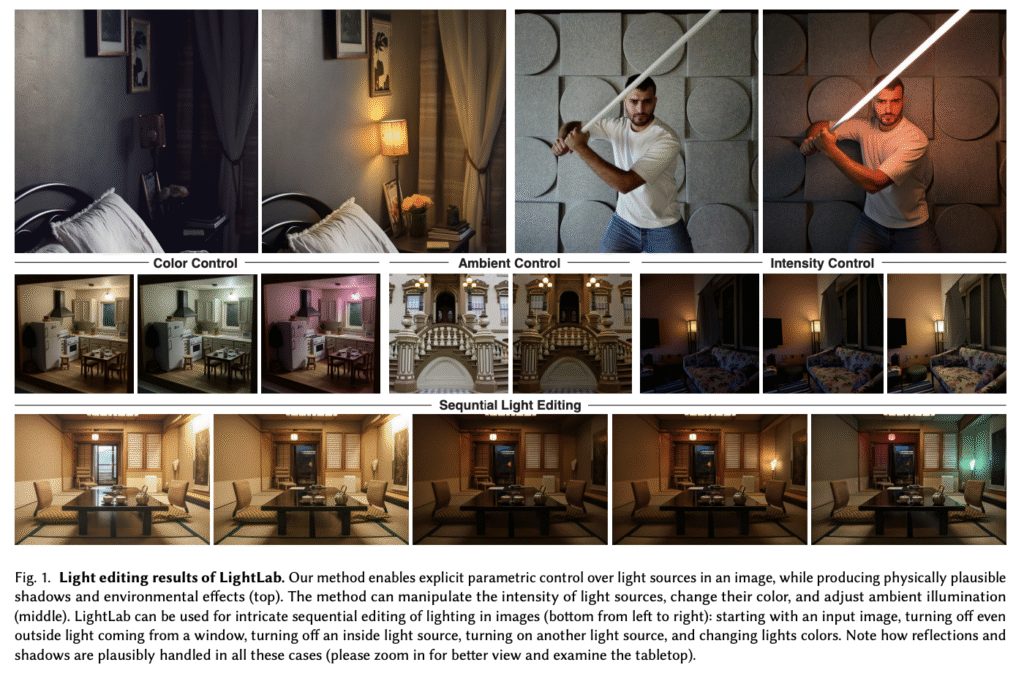

Researchers from Google, Tel Aviv University, Reichman University, and Hebrew University of Jerusalem have proposed LightLab, a diffusion-based method that enables explicit parametric control over light sources in images. It targets two fundamental properties of light sources: intensity and color. LightLab also provides control over ambient illumination and tone mapping effects, giving users a comprehensive set of editing tools for changing an image’s overall look and feel through illumination adjustments. The method is shown to be effective on indoor images containing visible light sources, and additional results show promise for outdoor scenes and out-of-domain examples. Comparisons with prior methods indicate that LightLab is a pioneer in delivering high-quality, precise control over visible local light sources.

LightLab uses pairs of images to implicitly model controlled light changes in image space; these pairs are then used to train a specialized diffusion model. The data collection combines real photographs with synthetic renderings. The photography dataset consists of 600 raw image pairs captured with mobile devices on tripods, each pair showing the same scene with only a visible light source switched on or off. Auto-exposure settings and post-capture calibration ensure proper exposure. To augment this collection, a larger set of synthetic images is rendered from 20 artist-created indoor 3D scenes using physically based rendering in Blender. This synthetic pipeline randomly samples camera views around target objects and procedurally assigns light source parameters, including intensity, color temperature, area size, and cone angle.
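
The summary does not spell out how an on/off pair becomes a range of training examples, so the following is only a minimal sketch of the light linearity idea, not the authors' implementation. It assumes both photos are in linear RGB (before tone mapping), so that the target light's contribution is the difference between the two images and can be scaled and tinted independently of the ambient light. The function name `relight` and the parameters `intensity`, `color`, and `ambient` are hypothetical.

```python
import numpy as np

def relight(img_off, img_on, intensity=1.0, color=(1.0, 1.0, 1.0), ambient=1.0):
    """Recombine an off/on pair under the light-linearity assumption.

    img_off, img_on: float arrays of shape (H, W, 3) in *linear* RGB
    (assumption: no tone mapping or gamma has been applied yet).
    intensity: scale factor for the target light (0 = off, 1 = as captured).
    color: per-channel RGB multiplier applied to the target light only.
    ambient: scale factor for everything except the target light.
    """
    img_off = np.asarray(img_off, dtype=np.float64)
    img_on = np.asarray(img_on, dtype=np.float64)
    # Contribution of the target light alone; clip negatives caused by noise.
    light_only = np.clip(img_on - img_off, 0.0, None)
    tint = np.asarray(color, dtype=np.float64).reshape(1, 1, 3)
    return ambient * img_off + intensity * tint * light_only

# Toy pair: a flat gray scene that gets brighter when the lamp is on.
off = np.full((4, 4, 3), 0.2)
on = np.full((4, 4, 3), 0.7)
half_power = relight(off, on, intensity=0.5)          # lamp dimmed to 50%
red_lamp = relight(off, on, color=(1.0, 0.0, 0.0))    # lamp tinted pure red
```

With `intensity=1.0` and a white `color` the function reproduces the "on" photo, and with `intensity=0.0` it reproduces the "off" photo, so a single captured pair can in principle yield many intermediate intensity and color targets.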

Comparative analysis shows that a weighted mixture of real captures and synthetic renders achieves the best results across all settings. The quantitative improvement from adding synthetic data to real captures is relatively modest, at only 2.2% in PSNR, likely because significant local illumination changes are overshadowed by low-frequency, image-wide details in these metrics. Qualitative comparisons on evaluation datasets show LightLab’s superiority over competing methods such as OmniGen, RGB↔X, ScribbleLight, and IC-Light. These alternatives often introduce unwanted illumination changes, color distortion, or geometric inconsistencies. In contrast, LightLab provides faithful control over target light sources while generating physically plausible lighting effects throughout the scene.
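
To see why an image-wide metric can understate local lighting changes, here is a small illustrative sketch using the standard PSNR definition on synthetic data. It does not use the paper's evaluation data; the image size and error magnitudes are made up for the example.

```python
import numpy as np

def psnr(reference, estimate, max_value=1.0):
    """Peak signal-to-noise ratio in dB for images scaled to [0, max_value]."""
    diff = np.asarray(reference, dtype=float) - np.asarray(estimate, dtype=float)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")
    return 10.0 * np.log10(max_value ** 2 / mse)

# Illustrative data only: a flat gray 100x100 "image".
reference = np.full((100, 100), 0.5)

# Case A: a strong error (0.3) confined to 1% of the pixels,
# standing in for a mis-rendered local light source.
local_error = reference.copy()
local_error[:10, :10] += 0.3

# Case B: a barely visible error (0.03) on every pixel.
global_error = reference + 0.03

psnr_local = psnr(reference, local_error)
psnr_global = psnr(reference, global_error)
```

Both cases have the same mean squared error, so they score the same PSNR (roughly 30.5 dB), even though the first contains a clearly visible local mistake. This is consistent with the explanation above: averaging over all pixels dilutes errors that are concentrated around a light source.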

In conclusion, the researchers introduced LightLab, an advancement in diffusion-based light source manipulation for images. Using light linearity principles and synthetic 3D data, they created high-quality paired images that implicitly model complex illumination changes. Despite its strengths, LightLab is limited by dataset bias, particularly with regard to light source types; this could be addressed by integrating unpaired fine-tuning methods. Moreover, while the simple capture process, which uses consumer mobile devices with post-capture exposure calibration, made dataset collection easier, it prevents precise relighting in absolute physical units, leaving room for refinement in future iterations.

Check out the Paper and Project Page. All credit for this research goes to the researchers of this project.

The post Google Researchers Introduce LightLab: A Diffusion-Based AI Method for Physically Plausible, Fine-Grained Light Control in Single Images appeared first on MarkTechPost.
