In reinforcement learning (RL) training of large language models (LLMs), value-free methods such as GRPO and DAPO have proven highly effective. Value-based methods arguably hold greater potential, because a learned value model allows more precise credit assignment by tracing each action’s impact on subsequent returns. This precision is crucial for complex reasoning, where a subtle error in one step can derail the entire solution. However, training an effective value model for long chain-of-thought (CoT) tasks faces several challenges: achieving low bias over lengthy trajectories, handling the distinct preferences of short and long responses, and coping with sparse reward signals. These difficulties have so far kept value-based methods from realizing their theoretical advantages.

Value-based RL methods for LLMs face three significant challenges when applied to long CoT reasoning tasks. First, the Value Model Bias issue identified in VC-PPO shows that initializing the value model from a reward model introduces a positive bias. Second, Heterogeneous Sequence Lengths in complex reasoning tasks create difficulties for standard approaches such as Generalized Advantage Estimation (GAE) with fixed parameters, which cannot adapt effectively to sequences ranging from very short to extremely long. Third, the Sparsity of the Reward Signal becomes problematic in verifier-based tasks, which provide binary feedback rather than continuous values. Lengthy CoT responses make this sparsity worse, creating a difficult exploration-exploitation trade-off during optimization.
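To make the fixed-parameter problem concrete, here is a minimal sketch of standard GAE on a single trajectory with one binary reward on the final token, as in verifier-based tasks. The function name `gae_advantages`, the zero-valued critic, and the trajectory length are illustrative assumptions, not details taken from the paper.

```python
def gae_advantages(rewards, values, gamma=1.0, lam=0.95):
    """Standard GAE for one trajectory.

    `values` must hold len(rewards) + 1 entries; the last one is the
    bootstrap value after the final token (0.0 for a finished response).
    """
    if len(values) != len(rewards) + 1:
        raise ValueError("values must have one more entry than rewards")
    advantages = [0.0] * len(rewards)
    running = 0.0
    # Walk backwards so each step reuses the discounted sum of later TD errors.
    for t in reversed(range(len(rewards))):
        delta = rewards[t] + gamma * values[t + 1] - values[t]
        running = delta + gamma * lam * running
        advantages[t] = running
    return advantages


# Hypothetical setup: a 1,000-token response, reward 1.0 only on the last
# token, and an uninformative critic that predicts 0 everywhere.
T = 1000
rewards = [0.0] * (T - 1) + [1.0]
values = [0.0] * (T + 1)

adv_fixed = gae_advantages(rewards, values, lam=0.95)
adv_full = gae_advantages(rewards, values, lam=1.0)
print(f"first-token advantage, lam=0.95: {adv_fixed[0]:.3e}")
print(f"first-token advantage, lam=1.0:  {adv_full[0]:.3e}")
```

Under these assumptions the first-token advantage shrinks as 0.95^999 with the fixed setting, while λ = 1.0 carries the final reward back undiminished. A single fixed λ therefore struggles to serve both short and long responses.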

Researchers from ByteDance Seed have proposed Value Augmented Proximal Policy Optimization (VAPO), a value-based RL training framework that addresses the challenges of long CoT reasoning tasks. VAPO makes three key contributions: a detailed value-based training framework with superior performance and efficiency, a Length-adaptive GAE mechanism that adjusts the GAE λ parameter according to response length to improve advantage estimation, and a systematic integration of techniques from prior research. Combined, these components yield improvements that exceed what the individual enhancements achieve on their own. Using the Qwen2.5-32B model without SFT data, VAPO raises the AIME24 score from 5 points (vanilla PPO) to 60, surpassing previous state-of-the-art methods by 10 points.
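As a rough illustration of length-adaptive GAE, the sketch below picks λ per response so that the effective horizon 1/(1 − λ) grows in proportion to the response length l, that is, λ = 1 − 1/(α·l). This article does not state the exact rule, so the formula, the default α = 0.05, the clamp at zero, and the name `length_adaptive_lambda` are all assumptions made here for illustration and should be checked against the paper.

```python
def length_adaptive_lambda(response_len, alpha=0.05):
    """Return a GAE lambda that scales with response length.

    Assumed rule (not given in the article): lambda = 1 - 1 / (alpha * l),
    clamped at 0.0 so very short responses never get a negative lambda.
    """
    if response_len <= 0:
        raise ValueError("response_len must be positive")
    lam = 1.0 - 1.0 / (alpha * response_len)
    return max(0.0, lam)


# Longer responses get a lambda closer to 1, so the final reward
# decays more slowly on its way back to the early tokens.
for length in (100, 1000, 8000):
    print(length, round(length_adaptive_lambda(length), 5))
```

The design choice in this sketch is to keep the effective horizon at a fixed fraction of the response, rather than at a fixed number of tokens.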

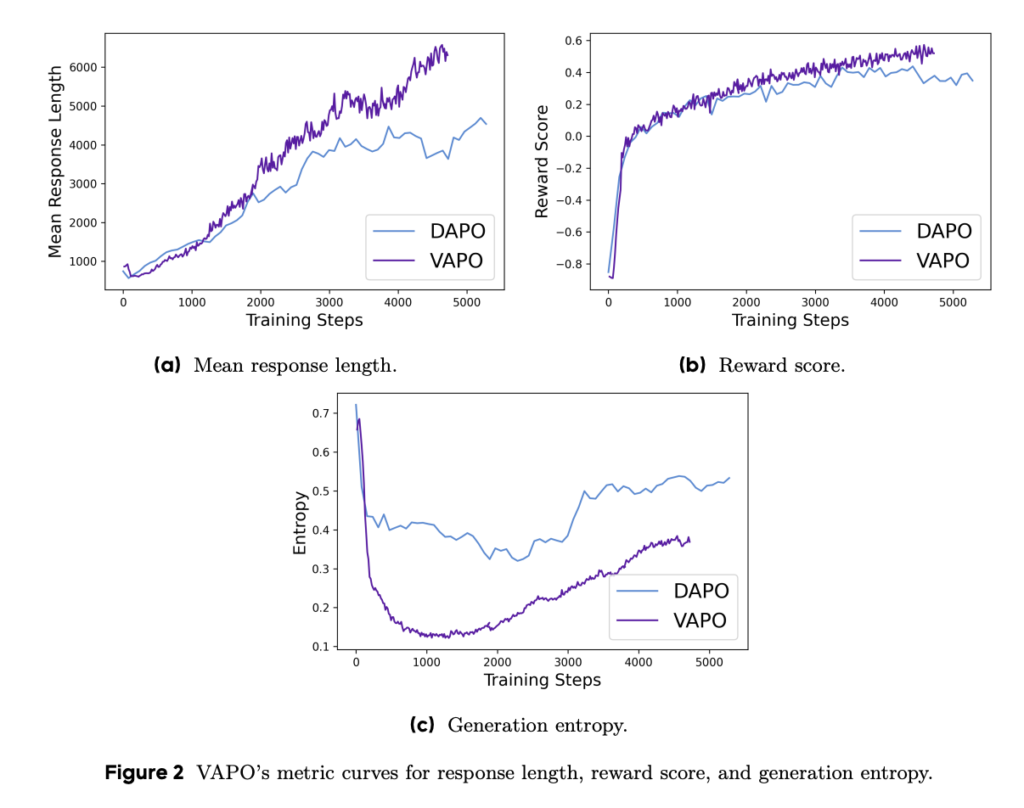

VAPO builds on the PPO algorithm with several key modifications that enhance mathematical reasoning capabilities. Analysis of the training dynamics shows several advantages over DAPO: smoother training curves, indicating more stable optimization; better length scaling, which enhances generalization; faster score growth, thanks to the granular signals provided by the value model; and lower entropy in the later stages of training. While reduced entropy could limit exploration, the method balances this trade-off effectively, with minimal impact on performance and improved reproducibility and stability. These results show how VAPO’s design decisions directly address the core challenges of value-based RL in complex reasoning tasks.

DeepSeek R1 trained with GRPO scores 47 points on AIME24 and DAPO reaches 50. VAPO matches DAPO’s performance on Qwen2.5-32B with just 60% of the update steps and sets a new state-of-the-art score of 60.4 within only 5,000 steps. Vanilla PPO, by contrast, reaches only 5 points because its value model learning collapses. Ablation studies validate the seven proposed modifications: Value-Pretraining prevents collapse, decoupled GAE enables full optimization of long-form responses, adaptive GAE balances the optimization of short and long responses, Clip-higher encourages thorough exploration, Token-level loss increases the weighting of long responses, positive-example LM loss adds 6 points, and Group-Sampling contributes 5 points to the final performance.
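Two of the ablated modifications, Clip-higher and Token-level loss, are easy to illustrate in isolation. The sketch below assumes the usual PPO clipped surrogate with a separate, larger upper clip bound, and contrasts per-response averaging with per-token averaging. The function names and the bounds 0.2 and 0.28 are illustrative assumptions rather than values taken from this article.

```python
def clipped_surrogate(ratio, advantage, eps_low=0.2, eps_high=0.28):
    """PPO-style clipped objective for one token with asymmetric bounds.

    A larger eps_high lets the probability of a favoured token rise
    further before the clip removes the gradient signal.
    """
    clipped_ratio = min(max(ratio, 1.0 - eps_low), 1.0 + eps_high)
    return min(ratio * advantage, clipped_ratio * advantage)


def sample_level_mean(per_token_losses):
    """Average within each response first, then across responses."""
    per_response = [sum(seq) / len(seq) for seq in per_token_losses]
    return sum(per_response) / len(per_response)


def token_level_mean(per_token_losses):
    """Average over every token in the batch, so long responses weigh more."""
    total = sum(sum(seq) for seq in per_token_losses)
    count = sum(len(seq) for seq in per_token_losses)
    return total / count


# Hypothetical batch: a 2-token response with loss 1.0 per token
# and an 8-token response with loss 3.0 per token.
batch = [[1.0] * 2, [3.0] * 8]
print("sample-level:", sample_level_mean(batch))
print("token-level: ", token_level_mean(batch))
print("clipped objective at ratio 1.5:", clipped_surrogate(1.5, 1.0))
```

In this toy batch the long response supplies 8 of the 10 tokens, so token-level averaging pulls the batch loss toward its per-token value (2.6 rather than 2.0), which is the sense in which it increases the weighting of long responses.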

In this paper, the researchers introduce VAPO, an algorithm that achieves state-of-the-art performance on the AIME24 benchmark using the Qwen2.5-32B model. By adding seven techniques on top of the PPO framework, VAPO significantly refines value learning and strikes a better balance between exploration and exploitation. On this benchmark the value-based approach outperforms value-free methods such as GRPO and DAPO, setting a new performance bar for reasoning tasks. It addresses fundamental challenges in training value models for long CoT scenarios, providing a robust foundation for advancing LLMs in reasoning-intensive applications.


The post ByteDance Introduces VAPO: A Novel Reinforcement Learning Framework for Advanced Reasoning Tasks appeared first on MarkTechPost.
