Reinforcement learning (RL) has become a widely used post-training method for LLMs, improving capabilities such as human alignment, long-term reasoning, and adaptability. A major challenge, however, is generating accurate reward signals in broad, less structured domains: current high-quality reward signals come largely from rule-based systems or verifiable tasks such as math and coding. In general applications, reward criteria are more diverse and subjective, and they lack clear ground truths. To address this, generalist reward models (RMs) are being explored for broader applicability. These models, however, must combine input flexibility with inference-time scalability, producing reliable, high-quality rewards across varied tasks and domains.

Existing reward modeling approaches include scalar, semi-scalar, and generative techniques, each with trade-offs between input flexibility and inference-time performance. For instance, pairwise models are limited to relative comparisons, while scalar models struggle to produce diverse feedback. Generative reward models (GRMs) offer richer, more flexible outputs, making them better suited to evaluating varied responses. Recent work has explored training GRMs through offline RL and integrating tools and external knowledge to improve reward quality. However, few methods directly address how RMs can scale efficiently during inference. This has led to research on methods such as sampling-based scaling, chain-of-thought prompting, and reward-guided aggregation, which aim to co-scale policy models and reward models during inference. These developments hold promise for more robust, general-purpose reward systems in LLMs.
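To make the input-flexibility trade-off concrete, here is a minimal Python sketch, not taken from the paper. It assumes a scheme in which each response receives its own independent score (often called pointwise scoring), and it shows that such scores can rank any number of responses and also recover a pairwise judgment, whereas a pairwise-only model can only do the latter. The function names, the response ids, and the 1-10 score scale are all hypothetical choices made for illustration.

```python
from typing import Dict, List


def rank_from_pointwise(scores: Dict[str, float]) -> List[str]:
    """Order response ids from best to worst using independent per-response scores."""
    return sorted(scores, key=lambda rid: scores[rid], reverse=True)


def pairwise_preference(scores: Dict[str, float], a: str, b: str) -> str:
    """Recover a pairwise judgment from pointwise scores; equal scores yield 'tie'."""
    if scores[a] == scores[b]:
        return "tie"
    return a if scores[a] > scores[b] else b


# Hypothetical scores for three candidate responses (assumed 1-10 scale).
example_scores = {"resp_a": 7, "resp_b": 4, "resp_c": 9}

ranking = rank_from_pointwise(example_scores)
preferred = pairwise_preference(example_scores, "resp_a", "resp_b")
```

With these made-up scores, `ranking` lists `resp_c` first and `preferred` is `resp_a`. The same score dictionary serves a single response, a pair, or a longer list, which is the kind of flexibility the paragraph above refers to.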

DeepSeek-AI and Tsinghua University researchers explore enhancing reward models (RMs) for general queries by improving inference-time scalability through increased inference compute and better learning techniques. They adopt a pointwise GRM for flexible input handling and propose a learning method, Self-Principled Critique Tuning (SPCT), which helps GRMs generate adaptive principles and accurate critiques through online reinforcement learning. To scale effectively at inference time, they apply parallel sampling and introduce a meta RM that refines the voting process. Their DeepSeek-GRM models outperform existing methods on several benchmarks, offering higher reward quality and scalability, and the researchers plan to open-source the models, though challenges remain on some complex tasks.

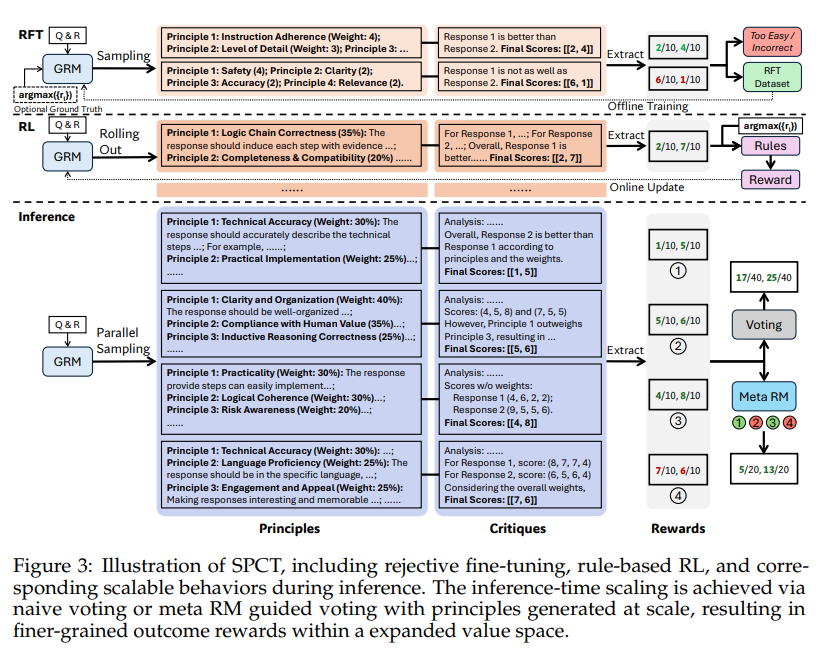

The researchers introduce SPCT, a method designed to enhance pointwise GRMs by enabling them to generate adaptive principles and accurate critiques. SPCT consists of two stages: rejective fine-tuning, which initializes principle and critique generation, and rule-based RL, which refines it. Rather than being fixed in a preprocessing step, principles are generated dynamically during inference. This promotes scalability by improving reward granularity. Additionally, inference-time performance is boosted through parallel sampling and voting, supported by a meta reward model (meta RM) that filters out low-quality outputs. Overall, SPCT improves reward accuracy, robustness, and scalability in GRMs.
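The sampling-and-voting step can be sketched roughly as follows. This is an illustrative Python sketch under stated assumptions, not the authors' implementation: it assumes each sampled judgment yields one numeric score per candidate response, that voting sums those scores across samples, and that the meta RM assigns each sample a quality score used to keep only the top `k_meta` samples before voting. The names `vote`, `meta_guided_vote`, and `k_meta`, and all numbers, are hypothetical.

```python
from typing import List, Sequence


def vote(samples: Sequence[Sequence[float]]) -> List[float]:
    """Sum per-response scores over all sampled judgments (assumed aggregation rule)."""
    if not samples:
        raise ValueError("need at least one sampled judgment")
    n_responses = len(samples[0])
    return [sum(sample[i] for sample in samples) for i in range(n_responses)]


def meta_guided_vote(
    samples: Sequence[Sequence[float]],
    meta_scores: Sequence[float],
    k_meta: int,
) -> List[float]:
    """Keep the k_meta samples with the highest meta RM score, then vote on those."""
    if len(samples) != len(meta_scores):
        raise ValueError("one meta score is required per sample")
    order = sorted(range(len(samples)), key=lambda i: meta_scores[i], reverse=True)
    kept = [samples[i] for i in order[:k_meta]]
    return vote(kept)


# Four hypothetical sampled judgments over two responses (made-up scores).
sampled = [[8, 5], [7, 6], [2, 9], [9, 4]]
# Hypothetical meta RM quality scores, one per sample; the third sample looks unreliable.
meta = [0.9, 0.8, 0.1, 0.7]

plain = vote(sampled)                               # sums all four samples
guided = meta_guided_vote(sampled, meta, k_meta=3)  # drops the lowest-rated sample
```

In this toy data, plain voting gives totals of 26 and 24, a narrow margin caused by one outlier sample, while filtering with the meta scores gives 24 and 15. The point is only to show how discarding low-quality samples can sharpen the aggregated reward; the actual scoring scale and filtering rule should be taken from the paper.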

Using standard metrics, the study evaluates various RM methods across benchmarks such as Reward Bench, PPE, RMB, and ReaLMistake. DeepSeek-GRM-27B consistently outperforms baselines and rivals strong public models like GPT-4o. Inference-time scaling, especially with voting and meta reward models, significantly boosts performance, achieving results comparable to those of much larger models. Ablation studies highlight the importance of components such as principle generation and non-hinted sampling. Training-time scaling shows diminishing returns compared with inference-time strategies. Overall, DeepSeek-GRM, enhanced with SPCT and a meta RM, offers robust, scalable reward modeling with reduced domain bias and strong generalization.

In conclusion, the study presents SPCT, a method that improves inference-time scalability for GRMs through rule-based online reinforcement learning. SPCT enables adaptive principle and critique generation, enhancing reward quality across diverse tasks. DeepSeek-GRM models outperform several baselines and strong public models, especially when paired with a meta reward model for inference-time scaling. Using parallel sampling and flexible input handling, these GRMs achieve strong performance without relying on larger model sizes. Future work includes integrating GRMs into RL pipelines, co-scaling them with policy models, and using them as reliable offline evaluators.

Check out the Paper. All credit for this research goes to the researchers of this project.

The post Scalable and Principled Reward Modeling for LLMs: Enhancing Generalist Reward Models RMs with SPCT and Inference-Time Optimization appeared first on MarkTechPost.
