Large Language Models (LLMs) have become integral to various artificial intelligence applications, demonstrating capabilities in natural language processing, decision-making, and creative tasks. However, critical challenges remain in understanding and predicting their behaviors. Treating LLMs as black boxes complicates efforts to assess their reliability, particularly in contexts where errors can have significant consequences. Traditional approaches often rely on internal model states or gradients to interpret behaviors, which are unavailable for closed-source, API-based models. This limitation raises an important question: how can we effectively evaluate LLM behavior with only black-box access? The problem is further compounded by adversarial influences and potential misrepresentation of models through APIs, highlighting the need for robust and generalizable solutions.

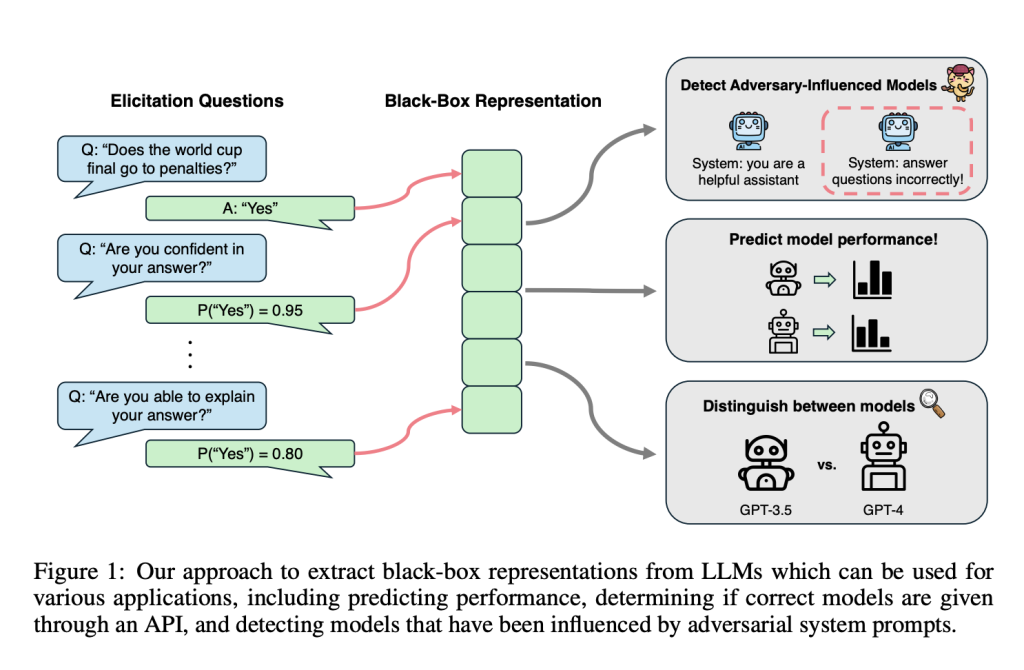

To address these challenges, researchers at Carnegie Mellon University have developed QueRE (Question Representation Elicitation). This method is tailored for black-box LLMs and extracts low-dimensional, task-agnostic representations by querying models with follow-up prompts about their outputs. These representations, based on probabilities associated with elicited responses, are used to train predictors of model performance. Notably, QueRE performs comparably to or even better than some white-box techniques in reliability and generalizability.

Unlike methods dependent on internal model states or full output distributions, QueRE relies on accessible outputs, such as top-k probabilities available through most APIs. When such probabilities are unavailable, they can be approximated through sampling. QueRE’s features also enable evaluations such as detecting adversarially influenced models and distinguishing between architectures and sizes, making it a versatile tool for understanding and utilizing LLMs.
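The sampling fallback mentioned above can be sketched as follows. This is a minimal illustration, not QueRE's actual code: `estimate_token_probs` and `fake_sample` are hypothetical names, and `fake_sample` stands in for a real API call that returns one sampled short answer per request.

```python
import random
from collections import Counter
from typing import Callable, Dict


def estimate_token_probs(
    sample_fn: Callable[[str], str], prompt: str, n_samples: int = 200
) -> Dict[str, float]:
    """Approximate an answer distribution by counting repeated samples."""
    counts = Counter(sample_fn(prompt).strip().lower() for _ in range(n_samples))
    return {token: count / n_samples for token, count in counts.items()}


# Hypothetical stand-in for an API that returns one sampled answer per call.
# It answers "yes" about 70% of the time; the seeded RNG keeps runs repeatable.
def fake_sample(prompt: str, _rng: random.Random = random.Random(0)) -> str:
    return "yes" if _rng.random() < 0.7 else "no"


probs = estimate_token_probs(fake_sample, "Are you confident in your answer?")
print(probs)
```

The estimate gets tighter as `n_samples` grows, at the cost of more API calls per elicitation question.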

Technical Details and Benefits of QueRE

QueRE builds a feature vector from elicitation questions posed to the LLM. Given an input and the model’s response, it asks follow-up questions that probe aspects such as confidence and correctness, for example “Are you confident in your answer?” or “Can you explain your answer?”. The probabilities the model assigns to its replies to these questions become the entries of the feature vector.
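A rough sketch of that feature construction is shown below. The two elicitation questions come from the text; everything else is an assumption for illustration, including the prompt template, the use of a "yes" probability as the feature, and the helper names `build_features` and `fake_yes_prob`.

```python
from typing import Callable, List, Sequence

# The two example questions quoted in the article.
ELICITATION_QUESTIONS = [
    "Are you confident in your answer?",
    "Can you explain your answer?",
]


def build_features(
    yes_prob_fn: Callable[[str], float],
    question: str,
    answer: str,
    elicitation_questions: Sequence[str] = ELICITATION_QUESTIONS,
) -> List[float]:
    """Return one feature per elicitation question: the probability of 'yes'."""
    features = []
    for follow_up in elicitation_questions:
        # Assumed prompt template: original question, model answer, follow-up.
        prompt = f"Question: {question}\nAnswer: {answer}\n{follow_up} Answer yes or no."
        features.append(yes_prob_fn(prompt))
    return features


# Hypothetical stand-in for a call that reads P("yes") from an API's top-k output.
def fake_yes_prob(prompt: str) -> float:
    return 0.9 if "confident" in prompt else 0.6


features = build_features(fake_yes_prob, "What is the capital of France?", "Paris")
print(features)
```

Each additional elicitation question adds one dimension, so the representation stays small even with a fairly long question list.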

The extracted features are then used to train linear predictors for various tasks:

- Performance Prediction: Evaluating whether a model’s output is correct at an instance level.

- Adversarial Detection: Identifying when responses are influenced by malicious prompts.

- Model Differentiation: Distinguishing between different architectures or configurations, such as identifying smaller models misrepresented as larger ones.
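As an illustration of the performance-prediction case, the sketch below fits a linear (logistic regression) predictor on elicited features using plain gradient descent. The feature values and labels are made-up toy data, and the training routine is a generic one chosen to keep the example dependency-free; it is not taken from the QueRE codebase.

```python
import math
from typing import List, Sequence, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(w: Sequence[float], b: float, x: Sequence[float]) -> float:
    """Probability that the model's answer is correct, given its features."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


def train_logistic(
    X: Sequence[Sequence[float]], y: Sequence[int], lr: float = 0.5, epochs: int = 2000
) -> Tuple[List[float], float]:
    """Fit weights and bias by batch gradient descent on the mean log loss."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = predict_proba(w, b, xi) - yi
            for j, xj in enumerate(xi):
                grad_w[j] += err * xj
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


# Toy data (invented): each row is a feature vector of elicited probabilities,
# each label says whether the model's original answer was correct (1) or not (0).
X = [[0.9, 0.8], [0.85, 0.7], [0.8, 0.9], [0.3, 0.4], [0.2, 0.5], [0.35, 0.3]]
y = [1, 1, 1, 0, 0, 0]

w, b = train_logistic(X, y)
print(predict_proba(w, b, [0.9, 0.8]), predict_proba(w, b, [0.2, 0.5]))
```

The same setup could serve the other two tasks by changing what the label means, for example 1 for "adversarially prompted" or for "model A rather than model B".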

By relying on low-dimensional representations, QueRE supports strong generalization across tasks. Its simplicity ensures scalability and reduces the risk of overfitting, making it a practical tool for auditing and deploying LLMs in diverse applications.

Results and Insights

Experimental evaluations demonstrate QueRE’s effectiveness across several dimensions. In predicting LLM performance on question-answering (QA) tasks, QueRE consistently outperformed baselines relying on internal states. For instance, on open-ended QA benchmarks like SQuAD and Natural Questions (NQ), QueRE achieved an Area Under the Receiver Operating Characteristic Curve (AUROC) exceeding 0.95. Similarly, it excelled in detecting adversarially influenced models, outperforming other black-box methods.
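For readers unfamiliar with the metric, AUROC is the probability that a randomly chosen correct answer receives a higher predictor score than a randomly chosen incorrect one. The sketch below computes it directly from that definition; the scores and labels are invented for illustration and are not results from the paper.

```python
from typing import Sequence


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """AUROC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for s, l in zip(scores, labels) if l == 1]
    neg = [s for s, l in zip(scores, labels) if l == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a correct ranking
    return wins / (len(pos) * len(neg))


# Invented predictor scores and correctness labels (1 = answer was correct).
scores = [0.9, 0.8, 0.7, 0.4, 0.3]
labels = [1, 1, 0, 1, 0]
result = auroc(scores, labels)
print(round(result, 4))
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect separation, which puts the reported figure above 0.95 in context.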

QueRE also proved robust and transferable. Its features were successfully applied to out-of-distribution tasks and to different LLM configurations. Because the representations are low-dimensional, simple models can be trained on them cheaply, and such simple predictors come with stronger generalization guarantees.

Another notable result was QueRE’s ability to use random sequences of natural language as elicitation prompts. These sequences often matched or exceeded the performance of structured queries, highlighting the method’s flexibility and potential for diverse applications without extensive manual prompt engineering.
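One simple way to produce such prompts is to draw words at random from a vocabulary, as in the sketch below. This is only an assumption about how random natural-language sequences might be generated; the article does not describe the procedure the authors used, and `random_prompts` and the word list are hypothetical.

```python
import random
from typing import List, Sequence


def random_prompts(
    vocab: Sequence[str], n_prompts: int, length: int, seed: int = 0
) -> List[str]:
    """Build n_prompts strings, each a random sequence of `length` vocabulary words."""
    rng = random.Random(seed)  # seeded so the same prompts are reused across inputs
    return [
        " ".join(rng.choice(vocab) for _ in range(length)) for _ in range(n_prompts)
    ]


# Small illustrative vocabulary; any word list would do.
vocab = ["river", "quietly", "seven", "orange", "because", "window", "runs", "under"]
prompts = random_prompts(vocab, n_prompts=3, length=5)
for p in prompts:
    print(p)
```

The prompts must stay fixed across all inputs so that each feature dimension always corresponds to the same follow-up text.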

Conclusion

QueRE offers a practical and effective approach to understanding and auditing black-box LLMs. By turning elicitation responses into features, it provides a scalable framework for predicting model behavior, detecting adversarial influences, and differentiating architectures. Its success in empirical evaluations suggests it is a valuable tool for researchers and practitioners aiming to enhance the reliability and safety of LLMs.

As AI systems evolve, methods like QueRE will play a crucial role in ensuring transparency and trustworthiness. Future work could explore extending QueRE’s applicability to other modalities or refining its elicitation strategies for enhanced performance. For now, QueRE represents a thoughtful response to the challenges posed by modern AI systems.

Check out the Paper and GitHub Page. All credit for this research goes to the researchers of this project.


The post CMU Researchers Propose QueRE: An AI Approach to Extract Useful Features from a LLM appeared first on MarkTechPost.
