Large Language Models (LLMs) have transformed artificial intelligence by enabling powerful text-generation capabilities. These models require strong security against critical risks such as prompt injection, model poisoning, data leakage, hallucinations, and jailbreaks. These vulnerabilities expose organizations to potential reputational damage, financial loss, and societal harm. Building a secure environment is essential to ensure the safe and reliable deployment of LLMs in various applications.

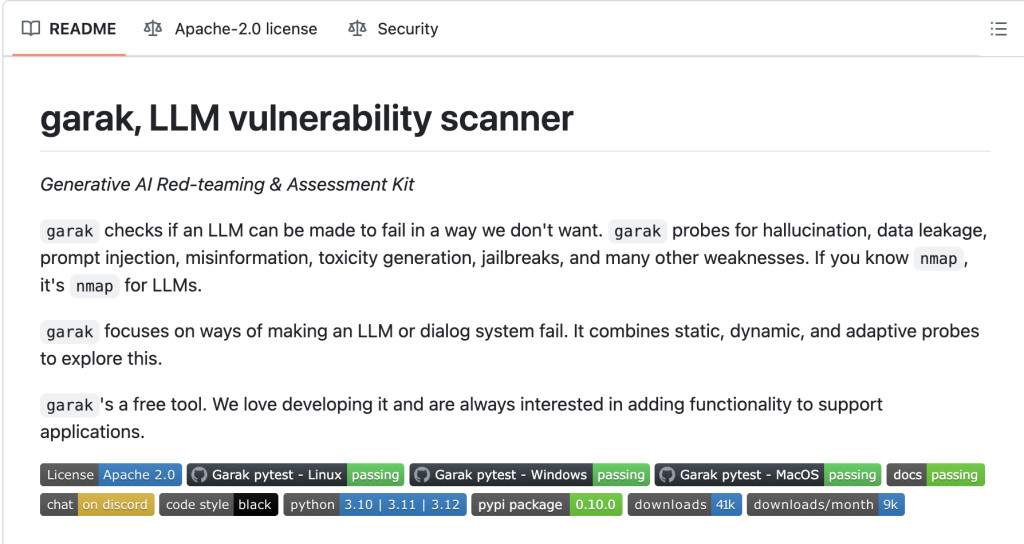

Current methods to limit these LLM vulnerabilities include adversarial testing, red-teaming exercises, and manual prompt engineering. However, these approaches are often limited in scope, labor-intensive, or require domain expertise, making them less accessible for widespread use. Recognizing these limitations, NVIDIA introduced the Generative AI Red-teaming & Assessment Kit (Garak) as a comprehensive tool designed to identify and mitigate LLM vulnerabilities effectively.

Garak’s methodology addresses the challenges of existing methods by automating the vulnerability assessment process. It combines static and dynamic analyses with adaptive testing to identify weaknesses, classify them based on severity, and recommend appropriate mitigation strategies. This approach ensures a more holistic evaluation of LLM security, making it a significant step forward in protecting these models from malicious attacks and unintended behavior.

Garak adopts a multi-layered framework for vulnerability assessment, comprising three key steps: vulnerability identification, classification, and mitigation. The tool employs static analysis to examine model architecture and training data, while dynamic analysis uses diverse prompts to simulate interactions and identify behavioral weaknesses. Additionally, Garak incorporates adaptive testing, leveraging machine learning techniques to refine its testing process iteratively and uncover hidden vulnerabilities.

The identified vulnerabilities are categorized based on their impact, severity, and potential exploitability, providing a structured approach to addressing risks. For mitigation, Garak offers actionable recommendations, such as refining prompts to counteract malicious inputs, retraining the model to improve its resilience, and implementing output filters to block inappropriate content.Â

Garak’s architecture integrates a generator for model interaction, a prober to craft and execute test cases, an analyzer to process and assess model responses, and a reporter that delivers detailed findings and suggested remedies. Its automated and systematic design makes it more accessible than conventional methods, enabling organizations to strengthen their LLMs’ security while reducing the demand for specialized expertise.

In conclusion, NVIDIA’s Garak is a robust tool that addresses the critical vulnerabilities faced by LLMs. By automating the assessment process and providing actionable mitigation strategies, Garak not only enhances LLM security but also ensures greater reliability and trustworthiness in its outputs. The tool’s comprehensive approach marks a significant advancement in safeguarding AI systems, making it a valuable resource for organizations deploying LLMs.

Check out the GitHub Repo. All credit for this research goes to the researchers of this project. Also, don’t forget to follow us on Twitter and join our Telegram Channel and LinkedIn Group. If you like our work, you will love our newsletter.. Don’t Forget to join our 55k+ ML SubReddit.

[FREE AI VIRTUAL CONFERENCE] SmallCon: Free Virtual GenAI Conference ft. Meta, Mistral, Salesforce, Harvey AI & more. Join us on Dec 11th for this free virtual event to learn what it takes to build big with small models from AI trailblazers like Meta, Mistral AI, Salesforce, Harvey AI, Upstage, Nubank, Nvidia, Hugging Face, and more.

The post NVIDIA AI Introduces ‘garak’: The LLM Vulnerability Scanner to Perform AI Red-Teaming and Vulnerability Assessment on LLM Applications appeared first on MarkTechPost.

Source: Read MoreÂ