Preserving and taking advantage of institutional knowledge is critical for organizational success and adaptability. This collective wisdom, comprising insights and experiences accumulated by employees over time, often exists as tacit knowledge passed down informally. Formalizing and documenting this invaluable resource can help organizations maintain institutional memory, drive innovation, enhance decision-making processes, and accelerate onboarding for new employees. However, effectively capturing and documenting this knowledge presents significant challenges. Traditional methods, such as manual documentation or interviews, are often time-consuming, inconsistent, and prone to errors. Moreover, the most valuable knowledge frequently resides in the minds of seasoned employees, who may find it difficult to articulate or lack the time to document their expertise comprehensively.

This post introduces an innovative voice-based application workflow that harnesses the power of Amazon Bedrock, Amazon Transcribe, and React to systematically capture and document institutional knowledge through voice recordings from experienced staff members. Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading artificial intelligence (AI) companies such as AI21 Labs, Anthropic, Cohere, Meta, Stability AI, and Amazon through a single API, along with a broad set of capabilities to build generative AI applications with security, privacy, and responsible AI. Our solution uses Amazon Transcribe for real-time speech-to-text conversion, enabling accurate and immediate documentation of spoken knowledge. We then use generative AI, powered by Amazon Bedrock, to analyze and summarize the transcribed content, extracting key insights and generating comprehensive documentation.

The front-end of our application is built using React, a popular JavaScript library for creating dynamic UIs. This React-based UI integrates with Amazon Transcribe, providing users with a real-time transcription experience. As employees speak, they can watch their words converted to text in real time, which allows immediate review and editing.

By combining the React front-end UI with Amazon Transcribe and Amazon Bedrock, we’ve created a comprehensive solution for capturing, processing, and preserving valuable institutional knowledge. This approach not only streamlines the documentation process but also enhances the quality and accessibility of the captured information, supporting operational excellence and fostering a culture of continuous learning and improvement within organizations.

Solution overview

This solution combines several AWS services, including Amazon Transcribe, Amazon Bedrock, AWS Lambda, Amazon Simple Storage Service (Amazon S3), and Amazon CloudFront, to deliver real-time transcription and document generation. Together, these components create a seamless knowledge capture process:

- User interface – A React-based front-end, distributed through Amazon CloudFront, provides an intuitive interface for employees to input voice data.

- Real-time transcription – Amazon Transcribe streaming converts speech to text in real time, providing accurate and immediate transcription of spoken knowledge.

- Intelligent processing – A Lambda function, powered by generative AI models through Amazon Bedrock, analyzes and summarizes the transcribed text. It goes beyond simple summarization by performing the following actions:

  - Extracting key concepts and terminologies.

  - Structuring the information into a coherent, well-organized document.

- Secure storage – Raw audio files, processed information, summaries, and generated content are securely stored in Amazon S3, providing scalable and durable storage for this valuable knowledge repository. S3 bucket policies and encryption are implemented to enforce data security and compliance.

This solution uses a custom authorization Lambda function with Amazon API Gateway instead of more comprehensive identity management solutions such as Amazon Cognito. This approach was chosen for several reasons:

- Simplicity – As a sample application, it doesn’t demand full user management or login functionality

- Minimal user friction – Users don’t need to create accounts or log in, simplifying the user experience

- Quick implementation – For rapid prototyping, this approach can be faster to implement than setting up a full user management system

- Temporary credential management – Businesses can use this approach to offer secure, temporary access to AWS services without embedding long-term credentials in the application

Although this solution works well for this specific use case, it’s important to note that for production applications, especially those dealing with sensitive data or needing user-specific functionality, a more robust identity solution such as Amazon Cognito would typically be recommended.
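As a rough illustration of the temporary credential pattern described above, the following sketch shows how an authorization function could hand short-lived credentials to the browser. It assumes the function calls AWS Security Token Service (AWS STS) AssumeRole on a narrowly scoped role; the post does not say which mechanism the repository uses, and the role ARN, session name, and response field names here are hypothetical.

```python
import json
from typing import Any

# Hypothetical values for illustration; the deployed stack defines its own.
TRANSCRIBE_ROLE_ARN = "arn:aws:iam::123456789012:role/ExampleTranscribeStreamingRole"
SESSION_SECONDS = 900  # 15 minutes, the shortest duration AssumeRole accepts


def issue_temporary_credentials(sts_client: Any, session_name: str) -> dict:
    """Assume a narrowly scoped role and return only what the browser needs."""
    response = sts_client.assume_role(
        RoleArn=TRANSCRIBE_ROLE_ARN,
        RoleSessionName=session_name,
        DurationSeconds=SESSION_SECONDS,
    )
    creds = response["Credentials"]
    return {
        "accessKeyId": creds["AccessKeyId"],
        "secretAccessKey": creds["SecretAccessKey"],
        "sessionToken": creds["SessionToken"],
        # boto3 returns a datetime here; isoformat keeps the payload JSON-safe.
        "expiration": creds["Expiration"].isoformat(),
    }


def lambda_handler(event: dict, context: Any, sts_client: Any = None) -> dict:
    """API Gateway proxy handler; sts_client is injectable for local testing."""
    if sts_client is None:
        import boto3  # imported lazily so the module loads without AWS access

        sts_client = boto3.client("sts")
    body = issue_temporary_credentials(sts_client, "knowledge-capture-web")
    return {"statusCode": 200, "body": json.dumps(body)}
```

Because the credentials expire after a few minutes and the assumed role can be limited to Amazon Transcribe streaming, a leaked token has a small blast radius, but anyone who can reach the endpoint can still obtain one. That is the trade-off the preceding paragraph describes.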

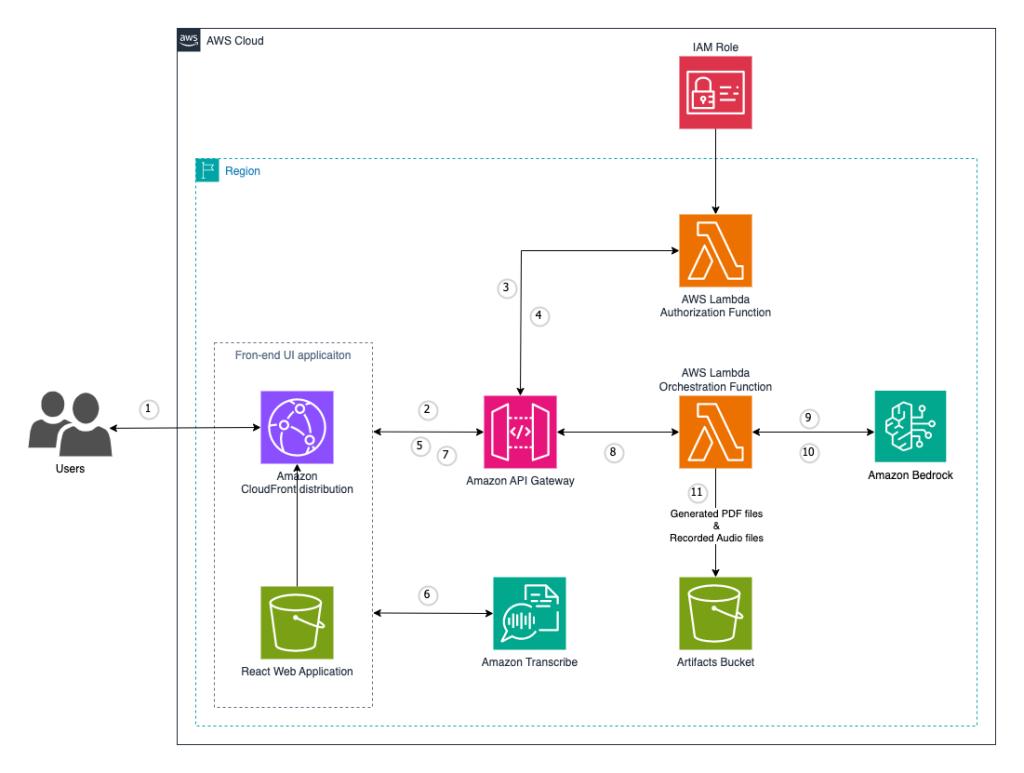

The following diagram illustrates the architecture of our solution.

The workflow includes the following steps:

- Users access the front-end UI application, which is distributed through CloudFront

- The React web application sends an initial request to Amazon API Gateway

- API Gateway forwards the request to the authorization Lambda function

- The authorization function checks the request against the AWS Identity and Access Management (IAM) role to confirm proper permissions

- The authorization function sends temporary credentials back to the front-end application through API Gateway

- With the temporary credentials, the React web application communicates directly with Amazon Transcribe for real-time speech-to-text conversion as the user records their input

- After recording and transcription, the user sends (through the front-end UI) the transcribed texts and audio files to the backend through API Gateway

- API Gateway routes the authorized request (containing transcribed text and audio files) to the orchestration Lambda function

- The orchestration function sends the transcribed text to Amazon Bedrock for summarization

- The orchestration function receives the summarized text from Amazon Bedrock and uses it to generate the document content

- The orchestration function stores the generated PDF files and recorded audio files in the artifacts S3 bucket
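The last three steps of the workflow can be sketched as follows. This is a minimal illustration, not the repository's code: it assumes an Anthropic Claude model invoked through the Amazon Bedrock InvokeModel API with the Messages request format, and the model ID, S3 key layout, and function names are hypothetical. The actual solution produces a PDF; this sketch stores plain text to stay dependency-free.

```python
import json
from typing import Any

# Assumed values for illustration; the repository may use a different model and key layout.
MODEL_ID = "anthropic.claude-3-sonnet-20240229-v1:0"


def summarize(bedrock_runtime: Any, prompt: str, max_tokens: int = 2048) -> str:
    """Send one user prompt to Claude through the Bedrock InvokeModel API."""
    request = {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": [{"type": "text", "text": prompt}]}],
    }
    response = bedrock_runtime.invoke_model(modelId=MODEL_ID, body=json.dumps(request))
    payload = json.loads(response["body"].read())
    # The response holds a list of content blocks; keep only the text ones.
    return "".join(block["text"] for block in payload["content"] if block.get("type") == "text")


def orchestrate(
    bedrock_runtime: Any, s3: Any, bucket: str, file_name: str, transcript: str, audio: bytes
) -> dict:
    """Summarize the transcript, then store the audio and the generated text in S3."""
    summary = summarize(bedrock_runtime, f"Summarize this transcript:\n\n{transcript}")
    keys = {"audio": f"audio/{file_name}", "document": f"documents/{file_name}.txt"}
    s3.put_object(Bucket=bucket, Key=keys["audio"], Body=audio)
    # The actual solution renders a PDF; plain text keeps this sketch dependency-free.
    s3.put_object(Bucket=bucket, Key=keys["document"], Body=summary.encode("utf-8"))
    return keys
```

In a Lambda function, `bedrock_runtime` and `s3` would typically be `boto3.client("bedrock-runtime")` and `boto3.client("s3")`. Passing them in as arguments keeps the logic testable without AWS access.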

Prerequisites

You need the following prerequisites:

- An active AWS account

- Docker installed

- The AWS CDK Toolkit 2.114.1+ installed and bootstrapped to the us-east-1 AWS Region

- Python 3.12+ installed

- Model access to Anthropic’s Claude enabled in Amazon Bedrock

- An IAM user or role with access to Amazon Transcribe, Amazon Bedrock, Amazon S3, and Lambda

Deploy the solution with the AWS CDK

The AWS Cloud Development Kit (AWS CDK) is an open source software development framework for defining cloud infrastructure as code and provisioning it through AWS CloudFormation. Our AWS CDK stack deploys resources from the following AWS services:

- Amazon Bedrock

- Amazon CloudFront

- AWS CodeBuild

- Amazon EventBridge

- IAM

- AWS Key Management Service (AWS KMS)

- AWS Lambda

- Amazon S3

- AWS Systems Manager Parameter Store

- Amazon Transcribe

- AWS WAF

To deploy the solution, complete the following steps:

- Clone the GitHub repository: genai-knowledge-capture-webapp

- Follow the Prerequisites section in the README.md file to set up your local environment

As of this writing, this solution supports deployment to the us-east-1 Region. The CloudFront distribution in this solution is geo-restricted to the US and Canada by default. To change this configuration, refer to react-app-deploy.ts in the GitHub repo.

- Invoke npm install to install the dependencies

- Invoke cdk deploy to deploy the solution

The deployment process typically takes 20–30 minutes. When the deployment is complete, CodeBuild will build and deploy the React application, which typically takes 2–3 minutes. After that, you can access the UI at the ReactAppUrl URL that is output by the AWS CDK.

Amazon Transcribe Streaming within React application

Our solution’s front-end is built using React, a popular JavaScript library for creating dynamic user interfaces. We integrate Amazon Transcribe streaming into our React application using the @aws-sdk/client-transcribe-streaming library. This integration enables real-time speech-to-text functionality, so users can observe their spoken words converted to text instantly.

The real-time transcription offers several benefits for knowledge capture:

- With the immediate feedback, speakers can correct or clarify their statements in the moment

- The visual representation of spoken words can help maintain focus and structure in the knowledge sharing process

- It reduces the cognitive load on the speaker, who doesn’t need to worry about note-taking or remembering key points

In this solution, the Amazon Transcribe client is managed in a reusable React hook, useAudioTranscription.ts. An additional React hook, useAudioProcessing.ts, implements the necessary audio stream processing. Refer to the GitHub repo for the full Amazon Transcribe client integration.

For optimal results, we recommend using a good-quality microphone and speaking clearly. At the time of writing, the system supports major dialects of English, with plans to expand language support in future updates.
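To make the real-time behavior more concrete, the following sketch shows how streaming results can be folded into the text a user sees. The application's hooks are written in TypeScript; Python is used here only to illustrate the logic, and the function name is hypothetical. The event shape follows the Amazon Transcribe streaming TranscriptEvent structure, in which each result carries an IsPartial flag and a list of alternatives.

```python
from typing import Iterable


def render_transcript(events: Iterable[dict]) -> str:
    """Fold streaming transcript events into the text a user would see."""
    finalized: list[str] = []
    pending = ""
    for event in events:
        for result in event["Transcript"]["Results"]:
            alternatives = result.get("Alternatives") or []
            if not alternatives:
                continue
            text = alternatives[0]["Transcript"]
            if result["IsPartial"]:
                # A partial result replaces the previous guess for the same segment.
                pending = text
            else:
                # A final result is committed and clears the pending guess.
                finalized.append(text)
                pending = ""
    return " ".join(finalized + ([pending] if pending else []))


events = [
    {"Transcript": {"Results": [{"IsPartial": True, "Alternatives": [{"Transcript": "the pump"}]}]}},
    {"Transcript": {"Results": [{"IsPartial": False, "Alternatives": [{"Transcript": "The pump must be primed."}]}]}},
    {"Transcript": {"Results": [{"IsPartial": True, "Alternatives": [{"Transcript": "then open"}]}]}},
]
print(render_transcript(events))  # The pump must be primed. then open
```

Partial results are why text on screen can change while someone is still speaking: the service revises its guess until the segment is finalized.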

Use the application

After deployment, open the ReactAppUrl link (https://<cloud front domain name>.cloudfront.net) in your browser (the solution supports Chrome, Firefox, Edge, Safari, and Brave browsers on Mac and Windows). A web UI opens, as shown in the following screenshot.

To use this application, complete the following steps:

- Enter a question or topic.

- Enter a file name for the document.

- Choose Start Transcription and start recording your input for the given question or topic. The transcribed text will be shown in the Transcription box in real time.

- After recording, you can edit the transcribed text.

- You can also choose the play icon to play the recorded audio clips.

- Choose Generate Document to invoke the backend service to generate a document from the input question and associated transcription. Meanwhile, the recorded audio clips are sent to an S3 bucket for future analysis.

The document generation process uses FMs from Amazon Bedrock to create a well-structured, professional document. The FM performs the following actions:

- Organizes the content into logical sections with appropriate headings

- Identifies and highlights important concepts or terminologies

- Generates a brief executive summary at the beginning of the document

- Applies consistent formatting and styling
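One way to steer a model toward the actions listed above is to state them explicitly in the prompt. The following is a minimal sketch under that assumption. The wording, the `<transcript>` delimiter, and the function name are illustrative and are not taken from the repository.

```python
def build_document_prompt(question: str, transcript: str) -> str:
    """Build a prompt asking the model for a structured document."""
    instructions = [
        "Organize the content into logical sections with appropriate headings.",
        "Identify and highlight important concepts or terminology.",
        "Begin with a brief executive summary.",
        "Apply consistent formatting and styling.",
        "Use only information present in the transcript.",
    ]
    numbered = "\n".join(f"{i}. {line}" for i, line in enumerate(instructions, start=1))
    return (
        "You are documenting institutional knowledge from a spoken answer.\n\n"
        f"Question or topic: {question.strip()}\n\n"
        f"<transcript>\n{transcript.strip()}\n</transcript>\n\n"
        f"Write a professional document that follows these rules:\n{numbered}"
    )
```

Delimiting the transcript makes it easier for the model to separate the speaker's words from the instructions. The final rule is an added guard, not something the post describes, intended to discourage content the speaker never said.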

The audio files and generated documents are stored in a dedicated S3 bucket, as shown in the following screenshot, with appropriate encryption and access controls in place.

- Choose View Document after you generate the document to open the resulting PDF in your browser. The PDF is built from your input and is accessed through a presigned URL.

Additional information

To further enhance your knowledge capture solution and address specific use cases, consider the additional features and best practices discussed in this section.

Custom vocabulary with Amazon Transcribe

For industries with specialized terminology, Amazon Transcribe offers a custom vocabulary feature. You can define industry-specific terms, acronyms, and phrases to improve transcription accuracy. To implement this, complete the following steps:

- Create a custom vocabulary file with your specialized terms

- Use the Amazon Transcribe API to add this vocabulary to your account

- Specify the custom vocabulary in your transcription requests
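The first two steps can be sketched with the CreateVocabulary API, which accepts a list of phrases directly. The helper names and the normalization rules are assumptions for illustration. In particular, multi-word entries are hyphenated here to follow the custom vocabulary formatting guidance, which you should confirm against the current Amazon Transcribe documentation for your language.

```python
from typing import Any, Iterable


def to_vocabulary_phrases(terms: Iterable[str]) -> list[str]:
    """Trim terms, hyphenate multi-word entries, and drop case-insensitive duplicates."""
    seen: set[str] = set()
    phrases: list[str] = []
    for term in terms:
        phrase = "-".join(term.split())
        if phrase and phrase.lower() not in seen:
            seen.add(phrase.lower())
            phrases.append(phrase)
    return phrases


def create_custom_vocabulary(
    transcribe_client: Any, name: str, terms: Iterable[str], language_code: str = "en-US"
) -> dict:
    """Register the phrases as a custom vocabulary in the caller's account."""
    return transcribe_client.create_vocabulary(
        VocabularyName=name,
        LanguageCode=language_code,
        Phrases=to_vocabulary_phrases(terms),
    )
```

For the third step, a streaming request names the vocabulary through the VocabularyName parameter of StartStreamTranscription. The vocabulary must finish processing and reach the READY state before it can be used.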

Asynchronous file uploads

For handling large audio files or improving user experience, implement an asynchronous upload process:

- Create a separate Lambda function for file uploads

- Use Amazon S3 presigned URLs to allow direct uploads from the client to Amazon S3

- Invoke the upload Lambda function using S3 Event Notifications
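The steps above can be sketched in two small functions: one that issues a presigned URL so the browser uploads directly to Amazon S3, and one that reads the resulting S3 event notification inside the Lambda function. The function names and key layout are hypothetical. The event fields follow the standard S3 event notification structure, in which object keys arrive URL-encoded.

```python
from typing import Any
from urllib.parse import unquote_plus


def presigned_upload_url(s3_client: Any, bucket: str, key: str, expires_in: int = 300) -> str:
    """Return a short-lived URL the browser can use to PUT a file directly to S3."""
    return s3_client.generate_presigned_url(
        "put_object",
        Params={"Bucket": bucket, "Key": key},
        ExpiresIn=expires_in,
    )


def uploaded_objects(event: dict) -> list[tuple[str, str]]:
    """Extract (bucket, key) pairs for newly created objects from an S3 event."""
    objects = []
    for record in event.get("Records", []):
        if not record.get("eventName", "").startswith("ObjectCreated"):
            continue
        bucket = record["s3"]["bucket"]["name"]
        # Keys are URL-encoded in the notification, with spaces sent as "+".
        key = unquote_plus(record["s3"]["object"]["key"])
        objects.append((bucket, key))
    return objects
```

With this split, large audio files never pass through API Gateway or the orchestration function, so request size and timeout limits on those services no longer constrain the recording length.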

Multi-topic document generation

For generating comprehensive documents covering multiple topics, refer to the following AWS Prescriptive Guidance pattern: Document institutional knowledge from voice inputs by using Amazon Bedrock and Amazon Transcribe. This pattern provides a scalable approach to combining multiple voice inputs into a single, coherent document.

Key benefits of this approach include:

- Efficient capture of complex, multifaceted knowledge

- Improved document structure and coherence

- Reduced cognitive load on subject matter experts (SMEs)

Use captured knowledge as a knowledge base

The knowledge captured through this solution can serve as a valuable, searchable knowledge base for your organization. To maximize its utility, you can integrate with enterprise search solutions such as Amazon Bedrock Knowledge Bases to make the captured knowledge quickly discoverable. Additionally, you can set up regular review and update cycles to keep the knowledge base current and relevant.
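As a sketch of what discovery could look like, the following function queries a knowledge base with the Retrieve API of the Amazon Bedrock agent runtime. It assumes the generated documents have already been ingested into a knowledge base, which this solution does not set up. The function name and the shape of the returned dictionaries are hypothetical.

```python
from typing import Any


def search_knowledge(
    agent_runtime: Any, knowledge_base_id: str, query: str, top_k: int = 3
) -> list[dict]:
    """Return the best-matching passages for a natural-language query."""
    response = agent_runtime.retrieve(
        knowledgeBaseId=knowledge_base_id,
        retrievalQuery={"text": query},
        retrievalConfiguration={"vectorSearchConfiguration": {"numberOfResults": top_k}},
    )
    return [
        {"text": result["content"]["text"], "score": result.get("score")}
        for result in response["retrievalResults"]
    ]
```

Here `agent_runtime` would typically be `boto3.client("bedrock-agent-runtime")`, and the knowledge base ID comes from your own account.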

Clean up

When you’re done testing the solution, remove it from your AWS account to avoid future costs:

- Invoke cdk destroy to remove the solution

- You may also need to manually remove the S3 buckets created by the solution

Summary

This post demonstrates the power of combining AWS services such as Amazon Transcribe and Amazon Bedrock with popular front-end frameworks such as React to create a robust knowledge capture solution. By using real-time transcription and generative AI, organizations can efficiently document and preserve valuable institutional knowledge, fostering innovation, improving decision-making, and maintaining a competitive edge in dynamic business environments.

We encourage you to explore this solution further by deploying it in your own environment and adapting it to your organization’s specific needs. The source code and detailed instructions are available in our genai-knowledge-capture-webapp GitHub repository, providing a solid foundation for your knowledge capture initiatives.

By adopting this approach to knowledge capture, organizations can make fuller use of their collective wisdom and drive continuous improvement.

About the Authors

Jundong Qiao is a Machine Learning Engineer at AWS Professional Service, where he specializes in implementing and enhancing AI/ML capabilities across various sectors. His expertise encompasses building next-generation AI solutions, including chatbots and predictive models that drive efficiency and innovation.

Michael Massey is a Cloud Application Architect at Amazon Web Services. He helps AWS customers achieve their goals by building highly-available and highly-scalable solutions on the AWS Cloud.

Praveen Kumar Jeyarajan is a Principal DevOps Consultant at AWS, supporting Enterprise customers and their journey to the cloud. He has 13+ years of DevOps experience and is skilled in solving myriad technical challenges using the latest technologies. He holds a Masters degree in Software Engineering. Outside of work, he enjoys watching movies and playing tennis.
