
News & Updates

As a global AI-first consultancy, we’re driving meaningful results through AI-powered innovation and digital transformation, creating lasting impact for businesses and their customers. Our thought leaders actively engage with top global analyst firms, including Forrester, IDC, and more. These partnerships not only validate our capabilities but also amplify the impact…

by George Whittaker. Introduction: Steam’s monthly Hardware & Software Survey, published by Valve, offers a window into what operating systems, hardware, and software choices its user base is making. It has become a key barometer for understanding trends in PC gaming, especially for less dominant platforms like Linux. The newest…

The Raspberry Pi Pico 2 starter kit from Elecrow is an educational device that integrates multiple sensors and components onto a single board for learning electronics and programming. Built around the dual-core Raspberry Pi Pico 2 RP2350 chip, the kit includes 17 sensors, 20 RGB LEDs, and a 2.4-inch TFT color touchscreen…

We hit a major milestone on our Mastodon account. We crossed the 40,000 mark. It’s a pleasant surprise. We have a lot more people on Twitter, Facebook, Instagram and even YouTube. But seeing this number on a non-mainstream platform like Mastodon gives a positive uplift 🕺 💬 Let’s see what you…

Artificial Intelligence

PLAID is a multimodal generative model that simultaneously generates protein 1D sequence and 3D structure,…

Training Diffusion Models with Reinforcement Learning We deployed 100 reinforcement learning (RL)-controlled cars into rush-hour…

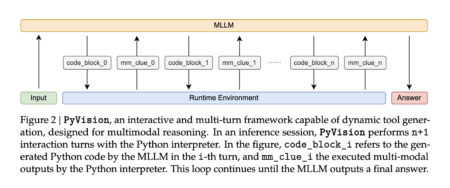

Recent advances in Large Language Models (LLMs) enable exciting LLM-integrated applications. However, as LLMs have…

In order to produce effective targeted therapies for cancer, scientists need to isolate the genetic…