Tsinghua University’s Knowledge Engineering Group (KEG) has unveiled GLM-4 9B, an open-source language model that reportedly outperforms GPT-4 and Gemini on several benchmarks. Developed by the group’s THUDM team, the model marks a significant milestone in the field of natural language processing.

At its core, GLM-4 9B is a 9-billion-parameter language model trained on 10 trillion tokens spanning 26 languages. It supports multi-round dialogue in Chinese and English, code execution, web browsing, and custom tool calling through Function Call.
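To make the Function Call capability more concrete, here is a minimal sketch of how an application might describe a custom tool and run the call a model asks for. The `get_weather` tool, the JSON-schema style description, and the shape of the model's reply (`{"name": ..., "arguments": ...}`) are assumptions chosen for illustration. They are not GLM-4 9B's documented format.

```python
import json

# Hypothetical tool: a stand-in for any function the application exposes.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"

# Tool descriptions that would be sent to the model with the conversation.
# The JSON-schema layout is an assumed, commonly used convention.
TOOLS = [
    {
        "name": "get_weather",
        "description": "Look up the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    }
]

# Registry mapping tool names to Python callables.
REGISTRY = {"get_weather": get_weather}

def dispatch(tool_call_json: str) -> str:
    """Parse a tool call emitted by the model and run the matching function."""
    call = json.loads(tool_call_json)
    func = REGISTRY.get(call["name"])
    if func is None:
        raise KeyError(f"unknown tool: {call['name']}")
    return func(**call["arguments"])

# Assumed model output requesting a tool call.
model_reply = '{"name": "get_weather", "arguments": {"city": "Beijing"}}'
print(dispatch(model_reply))  # prints "Sunny in Beijing"
```

In a real loop, the string returned by `dispatch` would be appended to the conversation so the model can use it in its next turn.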

The model is built on the transformer architecture and its attention mechanisms. The chat version supports a context window of up to 128,000 tokens, while a specialized variant, GLM-4-9B-Chat-1M, extends the context length to 1 million tokens.
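As a rough illustration of what the two context sizes mean in practice, the sketch below picks the smallest context tier that can hold a prompt plus room for a reply. The tier labels (`"128k"`, `"1m"`) and the default reply budget of 1,024 tokens are hypothetical choices. Only the two limits come from the figures above.

```python
# Context limits quoted in the article, in tokens.
# The tier labels are hypothetical names used only in this sketch.
CONTEXT_LIMITS = {
    "128k": 128_000,
    "1m": 1_000_000,
}

def pick_variant(prompt_tokens: int, reply_tokens: int = 1_024):
    """Return the smallest tier that fits prompt plus reply, or None."""
    needed = prompt_tokens + reply_tokens
    # Check tiers from smallest to largest limit.
    for name, limit in sorted(CONTEXT_LIMITS.items(), key=lambda kv: kv[1]):
        if needed <= limit:
            return name
    return None

print(pick_variant(50_000))     # prints "128k"
print(pick_variant(400_000))    # prints "1m"
print(pick_variant(2_000_000))  # prints "None"
```

Token counts depend on the tokenizer, so a real check would count tokens with the model's own tokenizer rather than estimate them.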

Compared with industry giants like GPT and Gemini, the GLM-4 9B family stands out for its support for high-resolution vision tasks (up to 1120 x 1120 pixels, through the multimodal GLM-4V-9B variant) and its ability to handle a diverse range of languages. This versatility positions GLM-4 9B as a strong contender in the language model landscape.

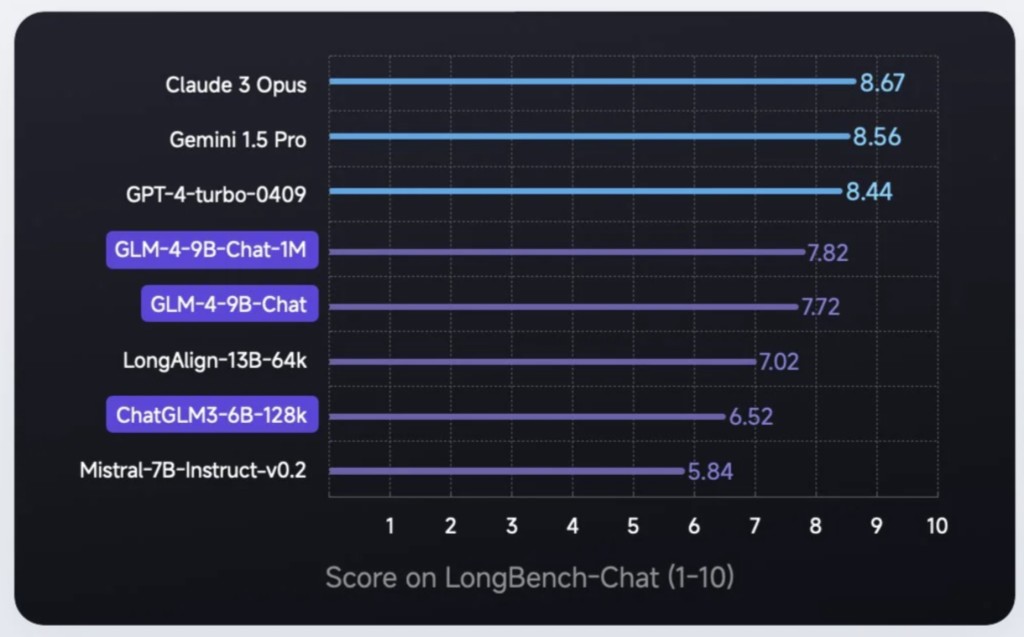

Evaluations on various datasets show GLM-4 9B ahead in many areas and on par with the best models on some tasks, and the reported results put it ahead of the compared models on overall accuracy. Notably, it has outperformed GPT-4, Gemini Pro (in vision tasks), Mistral, and Llama 3 8B, solidifying its position as a formidable force in the field.

With its open-source release and a license that permits commercial use under certain conditions, GLM-4 9B presents a wealth of opportunities for developers, researchers, and businesses alike. Potential applications range from natural language processing tasks to computer vision, code generation, and beyond. The model’s integration with the Transformers library further simplifies its adoption and deployment.
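For readers who want to try the Transformers integration, a minimal sketch might look like the following. It assumes the chat checkpoint is published on the Hugging Face Hub as `THUDM/glm-4-9b-chat`, that `torch`, `transformers`, and `accelerate` (needed for `device_map="auto"`) are installed, and that enough GPU memory is available. The helper names `build_messages` and `generate_reply` are hypothetical.

```python
def build_messages(user_text: str) -> list:
    """Wrap a single user turn in the chat-message format used by chat templates."""
    return [{"role": "user", "content": user_text}]

def generate_reply(user_text: str,
                   model_id: str = "THUDM/glm-4-9b-chat",  # assumed Hub ID
                   max_new_tokens: int = 128) -> str:
    # Imported lazily so the helper above works without the heavy dependencies.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    # trust_remote_code allows the repository's custom model code to run.
    tokenizer = AutoTokenizer.from_pretrained(model_id, trust_remote_code=True)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        torch_dtype=torch.bfloat16,
        trust_remote_code=True,
        device_map="auto",
    )

    # Render the conversation with the model's chat template and tokenize it.
    inputs = tokenizer.apply_chat_template(
        build_messages(user_text),
        add_generation_prompt=True,
        tokenize=True,
        return_tensors="pt",
        return_dict=True,
    ).to(model.device)

    with torch.no_grad():
        output = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # Drop the prompt tokens and decode only the newly generated ones.
    new_tokens = output[0][inputs["input_ids"].shape[1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `generate_reply("Hello")` downloads the weights on first use, so it is best run on a machine prepared for a model of this size.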

The release of GLM-4 9B by Tsinghua University’s KEG marks a significant milestone for language models. With its strong benchmark performance, multilingual capabilities, and versatile architecture, the model sets a new benchmark for open-source language models and paves the way for further advances in natural language processing and artificial intelligence.

Check out the model on its Hugging Face page. All credit for this research goes to the researchers of this project.

The post Meet Tsinghua University’s GLM-4-9B-Chat-1M: An Outstanding Language Model Challenging GPT 4V, Gemini Pro (on vision), Mistral and Llama 3 8B appeared first on MarkTechPost.
