Graph Neural Networks (GNNs) are crucial in processing data from domains such as e-commerce and social networks because they manage complex structures. Traditionally, GNNs operate on data that fits within a system’s main memory. However, with the growing scale of graph data, many networks now require methods to handle datasets that exceed memory limits, introducing the need for out-of-core solutions where data resides on disk.

Despite their necessity, existing out-of-core GNN systems struggle to balance efficient data access with model accuracy. Current systems face a trade-off: either suffer from slow input/output operations due to small, frequent disk reads or compromise accuracy by handling graph data in disconnected chunks. For instance, while pioneering, these challenges have limited previous solutions like Ginex and MariusGNN, showing significant drawbacks in training speed or accuracy.

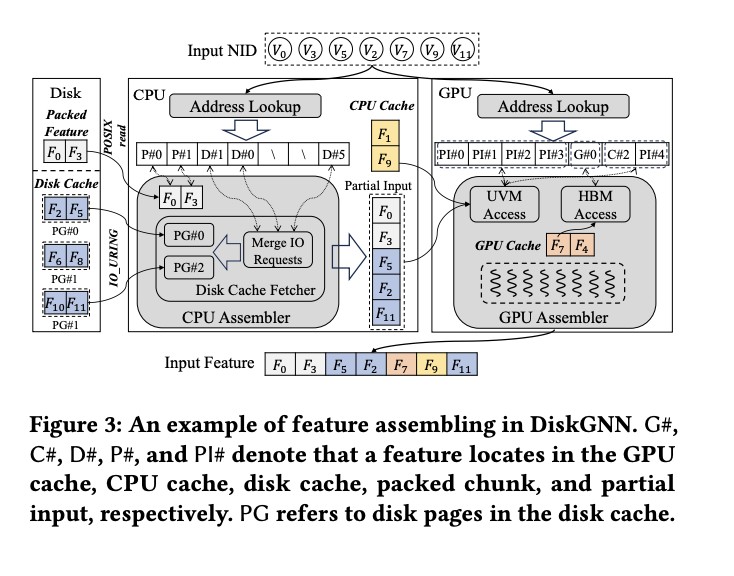

The DiskGNN framework, developed by researchers from Southern University of Science and Technology, Shanghai Jiao Tong University, Centre for Perceptual and Interactive Intelligence, AWS Shanghai AI Lab, and New York University, emerges as a transformative solution specifically designed to optimize the speed and accuracy of GNN training on large datasets. This system utilizes an innovative offline sampling technique that prepares data for quick access during training. By preprocessing and arranging graph data based on expected access patterns, DiskGNN reduces unnecessary disk reads, significantly enhancing training efficiency.

The architecture of DiskGNN is built around a multi-tiered storage approach that cleverly utilizes GPU and CPU memory alongside disk storage. This structure ensures that frequently accessed data is kept closer to the computation layer, substantially speeding up the training process. For instance, in benchmark tests, DiskGNN demonstrated a speedup of over eight times compared to baselines, with training epochs averaging around 76 seconds compared to 580 seconds for systems like Ginex.

Performance evaluations further illustrate DiskGNN’s efficacy. The system accelerates the GNN training process and maintains high model accuracy. For example, in tests involving the Ogbn-papers100M graph dataset, DiskGNN matched or exceeded the best model accuracies of existing systems while significantly reducing the average epoch time and disk access time. Specifically, DiskGNN managed to maintain an accuracy of approximately 65.9% while reducing the average disk access time to just 51.2 seconds, compared to 412 seconds in previous systems.

DiskGNN’s design minimizes the typical amplification of read operations inherent in disk-based systems. The system effectively avoids the typical scenario where each training step requires multiple, small-scale read operations by organizing node features into contiguous blocks on the disk. This reduces the load on the storage system and decreases the time spent waiting for data, thus optimizing the overall training pipeline.

In conclusion, DiskGNN, which addresses the dual challenges of data access speed and model accuracy, sets a new standard for out-of-core GNN training. DiskGNN’s strategic data management and innovative architecture allow it to outperform existing solutions, offering a faster, more accurate approach to training graph neural networks. This makes it an invaluable tool for researchers and industries working with extensive graph datasets, where performance and accuracy are paramount.

Check out the Paper. All credit for this research goes to the researchers of this project. Also, don’t forget to follow us on Twitter. Join our Telegram Channel, Discord Channel, and LinkedIn Group.

If you like our work, you will love our newsletter..

Don’t Forget to join our 42k+ ML SubReddit

The post Optimizing Graph Neural Network Training with DiskGNN: A Leap Toward Efficient Large-Scale Learning appeared first on MarkTechPost.

Source: Read MoreÂ