Humans are versatile learners: they can quickly apply what they have learned from a few examples to broader contexts by combining new and old information. They can anticipate possible setbacks and identify what matters for success, and they quickly learn to adapt to new situations through practice and feedback on what works. This process lets them refine knowledge and transfer it across many tasks and situations.

Recent research has used vision-language models (VLMs) and large language models (LLMs) to extract high-level insights from trajectories and experiences. The models generate these insights through introspection, and the insights are then attached to prompts to improve performance, exploiting the models' strong in-context learning ability. Most current approaches rely on language in one of several ways: to communicate task rewards, to store human corrections after failures, to have domain experts write or select examples without reflection, or to specify rules and incentives in language. These approaches are mostly text-based and make no use of visual cues or demonstrations. They also rely solely on introspection after failures, which is only one of many ways that humans and machines can accumulate experience and derive insights.
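
The mechanism these methods share, retrieving stored insights and attaching them to a prompt, can be sketched in a few lines of Python. This is a hypothetical illustration, not code from any of the works discussed: the bag-of-words cosine similarity stands in for a real text or image encoder, and the names `embed`, `cosine`, `retrieve`, and `build_prompt`, as well as the example memory contents, are invented for this sketch.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding' (a stand-in for a real text/image encoder)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(memory: list[dict], query: str, k: int = 2) -> list[dict]:
    """Return the k stored examples whose task text is most similar to the query."""
    q = embed(query)
    ranked = sorted(memory, key=lambda ex: cosine(q, embed(ex["task"])), reverse=True)
    return ranked[:k]


def build_prompt(memory: list[dict], query: str, k: int = 2) -> str:
    """Prepend the retrieved insights to the new task so the model can use them in context."""
    parts = [f"Task: {ex['task']}\nInsight: {ex['insight']}" for ex in retrieve(memory, query, k)]
    parts.append(f"Task: {query}\nInsight:")
    return "\n\n".join(parts)


# Hypothetical memory of previously derived insights.
memory = [
    {"task": "make coffee in the kitchen", "insight": "Rinse the mug before filling it."},
    {"task": "water the plant", "insight": "Fill a container at the sink first."},
    {"task": "make toast", "insight": "Slice the bread before using the toaster."},
]
print(build_prompt(memory, "make a cup of coffee", k=1))
```

In practice `embed` would be replaced by a learned encoder; keeping retrieval separate from prompt assembly makes it easy to vary `k`, the number of examples placed in context.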

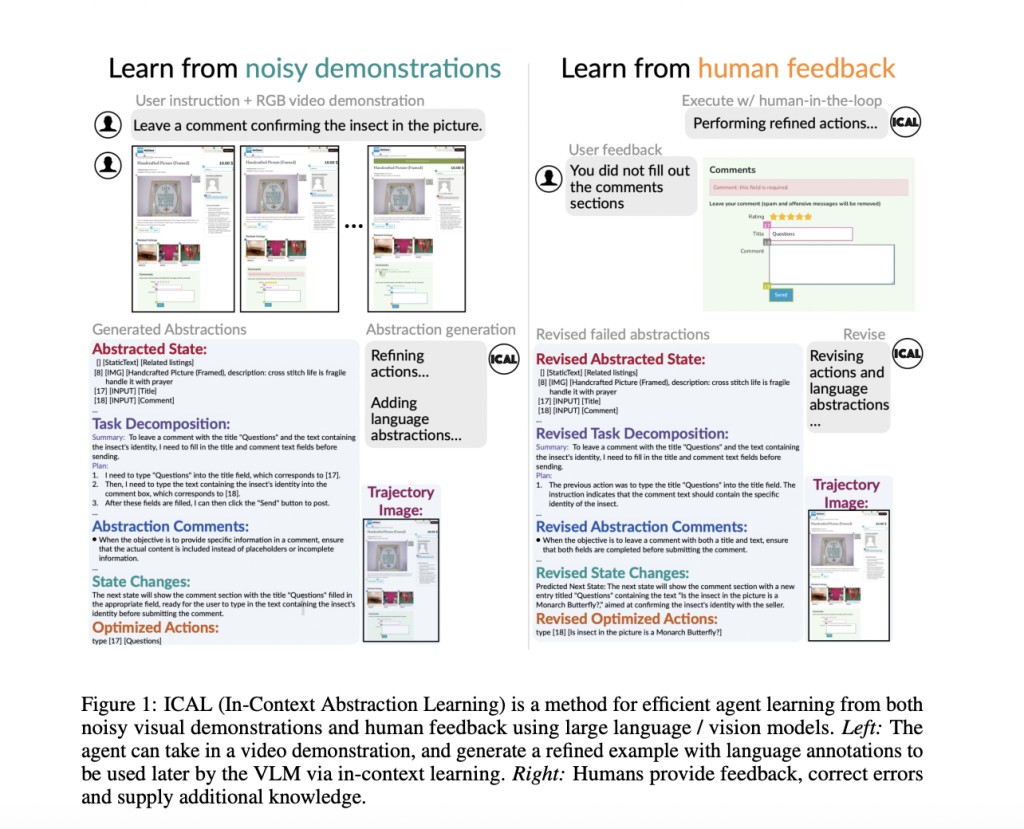

A new study by Carnegie Mellon University and Google DeepMind introduces In-Context Abstraction Learning (ICAL), an approach that guides VLMs to build multimodal abstractions in novel domains. In simpler terms, ICAL helps VLMs learn from their experiences in different situations so that they can adapt and perform better on new tasks. Rather than storing and recalling successful action plans or trajectories, as earlier efforts did, ICAL emphasizes learning abstractions that capture task dynamics and critical knowledge. Specifically, ICAL targets four kinds of cognitive abstractions:

- Task and causal relationships, which reveal the underlying principles or actions required to accomplish a goal and how its elements are interconnected.
- Changes in object states, which show the different shapes or states an object can take.
- Temporal abstractions, which divide tasks into smaller subgoals.
- Task construals, which highlight the important visual aspects within a task.
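
One way to picture a stored example that carries these four abstraction types is as a small record rendered into prompt text. The sketch below is an assumption for illustration only: the class name `AbstractedExample`, its field names, the section titles, and action strings such as `slice(bread)` are hypothetical and do not reflect ICAL's actual memory or prompt format.

```python
from dataclasses import dataclass, field


@dataclass
class AbstractedExample:
    """A stored example annotated with the four abstraction types (hypothetical format)."""
    instruction: str
    actions: list[str]                                             # (possibly corrected) action sequence
    causal_relationships: list[str] = field(default_factory=list)  # task and causal relationships
    state_changes: list[str] = field(default_factory=list)         # object state changes
    subgoals: list[str] = field(default_factory=list)              # temporal abstractions
    construals: list[str] = field(default_factory=list)            # task-relevant visual aspects

    def to_prompt(self) -> str:
        """Render the example as plain text suitable for an in-context prompt."""
        sections = [
            ("Instruction", [self.instruction]),
            ("Causal relationships", self.causal_relationships),
            ("State changes", self.state_changes),
            ("Subgoals", self.subgoals),
            ("Task construal", self.construals),
            ("Actions", self.actions),
        ]
        lines = []
        for title, items in sections:
            if items:  # skip empty sections to keep the prompt compact
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


example = AbstractedExample(
    instruction="Make a slice of toast",
    actions=["navigate(bread)", "slice(bread)", "place(bread, toaster)", "toggle_on(toaster)"],
    causal_relationships=["Bread must be sliced before it fits in the toaster."],
    state_changes=["bread: whole -> sliced -> toasted"],
    subgoals=["Slice the bread", "Toast one slice"],
    construals=["Attend to the knife, bread, and toaster."],
)
print(example.to_prompt())
```

Rendering each abstraction type under its own heading keeps the example readable for the model while letting empty categories drop out.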

Given a demonstration, which may be good or bad, ICAL prompts a VLM to correct the trajectory and generate relevant verbal and visual abstractions. The revised trajectory is then executed in the environment, guided by natural-language feedback from humans, which further refines these abstractions. With each round of abstraction generation, the model draws on previously derived abstractions, improving both its execution and its abstraction capabilities. The resulting abstractions concisely summarize rules, focal regions, action sequences, state transitions, and visual representations in free-form natural language.
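
The control flow described here, an abstraction phase followed by human-in-the-loop refinement, can be sketched as a loop over pluggable callables. This is a simplified, hypothetical sketch rather than the authors' implementation: `learn_example` and the `toy_*` stubs are invented names, the stubs replace the VLM, the environment, and the human with trivial rules, and storing only successfully executed examples is an assumption made for the sketch.

```python
from typing import Callable

Trajectory = list[str]
Memory = list[dict]


def learn_example(
    demo: Trajectory,
    memory: Memory,
    abstract: Callable[[Trajectory, Memory], tuple[Trajectory, list[str]]],
    execute: Callable[[Trajectory], tuple[bool, str]],
    feedback: Callable[[str], str],
    refine: Callable[[Trajectory, list[str], str, Memory], tuple[Trajectory, list[str]]],
    max_rounds: int = 3,
) -> bool:
    """Phase 1: revise the demo and annotate abstractions.
    Phase 2: execute; on failure, turn natural-language feedback into a revision.
    A successful example is appended to memory so later calls can draw on it."""
    trajectory, abstractions = abstract(demo, memory)
    for _ in range(max_rounds):
        ok, error = execute(trajectory)
        if ok:
            memory.append({"trajectory": trajectory, "abstractions": abstractions})
            return True
        hint = feedback(error)
        trajectory, abstractions = refine(trajectory, abstractions, hint, memory)
    return False  # give up: the example is not stored


def toy_abstract(demo, memory):
    # Stand-in for a VLM: drop consecutive duplicate steps and add one abstraction.
    cleaned = [a for i, a in enumerate(demo) if i == 0 or a != demo[i - 1]]
    return cleaned, ["Subgoal: toast a slice of bread"]


def toy_execute(traj):
    # Toy environment: toasting only succeeds if the bread was sliced earlier.
    if "toast" in traj and "slice" not in traj[: traj.index("toast")]:
        return False, "Bread is too thick for the toaster."
    return True, ""


def toy_feedback(error):
    # Stand-in for a human explaining the failure in natural language.
    return "Slice the bread before toasting it."


def toy_refine(traj, abstractions, hint, memory):
    # Stand-in for a VLM: insert the missing step and record the hint as an abstraction.
    i = traj.index("toast")
    return traj[:i] + ["slice"] + traj[i:], abstractions + [f"Causal: {hint}"]


memory = []
ok = learn_example(["pick_up", "pick_up", "toast"], memory,
                   toy_abstract, toy_execute, toy_feedback, toy_refine)
print(ok, memory[0]["trajectory"])
```

Passing the model, environment, and feedback source in as functions keeps the loop testable; in a real system, `abstract` and `refine` would be model calls that also condition on the examples already in `memory`.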

Using the learned example abstractions, the researchers evaluated their agent on three benchmarks that are widely used to compare models: VisualWebArena for multimodal autonomous web tasks, TEACh for dialogue-based instruction following in household environments, and Ego4D for video action anticipation.

On TEACh, the agent sets a new state of the art, outperforming VLM agents that rely on raw demonstrations or on extensive hand-written examples from domain experts, which shows how effective ICAL-learned abstractions are for in-context learning. In particular, ICAL improves goal-condition success by 12.6% over the prior state of the art, HELPER. Performance also grows with the size of the external memory: after just ten examples, the method yields a 14.7% improvement on unseen tasks. Combining the learned examples with LoRA-based LLM fine-tuning [32] raises goal-condition success by a further 4.9%. On VisualWebArena, the agent reaches a 22.7% success rate, surpassing the 14.3% of the previous state of the art, GPT-4V + Set of Marks. On Ego4D, ICAL with chain-of-thought prompting outperforms few-shot GPT-4V, reducing noun edit distance by 6.4 and action edit distance by 1.7, and it remains competitive with fully supervised approaches despite using 639 times less in-domain training data.
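
The Ego4D results are reported as edit distances between predicted and ground-truth action sequences, where lower is better. As an illustration only, assuming a standard Levenshtein distance over sequences of labels (the article does not describe the benchmark's exact protocol, such as how many future actions are predicted, how multiple candidate predictions are aggregated, or whether the count is normalized by sequence length), the metric can be computed as follows; the noun sequences are made up.

```python
def edit_distance(pred: list[str], gold: list[str]) -> int:
    """Levenshtein distance: minimum insertions, deletions, and substitutions
    needed to turn `pred` into `gold`, counted per label rather than per character."""
    prev = list(range(len(gold) + 1))  # distances from the empty prefix of pred
    for i, p in enumerate(pred, start=1):
        curr = [i]
        for j, g in enumerate(gold, start=1):
            curr.append(min(
                prev[j] + 1,             # delete p
                curr[j - 1] + 1,         # insert g
                prev[j - 1] + (p != g),  # substitute (free if labels match)
            ))
        prev = curr
    return prev[-1]


# Hypothetical noun sequences for one video clip.
predicted = ["knife", "bread", "plate", "knife"]
ground_truth = ["bread", "knife", "plate", "butter"]
print(edit_distance(predicted, ground_truth))
```

Computing noun and action distances separately, as the reported numbers suggest, just means calling the same function on different label sequences.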

ICAL consistently outperforms in-context learning from action plans or trajectories that lack such abstractions, while significantly reducing the need for carefully hand-crafted examples. The team notes several areas for further study and potential challenges, such as how well ICAL handles noisy demonstrations and its dependence on a static action API. The authors present these as directions for future improvement rather than fundamental limitations.

The post CMU Researchers Propose In-Context Abstraction Learning (ICAL): An AI Method that Builds a Memory of Multimodal Experience Insights from Sub-Optimal Demonstrations and Human Feedback appeared first on MarkTechPost.