Large Language Models (LLMs) can perform a wide range of tasks on text. In this tutorial, you’ll learn how to use LeMUR to summarize audio with Node.js.

Summarizing audio is a two-step process. First, you need to transcribe the audio to text. Then, once you have a transcript, you need to prompt an LLM to summarize it. In this tutorial, you’ll use AssemblyAI for both of these steps, using the Universal-1 model to transcribe the audio and LeMUR to summarize it.

Set up your environment

First, install Node.js 18 or higher on your system.

Next, create a new project folder, change into it, and initialize a new Node.js project:

mkdir audio-summarization

cd audio-summarization

npm init -y

Open the package.json file and add "type": "module" to the list of properties:

{
  …
  "type": "module",
  …
}

This tells Node.js to use ES module syntax for importing and exporting modules instead of the older CommonJS syntax.

Then, install the AssemblyAI JavaScript SDK, which lets you interact with the AssemblyAI API more easily:

npm install --save assemblyai

Next, you need an AssemblyAI API key that you can find on your dashboard. If you don’t have an AssemblyAI account yet, you must first sign up. Once you’ve copied your API key, configure it as the ASSEMBLYAI_API_KEY environment variable on your machine:

# Mac/Linux:

export ASSEMBLYAI_API_KEY=<YOUR_KEY>

# Windows:

set ASSEMBLYAI_API_KEY=<YOUR_KEY>
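If the variable is missing, the problem may only surface later, when the first API request fails. A minimal sketch of failing fast instead; `requireEnv` is a hypothetical helper written for this illustration and is not part of the AssemblyAI SDK.

```javascript
// Hypothetical helper (not part of the AssemblyAI SDK): read a required
// environment variable and fail early with a clear message if it is missing.
function requireEnv(name, env = process.env) {
  const value = env[name];
  if (typeof value !== "string" || value.trim() === "") {
    throw new Error(`Environment variable ${name} is not set`);
  }
  // Trim stray whitespace that can sneak in when copying a key.
  return value.trim();
}
```

You could then create the client with `new AssemblyAI({ apiKey: requireEnv("ASSEMBLYAI_API_KEY") })`.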

Automatic summarization of a video with Node.js

You’ll summarize this episode of the Lex Fridman podcast in which Lex speaks with Guido van Rossum, the creator of Python.

Transcription

First, you’ll transcribe the podcast episode, which only takes three lines of code. Create a file called autosummarize.js and paste the following code into it:

import { AssemblyAI } from 'assemblyai';

// create AssemblyAI API client
const client = new AssemblyAI({ apiKey: process.env.ASSEMBLYAI_API_KEY });

// transcribe audio file with punctuation and text formatting
const transcript = await client.transcripts.transcribe({
  // replace with local file path or your remote file
  audio: "https://storage.googleapis.com/aai-web-samples/lex_guido.webm"
});

The code imports the AssemblyAI client class from the assemblyai module, instantiates the client with your API key, and finally transcribes the video file.

If everything goes well, the transcript object will be populated with the transcript text and many other transcript properties. However, you should check whether an error occurred and log the error.

Add the following lines to autosummarize.js:

// throw error if transcript status is error
if (transcript.status === "error") {
  throw new Error(transcript.error);
}
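If you also want the failure logged before the script stops, the check can be wrapped in a small function. This is a sketch only: `ensureTranscribed` is a hypothetical name, and it assumes nothing about the transcript object beyond the `status`, `error`, and `id` fields the tutorial code already reads.

```javascript
// Hypothetical helper: log a failed transcript and throw, so the script
// stops before LeMUR is called. Returns the transcript unchanged otherwise.
function ensureTranscribed(transcript, log = console.error) {
  if (transcript.status === "error") {
    log(`Transcription ${transcript.id} failed: ${transcript.error}`);
    throw new Error(transcript.error);
  }
  return transcript;
}
```

Passing the logger as a parameter keeps the function easy to exercise with plain objects, without calling the API.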

Automatic summarization

Now that you have a transcript, you can automatically summarize it using LeMUR, our LLM framework for prompting audio. There are a few potential parameters you can include when calling LeMUR.

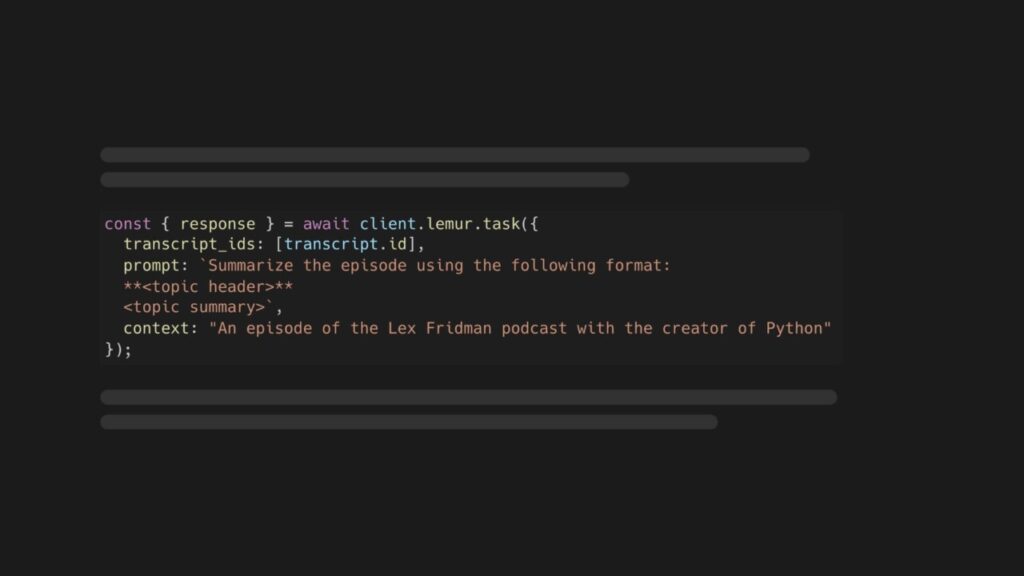

In this tutorial, you’ll use the prompt and context parameters.

With the prompt, you tell LeMUR what to do, and the context provides wider context to LeMUR to contextualize the contents of the transcript. Add the following lines of code to autosummarize.js:

const { response } = await client.lemur.task({
  transcript_ids: [transcript.id],
  prompt: `Summarize the episode using the following format:
**<topic header>**
<topic summary>
`,
  context: "An episode of the Lex Fridman podcast, in which he speaks with Guido van Rossum, the creator of the Python programming language"
});

console.log("LeMUR response", response);
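If you plan to summarize more than one recording, it can help to build the task parameters in one place. The following is a minimal sketch under that assumption; `buildSummaryTask` is a hypothetical helper, and it uses only the `transcript_ids`, `prompt`, and `context` parameters shown in this tutorial.

```javascript
// Hypothetical helper: assemble the parameters passed to client.lemur.task().
// The prompt repeats the output format used in this tutorial; the context
// is supplied by the caller and should describe the audio being summarized.
function buildSummaryTask(transcriptId, context) {
  const prompt = [
    "Summarize the episode using the following format:",
    "**<topic header>**",
    "<topic summary>",
    "",
  ].join("\n");
  return { transcript_ids: [transcriptId], prompt, context };
}
```

The result can be passed directly to the SDK, for example `await client.lemur.task(buildSummaryTask(transcript.id, "An episode of the Lex Fridman podcast"))`.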

Run the script

To run the script, go back to your shell and run:

node autosummarize.js

After a minute or two, you’ll see the summary printed to the terminal:

**Python’s design choices**

Guido discusses the rationale behind Python’s indentation style over curly braces, which reduces clutter and is simpler for beginners. However, most programmers are familiar with curly braces from other languages. The dollar sign used before variables in PHP originated in early Unix shells to distinguish variables from program names and file names. Choosing different programming languages involves difficult trade-offs.

**Improving CPython’s performance**

Guido initially coded CPython simply and efficiently, but over time more optimized algorithms were developed to improve performance. The example of prime number checking illustrates the time-space tradeoff in algorithms.

**The history of asynchronous I/O in Python**

In the late 1990s and early 2000s, the Python standard library included modules for asynchronous I/O and networking. However, over time these modules became outdated. Around 2012 to 2014, developers proposed updating these modules, but were told to use third party libraries instead. Guido proposed updating asynchronous I/O in the standard library. He worked with developers of third party libraries on the design. The new asynchronous I/O module was added to the standard library and has been successful, particularly for Python web clients.

**Python for machine learning and data science**

In the early 1990s, scientists used Fortran and C++ libraries to solve mathematical problems. Paul Dubois saw that a higher level language was needed to tie algorithms together. In the mid 1990s, Python libraries emerged to support large arrays efficiently. Scientists at different institutions discovered Python had the infrastructure they needed. Exchanging code in the same language is preferable to starting from scratch in another language. This is how Python became dominant for machine learning and data science.

**The global interpreter lock (GIL)**

The GIL allowed for multi-threading even on single core CPUs. As multi-core CPUs became common, the GIL became an issue. Removing the GIL could be an option for Python 4.0, though it would require recompiling extension modules and supporting third party packages.

**Guido’s experience as BDFL**

While providing clarity and direction for the community, the BDFL role also caused Guido personal stress. He feels he relinquished the role too late, but the new steering council structure has led the community steadily.

**The future of Python and programming**

Python will become a legacy language built upon without needing details. Abstractions are built upon each other at different levels.
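Because the prompt asks for a fixed format, the response can be split into topics for further processing. The sketch below assumes each header sits alone on a line wrapped in double asterisks, as in the output above; an LLM may not always follow the requested format exactly, so treat `parseSummary` (a hypothetical helper) as a best-effort parser.

```javascript
// Split a summary in the "**<topic header>**" / "<topic summary>" format
// into { header, summary } objects. Text before the first header is ignored.
function parseSummary(text) {
  const sections = [];
  let current = null;
  for (const rawLine of text.split(/\r?\n/)) {
    const line = rawLine.trim();
    const match = line.match(/^\*\*(.+)\*\*$/);
    if (match) {
      // A new header starts a new section.
      current = { header: match[1].trim(), summary: "" };
      sections.push(current);
    } else if (current && line !== "") {
      // Join multi-line summaries with single spaces.
      current.summary += (current.summary ? " " : "") + line;
    }
  }
  return sections;
}
```

Calling `parseSummary(response)` on the LeMUR response returns an array you can loop over, for instance to render each topic separately.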

Automatically summarize audio files with Node.js

The AssemblyAI JS SDK also accepts audio files as a public URL, local path, stream, or buffer. You can find a list of all supported audio and video formats here. To automatically summarize an audio file, you can use the same code as above but simply pass in an audio file:

import { AssemblyAI } from 'assemblyai';

// create AssemblyAI API client
const client = new AssemblyAI({ apiKey: process.env.ASSEMBLYAI_API_KEY });

// transcribe audio file with punctuation and text formatting
const transcript = await client.transcripts.transcribe({
  // replace with local file path or your remote file
  audio: "https://storage.googleapis.com/aai-web-samples/meeting.mp3"
});

// throw error if transcript status is error
if (transcript.status === "error") {
  throw new Error(transcript.error);
}

const { response } = await client.lemur.task({
  transcript_ids: [transcript.id],
  prompt: `Summarize the recording using the following format:
**<topic header>**
<topic summary>
`,
  // replace with a description of your own audio
  context: "A recording of a meeting"
});

console.log("LeMUR response", response);

Final words

In this tutorial, you learned how to automatically summarize audio and video files in Node.js using AssemblyAI’s Speech-To-Text model and LeMUR LLM framework.

LeMUR is capable of much more than summarization. It also supports multiple models through the final_model parameter, so you can prompt your audio with your preferred LLM.
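As a rough illustration, the model choice can be layered onto existing task parameters. `withFinalModel` is a hypothetical helper, and the model identifier is deliberately left to the caller: check the LeMUR documentation for the values that are currently supported.

```javascript
// Return a copy of the LeMUR task parameters with final_model set.
// If no model is given, the parameters are copied unchanged so the
// API default applies.
function withFinalModel(taskParams, finalModel) {
  if (!finalModel) {
    return { ...taskParams };
  }
  return { ...taskParams, final_model: finalModel };
}
```

The returned object can be passed to `client.lemur.task()` in place of the original parameters; the input object is never mutated.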
