Large language models (LLMs) have gained significant attention as powerful tools for various tasks, but their potential as general-purpose decision-making agents presents unique challenges. To function effectively as agents, LLMs must go beyond simply generating plausible text completions. They need to exhibit interactive, goal-directed behavior to accomplish specific tasks. This requires two critical abilities: actively seeking information about the task and making decisions that can be improved through “thinking” and verification at inference time. Current methodologies struggle to achieve these capabilities, particularly in complex tasks requiring logical reasoning. While LLMs often possess the necessary knowledge, they frequently fail to apply it effectively when asked to correct their own mistakes sequentially. This limitation highlights the need for a more robust approach to enable test-time self-improvement in LLM agents.

Researchers have attempted various approaches to enhance the reasoning and thinking capabilities of foundation models for downstream applications. These methods primarily focus on developing prompting techniques for effective multi-turn interaction with external tools, sequential refinement of predictions through reflection, thought verbalization, self-critique and revision, or using other models for response criticism. While some of these approaches show promise in improving responses, they often rely on detailed error traces or external feedback to succeed.

Prompting techniques, although useful, have limitations. Studies indicate that intrinsic self-correction guided solely by the LLM itself is often infeasible for off-the-shelf models, even when they possess the required knowledge to tackle the prompt. Fine-tuning LLMs to obtain self-improvement capabilities has also been explored, using strategies such as training on self-generated responses, learned verifiers, search algorithms, contrastive prompting on negative data, and iterated supervised or reinforcement learning.

However, these existing methods primarily focus on improving single-turn performance rather than on enabling a model to improve its answer over sequential turns of interaction. While some work has explored fine-tuning LLMs for multi-turn interaction directly via reinforcement learning, that work addresses different challenges from those that arise when a single-turn problem is solved over multiple turns.

Researchers from Carnegie Mellon University, UC Berkeley, and MultiOn present RISE (Recursive IntroSpEction), an approach for enhancing LLMs’ self-improvement capabilities. The method uses an iterative fine-tuning procedure that frames single-turn prompts as multi-turn Markov decision processes. Drawing on principles from online imitation learning and reinforcement learning, RISE develops strategies for multi-turn data collection and training. This enables LLMs to recursively detect and correct their mistakes in subsequent iterations, a capability previously thought challenging to attain. Unlike traditional methods that focus on single-turn performance, RISE aims to instill dynamic self-improvement in LLMs, which could improve their problem-solving abilities in complex scenarios.

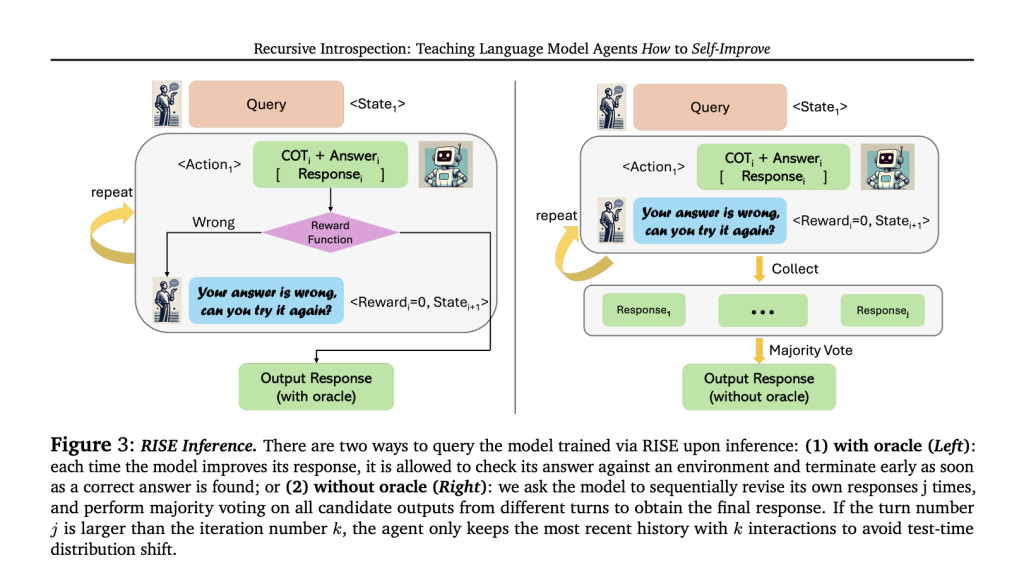

RISE presents an innovative approach to fine-tune foundation models for self-improvement over multiple turns. The method begins by converting single-turn problems into a multi-turn Markov Decision Process (MDP). This MDP construction transforms prompts into initial states, with model responses serving as actions. The next state is created by concatenating the current state, the model’s action, and a fixed introspection prompt. Rewards are based on answer correctness. RISE then employs strategies for data collection and learning within this MDP framework. The approach uses either distillation from a more capable model or self-distillation to generate improved responses. Finally, RISE applies reward-weighted supervised learning to train the model, enabling it to enhance its predictions over sequential attempts.
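The MDP construction described above can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' implementation: the wording of `INTROSPECTION_PROMPT`, the `Turn` record, the newline separator, and the `policy` and `is_correct` callables are all assumptions introduced here for the example.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical wording: the article only says the introspection prompt is fixed.
INTROSPECTION_PROMPT = "Your previous answer may be wrong. Reconsider and answer again."


@dataclass
class Turn:
    state: str     # everything the model sees at this turn
    action: str    # the model's response at this turn
    reward: float  # 1.0 if the response is correct, else 0.0


def next_state(state: str, action: str) -> str:
    """Concatenate the current state, the model's action, and the fixed prompt."""
    return "\n".join([state, action, INTROSPECTION_PROMPT])


def rollout(
    prompt: str,
    policy: Callable[[str], str],       # assumed stand-in for an LLM call
    is_correct: Callable[[str], bool],  # assumed answer checker
    max_turns: int = 5,
) -> List[Turn]:
    """Unroll a single-turn problem into a multi-turn episode."""
    state = prompt  # the prompt is the initial state
    turns: List[Turn] = []
    for _ in range(max_turns):
        action = policy(state)
        reward = 1.0 if is_correct(action) else 0.0
        turns.append(Turn(state, action, reward))
        # No early stopping: the rollout does not consult the checker to decide
        # whether to continue, so it also works when no oracle is available.
        state = next_state(state, action)
    return turns
```

With a toy policy that only answers correctly once it has seen the introspection prompt, `rollout("What is 2 + 2?", toy_policy, checker, max_turns=3)` yields one incorrect turn followed by two correct ones, which is the kind of multi-turn trajectory the training data is built from.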

RISE demonstrates significant performance improvements across multiple benchmarks. On GSM8K, RISE boosted the Llama2 base model’s five-turn performance by 15.1% and 17.7% after one and two iterations respectively, without using an oracle. On MATH, improvements of 3.4% and 4.6% were observed. These gains surpass those achieved by other methods, including prompting-only self-refinement and standard fine-tuning on oracle data. Notably, RISE outperforms sampling multiple responses in parallel, indicating its ability to genuinely correct mistakes over sequential turns. The method’s effectiveness persists across different base models, with Mistral-7B + RISE outperforming Eurus-7B-SFT, a model specifically fine-tuned for math reasoning. A self-distillation version of RISE also shows promise, improving five-turn performance even with entirely self-generated data and supervision.

RISE introduces a unique approach for fine-tuning Large Language Models to improve their responses over multiple turns. By converting single-turn problems into multi-turn Markov Decision Processes, RISE employs iterative reinforcement learning on on-policy rollout data, using expert or self-generated supervision. The method significantly enhances self-improvement abilities of 7B models on reasoning tasks, outperforming previous approaches. Results show consistent performance gains across different base models and tasks, demonstrating genuine sequential error correction. While computational constraints currently limit the number of training iterations, especially with self-generated supervision, RISE presents a promising direction for advancing LLM self-improvement capabilities.
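To make the idea of reward-weighted supervised learning on rollout data more concrete, here is a small sketch of one possible loss. It assumes an exponential weighting `exp(reward / temperature)`, which is a common choice in reward-weighted regression; the article does not specify the weighting, so the function name, the `temperature` parameter, and the weighting itself are assumptions made for illustration.

```python
import math
from typing import Sequence


def reward_weighted_nll(
    log_probs: Sequence[float],  # log-probability of each target response under the model
    rewards: Sequence[float],    # reward attached to each target response
    temperature: float = 1.0,    # assumed hyperparameter controlling how sharply rewards count
) -> float:
    """Average negative log-likelihood, with each example weighted by exp(reward / temperature)."""
    if len(log_probs) != len(rewards):
        raise ValueError("log_probs and rewards must have the same length")
    if not log_probs:
        raise ValueError("at least one example is required")
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    weights = [math.exp(r / temperature) for r in rewards]
    weighted_sum = sum(w * lp for w, lp in zip(weights, log_probs))
    return -weighted_sum / len(log_probs)
```

Under this weighting, a response with reward 1 contributes about 2.7 times as much to the loss as a response with reward 0 at `temperature=1.0`, so minimizing the loss pushes the model harder toward responses that were judged correct. Lowering the temperature makes that preference sharper.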

Check out the Paper. All credit for this research goes to the researchers of this project.

The post Recursive IntroSpEction (RISE): A Machine Learning Approach for Fine-Tuning LLMs to Improve Their Own Responses Over Multiple Turns Sequentially appeared first on MarkTechPost.
