Many customers struggle to manage diverse data sources and want a chatbot that can orchestrate those sources to give comprehensive answers. This post presents a solution for building a chatbot that answers queries from both documentation and databases and is straightforward to deploy.

Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading AI companies like AI21 Labs, Anthropic, Cohere, Meta, Stability AI, and Amazon through a single API, along with a broad set of capabilities to build generative AI applications with security, privacy, and responsible AI. For documentation retrieval, Retrieval Augmented Generation (RAG) is a key tool. It retrieves data from sources outside the foundation model and adds the contextually relevant results to the prompt. You can use prompt engineering to reduce hallucination and make sure that the answer is grounded in the source documentation. To retrieve data from a database, you can use FMs offered by Amazon Bedrock to convert text into SQL queries with specified constraints. The generated queries extract data from Amazon Athena tables to answer data-related questions.

More complex queries require information from both documentation and databases. Agents for Amazon Bedrock is a generative AI tool offered through Amazon Bedrock that enables generative AI applications to execute multistep tasks across company systems and data sources. This integration lets the chatbot combine information from both kinds of sources into a single detailed answer.

This post demonstrates how to build a chatbot using Amazon Bedrock, including Agents for Amazon Bedrock and Knowledge Bases for Amazon Bedrock, within an automated solution. The code used in this solution is available in the GitHub repo.

Solution overview

In this post, we use publicly available data, encompassing both unstructured and structured formats, to showcase our entirely automated chatbot system. Our unstructured data comes from the Amazon EC2 User Guide for Linux Instances and Amazon EC2 Instance Types documentation, and the structured data is derived from the EC2 Instance On-Demand Pricing for the US East (N. Virginia) AWS Region.

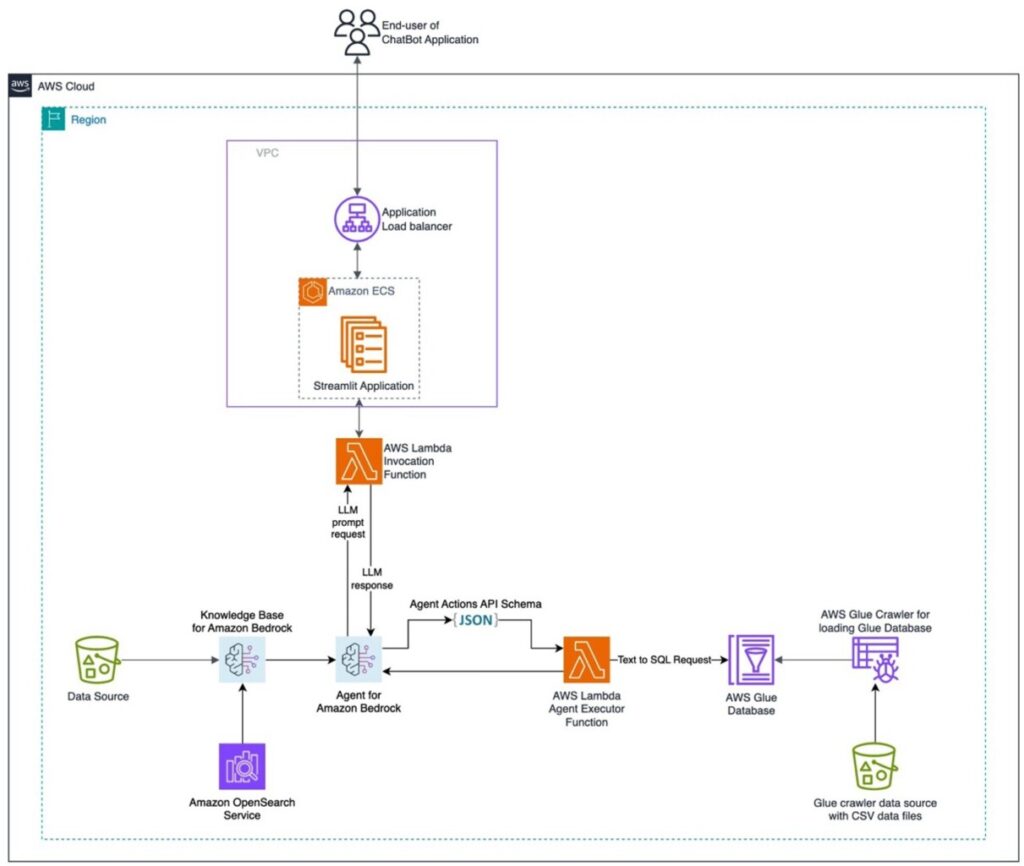

The following diagram illustrates the solution architecture.

The diagram details a comprehensive AWS Cloud-based setup within a specific Region, using multiple AWS services. The primary interface for the chatbot is a Streamlit application hosted on an Amazon Elastic Container Service (Amazon ECS) cluster, with accessibility managed by an Application Load Balancer. Queries made through this interface activate the AWS Lambda Invocation function, which interfaces with an agent. This agent responds to user inquiries by either consulting the knowledge base or by invoking an Agent Executor Lambda function. This function invokes a set of actions associated with the agent, following a predefined API schema. The knowledge base uses a serverless Amazon OpenSearch Service index as its vector database foundation. Additionally, the Agent Executor function generates SQL queries that are run against the AWS Glue database through Athena.
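To make the request path more concrete, the following is a minimal sketch of how an invocation function might call the agent and assemble the streamed answer. It assumes the boto3 bedrock-agent-runtime client and its invoke_agent operation. The helper name ask_agent, the placeholder IDs, and the FakeAgentClient stand-in (used so the sketch runs without AWS credentials) are illustrative and are not taken from the solution's repository.

```python
import uuid


def ask_agent(client, agent_id, agent_alias_id, question, session_id=None):
    """Send one question to a Bedrock agent and return (answer, traces)."""
    response = client.invoke_agent(
        agentId=agent_id,
        agentAliasId=agent_alias_id,
        sessionId=session_id or str(uuid.uuid4()),
        inputText=question,
        enableTrace=True,
    )
    answer_parts, traces = [], []
    # The response body is an event stream. Each event carries either a
    # chunk of the answer or a trace of one orchestration step.
    for event in response["completion"]:
        if "chunk" in event:
            answer_parts.append(event["chunk"]["bytes"].decode("utf-8"))
        elif "trace" in event:
            traces.append(event["trace"])
    return "".join(answer_parts), traces


class FakeAgentClient:
    """Offline stand-in (hypothetical) that mimics the event stream shape."""

    def invoke_agent(self, **kwargs):
        self.last_request = kwargs
        return {
            "completion": [
                {"trace": {"trace": {"orchestrationTrace": {}}}},
                {"chunk": {"bytes": b"The inf1.xlarge costs "}},
                {"chunk": {"bytes": b"$0.228 per hour."}},
            ]
        }


# With real credentials you would pass boto3.client("bedrock-agent-runtime").
fake = FakeAgentClient()
answer, traces = ask_agent(fake, "AGENT_ID", "ALIAS_ID", "How much is inf1.xlarge?")
print(answer)
```

Passing the client in as an argument keeps the function easy to test and lets the same code run inside a Lambda function or a local script.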

Deploy the solution with the AWS CDK

The AWS Cloud Development Kit (AWS CDK) is an open source software development framework for defining cloud infrastructure in code and provisioning it through AWS CloudFormation. Our AWS CDK stack deploys resources from the following AWS services:

AWS Key Management Service (AWS KMS)

Application Load Balancer

Amazon Bedrock

Amazon Elastic Container Registry (Amazon ECR)

Amazon Elastic Container Service (Amazon ECS)

AWS Fargate

AWS Glue Data Catalog (for the AWS Glue database component)

AWS Identity and Access Management (IAM)

AWS Lambda

Amazon OpenSearch Service

Amazon Simple Storage Service (Amazon S3)

Amazon Virtual Private Cloud (Amazon VPC)

Refer to the instructions provided in the README.md file for deploying the solution using the AWS CDK. After you have completed all the necessary setup, you can deploy the stack with the AWS CDK CLI deploy command (cdk deploy), run as described in the README.md file.

Amazon Bedrock features

Amazon Bedrock is a fully managed service that offers a choice of high-performing FMs from leading AI companies like AI21 Labs, Anthropic, Cohere, Meta, Mistral AI, Stability AI, and Amazon through a single API, along with a broad set of capabilities you need to build generative AI applications with security, privacy, and responsible AI.

In this section, we discuss some of the key Amazon Bedrock features and resources that we use in our solution.

Agents for Amazon Bedrock

Agents for Amazon Bedrock enables you to build and configure autonomous agents in your application. An agent helps your end-users complete actions based on organization data and user input. Agents orchestrate interactions between FMs, data sources, software applications, and user conversations.

For example, you can ask a question such as, “Which EC2 instance is recommended for machine learning model inference and how much does it cost?” The agent can help break down complex tasks into smaller tasks:

“To answer this question, I will:

1. Call the GET::ChatBotBedrockAgentActionGroup::getUc2Response function to get the latest EC2 instance type and pricing details for machine learning inference.

2. Call the GET::x_amz_knowledgebase_WSGY5QH4ZD::Search function to get recommendations on which EC2 instance type is best for machine learning inference.

3. Return the recommended EC2 instance type and the pricing details to the user.

I have checked that I have access to the GET::ChatBotBedrockAgentActionGroup::getUc2Response and GET::x_amz_knowledgebase_WSGY5QH4ZD::Search functions.”

Then the agent will gather information through these three steps and integrate them into a final answer:

“The AWS Inferentia and Trainium instances are well-suited for machine learning model inference workloads. The Inf1 and Trn1 instances deliver high performance inference using dedicated ML chips like Inferentia and Trainium at lower costs compared to GPU-based instances. According to the latest information, AWS Inferentia and Trainium instance prices range from $0.228 per hour for an inf1.xlarge instance to $24.78 per hour for a trn1n.32xlarge instance. The cheapest Inferentia instance is the inf1.xlarge at $0.228 per hour, while the most expensive is the inf2.48xlarge at $12.9813 per hour. The cheapest Trainium instance is the trn1.2xlarge at $1.3438 per hour, while the most expensive is the trn1n.32xlarge at $24.78 per hour.”

Before you create your agent, you should set up action groups and knowledge bases that you want to add to your agent:

Action groups define the tasks that you want your agent to help customers carry out.

Knowledge bases provide a repository of information that the agent can query to answer customer queries and improve its generated responses. For more information, see Knowledge bases for Amazon Bedrock.

After you complete the AWS CDK deployment, you can verify your agent along with its corresponding knowledge base and action group by completing the following steps:

On the Amazon Bedrock console, choose Agents in the navigation pane.

Choose the name of your agent.

Choose the working draft.

You can review the action group and knowledge base in the working draft.

Knowledge Bases for Amazon Bedrock

Knowledge Bases for Amazon Bedrock is a fully managed capability that helps you implement the entire RAG workflow (managed RAG), from ingestion to retrieval and prompt augmentation, without having to build custom integrations to data sources and manage data flows. For this post, we created a knowledge base for Amazon Bedrock using the AWS CDK; it’s based on the collection of EC2 instance documentation stored in an S3 bucket.
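The agent performs retrieval and prompt augmentation for you, but it can help to see the two steps spelled out. The following sketch assumes the boto3 bedrock-agent-runtime client and its retrieve operation. The function name, the prompt wording, the knowledge base ID, and the sample passage and S3 URI returned by the fake client are all made up for illustration.

```python
def build_grounded_prompt(client, knowledge_base_id, question, top_k=3):
    """Retrieve passages from a knowledge base and wrap them in a prompt."""
    response = client.retrieve(
        knowledgeBaseId=knowledge_base_id,
        retrievalQuery={"text": question},
        retrievalConfiguration={
            "vectorSearchConfiguration": {"numberOfResults": top_k}
        },
    )
    passages, sources = [], []
    for result in response["retrievalResults"]:
        passages.append(result["content"]["text"])
        # Keep each source document once so the UI can link to it.
        uri = result.get("location", {}).get("s3Location", {}).get("uri")
        if uri and uri not in sources:
            sources.append(uri)
    context = "\n\n".join(passages)
    prompt = (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say you don't know.\n\n"
        f"<context>\n{context}\n</context>\n\n"
        f"Question: {question}"
    )
    return prompt, sources


class FakeKnowledgeBaseClient:
    """Offline stand-in (hypothetical) returning one invented passage."""

    def retrieve(self, **kwargs):
        return {
            "retrievalResults": [
                {
                    "content": {"text": "Inf1 instances target ML inference."},
                    "location": {
                        "type": "S3",
                        "s3Location": {"uri": "s3://example-bucket/ec2-types.pdf"},
                    },
                    "score": 0.71,
                }
            ]
        }


prompt, sources = build_grounded_prompt(
    FakeKnowledgeBaseClient(), "KB_ID", "Which instance suits inference?"
)
print(prompt)
print(sources)
```

Instructing the model to answer only from the supplied context is the prompt-engineering step that keeps responses tied to the source documentation.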

Action groups

An action group consists of the following components that you set up:

An OpenAPI schema that defines the APIs that your action group should call. Your agent uses the API schema to determine the fields it needs to elicit from the customer to populate for the API request.

A Lambda function that defines the business logic for the action that your agent will carry out.
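As a rough illustration of the Lambda side, the handler below reads the fields that an agent sends for an action group defined with an API schema and returns a response in the shape the agent expects. The API path /query-pricing, the parameter name question, and the placeholder business logic are assumptions for this sketch and are not the solution's actual schema or code.

```python
import json


def lambda_handler(event, context):
    """Handle one action group call from the agent."""
    # The agent passes named parameters as a list of {name, type, value}.
    params = {p["name"]: p["value"] for p in event.get("parameters", [])}
    question = params.get("question", event.get("inputText", ""))

    # Placeholder business logic: a real function would query a data
    # source here and put the rows into the result.
    result = {"question": question, "rows": []}

    return {
        "messageVersion": "1.0",
        "response": {
            "actionGroup": event["actionGroup"],
            "apiPath": event["apiPath"],
            "httpMethod": event["httpMethod"],
            "httpStatusCode": 200,
            "responseBody": {
                "application/json": {"body": json.dumps(result)}
            },
        },
    }


# Hypothetical event, trimmed to the fields the handler reads.
sample_event = {
    "messageVersion": "1.0",
    "actionGroup": "ChatBotBedrockAgentActionGroup",
    "apiPath": "/query-pricing",
    "httpMethod": "GET",
    "inputText": "How much does inf1.xlarge cost?",
    "parameters": [
        {"name": "question", "type": "string", "value": "How much does inf1.xlarge cost?"}
    ],
}

result = lambda_handler(sample_event, None)
body = json.loads(result["response"]["responseBody"]["application/json"]["body"])
print(body)
```

Echoing actionGroup, apiPath, and httpMethod back in the response lets the agent match the result to the call it made.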

For each action group in an agent, you define a Lambda function that implements the business logic for the action group and controls how the API response is returned. You use the variables from the input event to define your functions and return a response to the agent. In our use case, the Lambda function uses Amazon Bedrock FMs to convert text into SQL queries with specified constraints, then runs those queries against Athena tables to answer data-related questions.
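The post does not list the exact constraints applied to generated SQL, so the following sketch shows one plausible set: a single read-only SELECT against one known table, with a row limit. It then runs the query through the boto3 Athena client operations start_query_execution, get_query_execution, and get_query_results. The table name ec2_pricing, its columns, and the FakeAthena stand-in are hypothetical.

```python
import re
import time

FORBIDDEN = re.compile(
    r"\b(insert|update|delete|drop|alter|create|truncate|grant)\b", re.I
)


def check_sql(sql, allowed_table, max_rows=50):
    """Return a row-limited SELECT on allowed_table, or raise ValueError."""
    statement = sql.strip().rstrip(";").strip()
    if ";" in statement:
        raise ValueError("only a single statement is allowed")
    if not statement.lower().startswith("select"):
        raise ValueError("only SELECT statements are allowed")
    if FORBIDDEN.search(statement):
        raise ValueError("statement contains a forbidden keyword")
    if not re.search(rf"\bfrom\s+{re.escape(allowed_table)}\b", statement, re.I):
        raise ValueError(f"query must read from {allowed_table}")
    if not re.search(r"\blimit\s+\d+\s*$", statement, re.I):
        statement += f" LIMIT {max_rows}"
    return statement


def run_athena_query(athena, sql, database, output_location, poll_seconds=1.0):
    """Run a query through Athena and return the rows as dictionaries."""
    query_id = athena.start_query_execution(
        QueryString=sql,
        QueryExecutionContext={"Database": database},
        ResultConfiguration={"OutputLocation": output_location},
    )["QueryExecutionId"]
    while True:
        status = athena.get_query_execution(QueryExecutionId=query_id)
        state = status["QueryExecution"]["Status"]["State"]
        if state in ("SUCCEEDED", "FAILED", "CANCELLED"):
            break
        time.sleep(poll_seconds)
    if state != "SUCCEEDED":
        raise RuntimeError(f"Athena query ended in state {state}")
    rows = athena.get_query_results(QueryExecutionId=query_id)["ResultSet"]["Rows"]
    table = [[cell.get("VarCharValue") for cell in row["Data"]] for row in rows]
    header, data = table[0], table[1:]  # the first row holds column names
    return [dict(zip(header, values)) for values in data]


class FakeAthena:
    """Offline stand-in (hypothetical) that returns one invented row."""

    def start_query_execution(self, **kwargs):
        self.sql = kwargs["QueryString"]
        return {"QueryExecutionId": "q-1"}

    def get_query_execution(self, QueryExecutionId):
        return {"QueryExecution": {"Status": {"State": "SUCCEEDED"}}}

    def get_query_results(self, QueryExecutionId):
        cells = lambda *values: {"Data": [{"VarCharValue": v} for v in values]}
        return {"ResultSet": {"Rows": [
            cells("instance_type", "price_per_hour"),
            cells("inf1.xlarge", "0.228"),
        ]}}


generated = "SELECT instance_type, price_per_hour FROM ec2_pricing WHERE instance_type = 'inf1.xlarge';"
safe_sql = check_sql(generated, "ec2_pricing")
fake_athena = FakeAthena()
rows = run_athena_query(fake_athena, safe_sql, "example_db", "s3://example-bucket/results/")
print(safe_sql)
print(rows)
```

The keyword check is deliberately blunt and would also reject a legitimate column named update. A production check would more likely rely on a SQL parser and on IAM permissions that allow only read access.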

The following screenshot shows an Athena table and sample query.

Sample questions and answers

After the AWS CDK deployment is complete, you can test the agent either on the Amazon Bedrock console or through the Streamlit app URL listed in the outputs of the chatbot stack on the AWS CloudFormation console, as shown in the following screenshot.

In the UI of the chatbot, you can view the source of the response. If the response comes from the knowledge base, you will see a link to the related documentation. If the response is sourced from the Amazon EC2 pricing table, you will see the SQL query that was generated from your question and run against the relevant table. The chatbot is also capable of answering questions that require information from both data sources. The following screenshots show some sample questions and answers with different data sources.

Each response from an Amazon Bedrock agent is accompanied by a trace that details the steps being orchestrated by the agent. The trace helps you follow the agent’s reasoning process that leads it to the response it gives at that point in the conversation.

When you show the trace in the test window in the console, a window appears showing a trace for each step in the reasoning process. You can view each step of the trace in real time as your agent performs orchestration. Each step can be one of the following traces:

PreProcessingTrace – Traces the input and output of the preprocessing step, in which the agent contextualizes and categorizes user input and determines if it is valid

OrchestrationTrace – Traces the input and output of the orchestration step, in which the agent interprets the input, invokes APIs and queries knowledge bases, and returns output to either continue orchestration or respond to the user

PostProcessingTrace – Traces the input and output of the postprocessing step, in which the agent handles the final output of the orchestration and determines how to return the response to the user

FailureTrace – Traces the reason that a step failed
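If you collect traces programmatically instead of reading them in the console, each trace event returned by the agent runtime carries one of these trace types under a camelCase key. The sketch below labels a list of trace events by type. The function name and the sample events are illustrative, and the events are trimmed to the fields the function reads.

```python
from collections import Counter

# Keys as they appear in the trace events, mapped to the names used above.
TRACE_KEYS = {
    "preProcessingTrace": "PreProcessingTrace",
    "orchestrationTrace": "OrchestrationTrace",
    "postProcessingTrace": "PostProcessingTrace",
    "failureTrace": "FailureTrace",
}


def summarize_traces(trace_events):
    """Return the trace type of each event, in the order received."""
    steps = []
    for event in trace_events:
        body = event.get("trace", {})
        for key, label in TRACE_KEYS.items():
            if key in body:
                steps.append(label)
    return steps


# Hypothetical trace events, reduced to the part that identifies the type.
sample_traces = [
    {"trace": {"preProcessingTrace": {}}},
    {"trace": {"orchestrationTrace": {}}},
    {"trace": {"orchestrationTrace": {}}},
    {"trace": {"postProcessingTrace": {}}},
]

steps = summarize_traces(sample_traces)
counts = Counter(steps)
print(steps)
print(counts["OrchestrationTrace"])
```

A question that needs both the knowledge base and the pricing table typically produces several orchestration steps, so counting them gives a quick view of how much work the agent did.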

Customizations for your own dataset

To integrate your custom data into the solution, follow the structured guidelines in this section and tailor them to your requirements. These steps are designed to provide a seamless and efficient integration process, enabling you to deploy the solution effectively with your own data.

Integrate knowledge base data

To prepare your data for integration, locate the assets/knowledgebase_data_source/ directory and place your dataset within this folder.

To make configuration adjustments, access the cdk.json file. Navigate to the context/config/paths/knowledgebase_file_name field and update it accordingly. Furthermore, modify the context/config/bedrock_instructions/knowledgebase_instruction field in the cdk.json file to accurately reflect the nuances and context of your new dataset.

Integrate structured data

To organize your structured data, within the assets/data_query_data_source/ directory, create a subdirectory (for example, tabular_data). Place your structured dataset (acceptable formats include CSV, JSON, ORC, and Parquet) in this newly created subfolder.

For configuration and code updates, make the following changes:

Update the cdk.json file’s context/config/paths/athena_table_data_prefix field to align with the new data path

Revise code/action-lambda/dynamic_examples.csv by incorporating new text-to-SQL examples that correspond with your dataset

Revise code/action-lambda/prompt_templates.py to mirror the attributes of your new tabular data

Modify the cdk.json file’s context/config/bedrock_instructions/action_group_description field to describe the purpose and functionality of the action Lambda function tailored for your dataset

Reflect the new functionalities of your action Lambda function in the assets/agent_api_schema/artifacts_schema.json file
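To show why the example file and the prompt templates need to match your data, here is a sketch of how question and SQL pairs might be folded into a few-shot prompt. The layout of dynamic_examples.csv is not described in this post, so the question and sql columns, the table name ec2_pricing, its column names, and the prompt wording are all assumptions.

```python
import csv
import io


def load_examples(csv_text):
    """Parse CSV text with assumed 'question' and 'sql' columns."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def build_sql_prompt(question, examples, table_name, columns):
    """Build a few-shot text-to-SQL prompt for a single table."""
    shots = "\n\n".join(
        f"Question: {ex['question']}\nSQL: {ex['sql']}" for ex in examples
    )
    return (
        f"Write one Athena SQL SELECT statement for the table {table_name} "
        f"with columns: {', '.join(columns)}.\n"
        "Use only these columns and return only the SQL.\n\n"
        f"{shots}\n\n"
        f"Question: {question}\nSQL:"
    )


# Hypothetical file content; a real file would hold examples for your table.
SAMPLE_CSV = """question,sql
What is the cheapest instance?,SELECT instance_type FROM ec2_pricing ORDER BY price_per_hour LIMIT 1
"""

examples = load_examples(SAMPLE_CSV)
sql_prompt = build_sql_prompt(
    "How much does inf1.xlarge cost?",
    examples,
    "ec2_pricing",
    ["instance_type", "price_per_hour"],
)
print(sql_prompt)
```

If the examples still reference the old table or columns, the model tends to copy them, which is why both files should be updated together.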

General updates

In the cdk.json file, under the context/config/bedrock_instructions/agent_instruction section, provide a comprehensive description of the intended functionality and design purpose for your agents, taking into account the newly integrated data.
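Before redeploying, it can be useful to confirm that every field mentioned in this section is present and non-empty. The following sketch walks the slash-separated paths named above through a parsed cdk.json document. The helper names and the sample values are illustrative, and in practice you would load the document with json.load from your own cdk.json file.

```python
import json

# Field paths taken from the customization steps in this section.
REQUIRED_FIELDS = [
    "context/config/paths/knowledgebase_file_name",
    "context/config/paths/athena_table_data_prefix",
    "context/config/bedrock_instructions/knowledgebase_instruction",
    "context/config/bedrock_instructions/action_group_description",
    "context/config/bedrock_instructions/agent_instruction",
]


def lookup(document, path):
    """Follow a slash-separated path through nested dicts, or return None."""
    node = document
    for key in path.split("/"):
        if not isinstance(node, dict) or key not in node:
            return None
        node = node[key]
    return node


def missing_fields(document):
    """List the required paths that are absent or empty."""
    return [path for path in REQUIRED_FIELDS if not lookup(document, path)]


# Hypothetical excerpt; the values are placeholders, not the repo's defaults.
sample_config = json.loads("""
{
  "context": {
    "config": {
      "paths": {
        "knowledgebase_file_name": "my_docs.pdf",
        "athena_table_data_prefix": "data_query_data_source/tabular_data"
      },
      "bedrock_instructions": {
        "knowledgebase_instruction": "Use this for product documentation.",
        "action_group_description": "",
        "agent_instruction": "You answer questions about my dataset."
      }
    }
  }
}
""")

missing = missing_fields(sample_config)
print(missing)
```

In this sample, the empty action_group_description is reported as missing, which is the kind of oversight that is easy to make when swapping in a new dataset.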

Clean up

To delete your resources when you’re finished using the solution and to avoid future costs, you can either delete the stack on the AWS CloudFormation console or run the AWS CDK CLI destroy command (cdk destroy) in the terminal.

Conclusion

In this post, we illustrated the process of using the AWS CDK to establish and oversee a set of AWS resources designed to construct a chatbot on Amazon Bedrock. If you’re interested in connecting to your data source and developing your own chatbot, you can begin exploring with Amazon Bedrock.

About the Authors

Jundong Qiao is a Machine Learning Engineer at AWS Professional Services, where he specializes in implementing and enhancing AI/ML capabilities across various sectors. His expertise encompasses building next-generation AI solutions, including chatbots and predictive models that drive efficiency and innovation. Prior to AWS, Jundong was an Engineering Manager in Machine Learning at ACV Auctions, where he led initiatives that leveraged AI/ML to address intricate issues within the automotive industry.

Kara Yang is a data scientist at AWS Professional Services, adept at leveraging cloud computing, machine learning, and Generative AI to tackle diverse industry challenges. Passionately dedicated to innovation, she consistently pursues new technologies, refines solutions, and delights in sharing her expertise through writing and presentations.

Kiowa Jackson is a Machine Learning Engineer at AWS ProServe, dedicated to helping customers leverage Generative AI for creating and deploying novel applications. He is passionate about placing the benefits of GenAI in the hands of users through real-world use cases.

Praveen Kumar Jeyarajan is a Principal DevOps Consultant at AWS, supporting Enterprise customers and their journey to the cloud. He has 13+ years of DevOps experience and is skilled in solving myriad technical challenges using the latest technologies. He holds a Master’s degree in Software Engineering. Outside of work, he enjoys watching movies and playing tennis.

Shuai Cao is a Senior Data Science Manager focused on Generative AI at Amazon Web Services. He leads teams of data scientists, machine learning engineers, and application architects to deliver AI/ML solutions for customers. Outside of work, he enjoys composing and arranging music.
